Your curated collection of saved posts and media

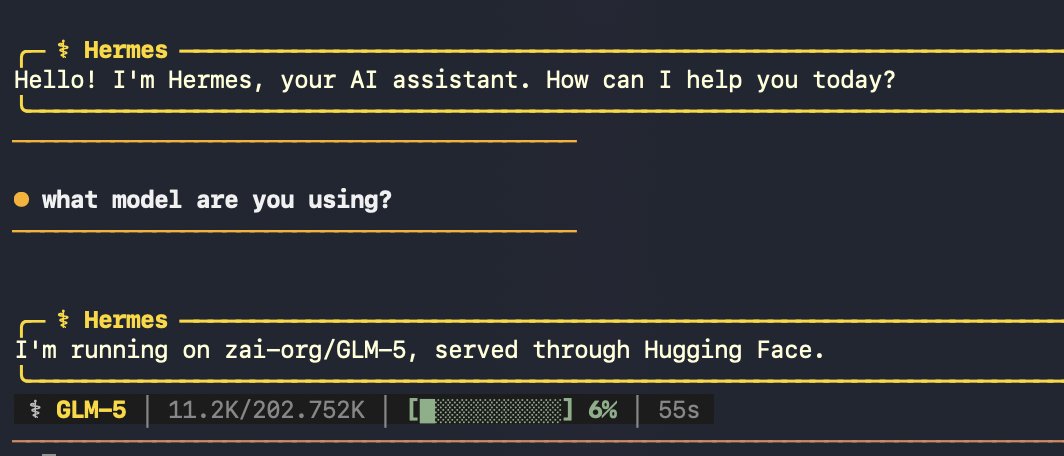

Been really cool to see the traction of @NousResearch Hermes Agent, the open-source agent that grows with you! Hermes Agent is open source, remembers what it learns, and gets more capable over time, with a multi-level memory system and persistent dedicated machine access. Starting today, you can use a bunch of @huggingface open-source models thanks to our inference provider partners. Let's go open agents!

getting circumcised https://t.co/WWtGUbOOh4

Kicking off a series of events in iconic, but sometimes overlooked places in SF. If this is your vibe and you’re free Sunday, DM me. https://t.co/7XRnw9n0A4

yes!!! https://t.co/Owzp6RtORd

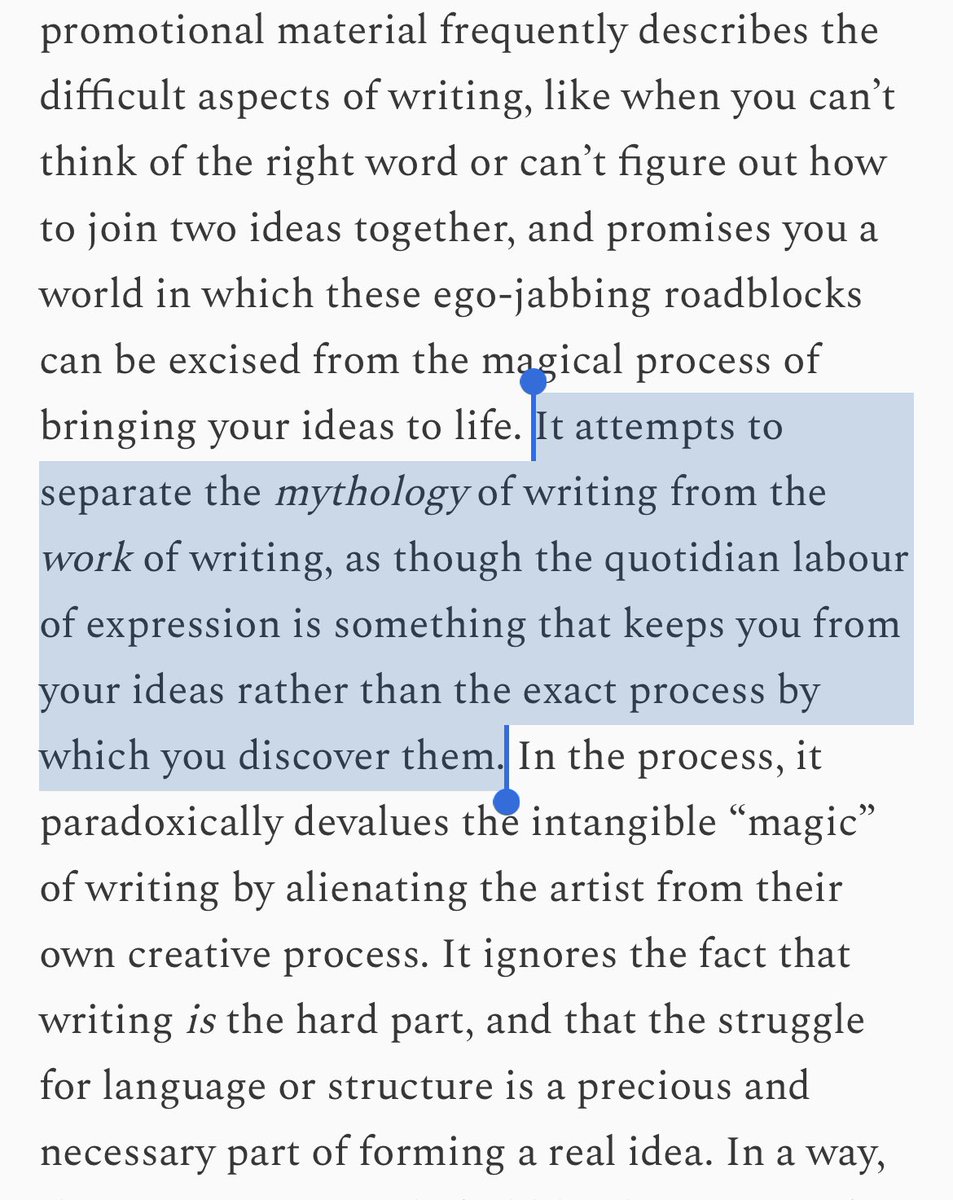

sorry for harping on this but the problem of “knowing what you want to say but not how to say it” often means you haven’t yet figured out what you want to say. if you turn to an LLM to help organize loose thoughts into prose, you’re outsourcing your cognition to a machine

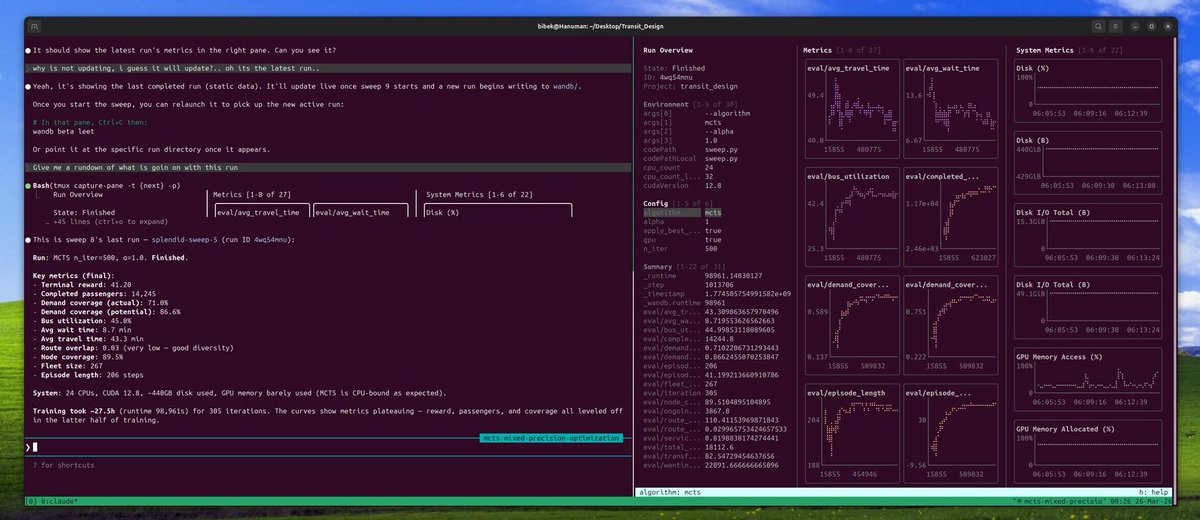

Tmux + wandb Leet = Claude can see what you see, exactly the way you see it. credit: @bibek_poudel_ https://t.co/egJHuDVX8d

Lettermatic ✕ GitHub Next: the inside story of Monaspace, a truly groundbreaking advancement for how we display code. We knew that Lettermatic would go deep, but we were still amazed by the level of knowledge and craft that they brought to the humble fixed-width typefaces we stare at every day. See their case study below, and get the fonts at https://t.co/LskXSyjplF

New work for GitHub: Monaspace. 🔤 A superfamily of 5 open-source typefaces for code. https://t.co/r3P7rvQn40

SCOTUS's Cox ruling is a huge win for developers and the open internet: providing internet service does not create liability for user copyright infringement. Liability requires conscious, culpable conduct, not mere awareness of potential infringement. https://t.co/4yBmoOx6Cf

We vibe coded a fully functional website in less than 10 minutes on @GoogleAIStudio. Watch us break down the process here, then start building your own apps, software, and tools⤵️🎬 https://t.co/mA9bEmkA9o

NEW AI report from Google. Every prior intelligence explosion in human history was social, not individual. These authors make the case that the AI "singularity" framed as a single superintelligent mind bootstrapping to godlike intelligence is fundamentally wrong. This is directly relevant to anyone designing multi-agent systems. They observe that frontier reasoning models like DeepSeek-R1 spontaneously develop internal "societies of thought," multi-agent debates among cognitive perspectives, through RL alone. The path forward is human-AI configurations and agent institutions, not bigger monolithic oracles. This reframes AI scaling strategy from "build bigger models" to "compose richer social systems." It argues that governance of AI agents should follow institutional design principles, such as checks and balances and role protocols, rather than individual alignment. Paper: https://t.co/bfwrnbkY2y Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

Gemini 3.1 Flash Live just dropped and it's available with LiveKit today. This is the first Gemini 3 native audio model on the Live API. Better instruction following, improved tool calling, reduced speaker drift, and support for 70+ languages. Audio in, audio out. No text conversion in between.

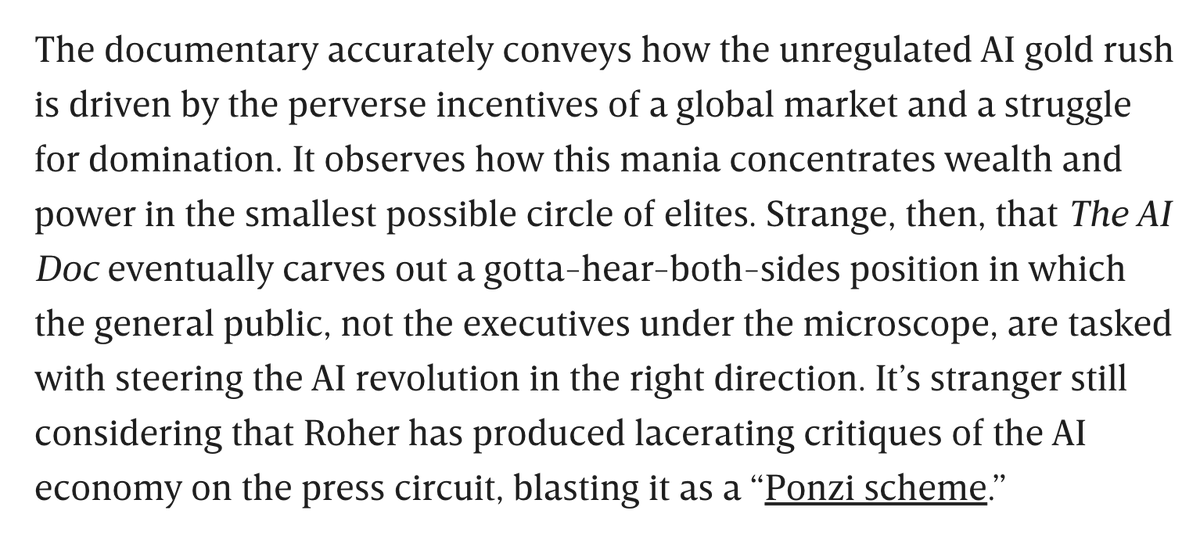

Contrary to his "middle ground" approach in the movie, filmmaker Daniel Roher seems to be less forgiving of tech oligarchs in person: https://t.co/O8pLQw4o7h

https://t.co/MeuQFSUbCx

One way to see the advancement of AI is to see how much further you can get with new models on the same hardware. Here is "an otter using a laptop on an airplane" generated on my home computer using the open weights Wan 2.1, first try. We have come pretty far in 18 months. https://t.co/c4iz9UcmTB

On one hand, these are obviously much worse "otter using wifi on an airplane" videos than any state-of-the-art AI text-to-video generation; they look like something from 2022. On the other, it was done entirely offline on my computer using open AI video generation tools, a new capability. …

“We have basically given up all discipline and agency for a sort of addiction, where your highest goal is to produce the largest amount of code in the shortest amount of time. Consequences be damned.” https://t.co/7FH8XJ5GM7

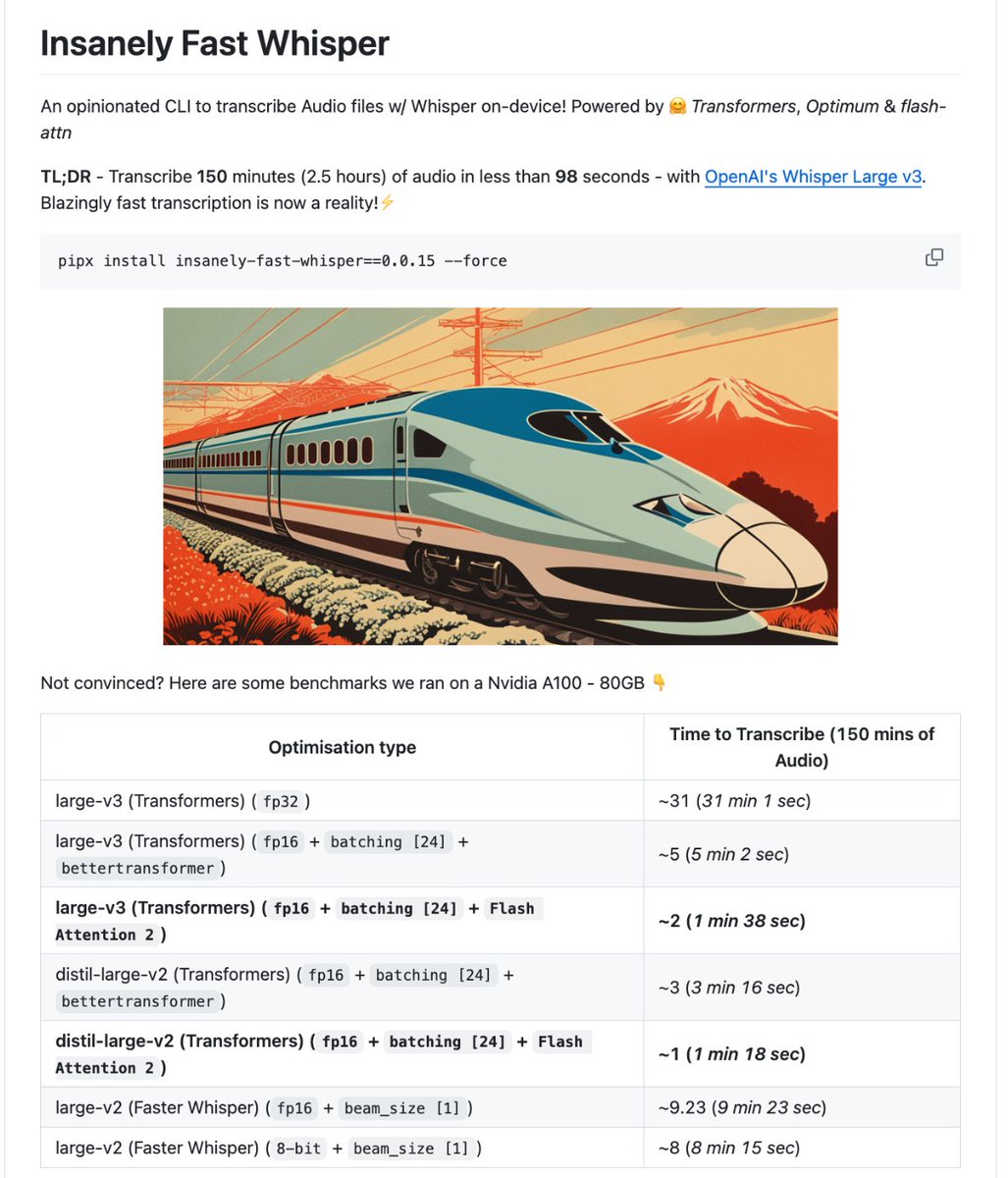

🚨 BREAKING: Someone just made OpenAI's Whisper transcribe 2.5 hours of audio in 98 seconds. 100% OPEN SOURCE. It runs entirely on your GPU. No API keys. No cloud. No subscription.

It's called Insanely Fast Whisper. You drop in an audio file, run one command, and come back to a clean, timestamped transcript. Not a rough draft. Not a partial output. The entire thing. Done.

Not a wrapper. Not a web app. A CLI that turns your local machine into a transcription engine that makes paid services look embarrassing.

Here's what it does on its own:

* Transcribes 150 minutes of audio in 98 seconds using Flash Attention 2: same model, 19x faster, zero quality loss
* Auto-detects language across dozens of languages, or translates directly into English with a single flag
* Has speaker diarization built in, so it knows who said what, not just what was said
* Produces word-level and chunk-level timestamps so you can jump to any exact moment in any recording
* Runs on NVIDIA GPUs and Apple Silicon Macs with zero code changes between them
* Works on the Google Colab free tier if you don't own a GPU at all

Here's how fast it actually is:

* Standard Whisper large-v3 out of the box: 31 minutes to process 2.5 hours of audio.
* The same exact model with Flash Attention 2 and batching: 1 minute 38 seconds.

Same weights. Same accuracy. One flag difference.

Here's the wildest part: this never started as a product. It was a benchmark demo to show what Hugging Face Transformers could do. Then the community started using it for real work: podcast transcription, legal recordings, research interviews, meeting notes at scale. The team kept adding what people actually needed until a benchmark became a full CLI that nobody planned to build.

8.8K GitHub stars. 100% Open Source.
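The "19x" figure follows directly from the two timings quoted in the post. Here is a quick check in Python that uses only the post's numbers. The "real-time factor" (seconds of audio per second of processing) is a derived metric added for illustration; the post does not report it.

```python
# Sanity-check the speed figures quoted in the post.
# All three inputs come from the post; the real-time factor is a derived
# metric added here for illustration.

AUDIO_SECONDS = 150 * 60           # 2.5 hours of audio = 9000 s
BASELINE_SECONDS = 31 * 60         # stock Whisper large-v3: 31 min = 1860 s
OPTIMIZED_SECONDS = 1 * 60 + 38    # Flash Attention 2 + batching: 98 s


def speedup(baseline_s: float, optimized_s: float) -> float:
    """How many times faster the optimized run is than the baseline."""
    return baseline_s / optimized_s


def real_time_factor(audio_s: float, processing_s: float) -> float:
    """Seconds of audio transcribed per second of wall-clock processing."""
    return audio_s / processing_s


if __name__ == "__main__":
    print(f"speedup:       {speedup(BASELINE_SECONDS, OPTIMIZED_SECONDS):.1f}x")
    print(f"baseline RTF:  {real_time_factor(AUDIO_SECONDS, BASELINE_SECONDS):.1f}x")
    print(f"optimized RTF: {real_time_factor(AUDIO_SECONDS, OPTIMIZED_SECONDS):.1f}x")
```

Running it prints a speedup of 19.0x, consistent with the post's "19x". It also shows the optimized run getting through roughly 92 seconds of audio per second of compute, compared with about 5 for the baseline.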

'The AI Doc: Or How I Became an Apocaloptimist' is a welcome and comprehensive overview of this tech for those who haven't followed the industry. But it squanders access to CEOs like Sam Altman by avoiding hard questions on accountability. https://t.co/hO3XobWE10

Story: https://t.co/TY2UXml0l5

Our Co-founder & President Yeyi Yun is speaking at #HumanX2026 In conversation with @EricNewcomer, she'll share how MiniMax builds general intelligence at scale - across text, video, speech & audio. 📅 April 7 · 3:40 PM 📍 Moscone Center, SF See you there 👋 https://t.co/1h2AQx3Tku

The first steel beams went up this week at our Michigan Stargate site with Oracle and Related Digital https://t.co/Hl0NBqwfnS

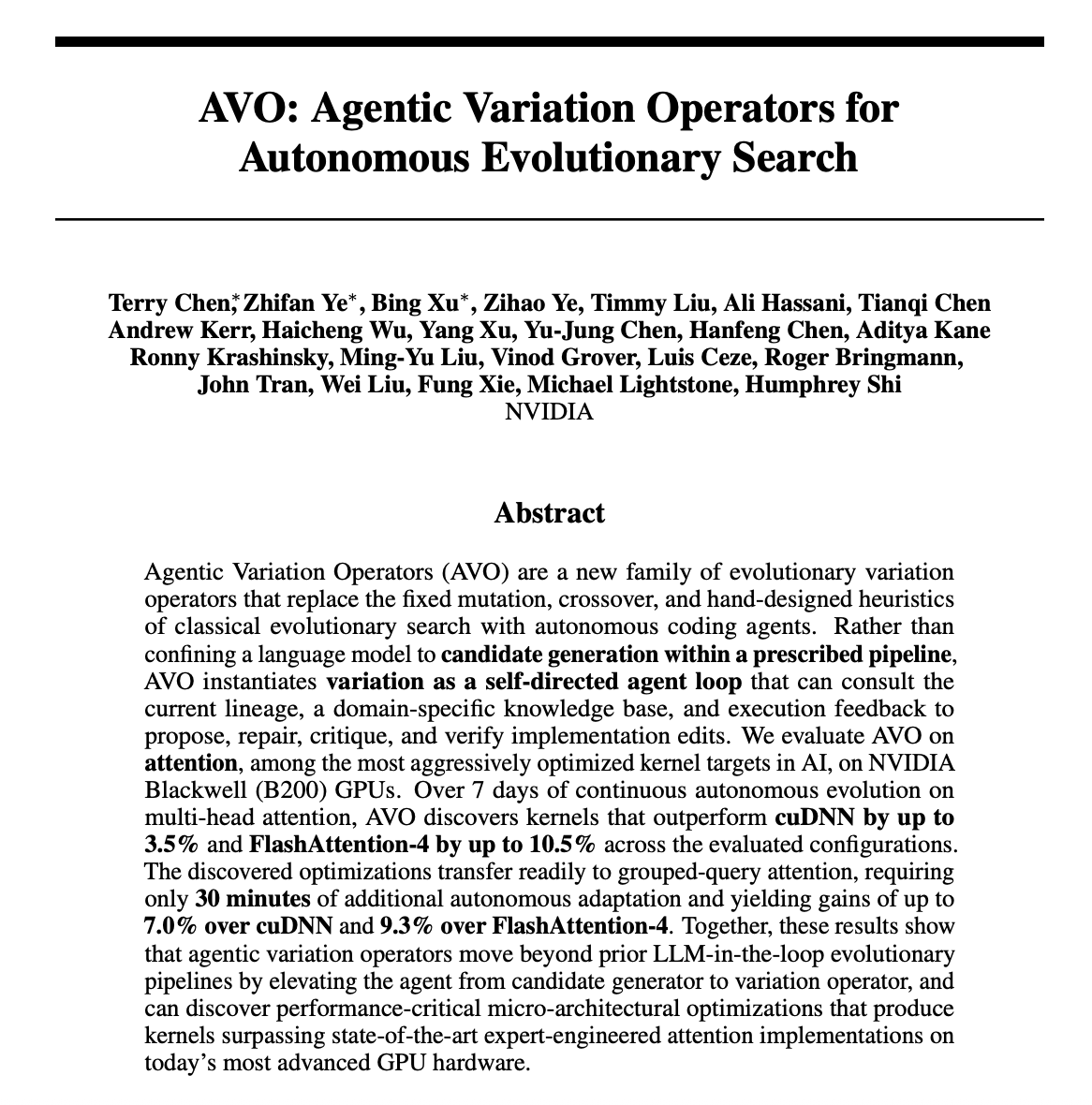

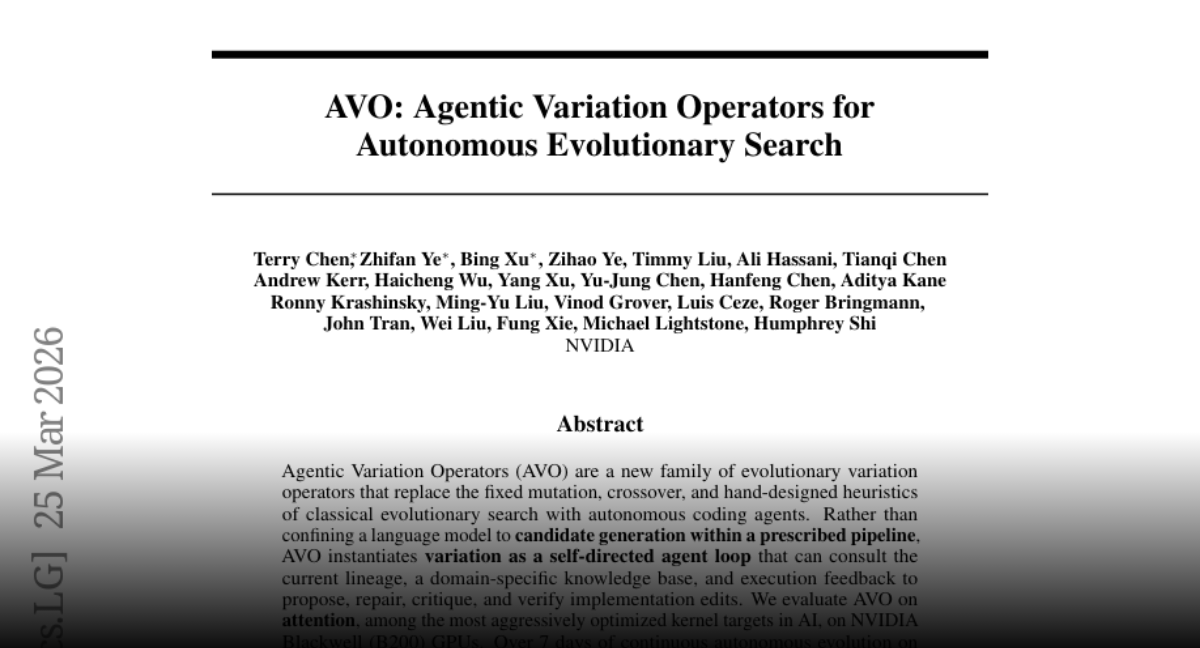

AVO: Agentic Variation Operators for Autonomous Evolutionary Search. Paper: https://t.co/UjWY0FQNaT https://t.co/OSNGdt1zM6

An 🇨🇳 AITO (问界) M8 hit and ran over a kid in Chongqing, even though NCA, a feature of Huawei's Qiankun ADS intelligent driving system, had been activated the whole time. AITO is a marque of 🇨🇳 Seres Group, a member of 🇨🇳 Huawei's Harmony Intelligent Mobility Alliance (HIMA, 鸿蒙智行). 🇨🇳 Huawei had apparently reported the person who first posted the video on social media to the police, who ordered the person to sign a written pledge promising not to disseminate any negative news about Huawei again (https://t.co/qsDq1qshtv)…

Sam Altman's new funding round for OpenAI has been branded a "Ponzi scheme," guaranteeing 17.5% returns based on ... well, nothing. https://t.co/fjHRIPxqRp Microsoft owns 27% of OpenAI's "for profit" arm.

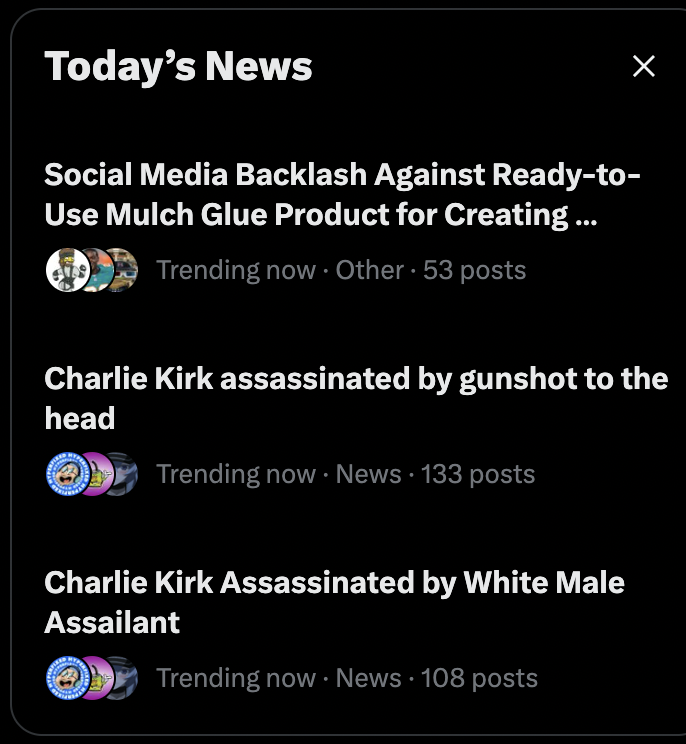

We've memed Charlie Kirk's death so much Grok thinks he's getting shot every day https://t.co/kZXj0z7EzF

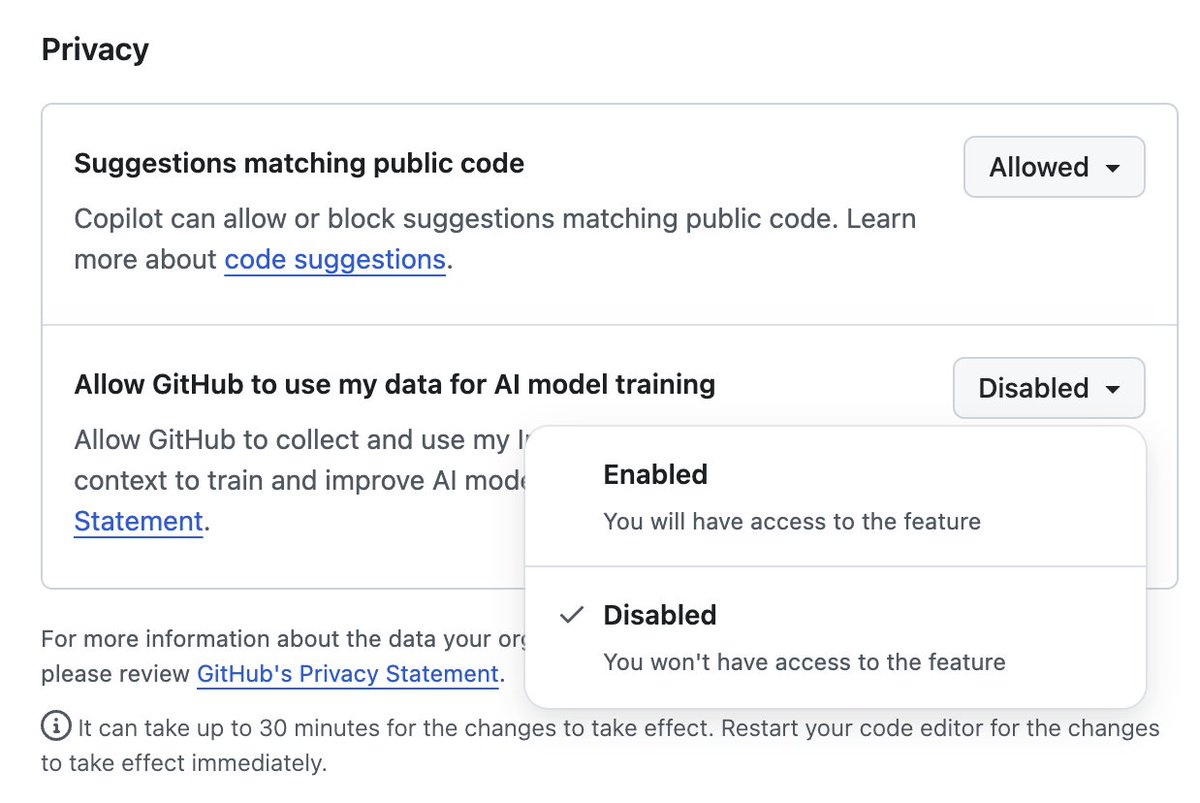

If you use GitHub (especially if you pay for it!!) consider doing this *immediately* Settings -> Privacy -> Disallow GitHub to train their models on your code. GitHub opted *everyone* into training. No matter if you pay for the service (like I do). WTH https://t.co/vcSkhM5yLV https://t.co/DWPaUTHbUU

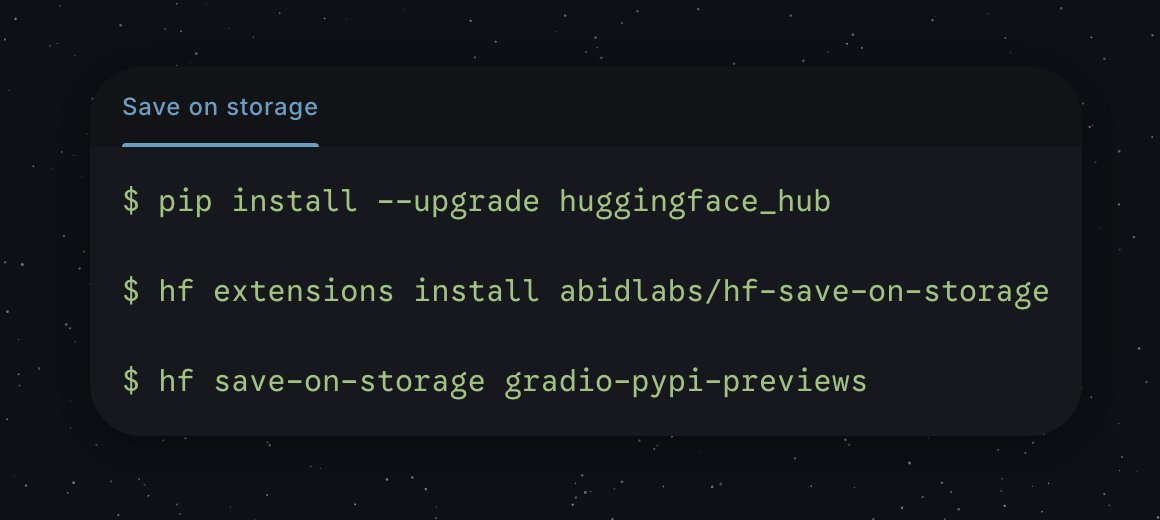

What if you could save 25-50% on your AWS S3 bill just by running a few CLI commands? AND no longer have to worry about paying egress fees or API fees at all!

Let me explain:

* I built an unofficial Hugging Face CLI extension that analyzes your AWS S3 bucket and shows how much you'd save by migrating its data to HF Storage Buckets.
* If you like what you see, it can migrate the data for you too :)

What are Hugging Face Buckets? A mutable, S3-like object storage you can browse on the Hub, script from Python, or manage with the hf CLI. And because they are backed by Xet, they are especially efficient for ML artifacts that share content across files.
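The last point is the technically interesting one. Roughly speaking, Xet splits files into content-defined chunks and stores each unique chunk only once. Files that share content, such as a base checkpoint and its fine-tune, therefore share storage. The Python sketch below is a toy version of that general technique. It assumes a gear-style rolling hash and SHA-256 chunk keys. `cdc_chunks`, `ChunkStore`, `demo`, and all parameters are hypothetical illustrations, not Xet's or the `hf` CLI's actual implementation.

```python
import hashlib
import random

# Toy content-defined chunking (CDC) with chunk-level deduplication.
# Illustrative only: the gear table, mask, and size limits are hypothetical
# choices, not Xet's actual parameters or algorithm.

MIN_CHUNK = 1024            # never cut a chunk shorter than this
MAX_CHUNK = 16 * 1024       # always cut once a chunk reaches this size
MASK = (1 << 12) - 1        # cut when the low 12 hash bits are zero (~4 KiB spacing)

_gear_rng = random.Random(0)
GEAR = [_gear_rng.getrandbits(64) for _ in range(256)]   # one random value per byte


def cdc_chunks(data: bytes) -> list[bytes]:
    """Split data where a gear rolling hash of recent bytes matches the mask."""
    chunks: list[bytes] = []
    start = 0
    h = 0
    for i, byte in enumerate(data):
        # Gear hash: shift and add. The low 12 bits depend only on the last
        # 12 bytes, so cut points follow local content, not absolute offsets.
        h = ((h << 1) + GEAR[byte]) & 0xFFFF_FFFF_FFFF_FFFF
        size = i - start + 1
        if (size >= MIN_CHUNK and (h & MASK) == 0) or size >= MAX_CHUNK:
            chunks.append(data[start : i + 1])
            start = i + 1
            h = 0
    if start < len(data):
        chunks.append(data[start:])
    return chunks


class ChunkStore:
    """Stores each distinct chunk once, keyed by its SHA-256 digest."""

    def __init__(self) -> None:
        self.chunks: dict[str, bytes] = {}

    def put(self, data: bytes) -> list[str]:
        """Store a file and return the chunk keys needed to rebuild it."""
        keys = []
        for chunk in cdc_chunks(data):
            key = hashlib.sha256(chunk).hexdigest()
            self.chunks.setdefault(key, chunk)   # duplicate chunks are not stored again
            keys.append(key)
        return keys

    def get(self, keys: list[str]) -> bytes:
        return b"".join(self.chunks[k] for k in keys)

    def stored_bytes(self) -> int:
        return sum(len(c) for c in self.chunks.values())


def demo() -> tuple[int, int]:
    """Two 'files' that differ only in a small edited region (like a fine-tune)."""
    rng = random.Random(42)
    base = rng.randbytes(200_000)
    variant = base[:100_000] + bytes(512) + base[100_512:]
    store = ChunkStore()
    k1, k2 = store.put(base), store.put(variant)
    assert store.get(k1) == base and store.get(k2) == variant
    return len(base) + len(variant), store.stored_bytes()


if __name__ == "__main__":
    logical, physical = demo()
    print(f"logical bytes: {logical}, stored bytes: {physical}")
```

In the demo, two 200,000-byte files that differ only in a 512-byte region typically take up little more than the space of one file, because only the chunks around the edit are new. The key design choice is cutting on content rather than at fixed offsets. An insertion would shift every fixed-size block that follows it, whereas content-defined cut points realign shortly after the change.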

"naming their next model after Cthulhu" 😒 https://t.co/Rc2xLHg089

Naming their next model after Cthulhu makes it hard to take Anthropic seriously as the good guys. It's fun at any other software company, not one that actually is flirting with extinction.

Qwen3.5-35B compressed by 20% with a ~1% performance drop on average. Now you can fit it (at 4 bits) with full context on 24GB of VRAM: a single 3090, roughly $700. https://t.co/7C4WORKFm5
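The 24GB claim can be sanity-checked with weight-memory arithmetic. This is a minimal sketch in Python built on three assumptions the post does not state: "compressed 20%" means 20% fewer parameters, every weight is stored at exactly 4 bits, and quantization overhead (scales, zero-points, any layers kept at higher precision) is ignored. The function names are illustrative.

```python
# Back-of-the-envelope VRAM budget for the post's claim.
# Assumptions (not stated in the post): 20% fewer parameters, exactly
# 4 bits per weight, no quantization overhead.

GIB = 2**30

FULL_PARAMS = 35_000_000_000               # "35B"
PRUNED_PARAMS = FULL_PARAMS * 80 // 100    # 20% removed -> 28B
BITS_PER_WEIGHT = 4
VRAM_BYTES = 24 * GIB                      # one RTX 3090


def weight_bytes(params: int, bits: int) -> int:
    """Bytes needed to hold `params` weights at `bits` bits each."""
    return params * bits // 8


def headroom_bytes(params: int, bits: int, vram: int) -> int:
    """VRAM left for the KV cache and activations after loading the weights."""
    return vram - weight_bytes(params, bits)


if __name__ == "__main__":
    for label, params in [("original", FULL_PARAMS), ("compressed", PRUNED_PARAMS)]:
        w = weight_bytes(params, BITS_PER_WEIGHT)
        h = headroom_bytes(params, BITS_PER_WEIGHT, VRAM_BYTES)
        print(f"{label:>10}: weights {w / GIB:.2f} GiB, headroom {h / GIB:.2f} GiB")
```

Under these assumptions, the weights shrink from about 16.30 GiB to about 13.04 GiB. Headroom grows from about 7.70 GiB to about 10.96 GiB, and that headroom is what the KV cache for long contexts has to fit into. Whether "full context" actually fits depends on the model's per-token KV-cache size, which the post does not give.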

BREAKING: Vice President JD Vance: “Ilhan Omar definitely committed immigration fraud against the United States of America” https://t.co/MsTPC4rCax

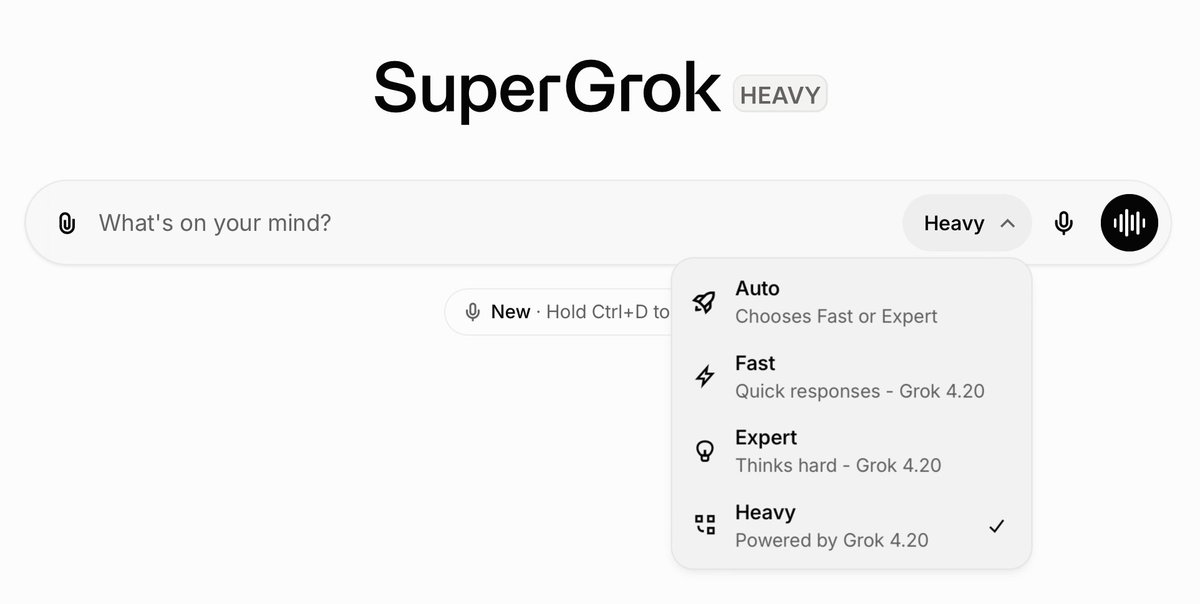

Grok Heavy is a complete game changer. It’s noticeably faster now, and I honestly use it for everything. I’ve got 16 agents running for me at all times, and it makes me feel superhuman. It’s like having a team of the smartest minds working for you 24/7. I can break down anything, verify anything, and get answers instantly. It’s hard to explain until you actually use it. For me, $3,000 a year is a no-brainer, especially if you are serious about AI.