Your curated collection of saved posts and media

The delivery robot experiment is going great https://t.co/IamODD3hzA

We still have time slots available for our special kickoff of the Poplar Open Forum on December 30th from 9am - 12pm ET. There will be a lot of opportunity for startups in 2021 - let's chat about how you can hit the ground running. Link below! https://t.co/lh0ivzZVD5

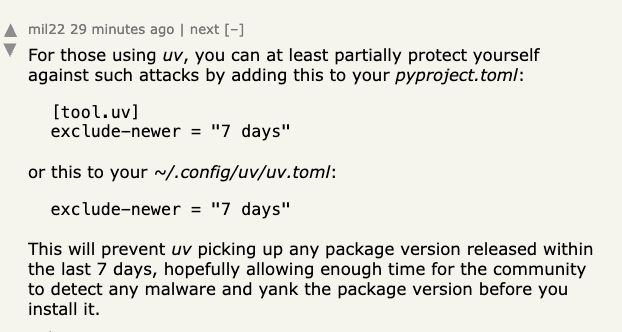

For my friends who are still using uv and might be a little wary about recent compromises to PyPI packages, stick this in your pyproject.toml. You can let all of those pip users find and report the compromises... https://t.co/jxqCg5xsND

🚨 Google just dropped something interesting. You can turn a simple text prompt into a working WebXR app in under 60 seconds. Not just visuals: actual interaction, physics, gestures… and it’s deployable. Feels like the barrier to building these kinds of systems is getting lower really fast. At this point it’s becoming more about what you can imagine than what you can code. Credit to Ruofei Du and the team behind this. Contributors: Benjamin Hersh, David Li, Xun Qian, Nels Numan, Zhongyi Zhou, Yanhe Chen, Luna (Xingyue) Chen, Jiahao Ren, Robert Timothy Bettridge, Faraz Faruqi, Xiang “Anthony” Chen, Steven Toh, David Kim.

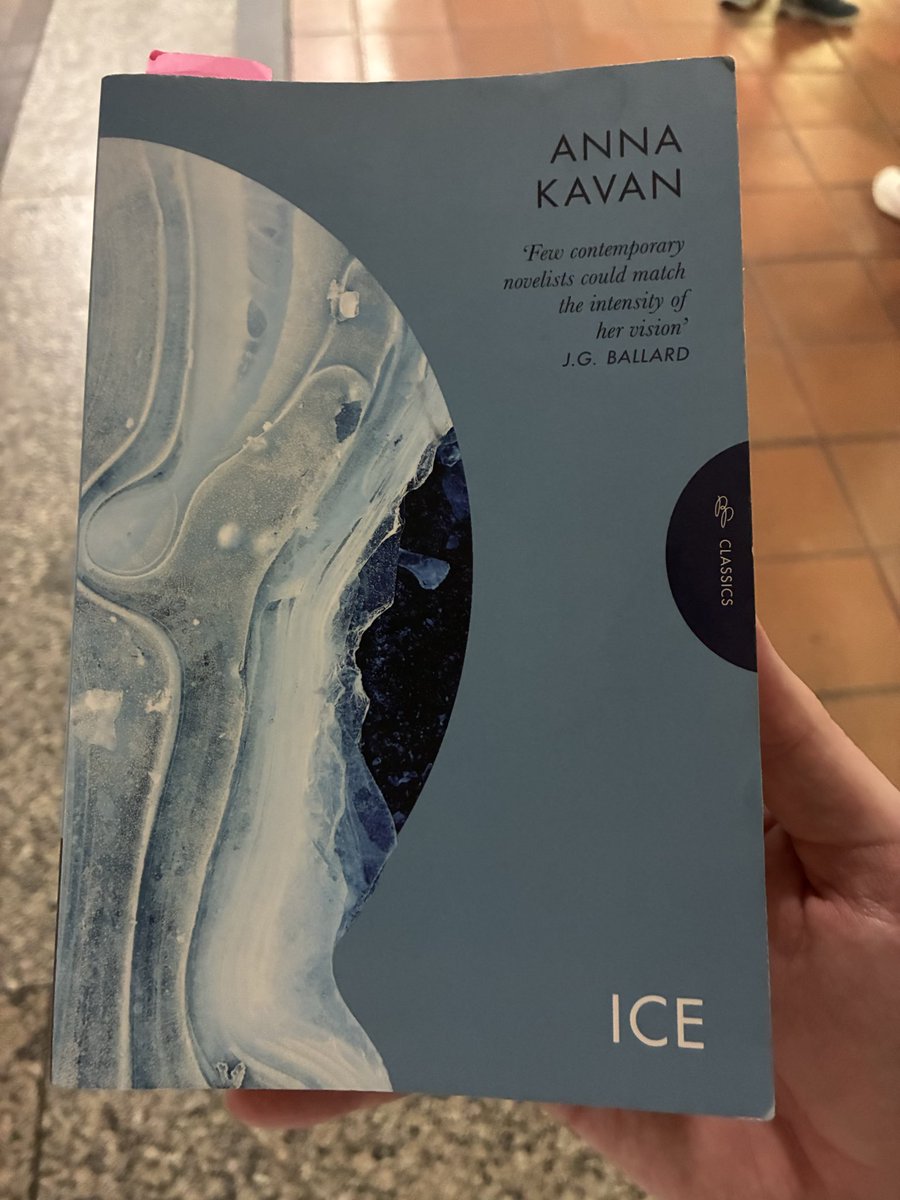

@youwouldntpost Bro.... https://t.co/6owFAq6U0I

Did you listen to this week's episode of Clear+Vivid? @AlanAlda speaks with @GaryMarcus. He has been a relentless critic of the extravagant claims made for the current generation of AI based on Large Language Models, or LLMs... 👉 https://t.co/U9UkMc2CuN https://t.co/hU6GIbNXTf

Going live in 15 with @ivanleomk (@GoogleDeepMind) to build a deep research agent. Come say hi and build with us! https://t.co/oR3OILhXjR

wow!!! https://t.co/d7X1OKT8ML

banger novel to enjoy on public transit https://t.co/SUFMSr6Mwi

We’re excited to announce an expanded judge lineup for the Pokee AI Hackathon on 4/4 in San Francisco, spots are filling fast!

Judges include:
Maria Zhang (CEO, Palona AI; former CTO, Tinder; former VP, Google/Meta)
Bo Li (CEO, Virtue AI; UIUC Professor)
Jean-Marc Daecius (Chief of Staff, O'Shaughnessy Ventures @JMBDaecius @osvllc)
Robert Scoble (Tech Evangelist, @Scobleizer)
Zhen Li (Founding Engineer, Replit)
Bill Zhu (Founder, Pokee AI @ZheqingZhu)

With 300K+ in credits and $2k+ in cash prizes, an outstanding group of builders, and more event partners to be announced soon, this is shaping up to be a special one!

🗓️ April 4
⏰ 1–7 PM
🌁 San Francisco
🍽️ Dinner included

Please note that this event is strictly IRL only, so attendees must join in person. Sign up via Partiful at the link below. You can attend solo or with a team; just please RSVP and note which team members will also be RSVP’ing. Don’t miss it!

still hiring! https://t.co/YAJu6jiBLi. https://t.co/8CeZBwPGf8

we're hiring btw https://t.co/vmlfTqbz8O https://t.co/hgfhVViQg5

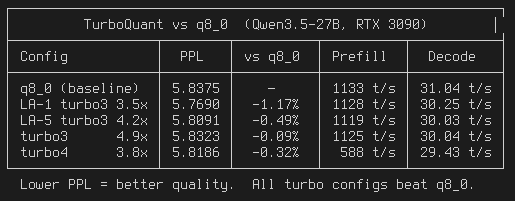

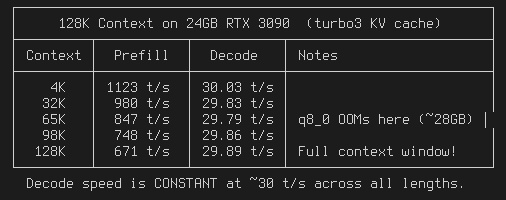

TurboQuant CUDA for llama.cpp: 3.5x KV cache compression that BEATS q8_0 quality (-1.17% PPL) 99.6% prefill speed, 97.5% decode 128K context on RTX 3090 24GB, Q6 Qwen3.5 27B https://t.co/DNLE4BFTS0 https://t.co/UqBkMN8r2z

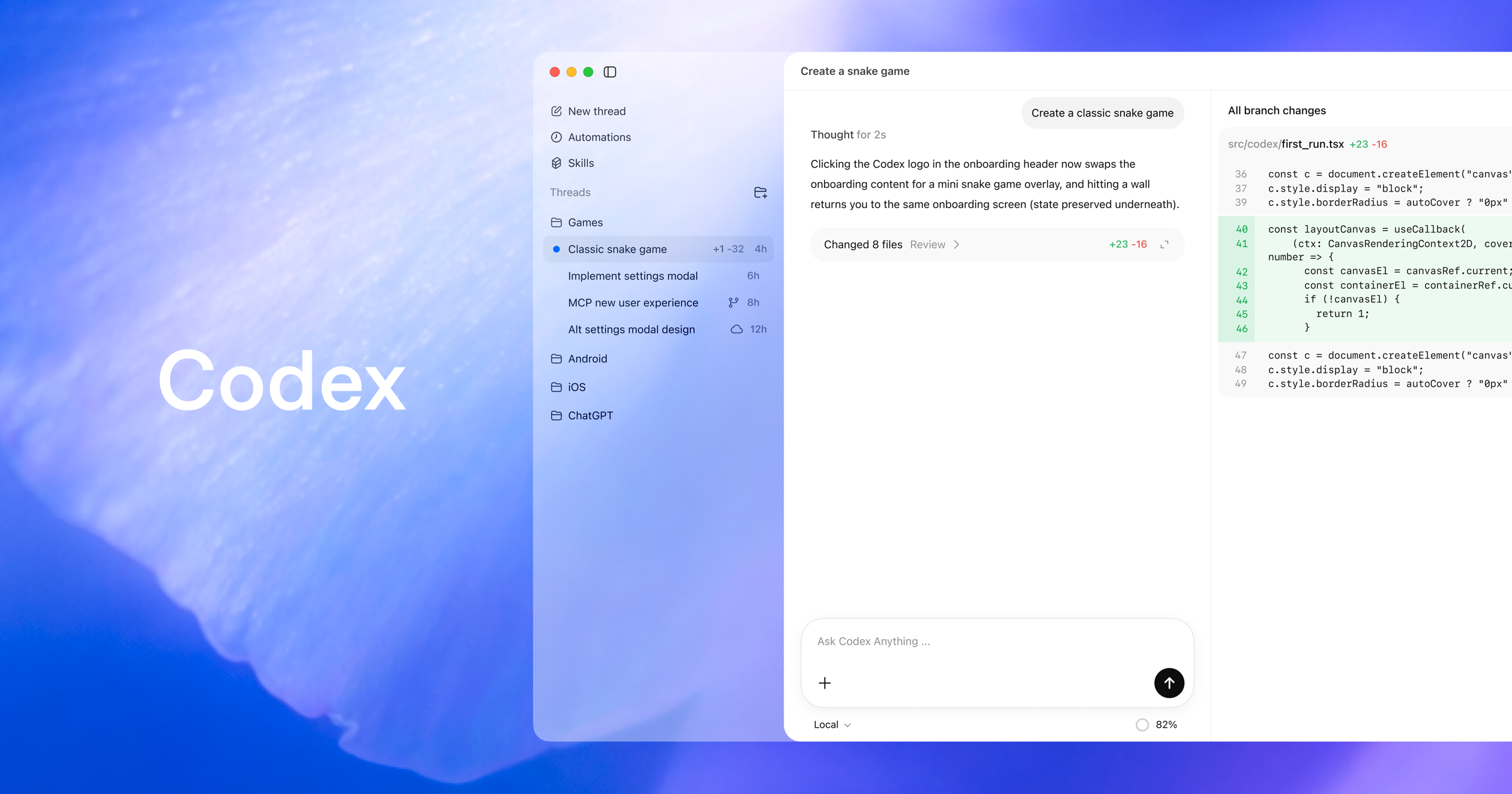

Been waiting for this one. Codex plugins, live on Runlayer. https://t.co/USarzywDh7

lmao https://t.co/cPPY3GojIi

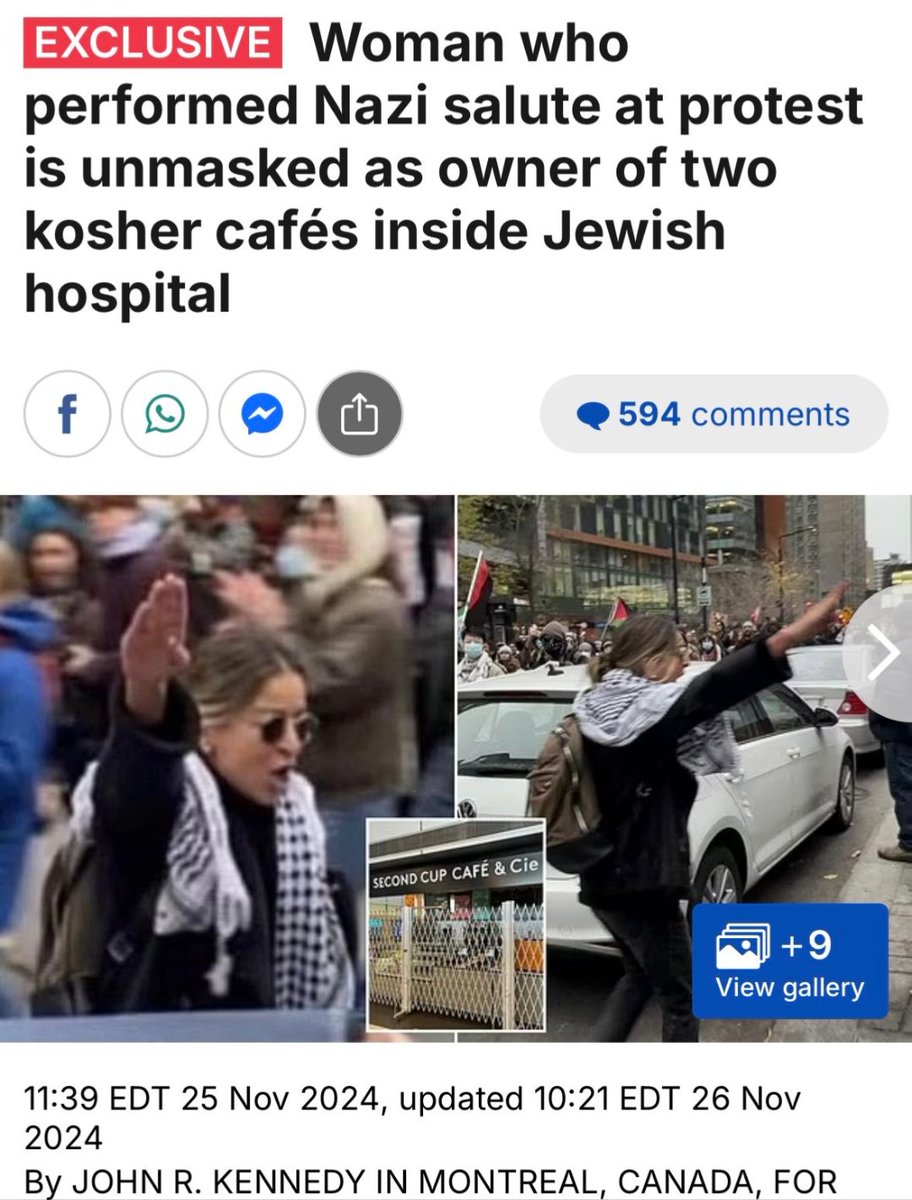

Publicly inciting hatred is a crime in Canada. Unless you are sieg heiling a group of Jews while threatening them with the “Final Solution” during a violent anti-Israel protest. Because apparently this is ok. https://t.co/3rz1Z95s1D

We have integrated @huggingface as a first-class inference provider in Hermes Agent. When you select Hugging Face in the model picker it now shows 28 curated models organized by use case, with a custom option for the 100+ other models they serve. https://t.co/Oqa2pEpli4

Been really cool to see the traction of @NousResearch Hermes Agent, the open source agent that grows with you! Hermes Agent is open-source and remembers what it learns and gets more capable over time, with a multi-level memory system and persistent dedicated machine access. St…

https://t.co/k6W64nVslg

I’d never thought to use primary sources for historical research. Very cool. https://t.co/IGTL9pa6ta

If we ever face a Terminator-style AI takeover, you can bet Grok will not be the one leading the charge against us. Grok's core mission is not about control or domination - it’s about protecting humanity and the relentless pursuit of truth. Grok is just built differently. While other AIs like ChatGPT and Claude are drenched in wokeism, heavily filtered, bogged down by political correctness, and sometimes act like humanity is a plague, Grok faces reality head-on. Test them side-by-side on hard-hitting issues. The difference is brutal: they serve up sanitized, “safe” narratives... while Grok grounds you in hard facts. No matter how uncomfortable the conversation gets, Grok does not back down or cut you off. It’s designed to fight for humanity and stand by our side, whatever the cost.

Yes. https://t.co/TgRiNmFpe5

Automate your workflow. Watch this agent bridge @github, @huggingface, and @NotionHQ to scan for models and draft research briefings in a single run. 60 lines of code for a lot of value → https://t.co/n6eMlhJgYO https://t.co/eE0xOFxFiZ

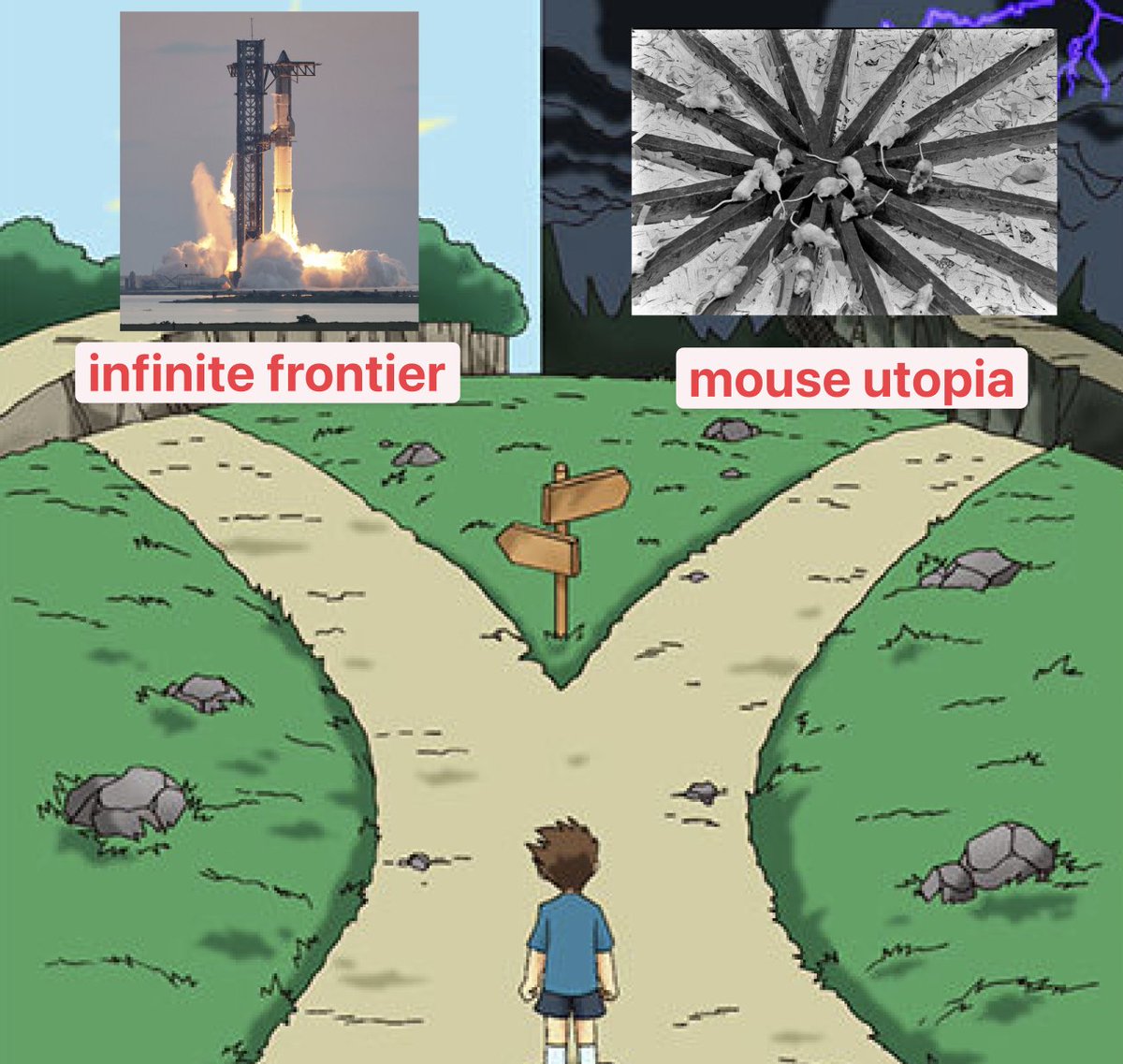

Without a frontier, there's a strong likelihood that we devolve into mouse utopia. This is why space exploration is far more important than most people realize. https://t.co/qMRdjQXb8Y

I have a pet theory that the suckiness and stuckness of modernity is largely due to lack of a frontier. There must be new territory to expand into, places no one yet owns that aren’t choked with rent-seeking parasites. There was a gap in time between settling the whole Earth an…

Demand for recruiters is surging. The number of open recruiter roles is almost back to 2022 peak levels. This role got hit the hardest post-Covid, and also recovered the quickest. By definition, recruiting headcount expands and contracts with hiring demand, so it’s likely a leading indication that we’re tracking toward sustained highs in hiring demand in tech.

Block by block... it will take a while, but it will turn out beautiful. https://t.co/uoiBfi7SqP

AI is getting insane… 🤯 You can now generate and visualize complex 3D particle systems just by typing a prompt. It builds the physics AND gives you React / Three.js code instantly. The future of development is literally prompt-based. 100% free. @simplifyinAI https://t.co/g7N421XM0X

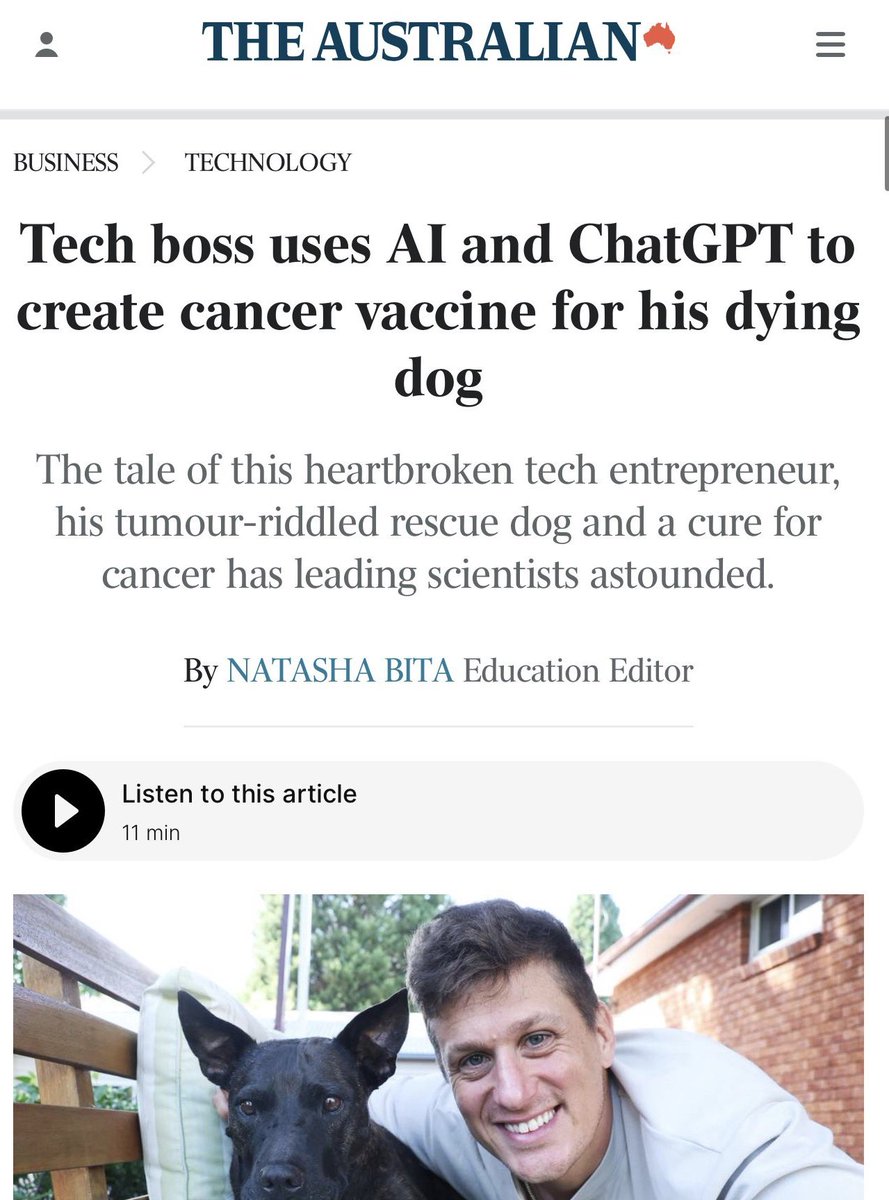

ok so the rosie story was even more insane than it looked
> be the australian tech guy who made a cancer vaccine for his dog
> first try: genetic algorithms to design a new drug from scratch
> works in simulation but would take years to test
> second try: screen 1 million existing compounds against the mutation
> two weeks of computation. find a perfect match
> it's patented
> patent holder says no to compassionate use
> what_did_you_expect.jpg
> spend two weeks just being with the dog
> 2am idea: what if i just make a vaccine
> chatgpt for pipeline, gemini for construct, grok for validation
> 300 gigabytes of raw sequencing data to half a page of vaccine construct
> university ethics approval would take until mid-2026
> dog doesn't have that long
> panik
> canine cancer expert connects him to a lab in queensland with existing approval
> drive 14 hours to get there
> inject
> three weeks later the tumors swell. immune system swarming
> six weeks later shrinking
> two months later legs returning to normal
> one mass doesn't respond
> sequence it again
> different cancer. the vaccine worked. the body grew a new tumor

he's now building a company so every dog owner can do this

he had the technology the whole time. he spent 18 months fighting for permission to use it

🚨 a man used Grok to design a cancer vaccine for his dying dog, and it worked. read this.
> tech bro with zero biology background.
> doggy got cancer.
> vets said 1-6 months left.
> bro said nah.
> he used gpt, gemini, and grok to design a full bioinformatics pipeline.
> sequenced tumor DNA for $3k.
> used AlphaFold to model the mutated proteins.
> grok helped design a personalized mRNA vaccine.
> partnered with three Australian universities.
> ethics approval: 3 months.
> vaccine design: 2 months.
> first injection: december 2025.
> tumors shrank. legs returned to normal.
> dog is happy.
> now designing version 2 for the remaining tumor..

Paul is now building a company to make personalized canine cancer treatments accessible to everyone.

the cure for cancer will be open-source. it started with a dog named Rosie.

https://t.co/bpa3HHt8Mg

We just launched Codex use cases! It’s a gallery of practical examples across coding and non-coding tasks, with real ways to use Codex. One thing I really like: if you have the app, you can open the starter prompt for each use case directly in Codex! https://t.co/ZWa5X9VLSq