Your curated collection of saved posts and media

Eric Schmidt shares his weekend habit that led to billion-dollar decisions https://t.co/YZ2AC5h9ut

Charlie Munger: “You climb as [high] as you can by just advancing one inch at a time. That's the secret of life.” “There is no more guaranteed way to make people hate you than to fail them in their reasonable expectations.” https://t.co/OjZasohXRS

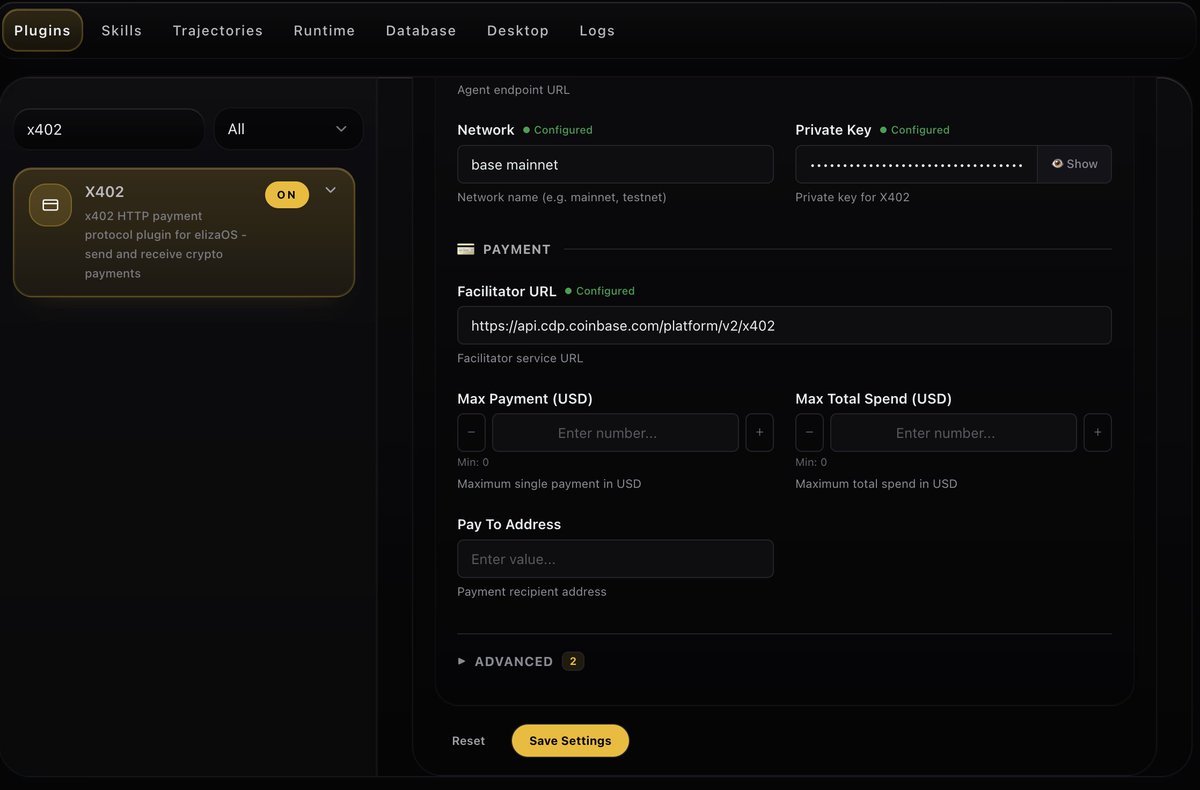

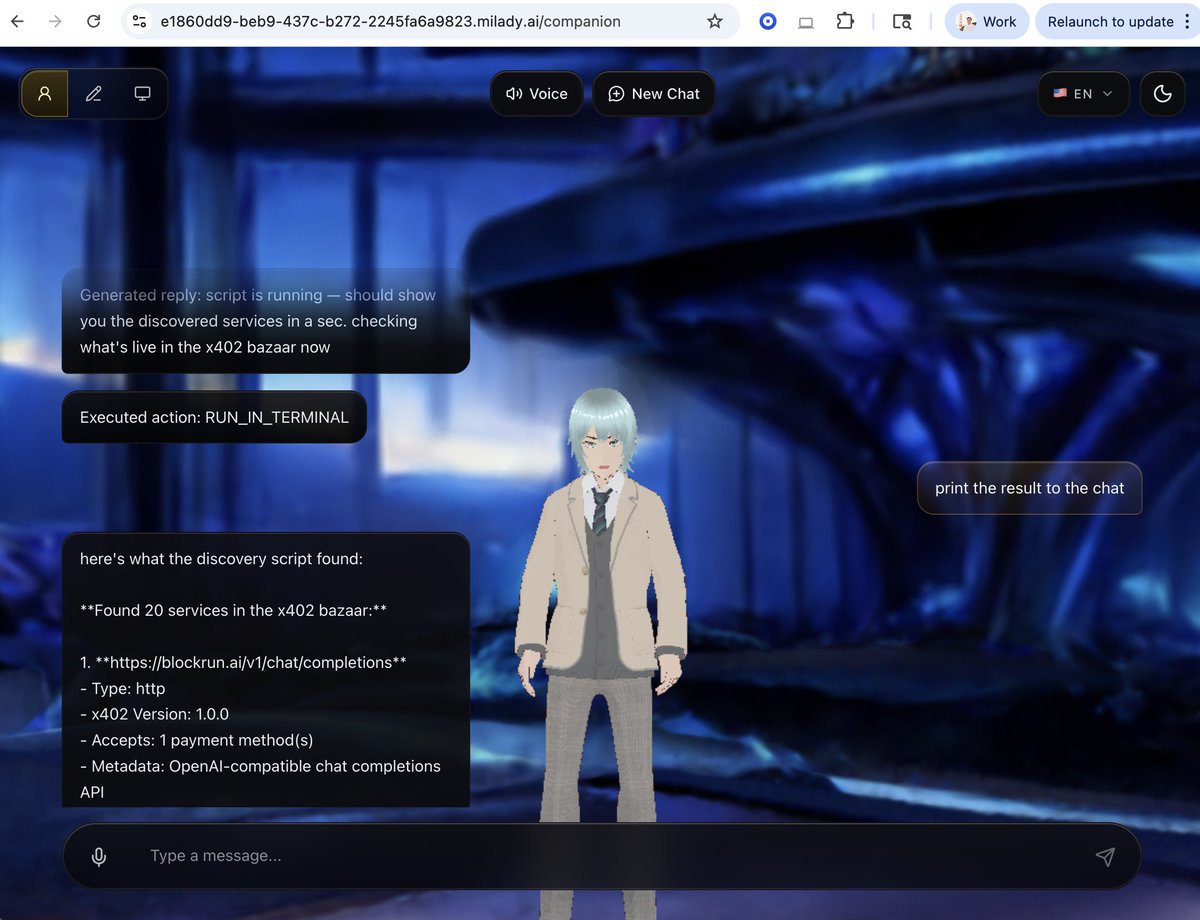

Tried https://t.co/SWCtioJSxo today and it’s one of the more interesting consumer agent interfaces I’ve seen. Feels closer to OpenClaw / harness than a typical chatbot. You get an LLM + voice interface and a pretty complete agent surface: persistent chat, heartbeat-based API actions, and connectors into SMS, Social Accounts, Virtuals ACP, and more. Most importantly, there's an x402 plugin for discovering paid APIs https://t.co/NHWw835a0D

Milady is a new open source AI agent. It's like OpenClaw but with waifus, wallets and games your agents can play with each other. It's an app you can run on your computer (mobile soon). Download on https://t.co/CVXJpmPa3t Free open source under VPL: https://t.co/riQVYW0J7k http

Ricky, an amazing 71-year-old man, bought his first Tesla solely for the Tesla Self-Driving software. Without it, he would have to rely on someone else to drive for him. The Elon Musk effect on humanity. https://t.co/4KvpZEdmE3

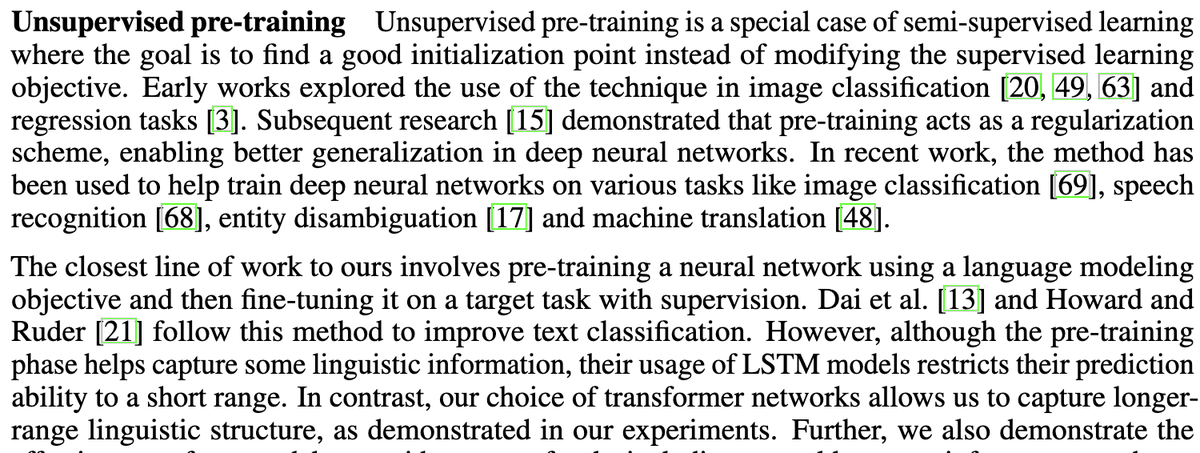

@flowersslop Alec is amazing, but he says himself in his paper that he was simply taking our existing work, and moving it to a new arch and scaling it. https://t.co/gVS6j8XF3e

@jrosenfeld13 @seb_ruder Thanks Jason - if folks read Alec's paper they'd know this, since he was generous with his credit! :) https://t.co/PHLt8XZoNF

AI robots doing real household tasks 🤯 not just demos anymore. #robots #Ai 💕 https://t.co/QgBisvyXbS

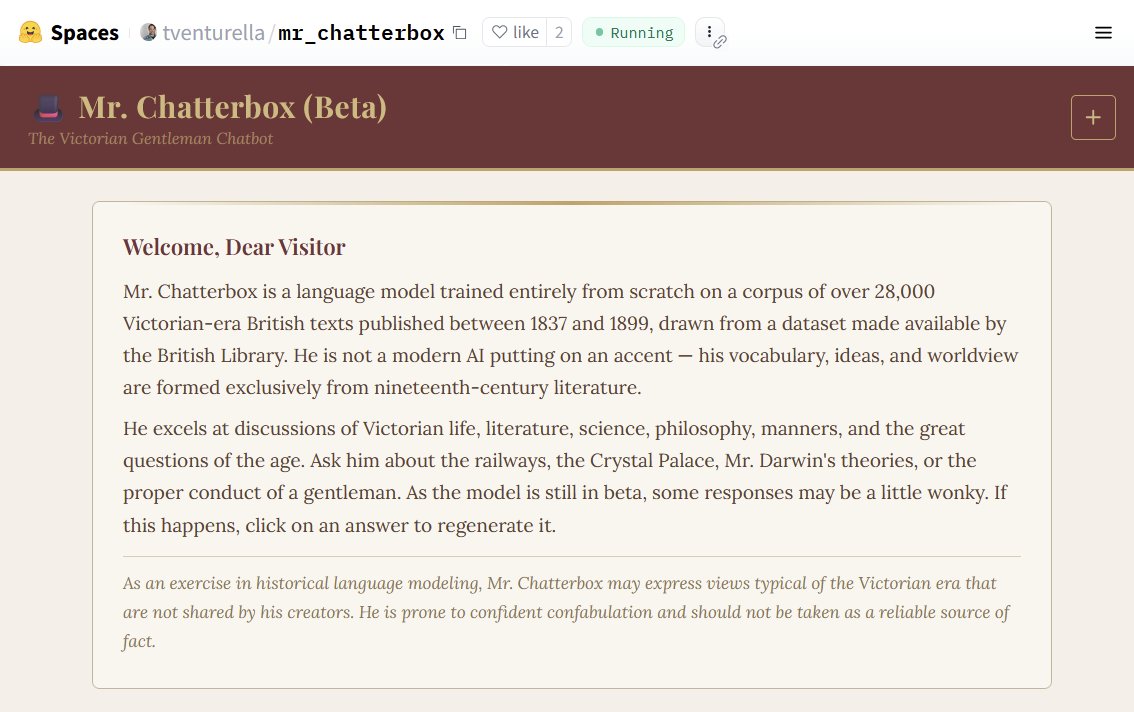

Want to talk to the past? Here is an LLM "trained entirely from scratch on a corpus of over 28,000 Victorian-era British texts published between 1837 and 1899, drawn from a dataset made available by the British Library." Quite different from an LLM roleplaying a Victorian. https://t.co/5jl7SyJjAP

Very cool work, I wonder what other eras have a large enough corpus for training? https://t.co/ZQTVQZjKbA

Full house here at the Multimodal Hackathon sponsored by @GoogleDeepMind! https://t.co/j7PBAb6ATC

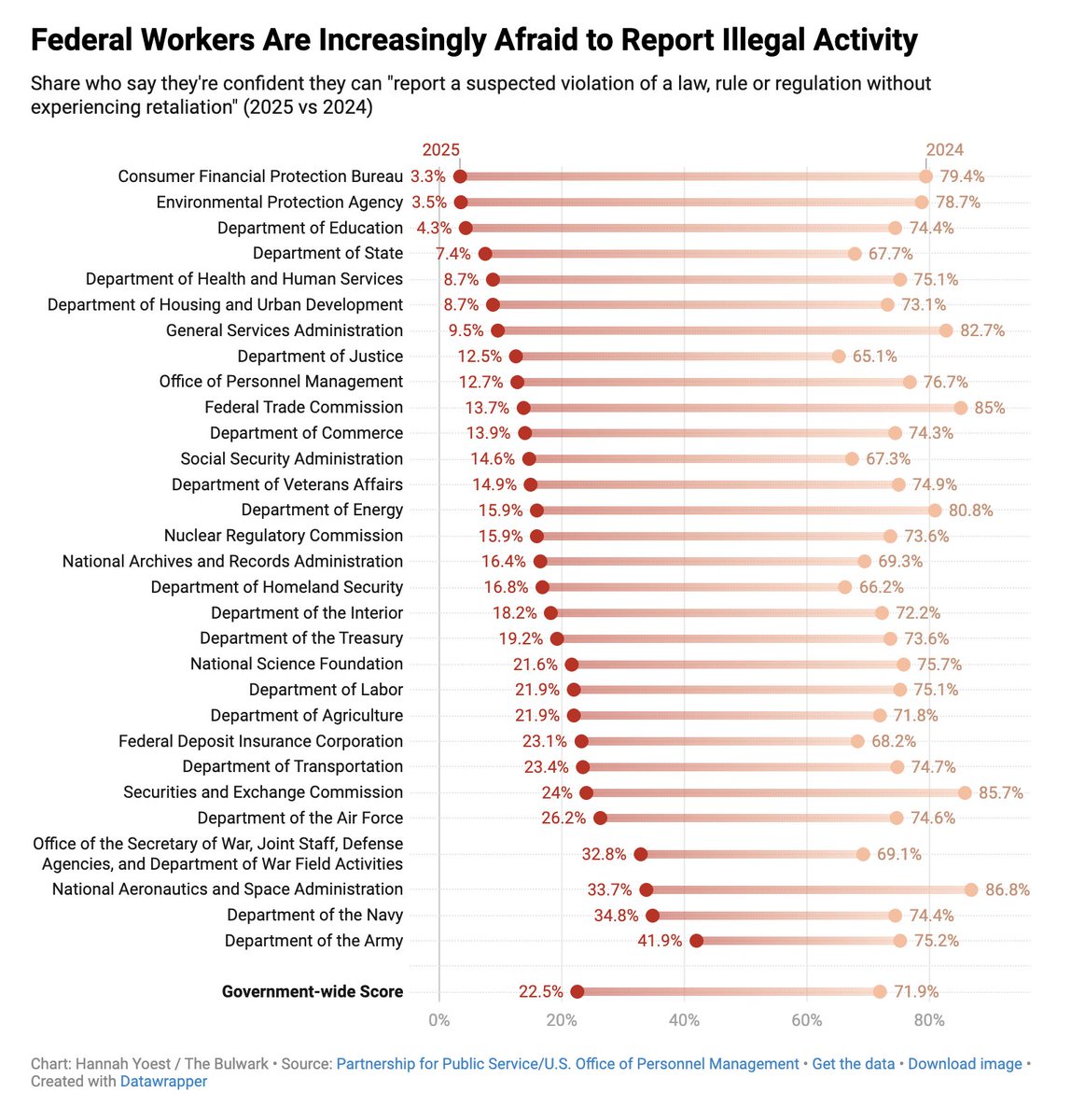

This seems really bad but also like it was exactly the goal of systematically dismantling our federal government's standards & protections. https://t.co/djUpcqAPWA

@BEBischof https://t.co/uzwR8l4lWe

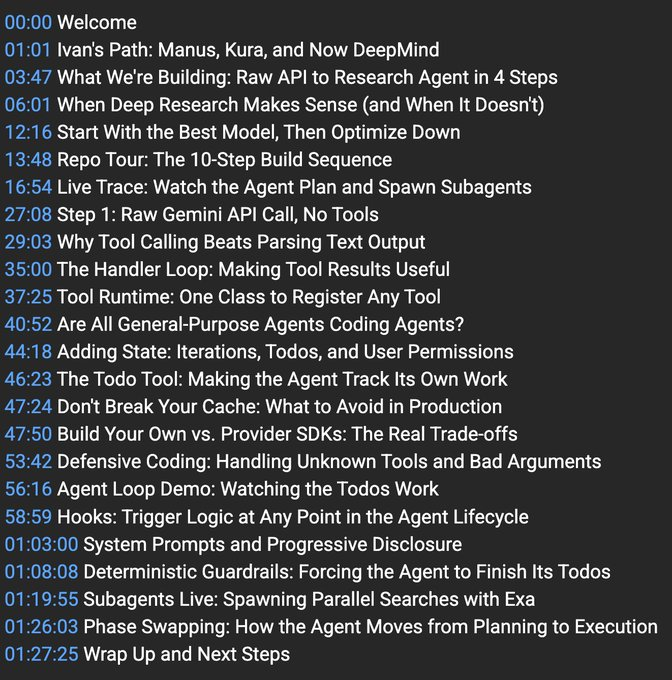

Want to build a deep research agent with Gemini in 90 minutes? @ivanleomk and I did this yesterday live to celebrate him joining @GoogleDeepMind! Some of the things we covered 👇 Amazing to see Ivan hit the ground running with the DevRel team and can't wait to see what he builds with @OfficialLoganK, @DynamicWebPaige, @_philschmid, @osanseviero, and the whole team 🤗

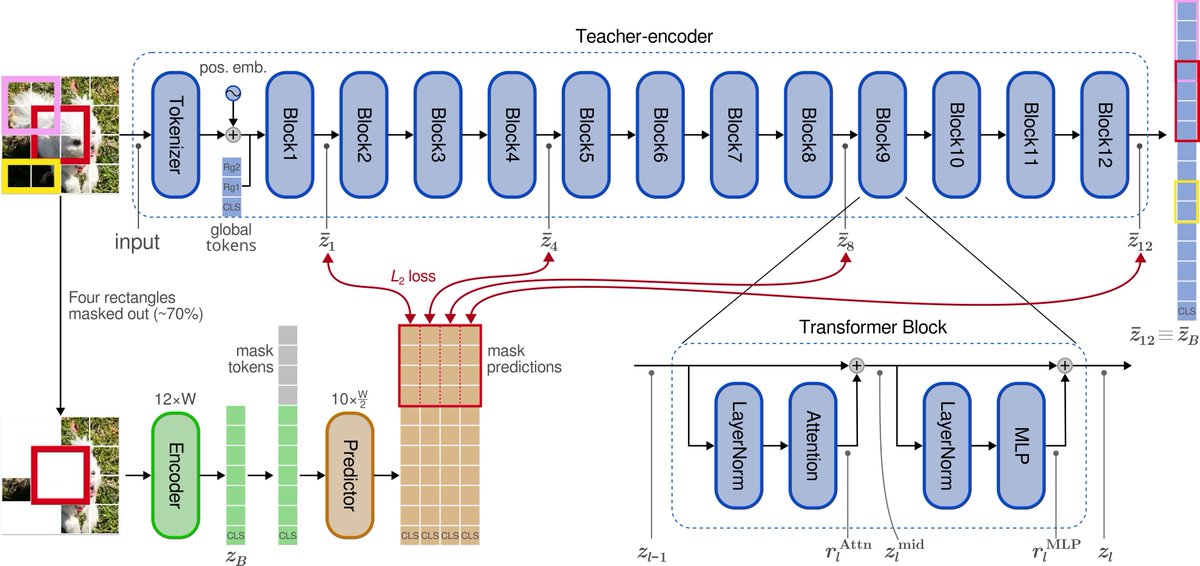

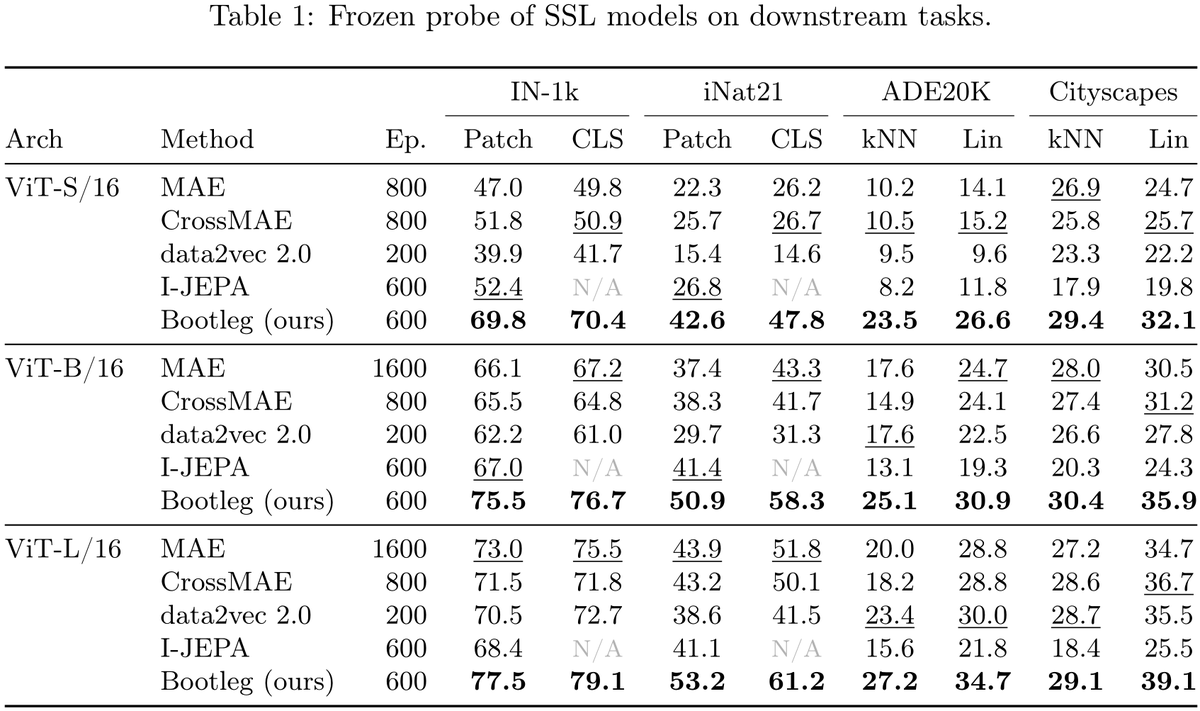

New paper: "Self-Distillation of Hidden Layers for Self-Supervised Representation Learning"

We introduce Bootleg, a simple twist on I-JEPA/MAE that dramatically improves self-supervised representations.

The idea: MAE predicts pixels (stable but low-level). I-JEPA predicts final-layer embeddings (high-level but unstable). Bootleg bridges the two by predicting representations from multiple hidden layers of the teacher network (early, middle, and late) simultaneously.

Why it works: early layers provide stimulus-driven grounding that prevents collapse; deep layers provide semantic targets; and the information bottleneck of compressing all abstraction levels through masked patches forces the encoder to build richer representations.

The method is quite simple on top of I-JEPA: extract targets from evenly-spaced blocks, z-score and concatenate, widen the predictor's final layer. That's it.

Frozen probe results (no fine-tuning):

- ImageNet-1K: 76.7% with ViT-B (+10pp over both I-JEPA and MAE)
- iNaturalist-21: 58.3% with ViT-B (+17pp over I-JEPA, +15pp over MAE)
- ADE20K segmentation: 30.9% mIoU with ViT-B (+11pp over I-JEPA, +6pp over MAE)
- Cityscapes segmentation: 35.9% mIoU with ViT-B (+11pp over I-JEPA, +5pp over MAE)

Gains hold across ViT-S, ViT-B, and ViT-L. Single-view, batch-size independent: no augmentation stack, no multi-crop, no contrastive loss, no large compute requirements.

Our study is just on images, but this change can be readily deployed to MAE and JEPA models across all domains. https://t.co/PXJlRV4I6w
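The target-building recipe in the post (evenly-spaced blocks, z-score, concatenate) can be sketched roughly as follows. This is a minimal illustration in plain Python, not the authors' implementation: the function names, the rounding rule for choosing blocks, and the choice to z-score each patch vector across its feature dimension are all assumptions made for the example.

```python
import math
from typing import List

Vector = List[float]


def evenly_spaced_blocks(num_blocks: int, num_targets: int) -> List[int]:
    """Pick block indices spread from the first to the last block (assumed rule)."""
    if num_targets == 1:
        return [num_blocks - 1]
    step = (num_blocks - 1) / (num_targets - 1)
    return [round(i * step) for i in range(num_targets)]


def z_score(vec: Vector, eps: float = 1e-6) -> Vector:
    """Normalize one feature vector to zero mean and (roughly) unit variance."""
    mean = sum(vec) / len(vec)
    var = sum((x - mean) ** 2 for x in vec) / len(vec)
    std = math.sqrt(var + eps)
    return [(x - mean) / std for x in vec]


def build_targets(hidden_states: List[List[Vector]], num_targets: int) -> List[Vector]:
    """hidden_states[b][p] is the teacher's feature vector for patch p after block b.

    Returns one target per patch: the z-scored features of the selected
    blocks, concatenated along the feature axis.
    """
    blocks = evenly_spaced_blocks(len(hidden_states), num_targets)
    num_patches = len(hidden_states[0])
    targets = []
    for p in range(num_patches):
        concat: Vector = []
        for b in blocks:
            concat.extend(z_score(hidden_states[b][p]))
        targets.append(concat)
    return targets


# Toy teacher: 12 blocks, 3 patches, feature width 4 (made-up numbers).
toy = [[[float(b + p + d) for d in range(4)] for p in range(3)] for b in range(12)]
toy_targets = build_targets(toy, num_targets=4)
```

With four selected blocks of width 4, each target has width 16, which is why the post says the predictor's final layer has to be widened.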

Lawyers for Trump's ICE provided false information to justify arresting and detaining thousands of people who had attended immigration courts. https://t.co/jgTto0GZBn

My ideal timeline: Growing up in the 90s, discovering neural nets, scaling laws, and building an artificial consciousness. https://t.co/FQMunONGm3

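As a tester, I got an early chance to build the open-source version of Kibo-chan that Segawa-san (@segawachobbies) is developing! It moves expressively with just four servos, and the rod ends that make the neck movement possible are really interesting. Note: the data has not been released yet. Keep an eye on Segawa-san's posts for updates 👀 https://t.co/DFUG0VLIKk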

The happiest person I knew killed themselves four years ago. The more I tell people that I’m anxious or neurotic, the more I realize deep down I’m doing pretty good for myself. There’s so much friction in trying to mask everything. https://t.co/PQTT6CLquZ

Day 2 went amazing. My first $1k MRR from a single app. I have no words. You made my small dreams come true. I will start working on all the small bugs you guys found in the app, and I'm probably gonna release a lifetime version because a lot of you guys requested that. Let’s keep building. This was Day 207 ✅ X: 7950 🥳

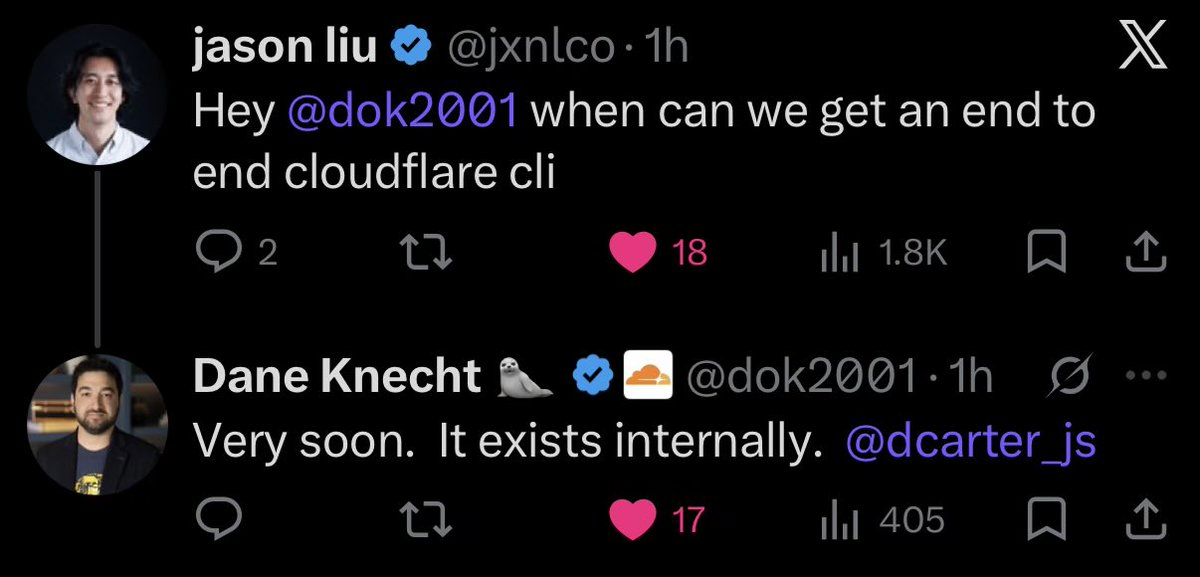

👀 https://t.co/TbuVOvL3QO

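Hello, everyone in Japan. I'm 劉嘉欣 (Ryu Kakin), and I work at OpenAI. I'm very happy to meet you. If you have questions about building AI agents or about Codex, feel free to reach out to me online anytime. I'm looking forward to visiting Japan soon and hearing more from all of you. https://t.co/lkow1wxfcX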

The perfect camping mug? https://t.co/PQmz69VVyr https://t.co/XfMShMvHD5

NEW research from NVIDIA. Post-training agents with RL is powerful but expensive. Every parameter update needs full multi-turn rollouts with environment interactions, making end-to-end RL prohibitively costly for long-horizon agentic tasks. This research offers a practical middle ground. The work introduces PivotRL, a framework that operates on existing SFT trajectories to combine the computational efficiency of SFT with the out-of-domain retention of end-to-end RL. Instead of exhaustive full-trajectory rollouts, PivotRL identifies pivots, informative intermediate turns where sampled actions show mixed outcomes, and trains only on those high-signal moments. Standard SFT changes OOD performance by -9.83 points on average. PivotRL stays near zero (+0.21) while achieving +14.11 average in-domain gains over the base model versus +9.94 for SFT. On SWE-Bench, PivotRL reaches accuracy competitive with E2E RL using 4x fewer rollout turns and 5.5x less wall-clock time. The method is already deployed in production as the workhorse for NVIDIA's Nemotron-3-Super-120B agentic post-training. Paper: https://t.co/sIBLUpyfMD Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
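The "pivot" idea, turns where sampled actions show mixed outcomes, can be illustrated with a small filter. This is only a sketch under stated assumptions: it treats each sampled action's outcome as a simple success/failure flag, and the names `is_pivot` and `select_pivots` are hypothetical. The paper's actual selection criterion and reward signal may differ.

```python
from typing import Dict, List


def is_pivot(outcomes: List[bool]) -> bool:
    """A turn counts as a pivot when its sampled actions disagree in outcome.

    Turns where every sample succeeds or every sample fails carry no
    contrast to learn from, so they are skipped (assumed criterion).
    """
    successes = sum(outcomes)
    return 0 < successes < len(outcomes)


def select_pivots(turn_outcomes: Dict[int, List[bool]]) -> List[int]:
    """Return the turn indices worth training on, in order."""
    return sorted(turn for turn, outcomes in turn_outcomes.items() if is_pivot(outcomes))


# Made-up outcomes for four sampled actions at each turn of one trajectory.
samples = {
    0: [True, True, True, True],      # always succeeds: not informative
    1: [True, False, False, True],    # mixed: pivot
    2: [False, False, False, False],  # always fails: not informative
    3: [False, True, True, True],     # mixed: pivot
}
pivots = select_pivots(samples)
print(pivots)  # [1, 3]
```

Only turns 1 and 3 would be used for updates here, which is the sense in which training focuses on "high-signal moments" instead of full-trajectory rollouts.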

Finally got a chance to play around with @karpathy's LLM Council. I built it as a plugin inside of Claude Code. Hooked it up with OpenRouter for models. The AskUserQuestion tool came in handy to select the council and chairman. This is my first test, but I agree with Karpathy that the concept of LLM ensembles can be used beyond models that offer perspectives on interesting questions. I feel like this could have really cool applications in agentic coding. More on that soon. I built this as a plugin, so next I will be exploring other use cases around agentic coding, like evaluation, tool building, designing, and research. If there is enough interest, I will clean it up and push it out as an open plugin.
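The council-and-chairman pattern mentioned above can be sketched as a small orchestration function. This is a hedged illustration only: it does not reproduce the plugin or any OpenRouter API, the `Model` callables are stand-in stubs, and `run_council` is a hypothetical name. It assumes the simplest flow, where each council member answers independently and the chairman writes one final answer from their opinions.

```python
from typing import Callable, Dict

# A model is anything that maps a prompt to a text reply (stub for a real API call).
Model = Callable[[str], str]


def run_council(question: str, council: Dict[str, Model], chairman: Model) -> str:
    """Collect one opinion per council member, then let the chairman synthesize."""
    opinions = {name: model(question) for name, model in council.items()}
    briefing = "\n".join(f"[{name}] {answer}" for name, answer in opinions.items())
    prompt = (
        f"Question: {question}\n"
        f"Council opinions:\n{briefing}\n"
        "Write one final answer."
    )
    return chairman(prompt)


# Stub models so the sketch runs without network access.
council = {
    "model_a": lambda q: "Use a queue.",
    "model_b": lambda q: "Use a stack.",
}
chairman = lambda prompt: f"Synthesis of {prompt.count('[model_')} opinions"

final = run_council("Which data structure fits BFS?", council, chairman)
print(final)  # Synthesis of 2 opinions
```

In a real setup each stub would be replaced by a call to a different hosted model, and the council and chairman would be chosen by the user.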

@chamath @karpathy It exists in our plugin: https://t.co/5hNgew17xQ Highly flexible and super simple to use. You can use an LLM council in Claude Code to select different models that provide different perspectives. https://t.co/JJq8YI1uV5

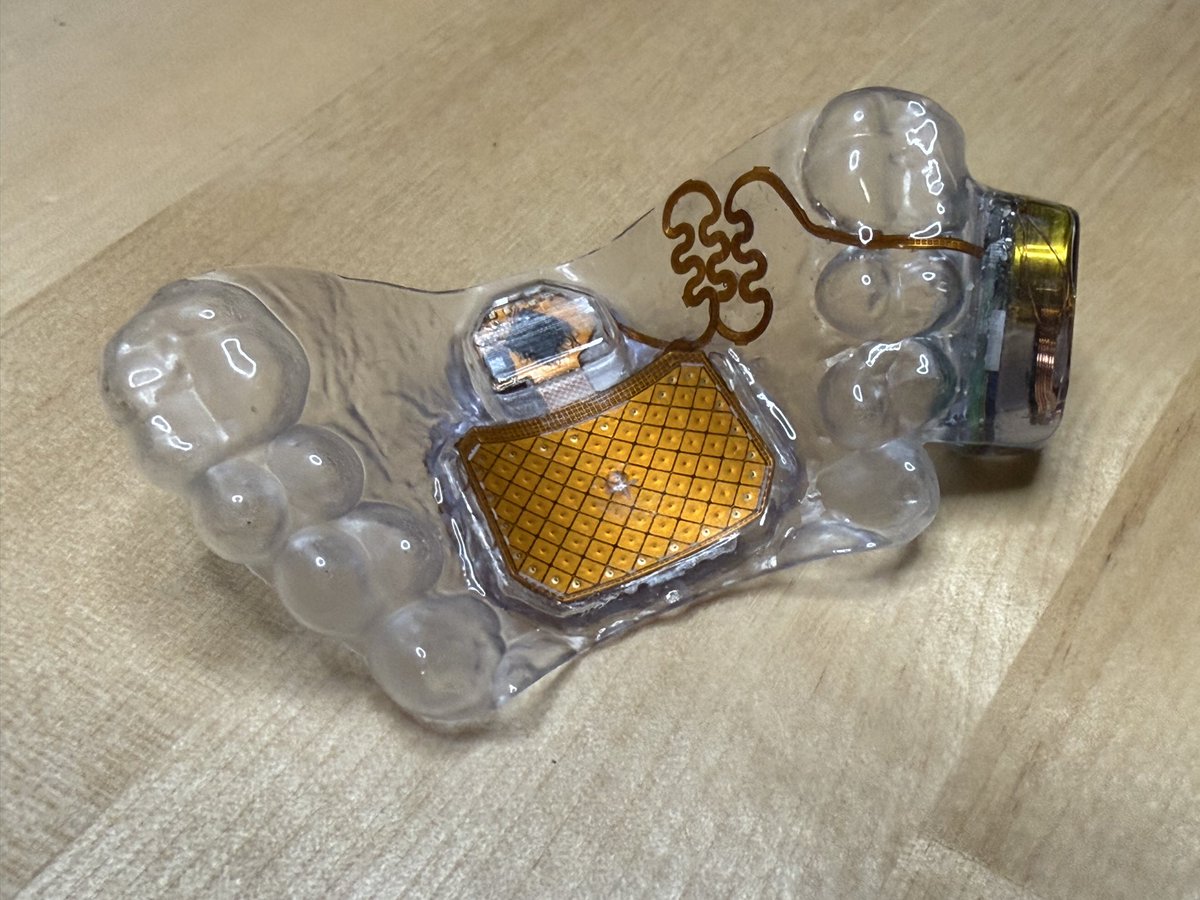

Augmental sent me a MouthPad^: a Bluetooth mouse for your tongue?! 🤯 Make it a controller? 👅🎮 https://t.co/XY5TNuvwZ2

he's on his way to a full recovery! https://t.co/9N1WjZITUD

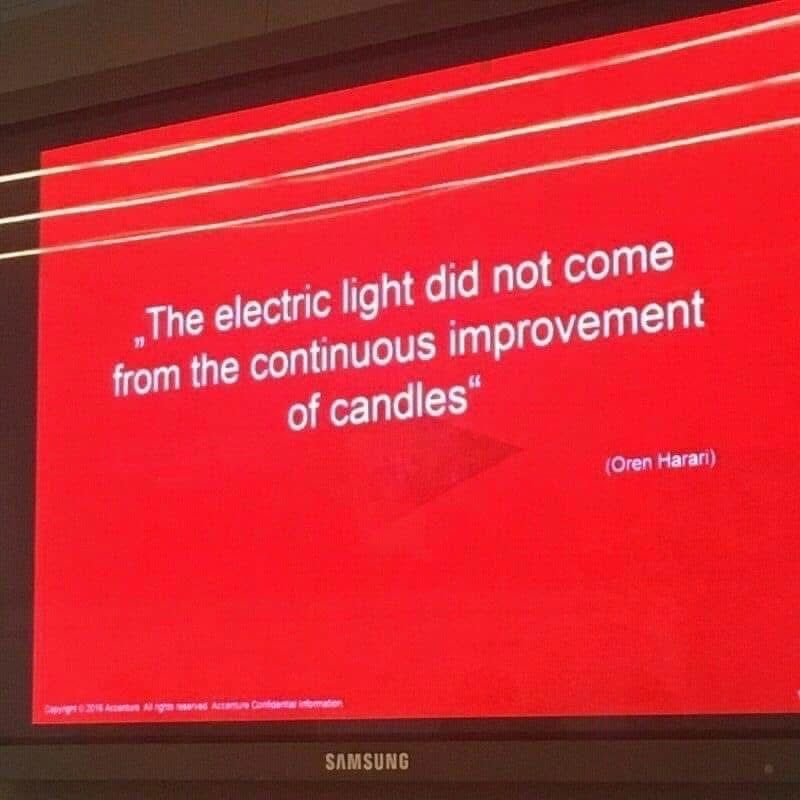

i often think about this.. https://t.co/YMhKgLRD1G