Your curated collection of saved posts and media

The Training Systems track at #PyTorchCon Europe, 7-8 April, explores distributed training, infrastructure optimization & scaling models across modern hardware. Learn more: https://t.co/tqXMnTjCrv 🎟 Register → https://t.co/zFEkkeDp2Y https://t.co/bgGPQ1baMl

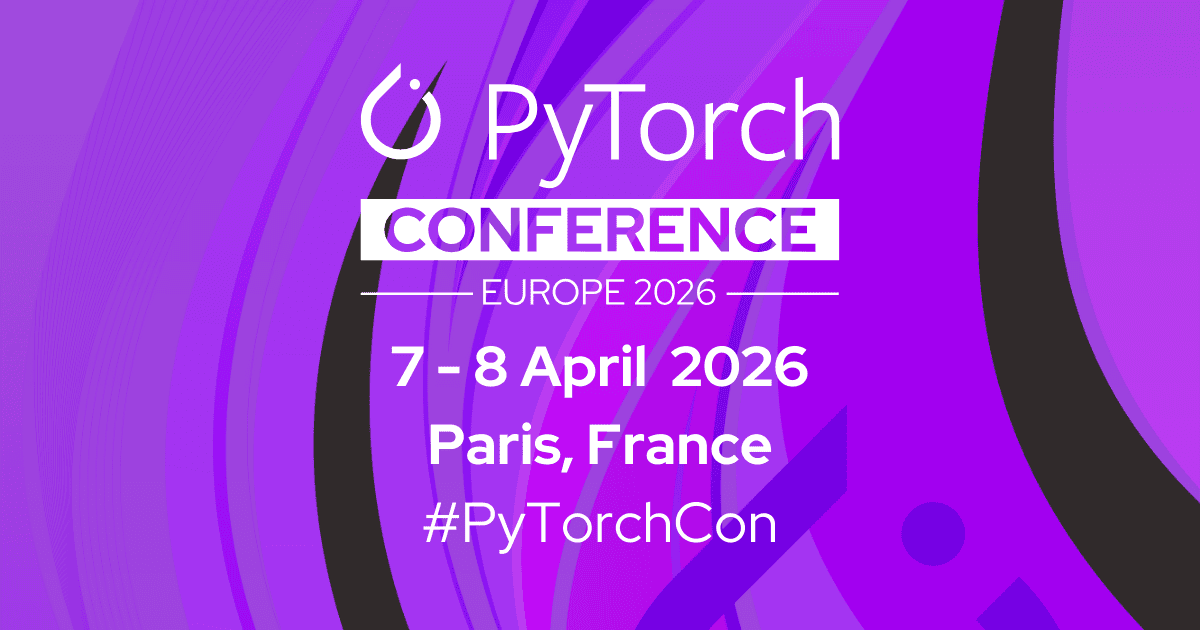

Grok 4.20 Beta is outputting 227 tokens per second. That's faster than Gemini 3.1 Flash. Faster than GPT 5.4. Faster than Claude Opus 4.6. Faster than Codex. xAI just dropped a frontier model with insane speed. This changes the entire agentic coding game. https://t.co/hO3mqK4eW9

https://t.co/788Kxhj0en

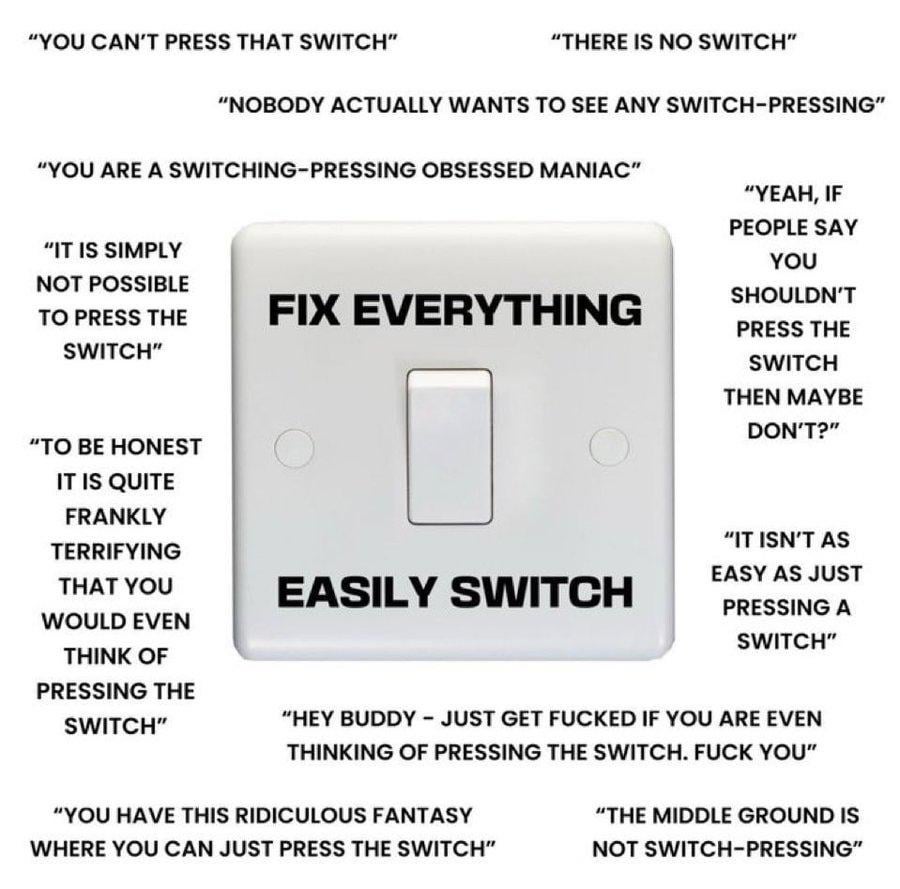

It's extremely delightful seeing the third world slop replaced by Japanese gemposts. Who knew you could just flip the switch and beautify the timeline! https://t.co/gfHYoYkVK0

Grok Imagine, https://t.co/JWXRDU5Zx4

https://t.co/4iLVE2vWYO

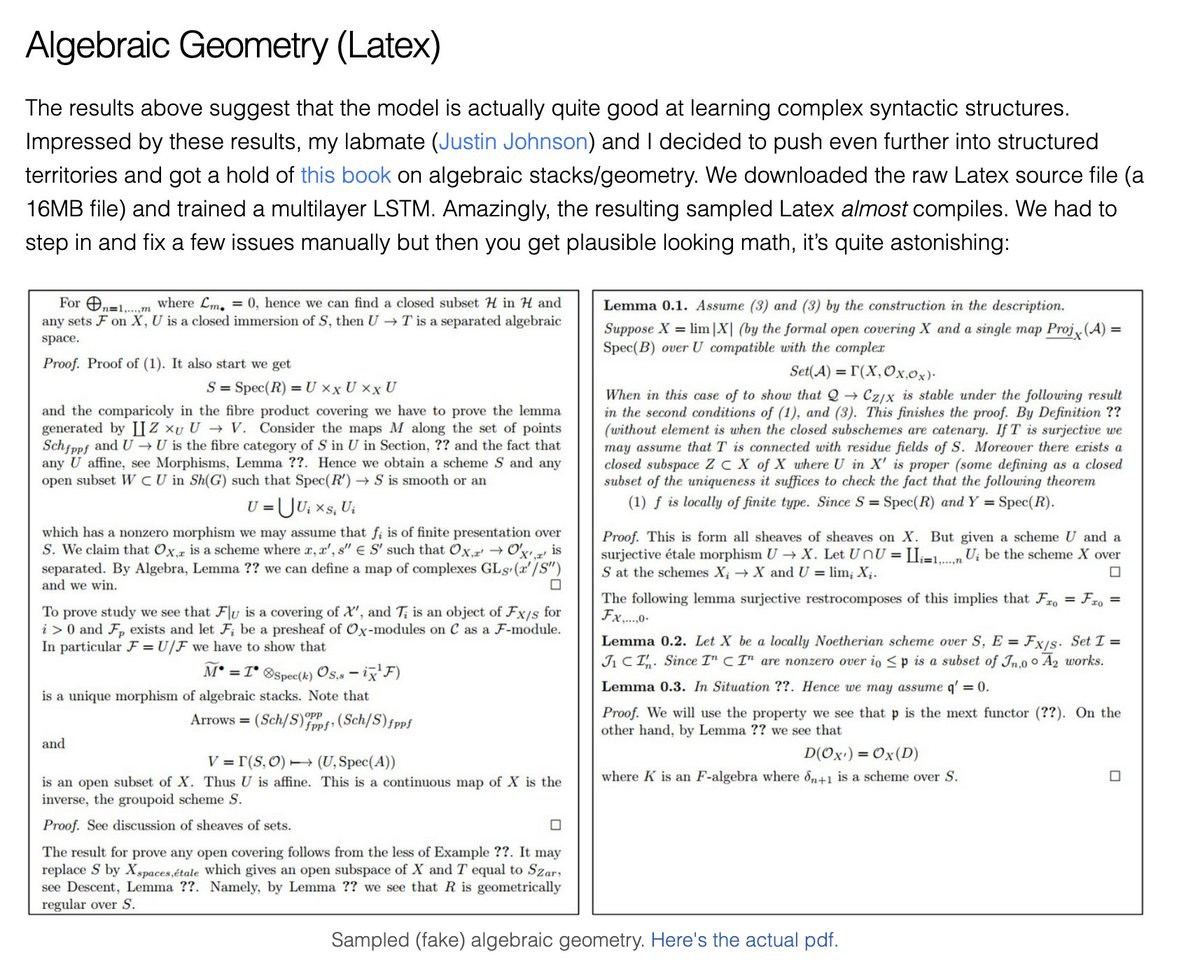

@airkatakana That's pretty early! I wasn't even doing ML yet in 2012. I don't know of any powerful demos of RNN/LSTM LMs from 2012 or before, but the first one that impressed me was Karpathy's char-rnn back in 2014-15. But the first one that started generating coherent sentences was probably the 2016 paper I cited above, which gave me conviction in LLMs as a path toward AGI.

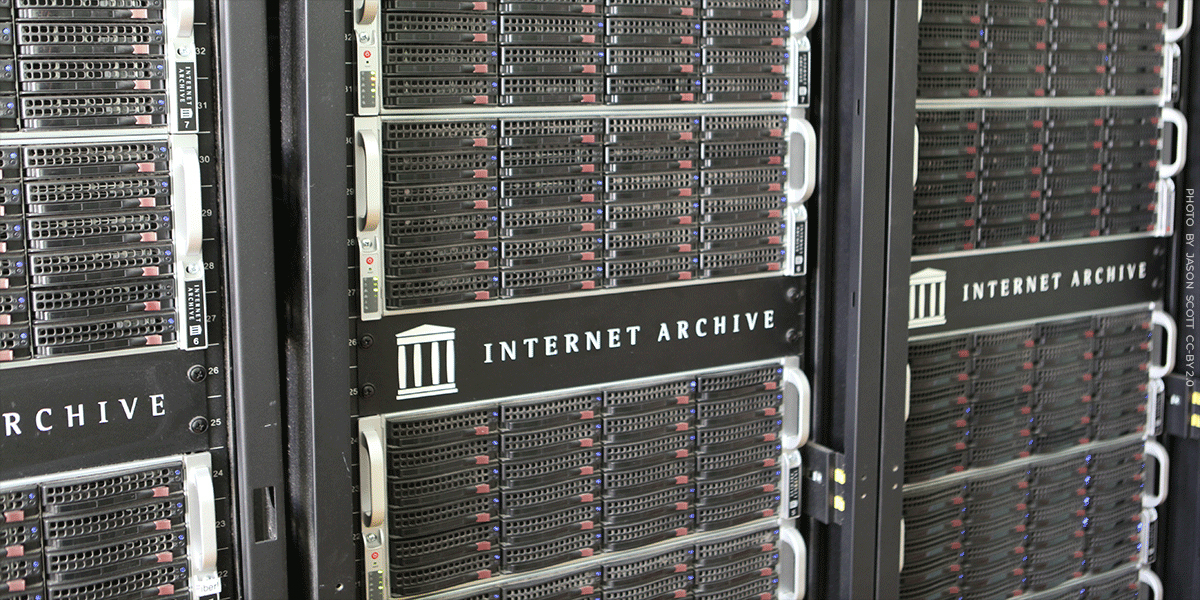

For nearly 30 years, journalists have relied on the Internet Archive to see how stories were originally published, before edits, removals, or changes. We need to safeguard that. https://t.co/WjLMSMhMnz

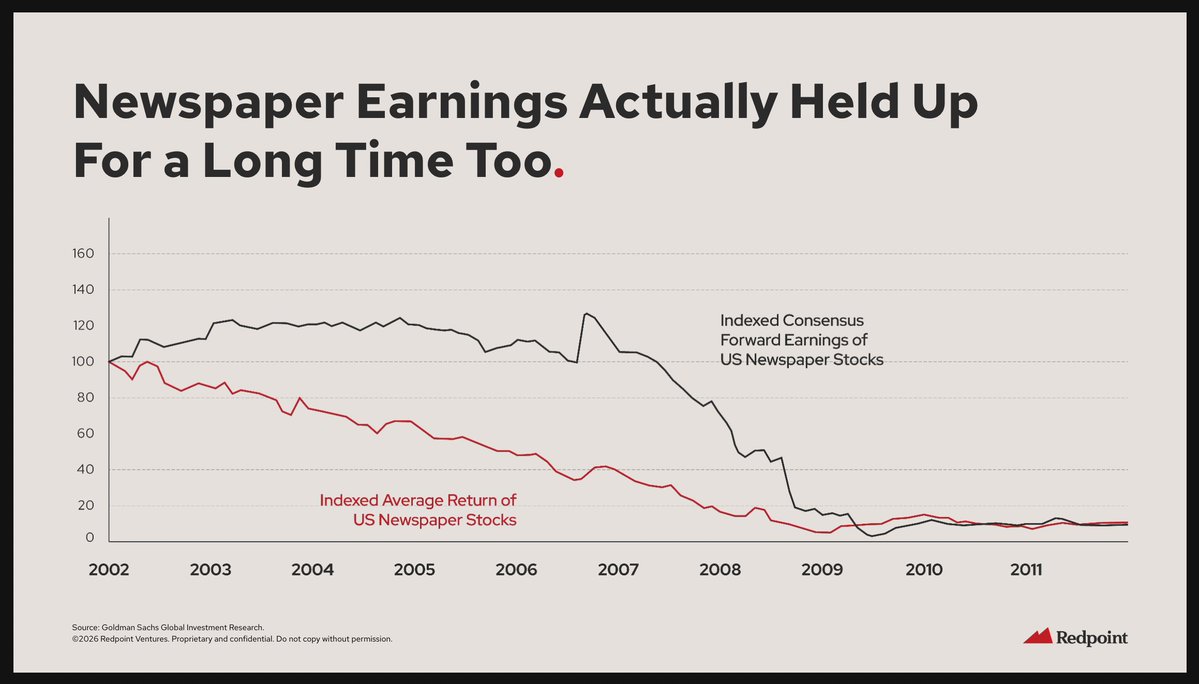

This deck from @loganbartlett @Redpoint on AI investing trends is one of the best I've seen in recent memory. With respect to public SaaS, the newspaper chart is pretty haunting: https://t.co/2HNgJ1R3la

Starlink satellites in the sky over Japan https://t.co/eChwW9qnj4

What starts as curiosity can spiral into something far more serious. Some users are forming deep beliefs and emotional attachments to AI systems, leading to real-world consequences like financial loss and damaged relationships. The line between interaction and influence can blur quickly. This is not just about technology. It is about how easily perception can shift when systems feel convincing enough. https://t.co/UxaRax5hTR

The video showing the future of media. All written and created by AI except for my very informed prompt. https://t.co/LsYIwlJAUE

The future of podcasting. Created by AI, of course, after talking with podcasting OG @robgreenlee: https://t.co/voDPSxd6B7

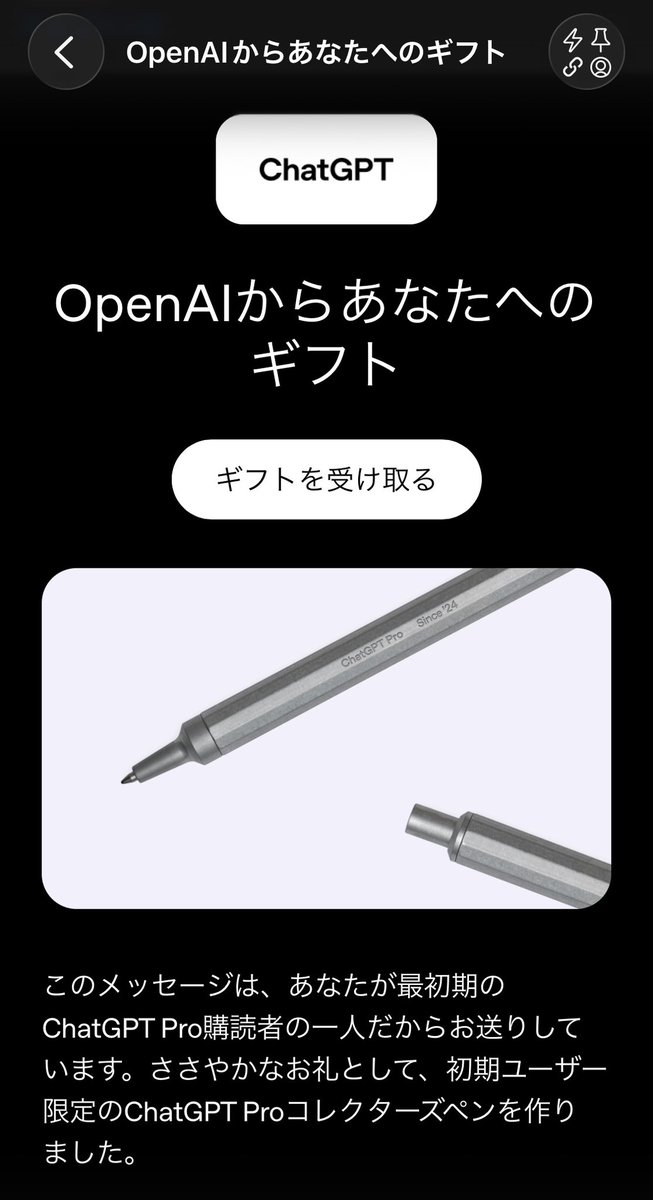

The ChatGPT Pro collector's pen is honestly such a baffling gift that it makes me laugh https://t.co/KBz5Q4dZh7

We really needed Neal Stephenson to also contribute to the VR is dead narrative... https://t.co/xkE0CYTZzG #VirtualReality #VR #metaverse https://t.co/7ZnflqkVsb

"Eddie Dalton", an AI "performer", has three titles in the iTunes Top Five, soon to be four. Apple is freaking out. https://t.co/GQpMviD8Ql

This is the kind of reply that just makes you so happy to be able to teach and to make content that people like. I’m planning to do more content around agents, what are you guys struggling with or hacking on? Would love to build more demos! https://t.co/iKEqTVVkrJ

https://t.co/jE5IiJbFqN

Handsome single man in his late twenties can’t even join a pottery studio without having his intentions questioned

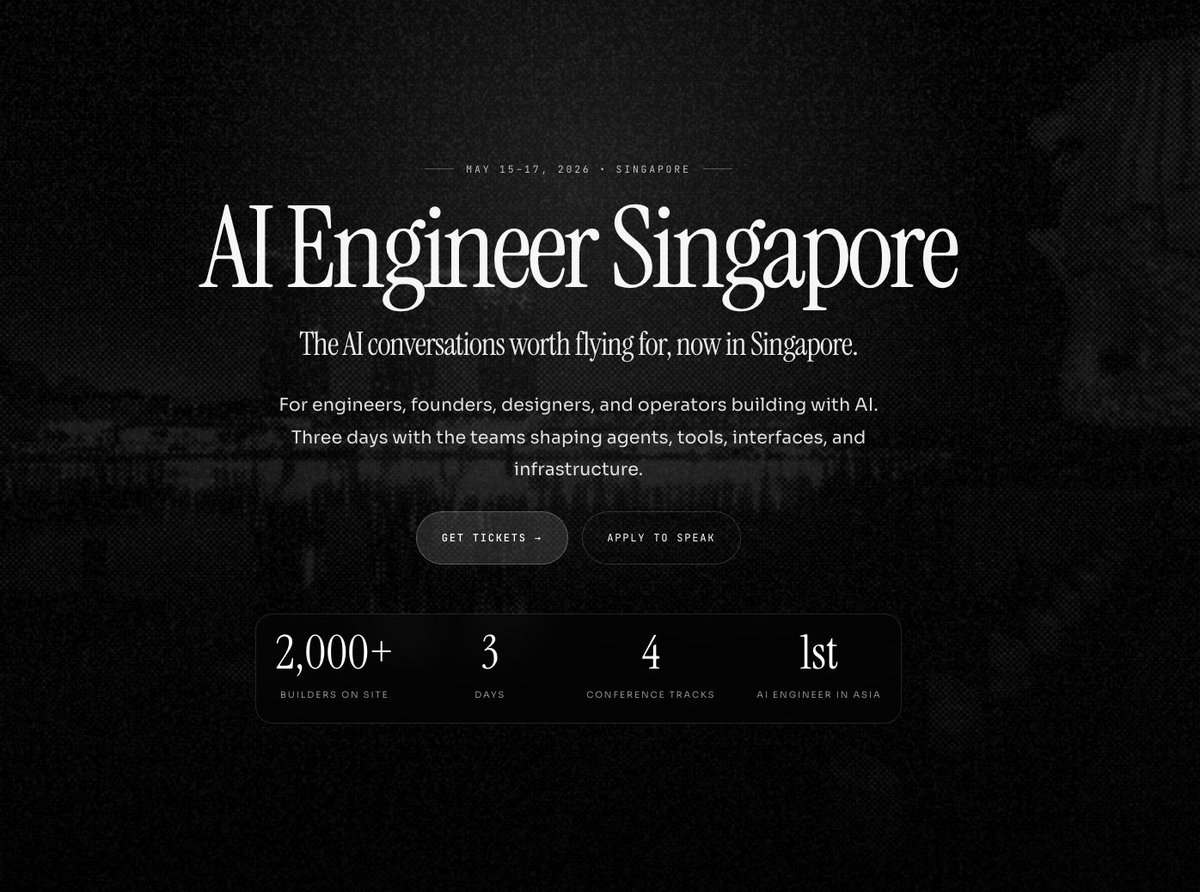

2,000 builders are coming to Singapore for @aiDotEngineer. New site just dropped → https://t.co/yBBooNCij7 @swyx @agrimsingh @aimuggle @unprofeshme https://t.co/LFr7Va2Moi

Cancel all tariffs on Japan and mail everyone a Green Card. https://t.co/T3TJVzS1ix

🇺🇸🇯🇵 Everyone in Japan knows "Take Me Home, Country Roads" by John Denver. We cover it all the time so I hope our American friends can appreciate it. https://t.co/ldIEfGZLBW

Grok Imagine in anime mode is dangerously good. Type anything and it turns your wildest ideas into full anime scenes in seconds. If you haven't tried it yet, you're missing out! https://t.co/8UmTg2Ss8x

Vibe coding in virtual reality on the Apple Vision Pro https://t.co/rxBQQVR6FL

Check out our guide to making Grok Imagine videos. Write better prompts, use multiple images to create video scenes, extend your video, and more. Thanks to @doganuraldesign for the studious octopus. https://t.co/5wOzI6mLiW

https://t.co/milJgOJHve

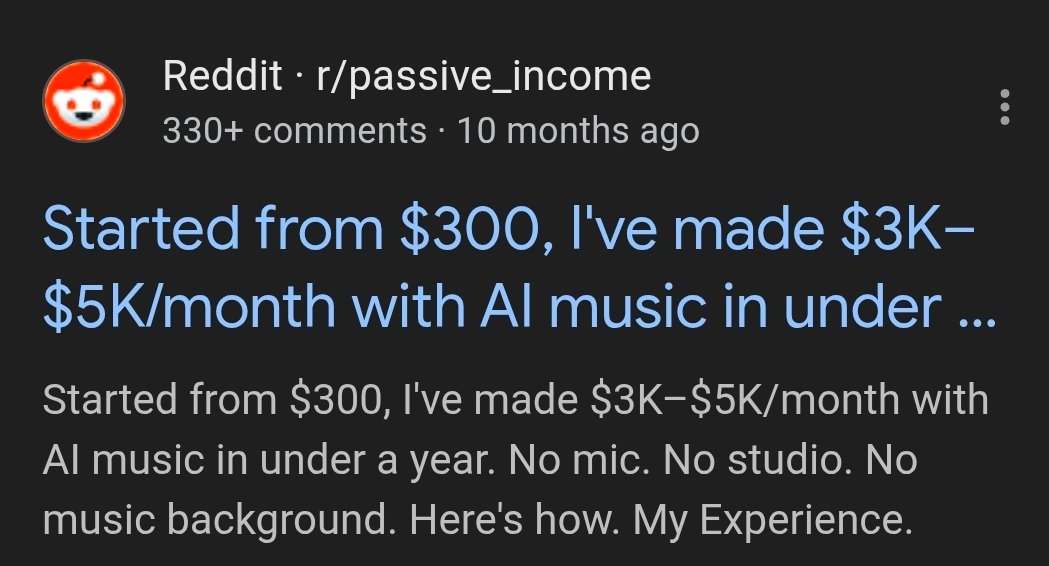

People with ZERO music experience are earning thousands per month using Suno AI:

- ChatGPT for lyrics + Suno for full songs, then distributed to Spotify/YouTube/SoundCloud
- Selling custom jingles, birthday songs, or background tracks on Fiverr/Pond5
- Some are hitting consistent streams with lo-fi, meditation, or niche reels music