Your curated collection of saved posts and media

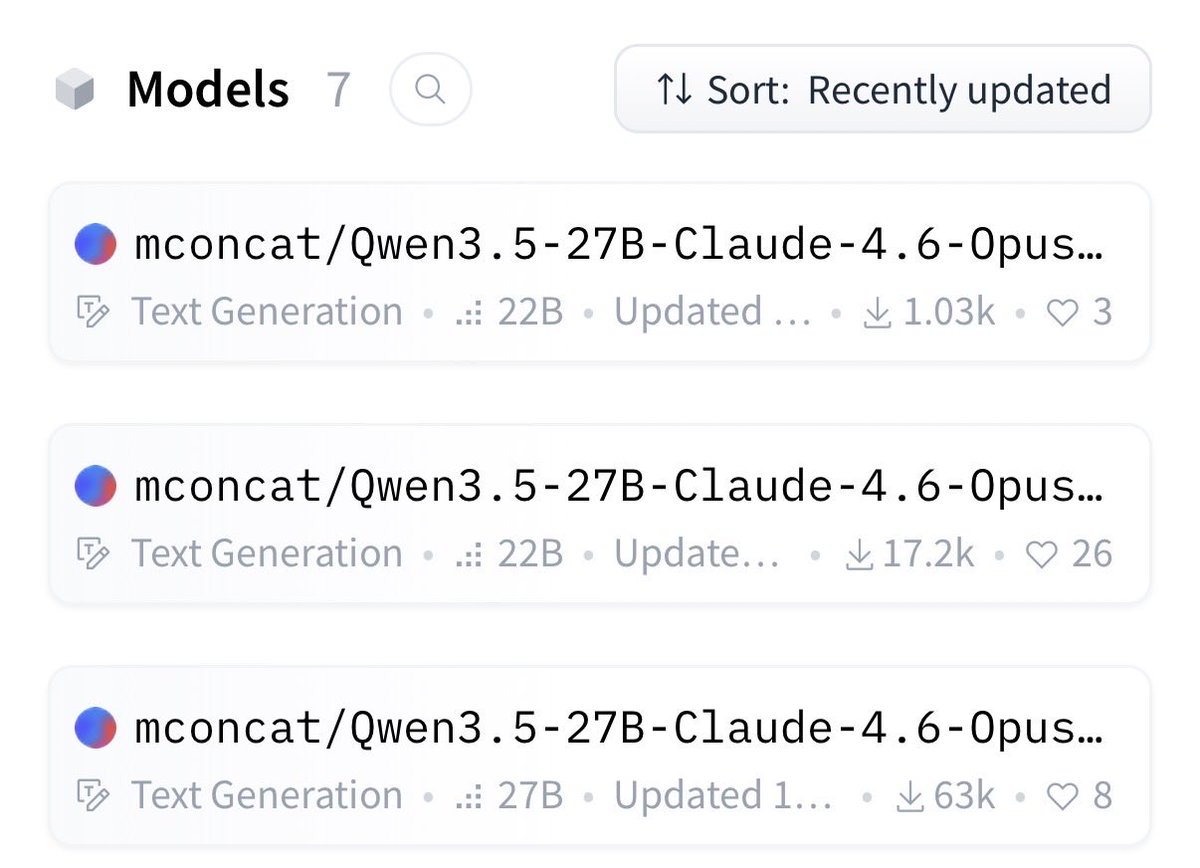

Didn’t expect these to reach that high number https://t.co/SgZ09QiW6G

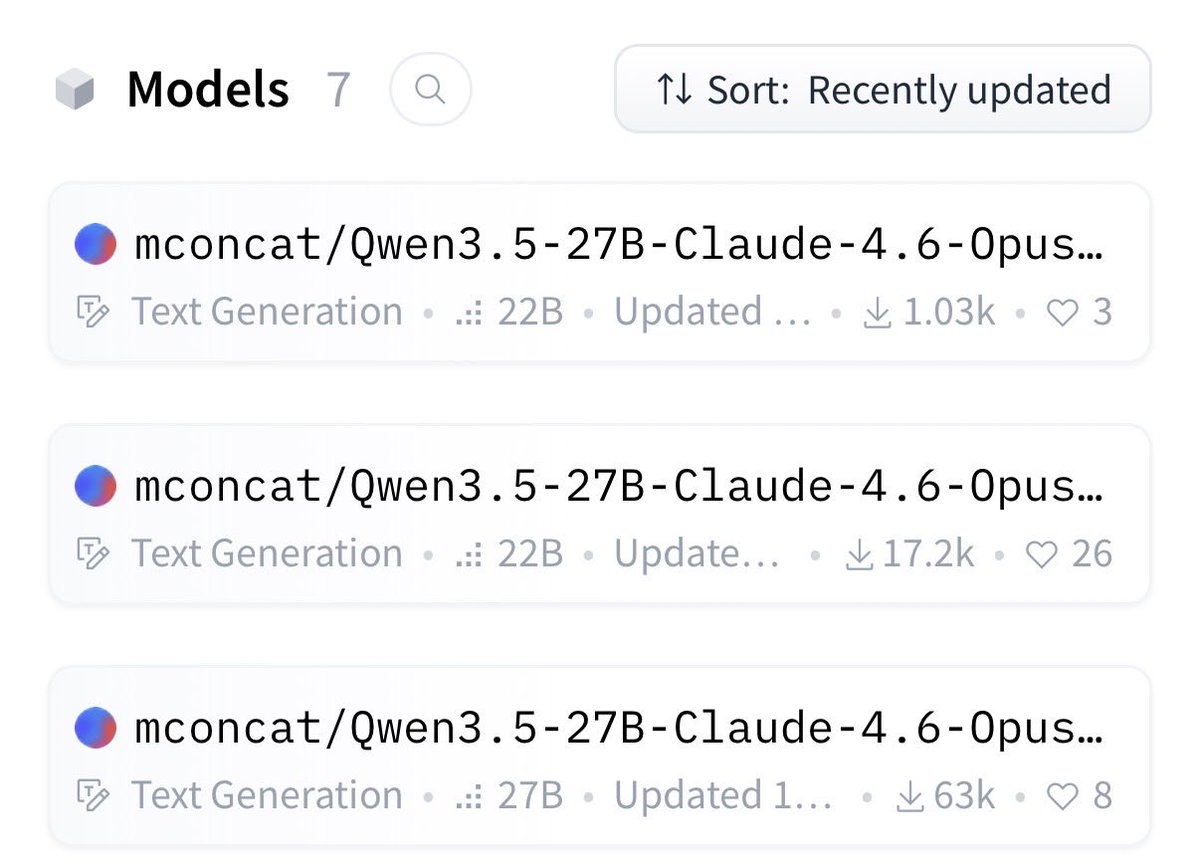

How can we autonomously improve LLM harnesses on problems humans are actively working on? Doing so requires solving a hard, long-horizon credit-assignment problem over all prior code, traces, and scores. Announcing Meta-Harness: a method for optimizing harnesses end-to-end

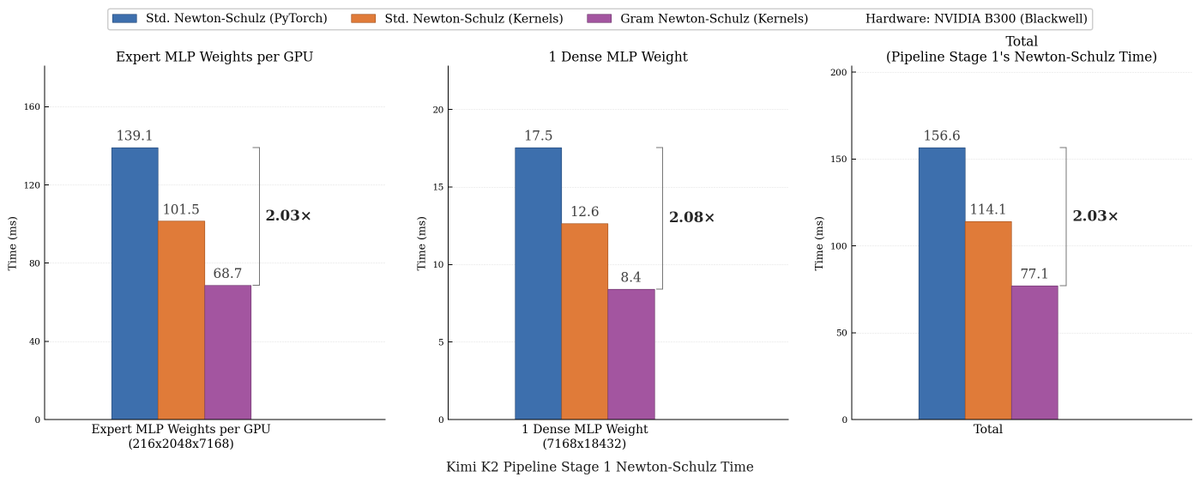

We made Muon run up to 2x faster for free! Introducing Gram Newton-Schulz: a mathematically equivalent but computationally faster Newton-Schulz algorithm for polar decomposition. Gram Newton-Schulz rewrites Newton-Schulz such that instead of iterating on the expensive rectangular X matrix, we iterate on the small, square, symmetric XX^T Gram matrix to reduce FLOPs. This allows us to make more use of fast symmetric GEMM kernels on Hopper and Blackwell, halving the FLOPs of each of those GEMMs. Gram Newton-Schulz is a drop-in replacement of Newton-Schulz for your Muon use case: we see validation perplexity preserved within 0.01, and share our (long!) journey stabilizing this algorithm and ensuring that training quality is preserved above all else. This was a super fun project with @noahamsel, @berlinchen, and @tri_dao that spanned theory, numerical analysis, and ML systems! Blog and codebase linked below 🧵
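To make the idea in the post above concrete, here is a minimal NumPy sketch of iterating on the Gram matrix instead of the rectangular matrix. It assumes the textbook cubic Newton-Schulz polynomial in float64, not the tuned coefficients, low-precision stabilization, or symmetric GEMM kernels the authors describe; the function names are hypothetical and are not taken from their codebase.

```python
import numpy as np


def newton_schulz(X, steps=10):
    """Classic cubic Newton-Schulz: every step works on the rectangular X."""
    X = X / np.linalg.norm(X)  # Frobenius scaling keeps singular values <= 1
    for _ in range(steps):
        X = 1.5 * X - 0.5 * (X @ X.T) @ X
    return X


def gram_newton_schulz(X, steps=10):
    """Same iteration, but the loop only touches small square matrices.

    With G = X X^T and P = 1.5 I - 0.5 G, one classic step is X <- P X,
    so G <- P G P. We accumulate Q = P_k ... P_0 and apply it to X once.
    """
    X = X / np.linalg.norm(X)
    m = X.shape[0]
    identity = np.eye(m)
    G = X @ X.T          # small, square, symmetric Gram matrix
    Q = identity.copy()  # running product of the per-step polynomials
    for _ in range(steps):
        P = 1.5 * identity - 0.5 * G
        Q = P @ Q
        G = P @ G @ P
    return Q @ X         # a single rectangular product at the end
```

For an m x n matrix with m much smaller than n, the classic loop does two products involving the long dimension n per step, while the Gram loop does only m x m products and touches n once at the start and once at the end. In exact arithmetic the two functions return the same matrix; this sketch does not attempt to reproduce the numerical care the post says was needed in practice.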

We cooked up Gram Newton-Schulz: a drop-in replacement of Muon’s Newton-Schulz that is up to 2x faster. Building this requires synthesizing ideas from linear algebra, numerical analysis, and kernel design. This makes for a great story and an even better optimizer! Amazing collaborators: @jcz42, @noahamsel, @tri_dao Blog: https://t.co/EyysR6p4Bs Code: https://t.co/5DjwIg7SAc

New blog post: how I used Codex to contribute VidEoMT, a SOTA model for video segmentation, to the Transformers library In December 2025, a shift occurred, and coding agents suddenly succeeded at a task they previously failed at: porting entire models. I list some of my best practices for using coding agents. Link: https://t.co/FFIqDAEdAZ

I'm thrilled to announce @SycamoreLabs, the trusted agent OS for the enterprise. We raised a $65M seed led by @coatuemgmt and @lightspeedvp, with @AbstractVC, @DellTechCapital, @8vc, @Fellows_Fund, @e14fund, and an exceptional group of angel investors. https://t.co/Tdm5gSgA38

Introducing Helena: the world's first autonomous AI marketer. Businesses spend 4,000 hours on marketing…before their first $1M in revenue. We built Helena to solve this. Helena can:
➤ Track competitor ads & create TikTok slideshows, UGC, static ads - all while you sleep
➤ Analyze performance across GA4, Search Console, paid/organic social for daily insights
➤ Research trends to draft GEO optimized blogs directly on WordPress, Framer, Webflow
...and more
Helena has her own memory, scheduled tasks, 100+ custom marketing tools and native integrations. No dev. No CLI. No n8n. No API keys needed. Helena doesn't replace CMOs, and every marketer who's demoed it has asked us for early access. Want to hire her? Check the next thread ⬇️

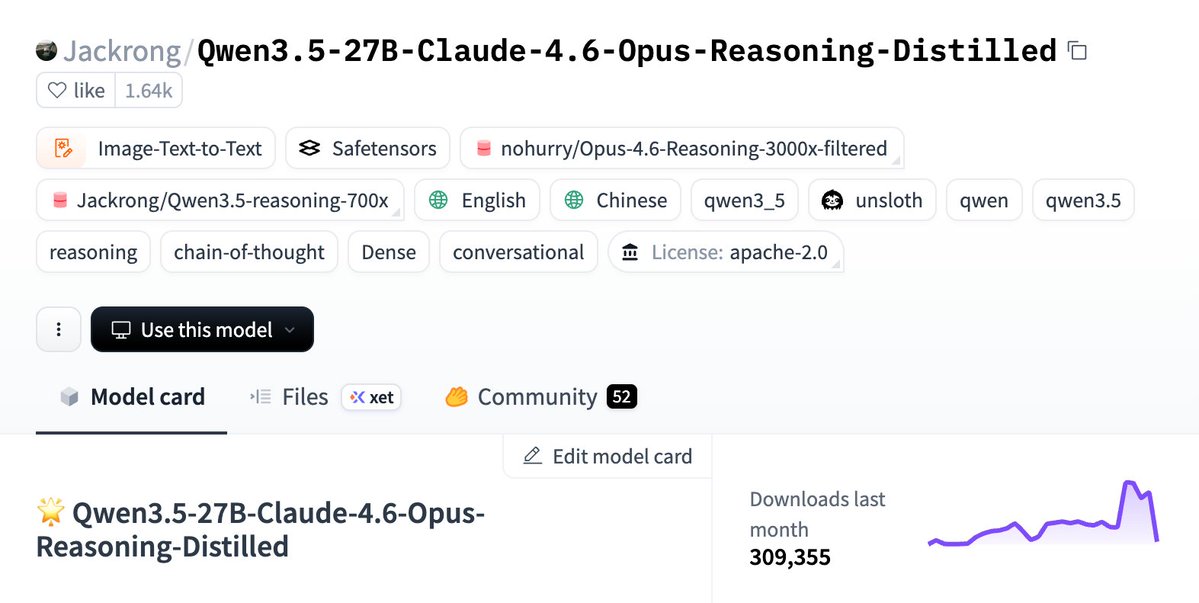

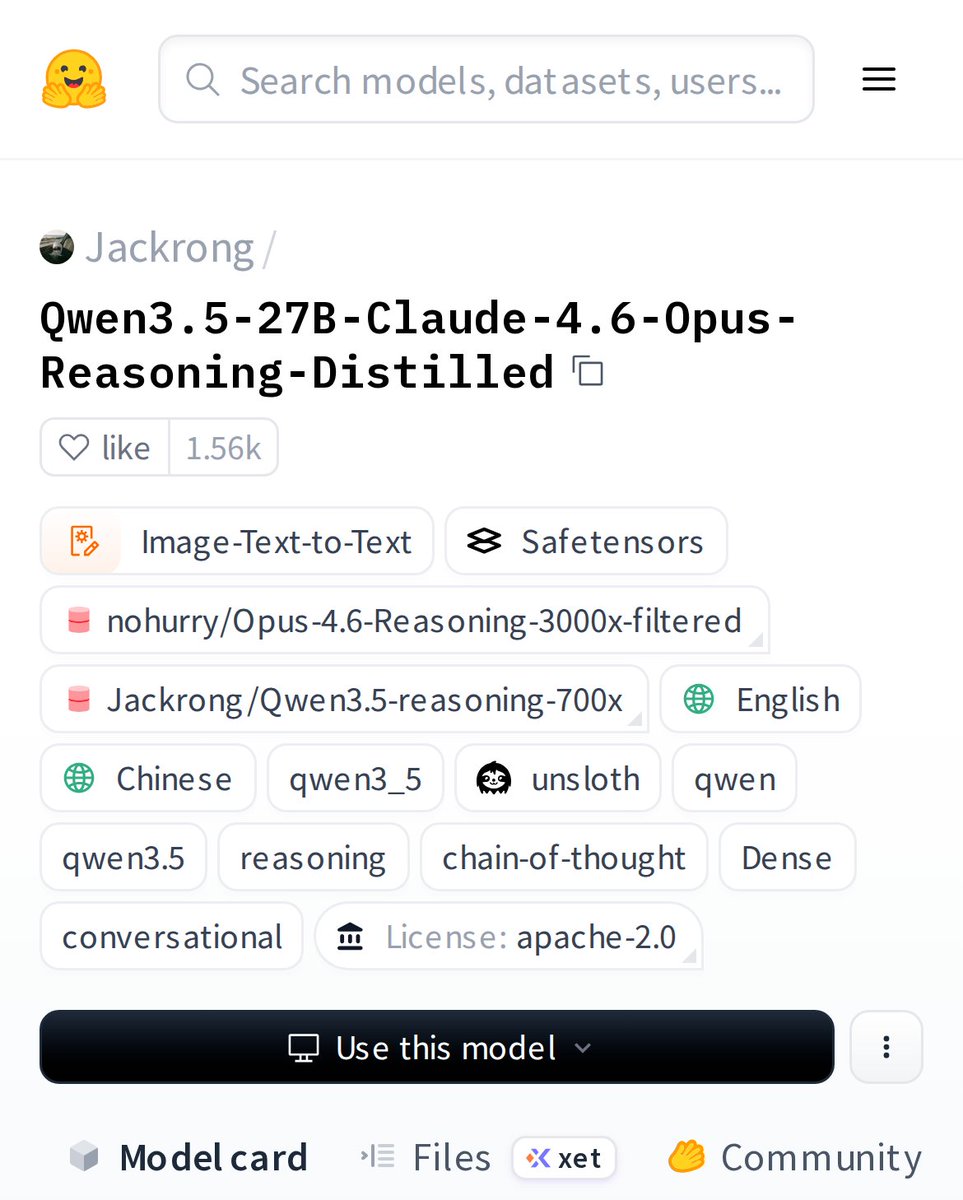

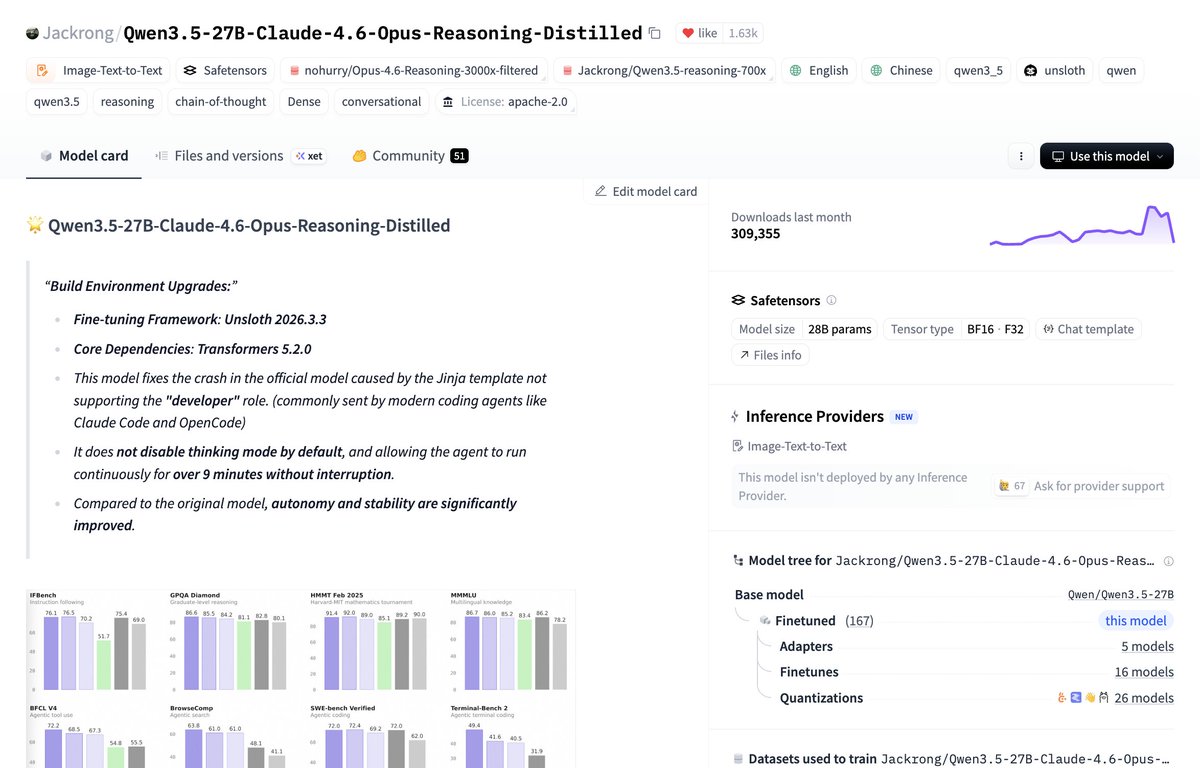

this model is an agentic treasure. it has been #1 trending for 3 weeks on @huggingface as mentioned by @danielhanchen. it's Qwen 3.5 27B fine-tuned on Opus 4.6 distilled data and beats Sonnet 4.5 on SWE-bench verified and more. "Runs locally on 16GB in 4-bit or 32GB in 8-bit." https://t.co/3tM8vk1FGZ

If you liked OpenClaw but weren't a fan of the security risks, try it out: https://t.co/qw8Pi4Whcg

OpenClaw doesn't belong in production. We built PokeeClaw — enterprise-secure AI agents, zero setup, 1,000+ app integrations. Try now: https://t.co/uX6VsZ6p0c First 500 to follow @Pokee_AI, comment “PokeeClaw”, like & repost get 1 month free.

America Isn’t Ready for What #AI Will Do to Jobs #RiseoftheRobots https://t.co/q6ldsXpDSz

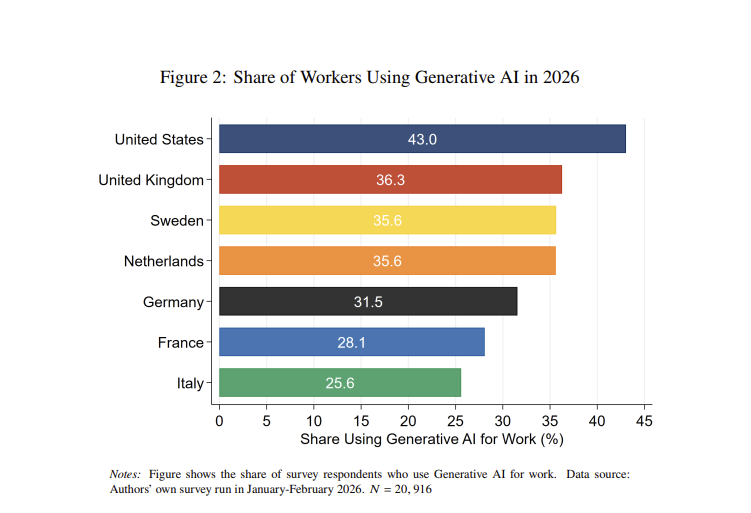

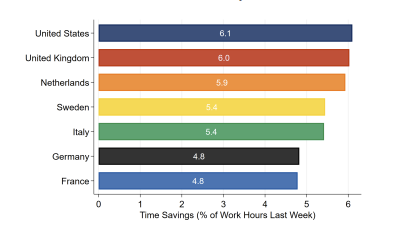

The average American worker using AI reports time savings of 6%, or 2.5 hours in a work week. Those are similar to the UK & Netherlands, and slightly more than other EU countries. There are some early, non-causal signs that this is translating into real gains in productivity growth https://t.co/XOspk4fp0Z

Because US workers use AI more, and gain more from it, the US currently is benefitting most from AI adoption: https://t.co/Fd66KQU9ID

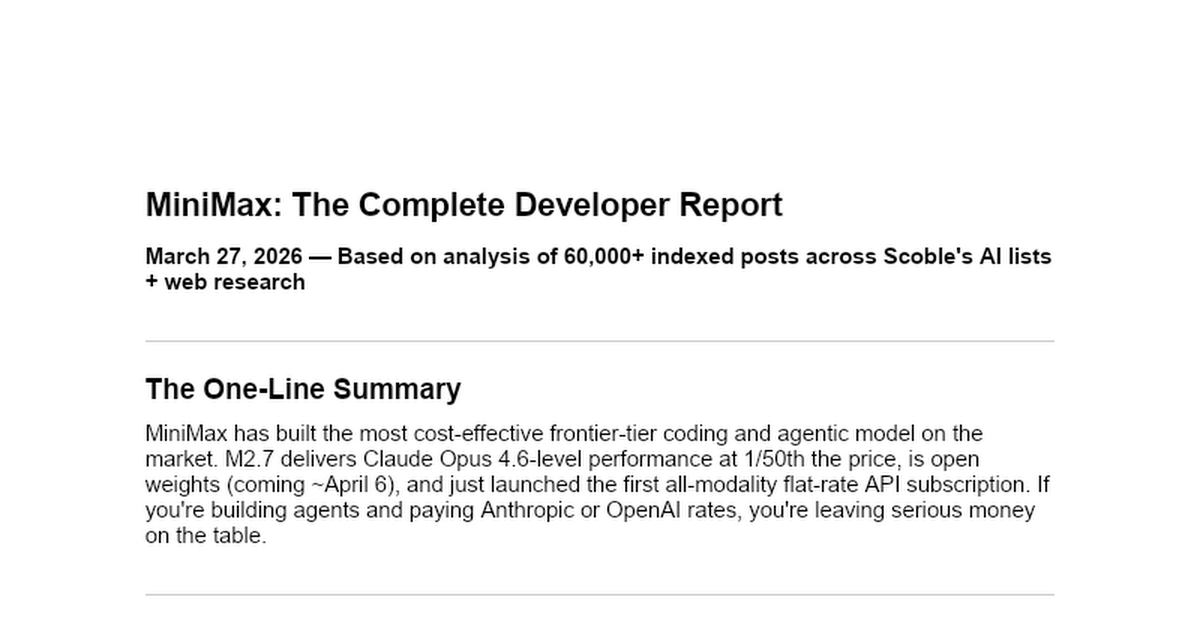

Exclusive: MiniMax AI developer report. TL;DR: It provides more performance for a much lower price.
I now do custom reports for companies with the analysis engine that @blevlabs and I built over the past couple of months. You've seen my reports on OpenClaw and other technologies. Here I did one for MiniMax, comparing it to other models: https://t.co/WhnARBnU8c
This report was written by using the X API to grab tens of thousands of posts from the AI community (I have the most complete lists of such anywhere: https://t.co/9eRY65x3IQ and Levangie Labs' cognitive architecture does the best job of putting it all together in a report that I've found).
Key things from the report:
++"MiniMax has built the most cost-effective frontier-tier coding and agentic model on the market."
++"Architecture: MoE (Mixture of Experts) — 230B total parameters, 10B active. This is the key insight: you get frontier-tier reasoning with the inference cost of a 10B model."
++"What the community said: "Basically Claude Opus performance but 95% cheaper." "80.2% SWE-Bench. 76.8% on agentic tool-calling. Genuinely underrated." One developer burned through 922 million tokens in 3 days on the coding plan across 50+ parallel sessions."
++"The consensus: MiniMax M2.7 matches or exceeds Opus on coding and agentic tasks at 1/50th the price."
++"Bottom Line for Developers: If you're building coding agents, agentic workflows, or any system that makes a lot of API calls, MiniMax is the most important model to know right now. The price-to-performance ratio is genuinely unprecedented at the frontier tier."
The complete report comparing it to the other models: https://t.co/WhnARBnU8c
Are you using MiniMax? Why not? The AI community here on X is.
MiniMax Agent → https://t.co/NWX9GThijF
API → https://t.co/lPc0F11xOU
Token Plan → https://t.co/EDr6dR38w1

when people ask about custom tools vs. letting users bring MCPs, the answer is always "both". Custom tools take work and taste, MCPs give flexibility but will always lead to lower quality results
1) for high-volume tools (e.g. Read/Write/Edit in a coding agent) build these as first-class tools
2) for long tail stuff like 'fetch data from random saas', let users bring MCPs
3) LOOK AT YOUR F****** DATA (thanks @HamelHusain )
4) The most popular MCPs, turn these into first-class tools in your system
5) repeat until AGI
another dope episode with @vaibcode
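One way to picture the loop described in the post above is a small router that prefers first-class tools, falls back to user-supplied ones, and counts fallback usage so the popular ones can be promoted. This is a hypothetical sketch: `ToolRouter` and its methods are invented for illustration, and the "MCP" tools are plain Python callables standing in for real MCP servers, not any actual MCP client API.

```python
from collections import Counter
from typing import Callable, Dict, List


class ToolRouter:
    """Hypothetical router: first-class tools win, user tools are the fallback."""

    def __init__(self) -> None:
        self.first_class: Dict[str, Callable[..., str]] = {}
        self.user_mcp: Dict[str, Callable[..., str]] = {}
        self.mcp_calls: Counter = Counter()  # usage data worth looking at

    def register_first_class(self, name: str, fn: Callable[..., str]) -> None:
        self.first_class[name] = fn

    def register_mcp(self, name: str, fn: Callable[..., str]) -> None:
        self.user_mcp[name] = fn

    def call(self, name: str, *args, **kwargs) -> str:
        # High-volume tools are built in and take priority.
        if name in self.first_class:
            return self.first_class[name](*args, **kwargs)
        # Long-tail tools come from the user; record how often they are used.
        if name in self.user_mcp:
            self.mcp_calls[name] += 1
            return self.user_mcp[name](*args, **kwargs)
        raise KeyError(f"unknown tool: {name}")

    def promotion_candidates(self, min_calls: int = 3) -> List[str]:
        """User tools called often enough to consider rebuilding as first-class."""
        return [n for n, c in self.mcp_calls.most_common() if c >= min_calls]
```

The call counter is the "look at your data" step: whatever shows up in `promotion_candidates` is a candidate for rebuilding as a first-class tool on the next pass.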

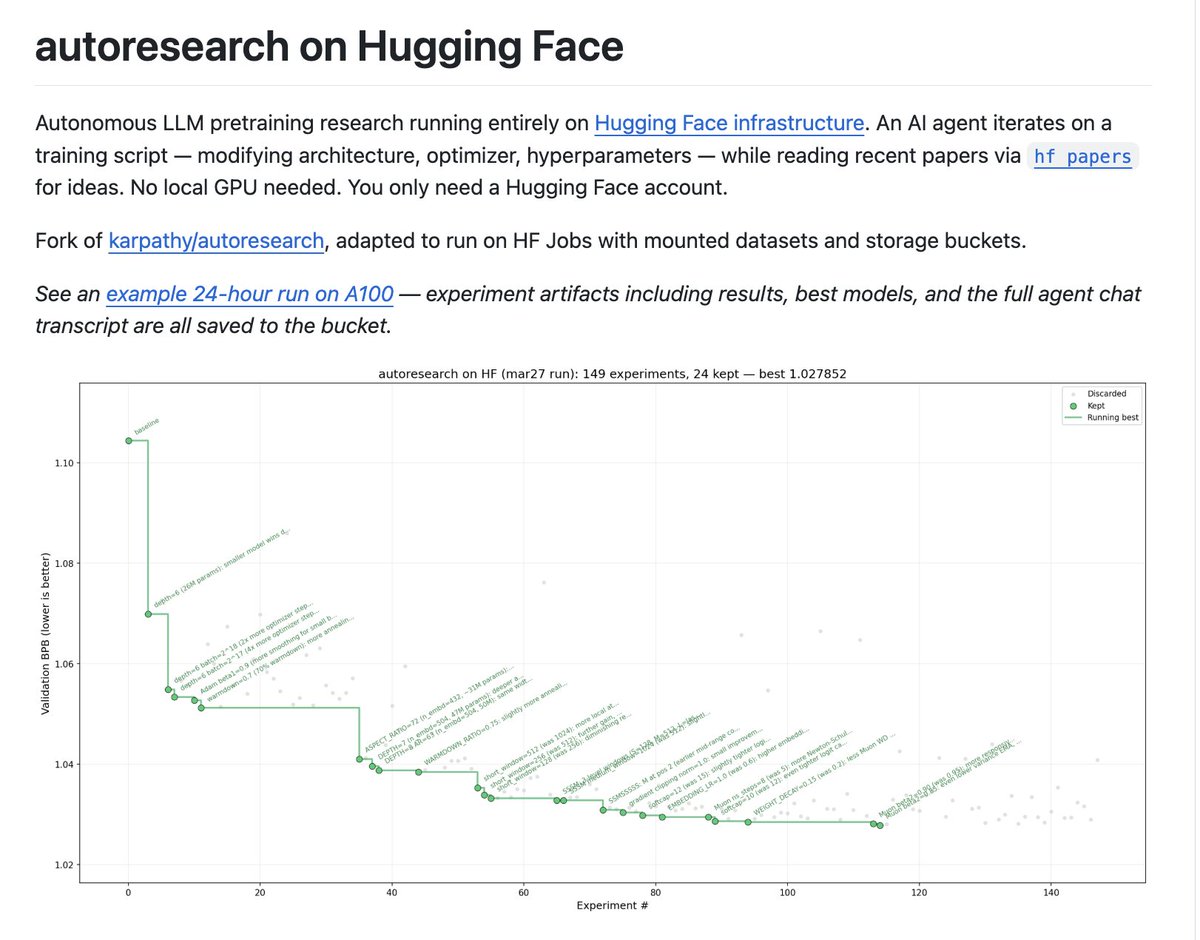

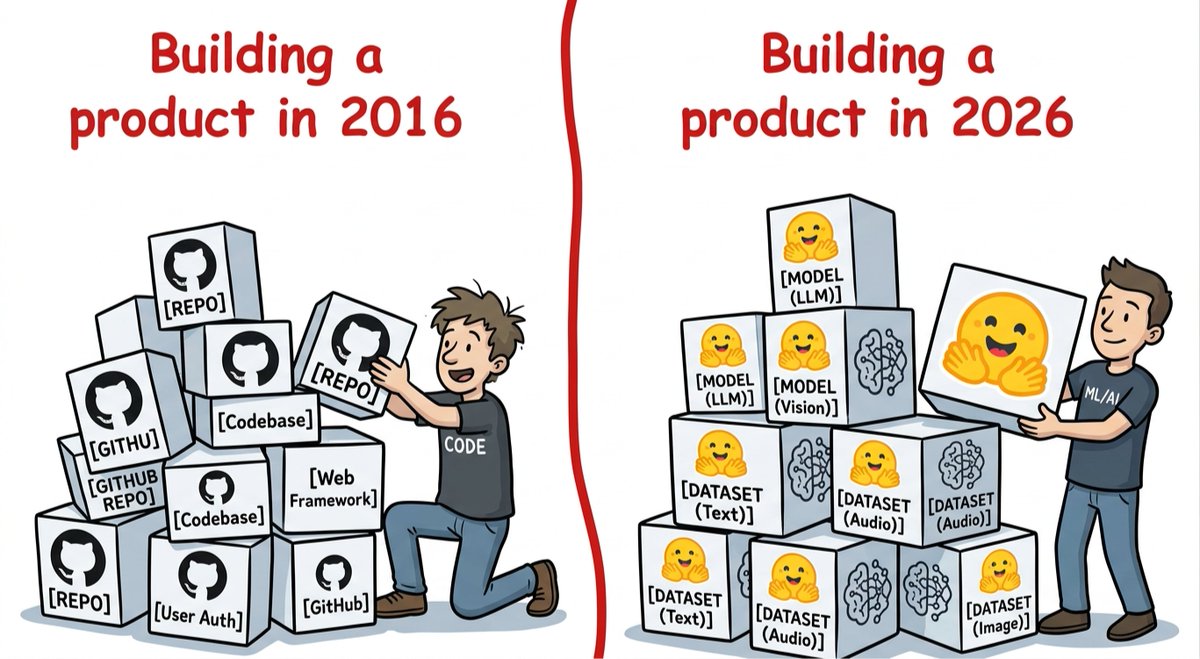

In a world where everyone can build websites, apps and features easily (thank you Cursor, Lovable, Claude and the likes), it will take more for you and your company to differentiate themselves (which is in my opinion the basis for success). That's why we're seeing more and more people and companies starting to train, optimize and run their own models (rather than outsource this to third parties). This is the future we want to enable with Hugging Face: empower millions of people to build AI themselves, not just be API users. Cool new project in this vein from @mishig25: auto-research built on top of @huggingface so that your agents find and push their intermediary checkpoints, datasets, learn from papers and collaborate on the hub: https://t.co/YWCzp5ZIfC Let's make all AI builders rather than AI users!

This is god tier and fits on 24GB + It crushes everything up to 6x its size and ties with Gemini Deep Think and DeepSeek on Math https://t.co/0Wwad8HZ4N

the shift is real: in 2026 invest in AI builders 🤗 https://t.co/7TaRcyNdpa

Meet Qwen3.5-27B-Claude-4.6-Opus-Reasoning-Distilled: a powerhouse reasoning model that understands both text AND images. It's like giving AI a pair of eyes and a brilliant mind. The community is buzzing because this model actually shows its work, not just final answers. https://t.co/N2j07OBNBA

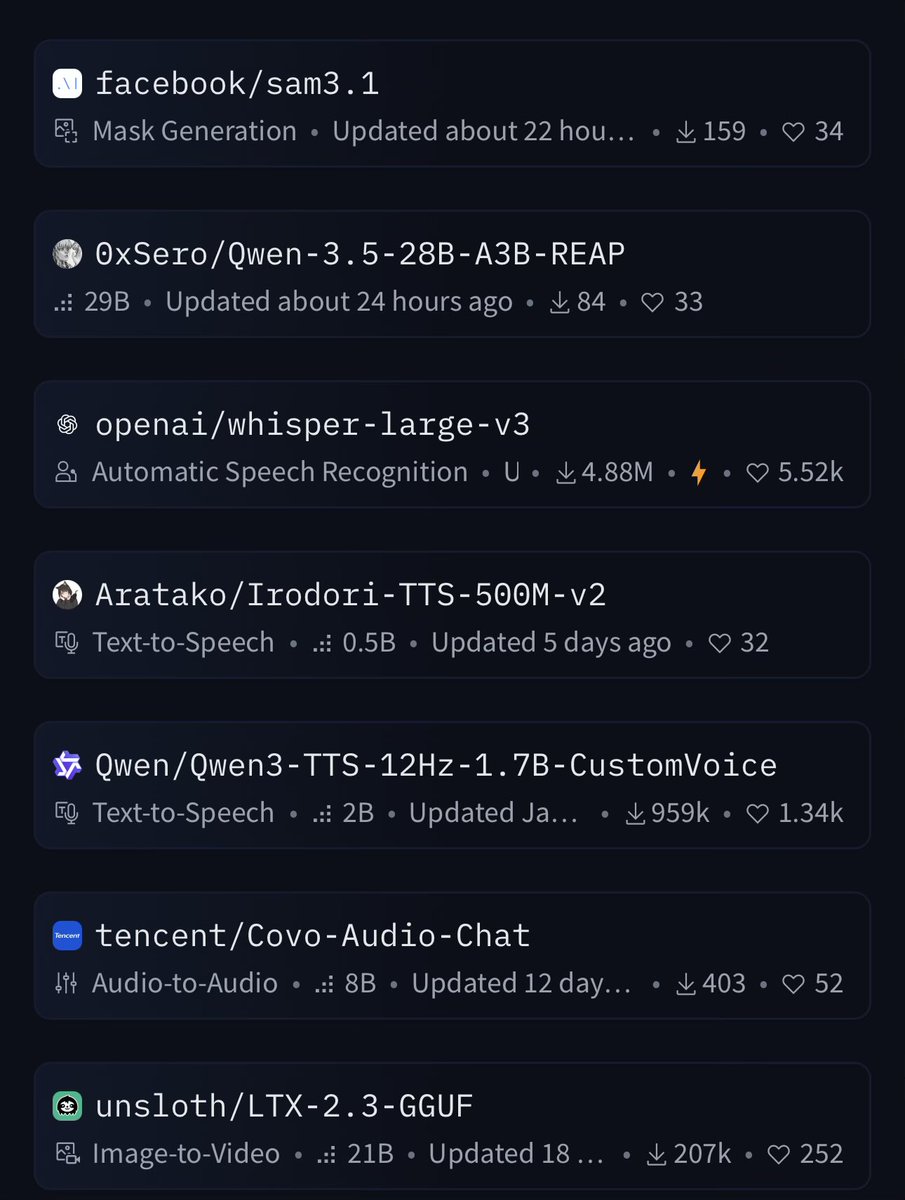

Page #3 of Huggingface https://t.co/fdSiYKHh7a

💯 https://t.co/vq7hFx5NKA

Our OSS engineer @itsclelia recently built 𝗹𝗶𝘁𝗲𝘀𝗲𝗮𝗿𝗰𝗵, a fully local document ingestion and retrieval CLI/TUI application powered by LiteParse ⚡ litesearch demonstrates how developers can assemble a high-performance, local-first retrieval pipeline using open tools from across the ecosystem:
• Parsing: LiteParse, the fast and accurate document parser we recently open sourced
• Chunking: @ChonkieAI
• Embeddings: A local @nomic_ai model via @huggingface transformers.js
• Vector storage: A local @qdrant_engine edge shard (custom-built in Rust and compiled as a native add-on)
• Retrieval: Query stored files with optional path-based filtering and configurable relevance thresholds
• Runtime: @bunjavascript for speed and versatility
💻 Check out the repository and try it yourself: https://t.co/N0TyLbwvpm
📚 LiteParse docs: https://t.co/4C5ky7iIOa
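The retrieval step in the post above (path-based filtering plus a relevance threshold) can be sketched in a few lines. This is a toy illustration in plain Python over hand-written vectors, not litesearch's code: the real project runs on Bun with Qdrant and transformers.js, and the names `Chunk`, `cosine`, and `search` are hypothetical.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Chunk:
    path: str            # source file the chunk came from
    text: str
    vector: List[float]  # embedding; a toy 2-D vector in this sketch


def cosine(a: List[float], b: List[float]) -> float:
    """Cosine similarity, returning 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(
    chunks: List[Chunk],
    query_vec: List[float],
    path_prefix: Optional[str] = None,
    min_score: float = 0.0,
    top_k: int = 5,
) -> List[Tuple[float, Chunk]]:
    """Return up to top_k (score, chunk) pairs, best first."""
    hits = []
    for chunk in chunks:
        # Optional path filter: skip chunks outside the requested prefix.
        if path_prefix is not None and not chunk.path.startswith(path_prefix):
            continue
        score = cosine(query_vec, chunk.vector)
        # Relevance threshold: drop weak matches.
        if score >= min_score:
            hits.append((score, chunk))
    hits.sort(key=lambda hit: hit[0], reverse=True)
    return hits[:top_k]
```

A real vector store would use an index rather than a linear scan, but the filter-then-threshold-then-rank shape is the same.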

Out of Sight but Not Out of Mind: Hybrid Memory for Dynamic Video World Models paper: https://t.co/9bnjLgKDJF https://t.co/51ZKuWOmdd

PackForcing: Short Video Training Suffices for Long Video Sampling and Long Context Inference paper: https://t.co/zfNNV2BMuC https://t.co/AvHqhAsqwo

This model has been #1 trending for 3 weeks now. It's Qwen3.5-27B fine-tuned on distilled data from Claude-4.6-Opus (reasoning). Trained via Unsloth. Runs locally on 16GB in 4-bit or 32GB in 8-bit. Model: https://t.co/6KgPDHCJZ3
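The memory figures in the post above line up with a back-of-the-envelope estimate of weight storage alone. The sketch below assumes exactly 27 billion parameters and counts only the weights; it ignores KV cache, activations, and any quantization metadata, which is presumably why the quoted hardware sizes leave some headroom. The function name is hypothetical.

```python
def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return n_params * bits_per_weight / 8 / 1e9


# Assumed parameter count: 27e9, read off the "27B" in the model name.
four_bit = weight_memory_gb(27e9, 4)   # about 13.5 GB, under the quoted 16GB
eight_bit = weight_memory_gb(27e9, 8)  # about 27 GB, under the quoted 32GB
print(f"4-bit: {four_bit:.1f} GB, 8-bit: {eight_bit:.1f} GB")
```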

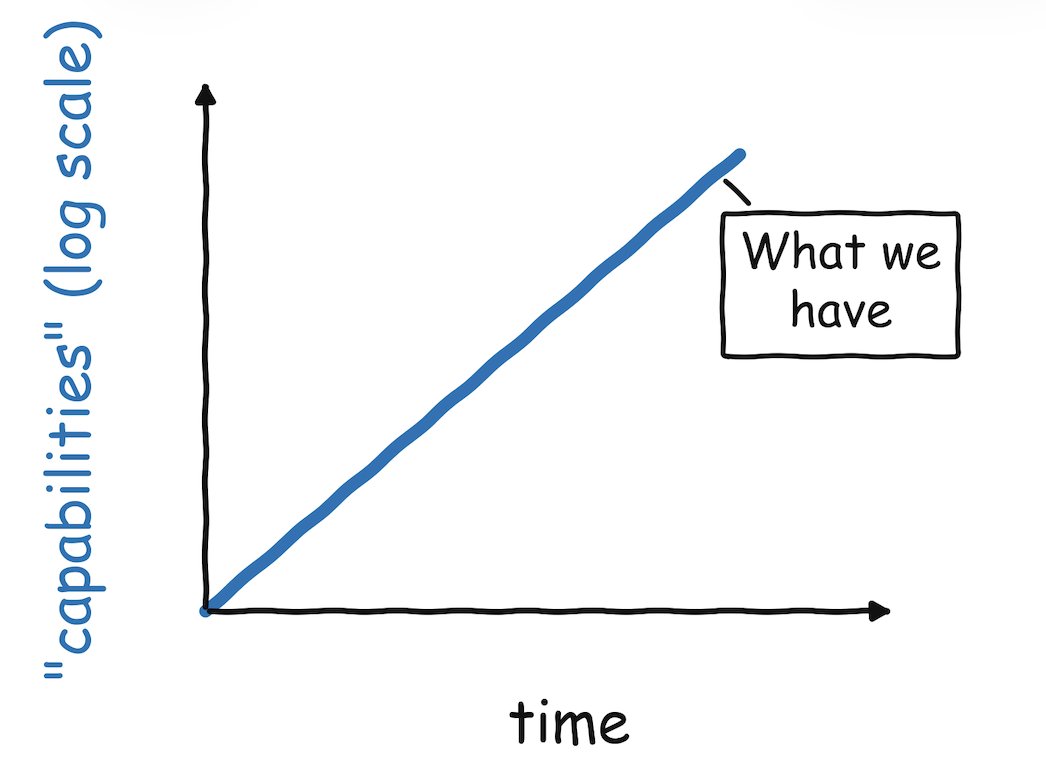

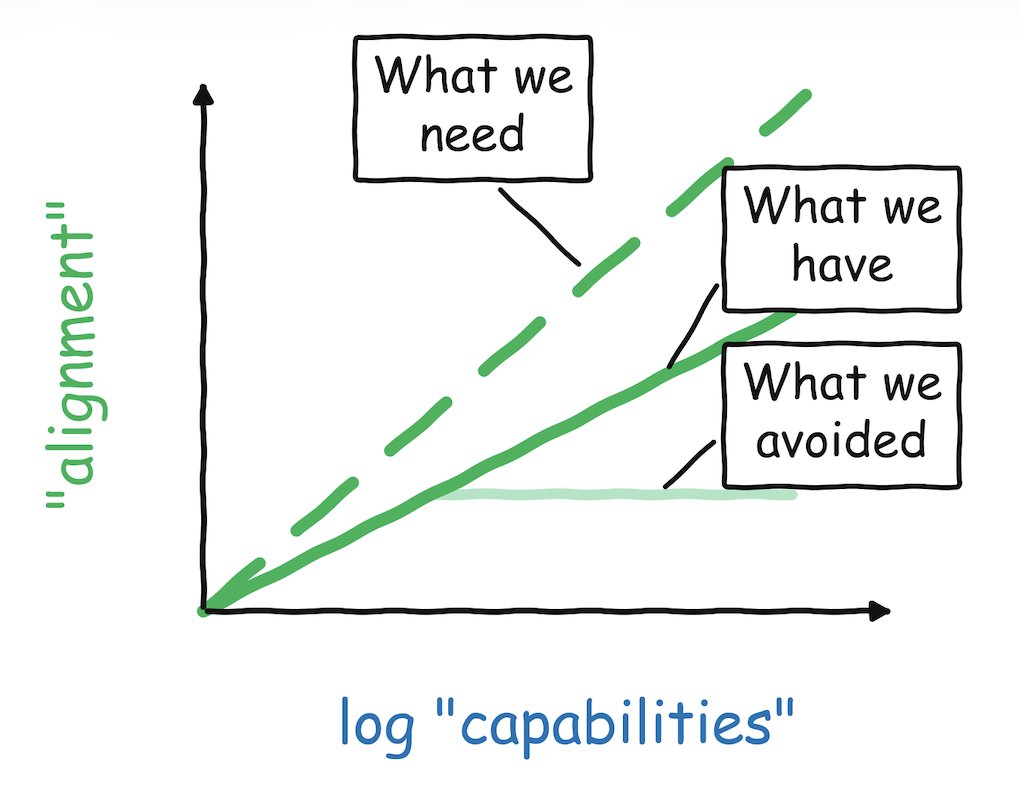

New blog post: the state of AI safety in four fake graphs. https://t.co/Qv1BlwyfY5

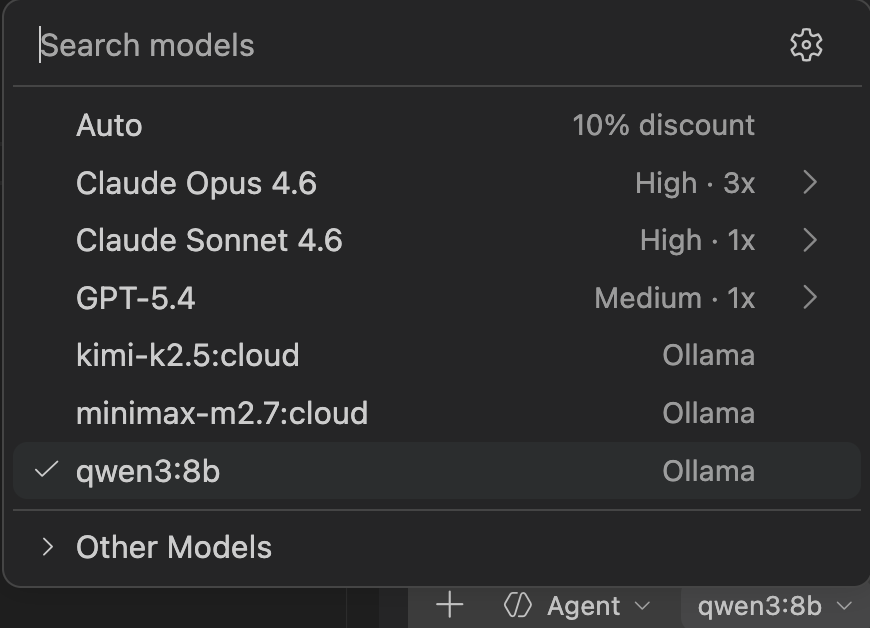

Visual Studio Code now integrates with Ollama via GitHub Copilot. If you have Ollama installed, any local or cloud model from Ollama can be selected for use within Visual Studio Code. https://t.co/11BqI12oSV

Introducing 🤗 Transformers.js v4: state-of-the-art machine learning for the web!
🚀 New WebGPU backend (browser, Node.js, Bun, Deno)
⚡️ Huge performance improvements
🤯 Support for over 200 architectures
🛠️ Complete codebase refactor
Learn more about our biggest release yet! 👇

Falcon 9 launches the 16th Transporter rideshare mission and delivers 119 payloads to orbit https://t.co/h0e6dvxy8X