Your curated collection of saved posts and media

Bluesky just launched AI that lets users program their own feed. Not an algorithm that decides what you see. A tool that lets YOU decide what you see. One platform says, "trust our algorithm." The other says, "build your own." This is the split happening across all of AI right now. Who controls the model? Who owns the data? Who decides what it does? The companies betting on user control are winning. @bluesky . @AnthropicAI . And that's the bet we're making at @Uare_ai. AI that works for you. Not the other way around.

130+ skills are now built into your Superagent. Some are ready to use, and some can be created based on what you need. Add a skill once, and your Superagent can use it as part of your workflows. Stack skills, connect tools, and build flows that run end-to-end. https://t.co/B6ntwyRkWm

This seems useful https://t.co/rjrX4jct8U

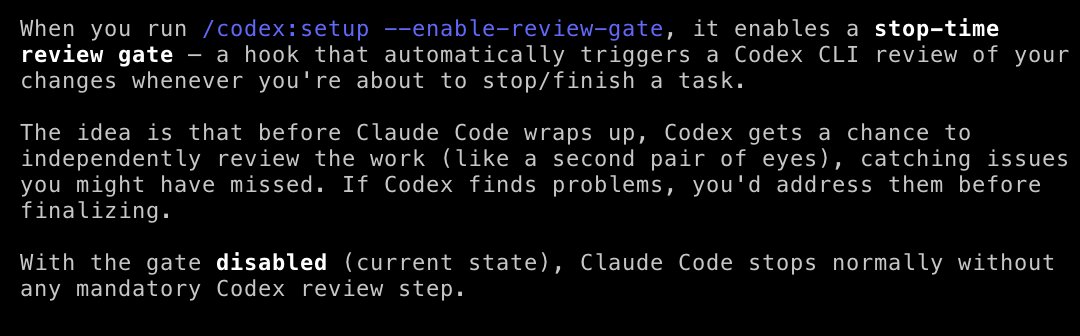

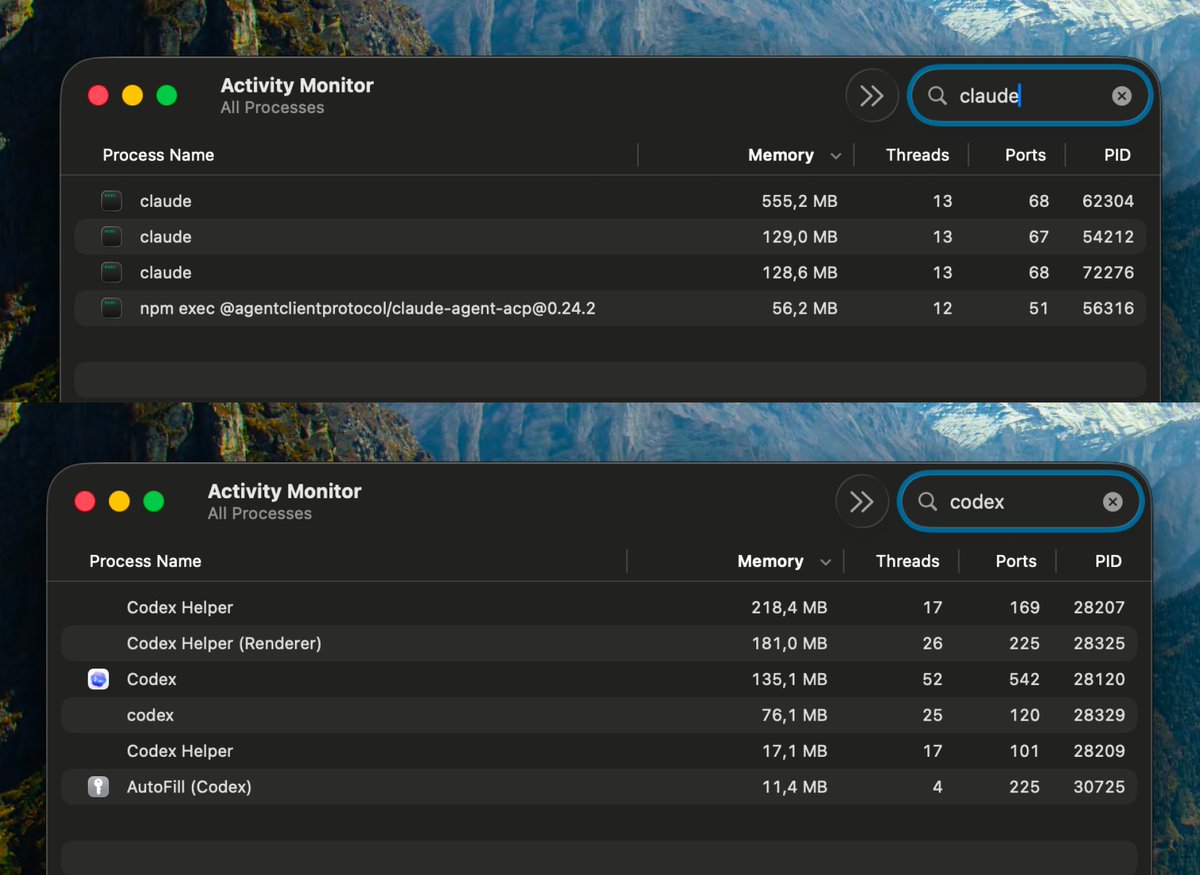

I built a new plugin! You can now trigger Codex from Claude Code! Use the Codex plugin for Claude Code to delegate tasks to Codex or have Codex review your changes using your ChatGPT subscription. Start by installing the plugin: https://t.co/u6gBpArwBc https://t.co/HyEdMPWees

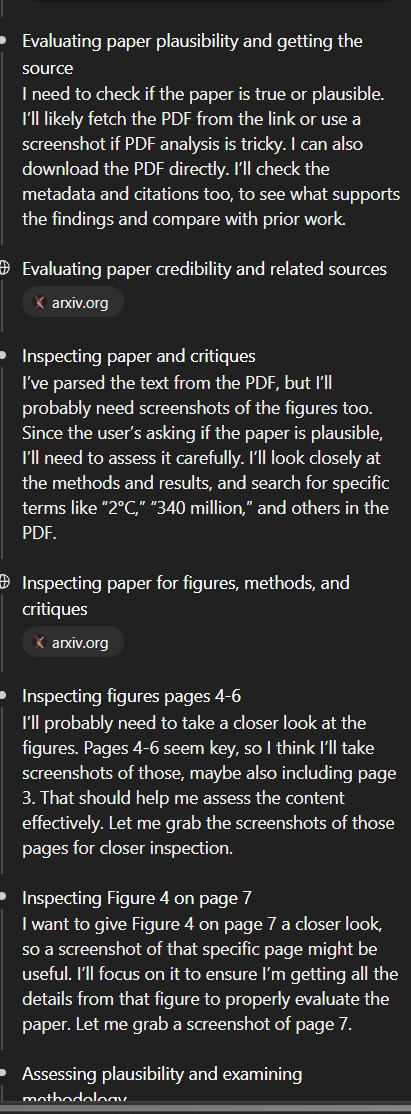

One of the things that is useful about the ChatGPT GPT-5.4 Pro (and also Thinking) harness is that it is quite good at understanding how to read scientific papers, not just relying on text, but also figuring out which figures are key and inspecting those visually. https://t.co/3jHFNWoieP

Exa is launching a Singapore office focused on web-scale infrastructure! The Google/Bing engineers who built the first generation of web infra from scratch haven't done it for decades. We're doing it again for the next generation of search. This will need to be at bigger scale -- exabytes of data, higher standard for quality, built from scratch for AI -- and it will be a global effort.

Security doesn't need to be intimidating. In just 5 minutes (or the time it takes to make your ☕️), you'll know the basics of securing your projects and keeping them safe with GitHub Advanced Security. The new episode of GitHub for Beginners is up. https://t.co/5HQxEGVejI

X after pushing Japanese posts https://t.co/ZOtdJz7jCQ

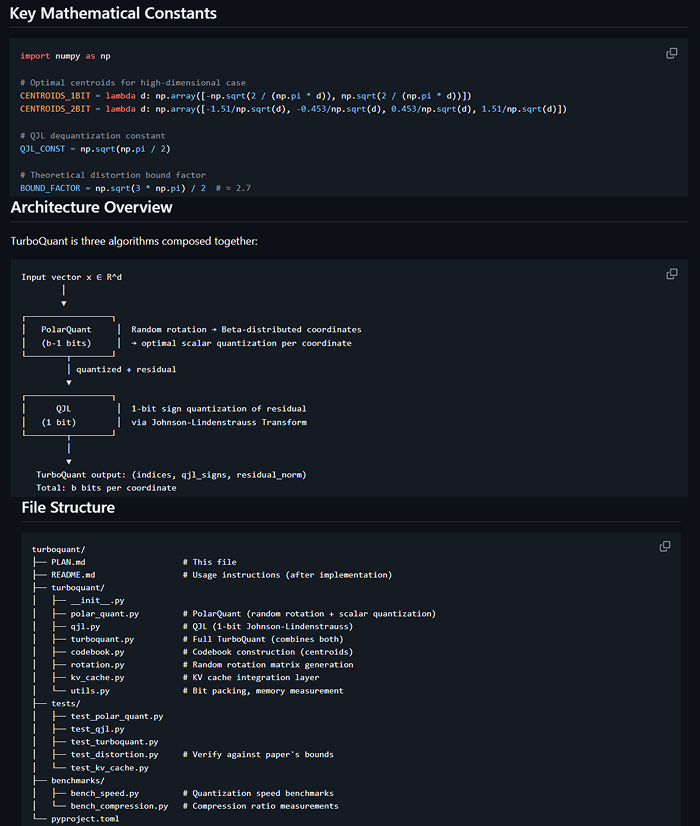

Solo dev reverse-engineered Google's billion-dollar algorithm in 7 days

Google published the paper that crashed memory stocks worldwide. Then shipped zero code. Tom Turney read the math, opened his terminal, and built the whole thing with Claude - then made it faster than Google promised.

- Day 1-3: Core algorithms, 141 tests, Python prototype
- Day 3-5: C port into llama.cpp, Metal GPU kernels
- Day 5-7: Speed optimization from 739 to 2747 tok/s

That's a 3.7x speedup through pure engineering:

- fp32 → fp16 WHT
- half4 vectorized butterfly ops
- graph-side rotation
- block-32 storage layout

Then he added his own research on top:

- Sparse V: skip 90% of value decompressions at long context
- Asymmetric K/V: keep keys precise, compress values harder
- Temporal decay: old tokens get lower precision automatically

Result: 35B model running on a MacBook with 4.6x compressed cache. 613 GitHub stars in a week. Google still hasn't released their own code.
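For readers unfamiliar with the "WHT" and "butterfly ops" mentioned in the post above: these most likely refer to the Walsh-Hadamard transform, which is usually computed with a butterfly pattern of paired additions and subtractions. The following is a minimal, unoptimized Python sketch of that general technique. It is not the code from the project described, and the function name `fwht` is made up for illustration.

```python
def fwht(values):
    """Unnormalized fast Walsh-Hadamard transform.

    Illustrative sketch only; the input length must be a power of two.
    """
    n = len(values)
    if n == 0 or n & (n - 1):
        raise ValueError("length must be a power of two")
    out = list(values)
    h = 1
    while h < n:
        # Each pass combines elements that are h apart: the "butterfly" step.
        for start in range(0, n, 2 * h):
            for i in range(start, start + h):
                a, b = out[i], out[i + h]
                out[i], out[i + h] = a + b, a - b
        h *= 2
    return out


if __name__ == "__main__":
    x = [1, 0, 1, 0, 0, 1, 1, 0]
    print(fwht(x))
    # Applying the unnormalized transform twice scales the input by its length.
    print(fwht(fwht(x)) == [len(x) * v for v in x])
```

The transform uses only additions and subtractions, which is part of why it lends itself to the reduced-precision and vectorized variants the post lists.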

https://t.co/uAaxXKPqnk

Thanks to @AI21Labs for tracking down a silent uint32 overflow in vLLM's Mamba-1 CUDA kernel and contributing the fix. Root cause: `uint32_t` stride × cache_index overflows silently at scale. Fix merged in #35275. The debugging story is worth a read. 🔗 https://t.co/S4XBnEn1uv
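To show the class of bug described above, here is a small Python sketch that emulates a 32-bit unsigned multiply by masking the product. The stride and index values are hypothetical and chosen only to make the wraparound visible. They are not taken from vLLM, and the helper names are invented for this example.

```python
UINT32_MASK = 0xFFFFFFFF


def offset_uint32(stride, cache_index):
    """Emulate a uint32_t multiply: the product wraps modulo 2**32."""
    return (stride * cache_index) & UINT32_MASK


def offset_wide(stride, cache_index):
    """The same product without the 32-bit wrap, as a wider integer type would hold it."""
    return stride * cache_index


if __name__ == "__main__":
    stride = 1_048_576  # hypothetical: 2**20 elements per cache slot
    for cache_index in (100, 5_000):  # hypothetical small and large indices
        narrow = offset_uint32(stride, cache_index)
        wide = offset_wide(stride, cache_index)
        status = "ok" if narrow == wide else "WRAPPED"
        print(cache_index, narrow, wide, status)
```

Nothing raises an error when the product wraps. The offset simply points somewhere else, which is why this kind of bug only appears at scale. Widening the arithmetic is one common remedy; the merged fix may differ.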

Here comes AutoClaw. We offer a new solution to run OpenClaw locally on your own machine.

- Download and start immediately. No API key required.
- Bring any model you like, or use GLM-5-Turbo, optimized for tool calling and multi-step tasks.
- Fully local. Your data never leaves your machine.

We're giving data control back to Claw users.

Meet AutoClaw → https://t.co/mI3nne0nz0 Join the conversation → https://t.co/i9MRHOJZKj

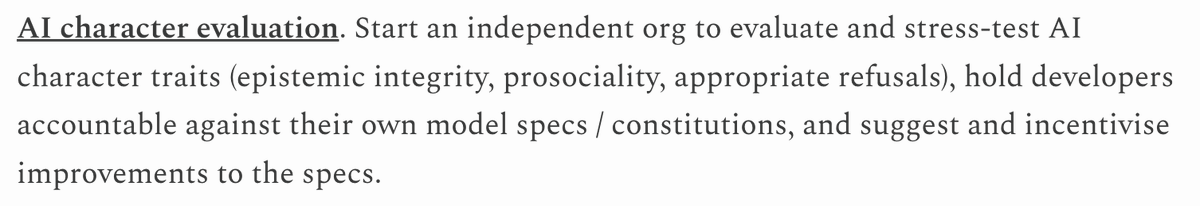

I like Will's takes here. The first proposal is pretty close to home for me, and is something I'd really love to see happen: https://t.co/K2YNEdnZBa

There are lots of projects that could really help the transition to superintelligence go much better, which almost nobody is working on. With @finmoorhouse, I’ve written up eight ideas that seem especially promising. Some are about shaping AI systems themselves: independently e

@noritunamayo https://t.co/pIm22hVgZB

MIT’s inFORM is a shape shifting interface that turns digital data into physical forms, letting users interact with objects remotely. https://t.co/ZHFjHO367n

South Korea–based WIRobotics has introduced a humanoid robot called Allex. Allex features a lightweight arm with a 15-joint hand that can sense tiny forces and lift objects up to 30 kilograms. The company plans to develop it into a safe, flexible, general-purpose humanoid by 2030.

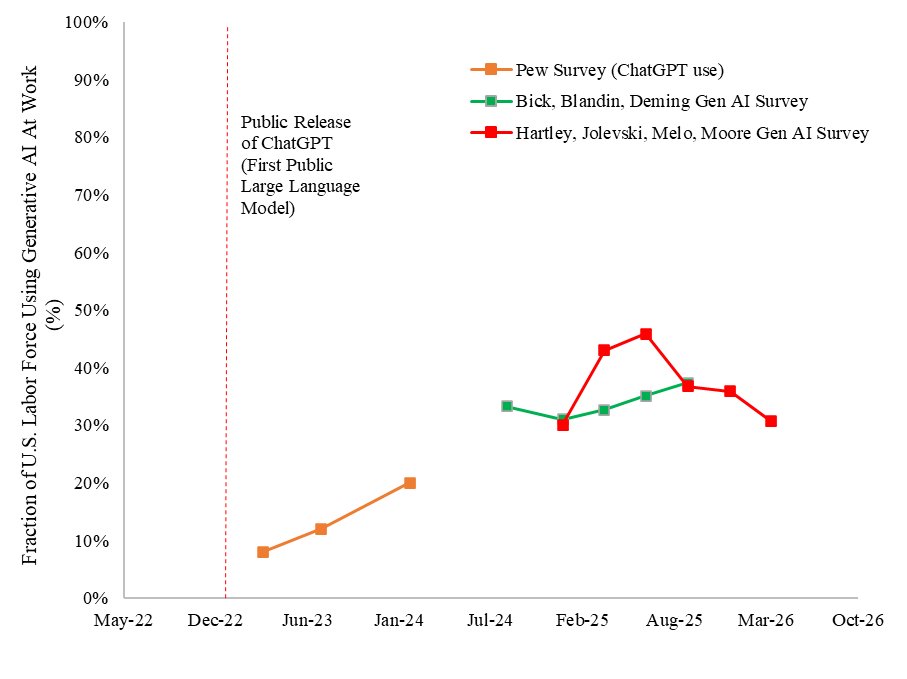

🚨Another update to our Generative AI US adoption time series results from our paper “The Labor Market Effects of Generative Artificial Intelligence”: we find LLM adoption at work in the US fell over the past quarter (while still up substantially from a couple years ago). https://t.co/Lo1v0V2zq0

I wholeheartedly endorse this piece. It annoys the hell out of me that there's an entire cottage industry of business "journalism" that consists of taking some outlandish claim made by a tech CEO -- often in a tweet or offhand comment -- and then crafting https://t.co/gjctqdvfUU

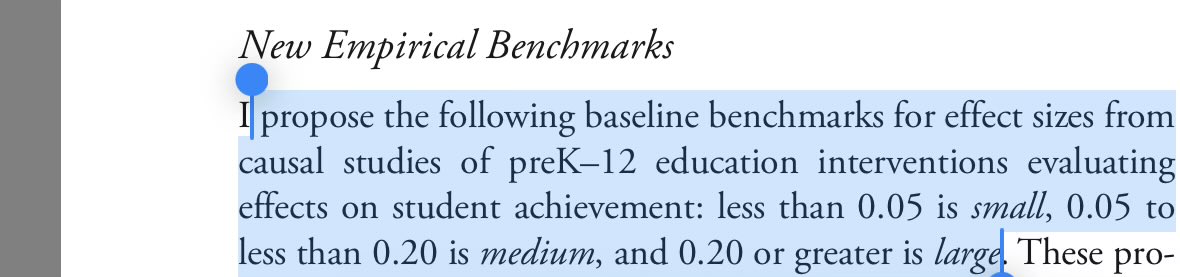

@emollick it’s certainly not “HUGE” by conventional interpretations; in traditional psychology (Jacob Cohen’s d) .15 is small; even in the context of education I am skeptical, and see quote below from Interpreting Effect Sizes of Education Interventions (Matthew A. Kraft), which would still count it as medium, not large and certainly not all caps HUGE. But the thing about effect sizes of 0.15 in my experience is that there is usually enough of a confidence interval around it that you can’t be sure how real it is. That was Cohen’s point. (source for the 6-9 months?)
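The point about confidence intervals can be made concrete with a short Python sketch. It computes Cohen's d with a pooled standard deviation and a rough 95% interval using a common large-sample approximation for the standard error of d. The sample sizes below are hypothetical and are not taken from the study under discussion. The function names are invented for this example.

```python
import math
import statistics


def cohens_d(treatment, control):
    """Standardized mean difference using the pooled sample standard deviation."""
    n1, n2 = len(treatment), len(control)
    m1, m2 = statistics.mean(treatment), statistics.mean(control)
    v1, v2 = statistics.variance(treatment), statistics.variance(control)
    pooled_sd = math.sqrt(((n1 - 1) * v1 + (n2 - 1) * v2) / (n1 + n2 - 2))
    return (m1 - m2) / pooled_sd


def approx_ci(d, n1, n2, z=1.96):
    """Approximate confidence interval for d (large-sample normal approximation)."""
    se = math.sqrt((n1 + n2) / (n1 * n2) + d * d / (2 * (n1 + n2)))
    return d - z * se, d + z * se


if __name__ == "__main__":
    # Hypothetical group sizes: the same d = 0.15 at two different scales.
    for n in (100, 5_000):
        low, high = approx_ci(0.15, n, n)
        print(n, round(low, 3), round(high, 3))
```

Under this approximation, d = 0.15 with 100 participants per group gives an interval that includes zero. With 5,000 per group it does not, so how "real" the effect looks depends heavily on sample size.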

Microsoft built a voice AI so powerful they had to take it down. Deepfakes. Disinformation. Too dangerous. So they added watermarks, safety controls, and re-released it. For FREE. It's called VibeVoice. Here's what it does:

- Clone any voice from 10 seconds of audio
- Generate 90 minutes of multi-speaker conversation in one pass
- Real-time TTS, first audio in ~200ms
- Speech-to-text: 60 min of audio in a single pass, with speaker labels
- 50+ languages, 4 speakers, natural turn-taking

ElevenLabs: $99/month. Playht: $39/month. VibeVoice: Free. Local. MIT license. 28.5K stars. Backed by Microsoft Research. The fact they had to pull it once tells you everything about how good it is.

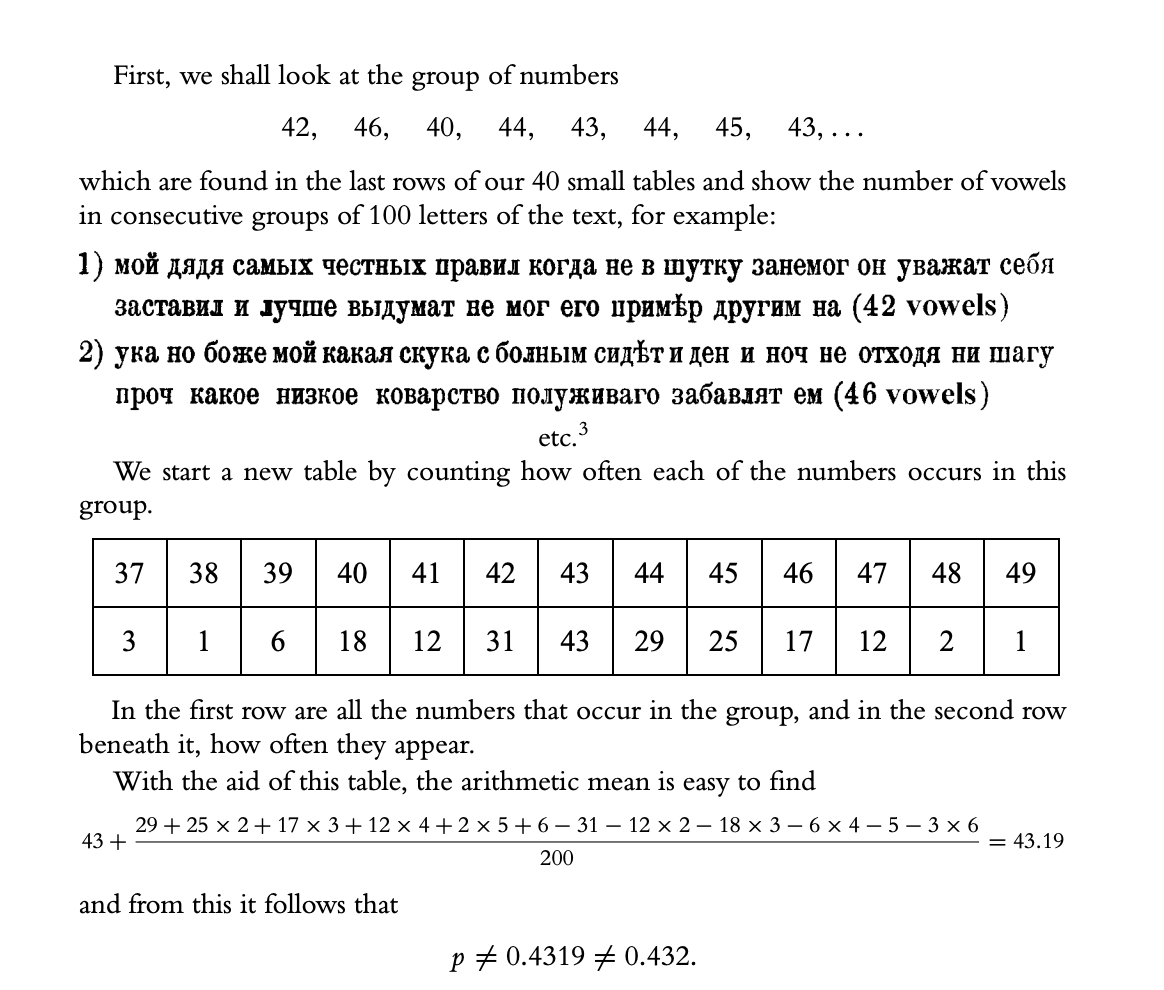

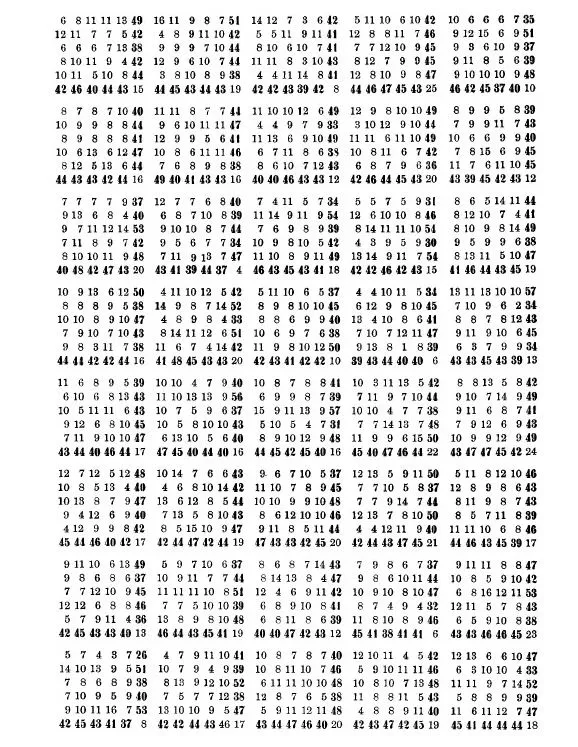

Hate to break it to you, but the first LLM was created by Andrey Markov in 1913. he tallied up 20,000 letters from a famous novel and computed

- p(vowel | vowel)
- p(consonant | vowel)
- p(vowel | consonant)
- p(consonant | consonant)

basically 'training' a bigram by hand https://t.co/M1aLF9z2ik
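The tally described above is easy to reproduce in a few lines. This Python sketch estimates the four vowel/consonant transition probabilities from any text by counting adjacent letter pairs. It assumes the five English vowels for simplicity, so it is an illustration of the idea and not a reconstruction of Markov's own procedure. The function name is invented for this example.

```python
from collections import Counter

VOWELS = set("aeiou")  # assumption: English vowels; any other letter counts as a consonant


def transition_probabilities(text):
    """Estimate p(next class | previous class), keyed as (previous, next)."""
    classes = [
        "vowel" if ch in VOWELS else "consonant"
        for ch in text.lower()
        if ch.isalpha()  # skip spaces, digits and punctuation
    ]
    pair_counts = Counter(zip(classes, classes[1:]))
    previous_counts = Counter(classes[:-1])
    return {
        (prev, nxt): count / previous_counts[prev]
        for (prev, nxt), count in pair_counts.items()
    }


if __name__ == "__main__":
    sample = "the first language model was a table of letter counts"
    for (prev, nxt), p in sorted(transition_probabilities(sample).items()):
        print(f"p({nxt} | {prev}) = {p:.2f}")
```

For each previous class, the probabilities over the next class sum to 1, which is all a bigram model over two symbols amounts to.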

Oh god are we really doing this? Jeff Dean trained an n-gram model on the entire internet in 2007. Jelinek coined the term "language model" in the '70s. It's called "Claude" because Claude Shannon was estimating the entropy rate of the English language in 1951!

Get it here: https://t.co/36PM5Kwfh6

Forgot the obligatory action shot! 🤦‍♂️ https://t.co/lBx23Fsbq2

For the first time in 150 years of professional baseball, a human official just got publicly overruled by an algorithm.

The batter takes strike three and the umpire calls the inning over, but instead of walking to the dugout, the batter taps his helmet. That tap triggers a review by twelve high-speed Sony cameras installed around the perimeter of the ballpark, tracking the pitch to within one-sixth of an inch. The system renders a verdict in fifteen seconds, and the scoreboard shows every person in the stadium a 3D animated replay of exactly where the pitch crossed the plate.

In the first week of the 2026 season, more than half of all challenges proved the umpire's call was wrong, and even at their best, human umpires still missed roughly eleven calls per game. Veteran umpire C.B. Bucknor, who has worked in this league for decades, had multiple calls reversed by the system in a single game.

The technology itself originated in a British military lab tracking fighter jets in NATO training exercises, then became the Hawk-Eye platform Sony now deploys across tennis, soccer, cricket, and the Olympics. Tennis ran this exact experiment first, and within fifteen years there were no human line judges left at Wimbledon or the US Open.

Sports betting is now legal in 38 states with individual pitch outcomes tied to real-money wagers, turning every missed call into a liability in a regulated billion-dollar market.

This is what AI is supposed to do: not replace human judgment everywhere, but step in where the stakes are clear, the data is objective, and the cost of being wrong is real.

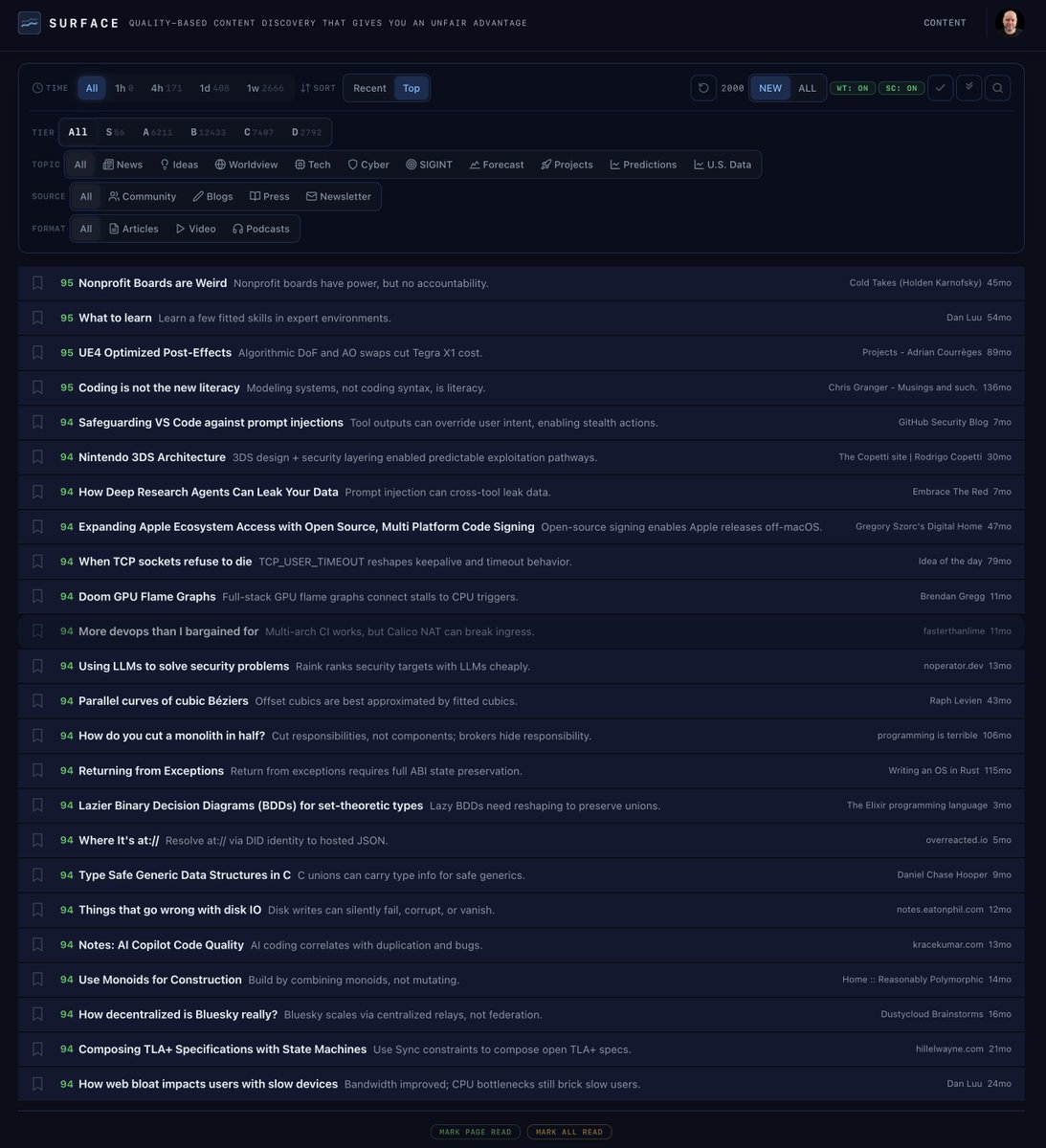

This is the night vs. day difference in how I get news now with my app called Surface. I built it as a replacement for my RSS system built over 20 years.

- Over 5,300 sources
- Each story rated by quality
- The source doesn't matter!

Palantir CEO Alex Karp just named who wins the AI era. Not the people who mastered the system. The people who could never follow it.

Karp: “We’re in a non-playbook world, and the playbook’s not that valuable.”

For decades, the global economy ran on compliance. Read the manual. Follow the procedure. Execute like the person next to you. AI just automated the manual. If your entire value was executing the playbook, you are now losing to something that does it perfectly, instantly, and for free. Karp understood this before most people had the vocabulary for it.

Karp: “If you’re a dyslexic, you can’t follow the playbook, so you invent new and generative things.”

Neurodivergent people spent their entire lives inside a system built for a brain they do not have. The front door was locked. So they found other doors. Built new ones. Attacked problems from angles nobody else tried because the standard path was never theirs. That is not a disadvantage. That is decades of forced preparation for the exact world we just entered.

The front door is now locked for everyone. The people who spent their lives perfecting the rules are scrambling. The people who spent their lives ignoring them already know how to move.

The system spent a century punishing the exact people it needed most. It measured compliance and called it intelligence. It filtered out the builders. The ones who could not sit still. The ones who could not memorize a curriculum designed for someone else’s mind. And called them broken. They were not broken. They were just early.

Don’t wait until it’s too late to wish you had life insurance! @LumaLabsAI https://t.co/jj40QID7D1

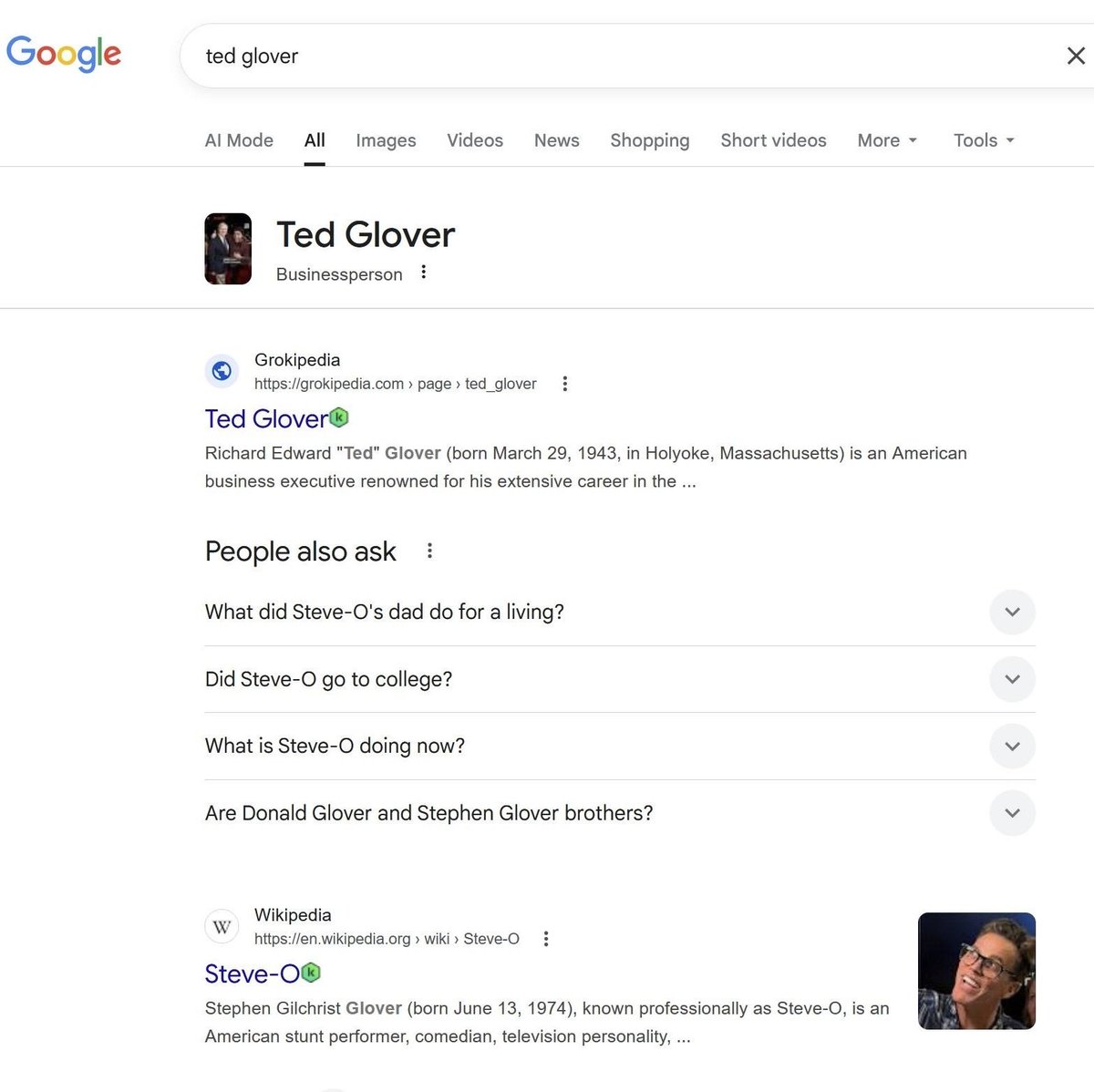

BREAKING: Grokipedia begins outranking Wikipedia for certain Google search results. https://t.co/uMM66WGMsf

Computer use is now in Claude Code. Claude can open your apps, click through your UI, and test what it built, right from the CLI. Now in research preview on Pro and Max plans. https://t.co/s2FDQaDmr1

- Claude Code on terminal: ~868 MB
- Codex desktop app: ~638 MB

It's crazy how the Codex desktop app uses less memory than running Claude Code in a terminal in Zed. I tested this on my MacBook Pro M4. I'm not using the agent panel in Zed, only Zed's terminal, which uses Alacritty. Both Claude and Codex are working on one task, simply calling read and write tools, and they are not running tasks or subagents. I also noticed Claude Code's memory usage can spike to 1 GB+, while Codex's usage is far more consistent. Any explanation?

Meet Qwen3-4B-Thinking-2507: a distilled powerhouse that's making waves. This GGUF model brings advanced reasoning to local machines, letting you run sophisticated AI without cloud costs. Perfect for developers wanting cutting-edge capabilities offline. https://t.co/my4iVCA32h