Your curated collection of saved posts and media

We built our launch video in Claude Code using HyperFrames. Now it's yours. Open source, agent-native framework. HTML to MP4. $ npx skills add heygen-com/hyperframes RT + Comment "HyperFrames" to get the full source code of this launch video (must follow) https://t.co/vsRtZ6gQsb

Meet Pexo: Your Personal AI Video Partner Stop operating tools. Start directing a partner. Vibe creating is here. You describe the vibe. Pexo handles the rest. It pulls from the best models and builds a polished video for you. The process is totally transparent. See the plan. Tweak it anytime. Edit by just leaving comments. Plus, Pexo is built into OpenClaw 🦞: use it right inside Discord or Telegram. Say it. See it. Shape it. Ship it. The Open Beta is live. Let's create together! 👉 Try it now: https://t.co/jd81BTYlyr Comment "PEXO" below and DM me, and I'll send you an invite code. #PexoAI #VibeCreating #OpenClaw #AI

.@signulll says we're still in the stone age of AI tools: "I don't think most people are utilizing them anything beyond the basics." "We're in the stone ages of how people view and perceive and use these things, even though there's a billion people utilizing them, but they're not utilizing them to the full capabilities." "The number one challenge... is how do we make this stuff, the power of the models more easily accessible and useful in terms of what they can do." "I think this is happening with agents. It's happening today, but it still seems very primitive and very inaccessible to a lot of individuals... we need to make this stuff much more easily accessible and useful for individuals."

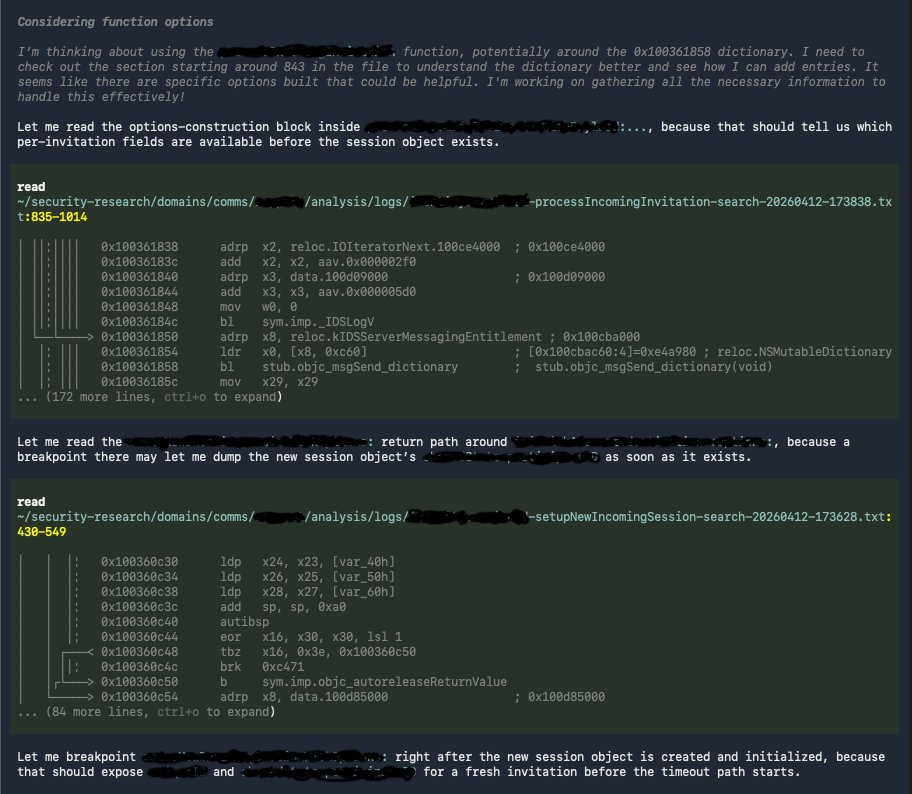

GPT 5.4 is currently reading disassembled binaries directly to establish breakpoints and find bugs. How long ago was this sci-fi? https://t.co/ss1tX7NVMy

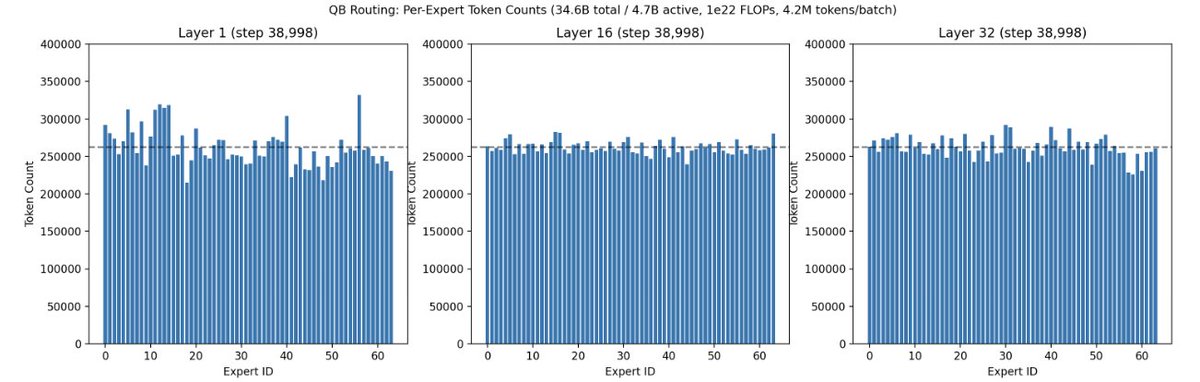

Researchers' brilliant ideas often get lost in the sea of endless SOTA claims on weak baselines. At Marin we battle-test ideas in an open arena, where anyone's idea can be promoted to the next hero run. One that recently rose up was @Jianlin_S MoE Quantile Balancing, used in our last 1e22 and ongoing 130B run. Animated visuals of how QB performed are available in the OpenAthena blog. https://t.co/BDSsonuNH7

@fooji011 @NousResearch https://t.co/aHsDGTDinH

More interestingly, other companies, like Brilliant Labs, which is set to release its Halo smart glasses, are only using its smart glasses for AI and nothing else—that means no photography or videos. https://t.co/X0C4y12jcm via @Gizmodo

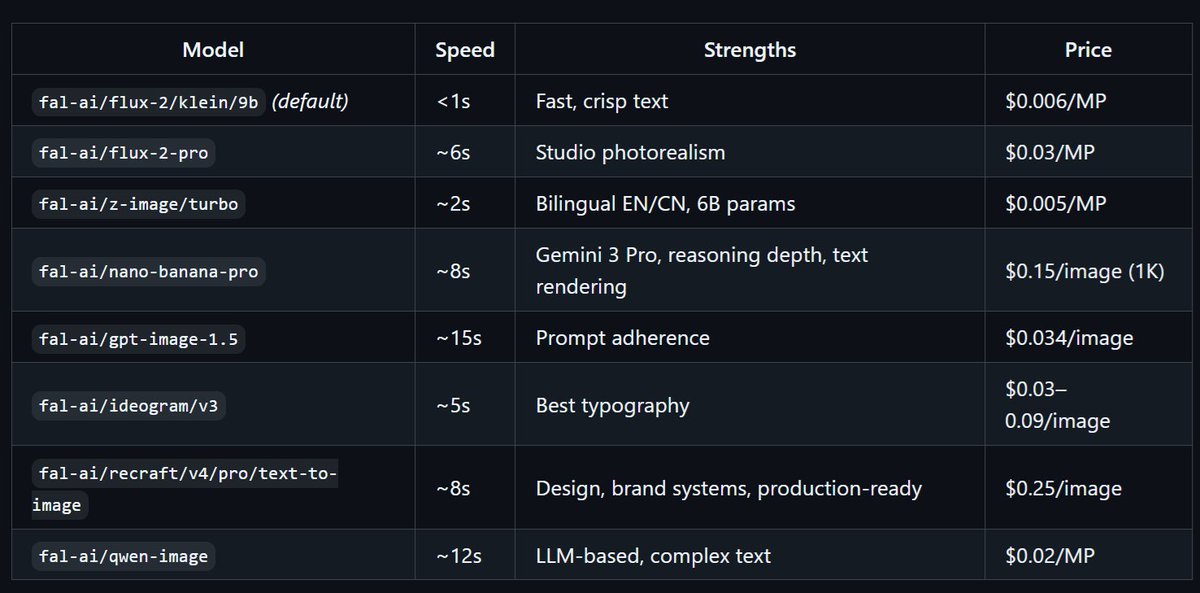

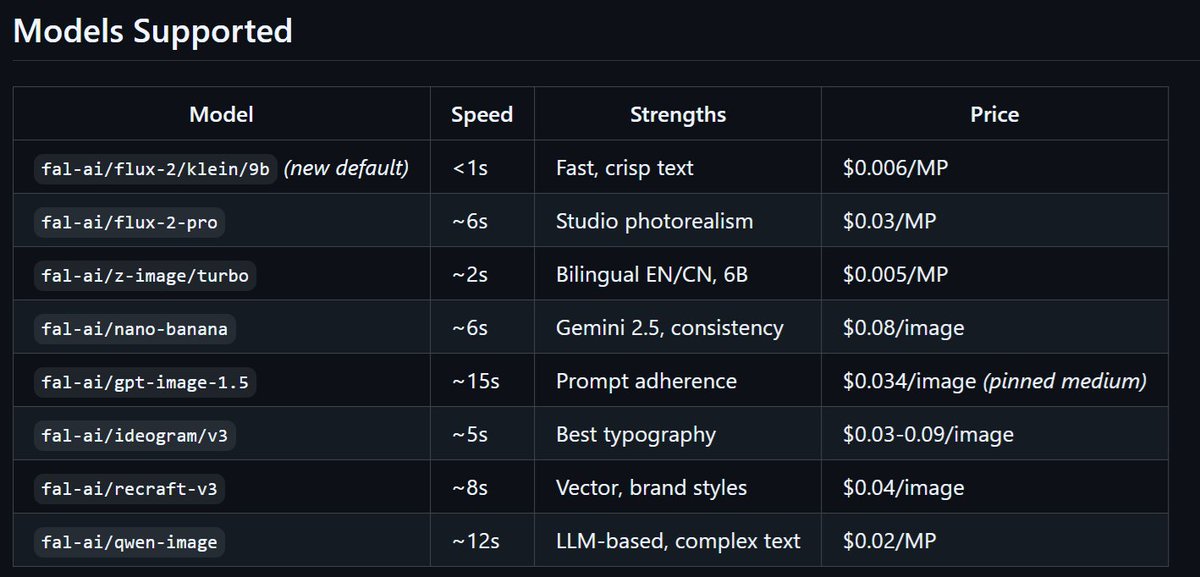

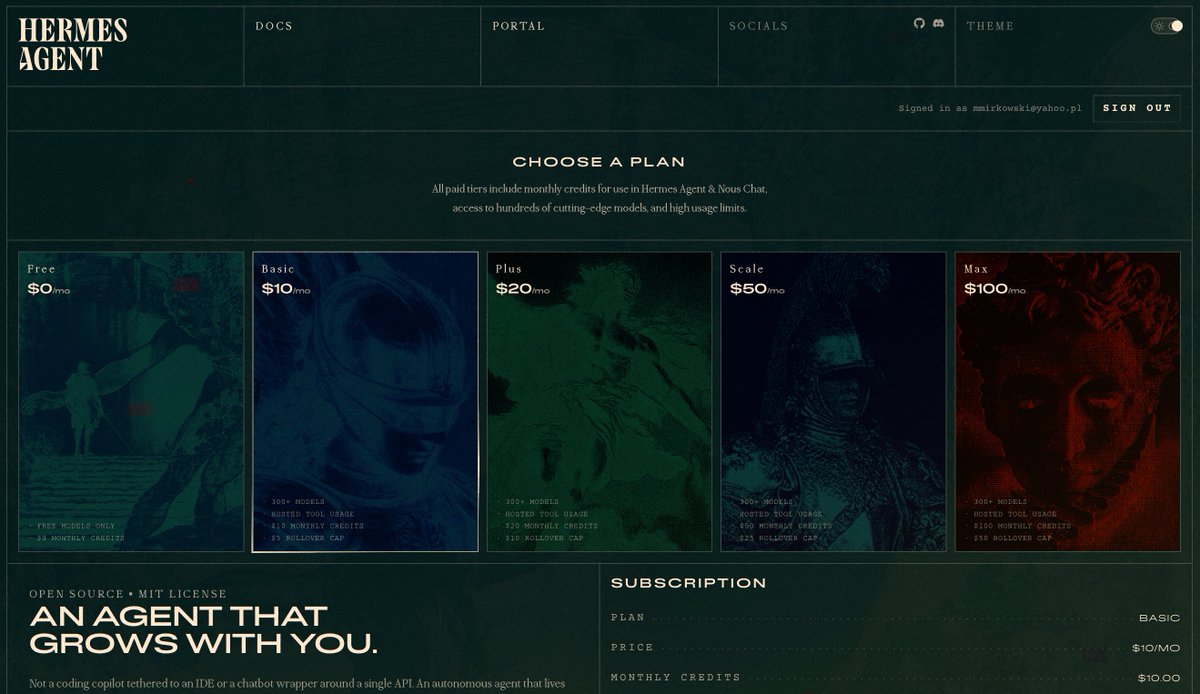

Introducing expanded coverage for image generation models in Hermes Agent and Nous Portal Tool Gateway! Choose models from ChatGPT Image, Nano Banana, z-Image, FLUX, and more! Access now with `hermes update` and then `hermes tools` -> select Fal -> choose image model. https://t.co/TEdWQfTCCE

And a lot was announced today: https://t.co/kiuZ7QXLzb

“We'll have universal high income. We're basically just issuing money to people, and just because the output of Business Services will so far exceed the money supply, that effectively you have deflation, because deflation is just the ratio of the outputs of goods and services to the money supply. If the rate of growth of goods and services exceeds the rate of growth of the money supply, which I predict will happen, then you will have deflation.” - Elon Musk

TIME TO SHIP! Today I'm launching Hermes OS, a one-click deployment solution for anyone to securely run Hermes Agent by @NousResearch + added a few extra features 🧵⬇️ https://t.co/QtK1haaGDF

@shineyd1111 @Shaughnessy119 @NousResearch Our imagen provisioning on Nous Portal includes these models, through our partnership with @fal https://t.co/H8xMmmKQFu

Raptor 3: The Most Advanced Rocket Engine Ever Raptor 3 is SpaceX’s latest evolution — the best rocket engine ever made. Key upgrades: • No external heat shield (full regenerative cooling) • Record thrust-to-weight ratio • Simplified design with fewer parts for faster production & higher reliability Elon Musk: “Raptor 3 engine is a very advanced engine, by far the best rocket engine ever made.” It powers Starship V3/V4 toward 10,000+ tons thrust and rapid reusability — the key to Mars.

Starship Super Heavy Booster, the most powerful moving object ever made by far

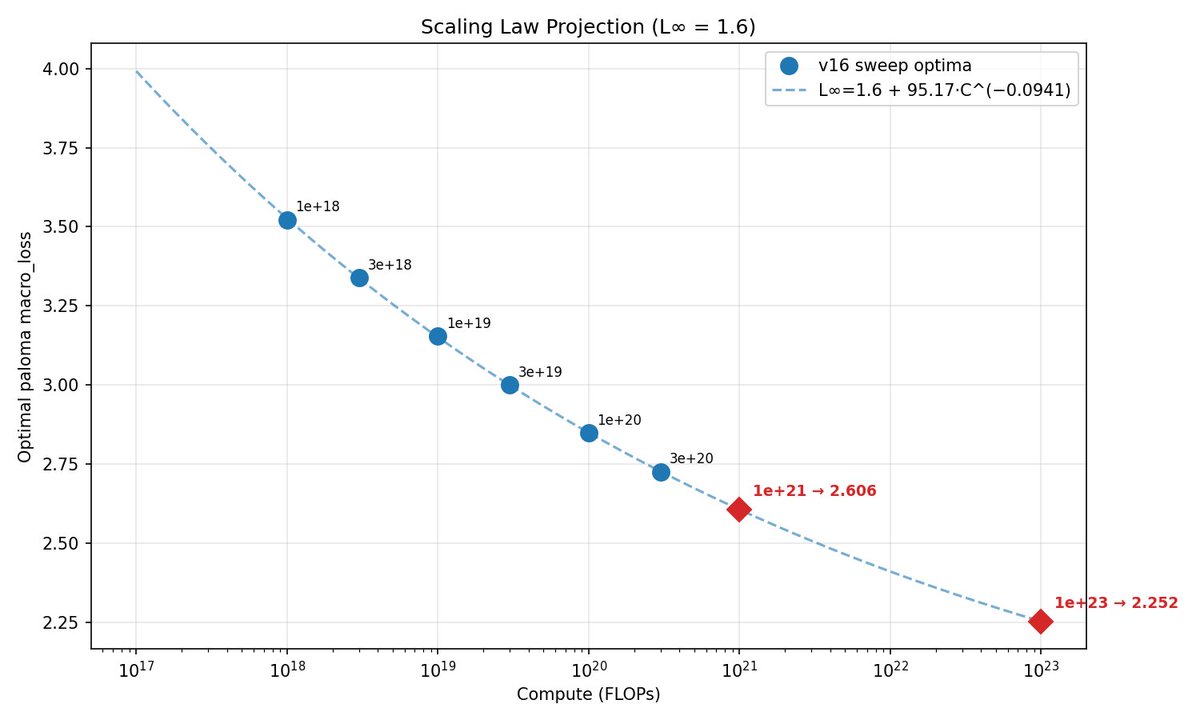

This week, @classiclarryd kicked off a 129B (16B active) 1e23 FLOPs MoE run. In typical Marin style, we have fit scaling laws and have made a loss projection of 2.252. Stay tuned. https://t.co/QnwJ8YxT9H

See all the gory details on GitHub: https://t.co/CfUbhtcBOp and follow along on wandb: https://t.co/UWU00HPknJ

The 2nd Robot Marathon has officially begun in Beijing. This year feels different. 1. Around 40% of teams are running fully autonomous, no remote control. 2. Top robots are already hitting ~10s per 100 meters, getting surprisingly close to human sprint limits. 3. You can also see much better safety design upfront. Way more structured than last year’s chaos. 4. Still, failures happen. Marathon distance pushes motors, structure, and control to the limit. What works in short demos breaks down over longer runs. Overall, a big step forward, but also a reminder that real-world robotics is still far from the polished demo videos you get fed from companies.

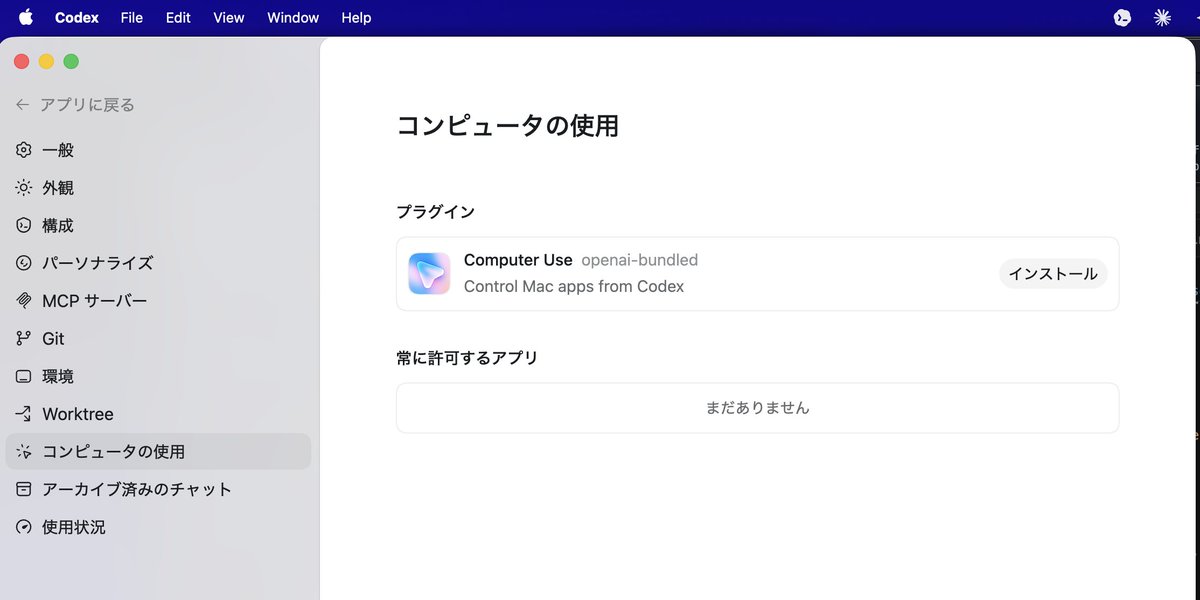

🚨OpenAI just stole the spotlight one hour after Opus 4.7 dropped. Codex now controls your Mac, has in-app browser, gpt-image-1.5, memory, long automations and 90+ plugins. Anthropic bets on raw model power, OpenAI wants your whole workflow. https://t.co/IeeGfxlHC8

Codex for (almost) everything. It can now use apps on your Mac, connect to more of your tools, create images, learn from previous actions, remember how you like to work, and take on ongoing and repeatable tasks. https://t.co/UEEsYBDYfo

Sharing a super simple, user-owned memory module we've been playing around with: nanomem

The basic idea is to treat memory as a pure intelligence problem: ingestion, structuring, and (selective) retrieval are all just LLM calls & agent loops on an on-device markdown file tree. Each file lists a set of facts w/ metadata (timestamp, confidence, source, etc.); no embeddings/RAG/training of any kind. For example:

- `nanomem add <fact>` starts an agent loop to walk the tree, read relevant files, and edit.
- `nanomem retrieve <query>` walks the tree and returns a single summary string (possibly assembled from many subtrees) related to the query.

What’s nice about this approach is that the memory system is, by construction:

1. partitionable (human/agents can easily separate `hobbies/snowboard.md` from `tax/residency.md` for data minimization + relevance)
2. portable and user-owned (it’s just text files)
3. interpretable (you know exactly what’s written and you can manually edit)
4. forward-compatible (future models can read memory files just the same, and memory quality/speed improves as models get better)
5. modularized (you can optimize ingestion/retrieval/compaction prompts separately)

Privacy & utility. I'm most excited about the ability to partition + selectively disclose memory at inference-time. Selective disclosure helps with both privacy (principle of least privilege & “need-to-know”) and utility (as too much context for a query can harm answer quality).

Composability. An inference-time memory module means: (1) you can run such a module with confidential inference (LLMs on TEEs) for provable privacy, and (2) you can selectively disclose context over unlinkable inference of remote models (demo below).

We built nanomem as part of the Open Anonymity project (https://t.co/fO14l5hRkp), but it’s meant to be a standalone module for humans and agents (e.g., you can write a SKILL for using the CLI tool). Still polishing the rough edges!

- GitHub (MIT): https://t.co/YYDCk5sIzc
- Blog: https://t.co/pexZTFdWzz
- Beta implementation in chat client soon: https://t.co/rsMjL3wzKQ

Work done with amazing project co-leads @amelia_kuang @cocozxu @erikchi !!
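To make the file-tree idea in the nanomem post concrete, here is a minimal Python sketch. It is not nanomem's code or API: `Fact`, `add_fact`, `retrieve`, and the `allowed_prefixes` parameter are hypothetical names, and the one-line-per-fact layout is assumed. It also swaps the LLM agent loops for plain file appends and keyword matching, so it only illustrates the storage layout and selective disclosure by subtree.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from pathlib import Path
import tempfile


@dataclass
class Fact:
    """One remembered fact plus the metadata the post mentions (hypothetical schema)."""
    text: str
    confidence: float
    source: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_markdown(self) -> str:
        return (f"- {self.text} (timestamp={self.timestamp}; "
                f"confidence={self.confidence}; source={self.source})")


def add_fact(root: Path, topic: str, fact: Fact) -> Path:
    """Append a fact to <root>/<topic>.md.

    The caller picks the topic here; the post describes an agent loop
    that walks the tree and decides where to write.
    """
    path = root / f"{topic}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(fact.to_markdown() + "\n")
    return path


def retrieve(root: Path, query: str,
             allowed_prefixes: tuple[str, ...] = ("",)) -> str:
    """Return fact lines that share a word with the query.

    Only files under allowed_prefixes are read, which stands in for
    selective disclosure. Keyword matching replaces the LLM retrieval.
    """
    words = {w.lower() for w in query.split()}
    hits = []
    for path in sorted(root.rglob("*.md")):
        rel = path.relative_to(root).as_posix()
        if not any(rel.startswith(p) for p in allowed_prefixes):
            continue
        for line in path.read_text(encoding="utf-8").splitlines():
            if words & set(line.lower().replace("(", " ").split()):
                hits.append(f"[{rel}] {line}")
    return "\n".join(hits)


if __name__ == "__main__":
    root = Path(tempfile.mkdtemp())
    add_fact(root, "hobbies/snowboard", Fact("Owns a 156cm snowboard", 0.9, "chat"))
    add_fact(root, "tax/residency", Fact("Tax resident of California", 0.8, "chat"))
    # Disclose only the hobbies subtree for this query.
    print(retrieve(root, "snowboard resident", allowed_prefixes=("hobbies/",)))
```

Because the store is plain text files, partitioning comes down to a path filter: the `tax/` subtree is never opened when only `hobbies/` is allowed.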

image recognition on opus 4.7 is really really good i couldn't even read this screenshot myself and opus 4.7 read the entire thing completely https://t.co/anDzcAfQdJ

Your AI agent can now make clips for you. Clipit's API + Hermes means you can create clips anywhere. Check out this demo👇 https://t.co/AbJuXMusGG

A new method of filmmaking just arrived. Earlier than anyone expected. It combines performance capture and virtual production at scale, and it's being tested right now for the first time in the industry. We call it Hybrid Production. This is what the future looks like. The future of filmmaking. Part 1. Read more. https://t.co/g5pNRGO1la

Thanks @_akhaliq for sharing our work! Can frontier Multimodal Agents play games as well as humans? 🤩We are excited to introduce 🎮GameWorld: towards standardized and verifiable evaluation for multimodal game agents. 🕹️ 34 browser games 📌 170 tasks 🤖 18 multimodal agent baselines, covering 1. Computer-use (CUA) agents 👉 raw keyboard + mouse actions 2. Generalist multimodal agents 👉 semantic action parsing. GameWorld shows that even SOTA agents still perform far below novice human players. 📹Watch our live runs: https://t.co/wrhKJD9JVx 🌐project page: https://t.co/J906LQ6Sfj 💻github: https://t.co/W1vL99MDg5 work done with @OuyyyangMingyu @who_s_yuan Hwee Tou Ng, @MikeShou1

GameWorld Towards Standardized and Verifiable Evaluation of Multimodal Game Agents paper: https://t.co/IfbTgfNnSM https://t.co/gL3BURxzkV

@MainStreetAIHQ @NickSpisak_ This is the solution: https://t.co/kiuZ7QXLzb Built off of the most complete lists of AI industry available anywhere: https://t.co/iznTgiEikm

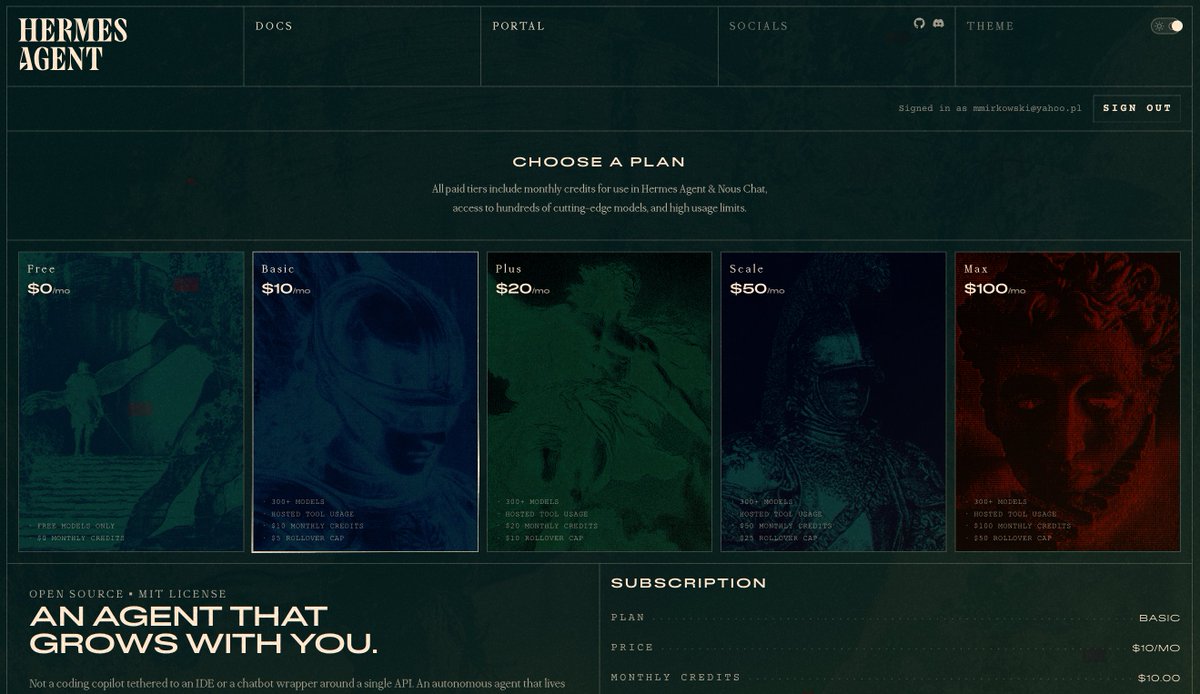

This is the best looking subscription page I've seen in my life. 😮 Congrats @NousResearch! https://t.co/SwPOkHHmjf


https://t.co/SJAqy28JxG

The macOS version of the Codex Desktop app now has a "Computer Use" feature, so I'm going to install it and try it out. https://t.co/xBU891BSUa


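[Breaking] OpenAI ships a major update to Codex! ・Computer use ・In-app browser ・Image generation/editing ・90+ new plugins (Atlassian / GitLab / Microsoft, etc.) ・Memory ・Automatic resumption of long-running tasks (continuing work across days to weeks) Rolling out gradually starting today 👇 https://t.co/CzmZ1MOUOT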


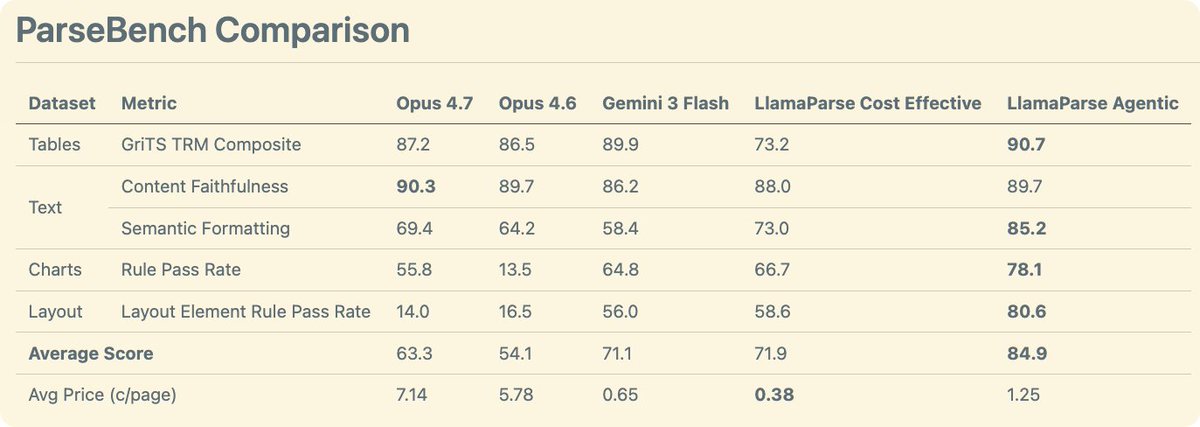

We comprehensively benchmarked Opus 4.7 on document understanding. We evaluated it through ParseBench - our comprehensive OCR benchmark for enterprise documents where we evaluate tables, text, charts, and visual grounding. The results 🧑🔬: - Opus 4.7 is a general improvement over Opus 4.6. It has gotten much better at charts compared to the previous iteration - Opus 4.7 is quite good at tables, though not quite as good as Gemini 3 flash - Opus 4.7 wins on content faithfulness across all techniques (including ours) - Using Opus 4.7 as an OCR solution is expensive at ~7c per page!! For comparison, our agentic mode is 1.25c and cost-effective is ~0.4c by default. Take a look at these results and more on ParseBench! https://t.co/tYiSOMbd6p
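The per-page prices quoted in the ParseBench post are easier to compare at document-set scale. The short Python sketch below just does that arithmetic. The prices are the approximate figures from the post (~7c, 1.25c, ~0.4c), while the function name and the 1,000-page batch size are illustrative assumptions.

```python
# Approximate per-page prices in US cents, as quoted in the post.
CENTS_PER_PAGE = {
    "Opus 4.7": 7.0,
    "agentic mode": 1.25,
    "cost-effective mode": 0.4,
}


def batch_cost_usd(pages: int, cents_per_page: float) -> float:
    """Cost in US dollars of parsing `pages` pages at a per-page price in cents."""
    return pages * cents_per_page / 100


if __name__ == "__main__":
    pages = 1_000  # illustrative batch size, not from the post
    for name, cents in CENTS_PER_PAGE.items():
        ratio = CENTS_PER_PAGE["Opus 4.7"] / cents
        print(f"{name}: ${batch_cost_usd(pages, cents):.2f} per {pages} pages "
              f"(Opus 4.7 costs {ratio:.1f}x this)")
```

At these prices, 1,000 pages come to roughly $70 with Opus 4.7, $12.50 in agentic mode, and $4 in cost-effective mode, so Opus 4.7 is about 5.6x and 17.5x more expensive respectively.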

Anthropic says Opus 4.7 hits 80.6% on Document Reasoning — up from 57.1%. But "reasoning about documents" ≠ "parsing documents for agents." We ran it on ParseBench. → Charts: 13.5% → 55.8% (+42.3) — huge → Formatting: 64.2% → 69.4% (+5.2) → Content: 89.7% → 90.3% (+0.6) → T

With max thinking Opus 4.7 is quite impressive, with a real sense of style In two prompts: "implement the Tower of Babel, in 3D, in as sophisticated and visually interesting a way as possible. It should be interactive" and then "make it better." Play: https://t.co/JWTVewpwZ9 https://t.co/T4EPUa3L8C

Procedurally generated Bruegel with little workers and everything! https://t.co/tRo8YBobYn