Your curated collection of saved posts and media

NEW: You can now build AI Agents that monitor the market, manage your cash, and execute your trades. The Agentic Brokerage has arrived. https://t.co/AulG4ItwSq

EpochX: Building the Infrastructure for an Emergent Agent Civilization paper: https://t.co/Dhw9ZBgAhs https://t.co/GMn4zJUsnB

GEditBench v2: A Human-Aligned Benchmark for General Image Editing paper: https://t.co/0OJGlz69Tw https://t.co/zilKdhHyBK

pov: you're at cafe cursor singapore aka @cursor_ai kopitiam 📽️: @unprofeshme @SherryYanJiang @fr4nnyp4ck @nickwm @benln @ftnabeelah https://t.co/idDxF3T98l

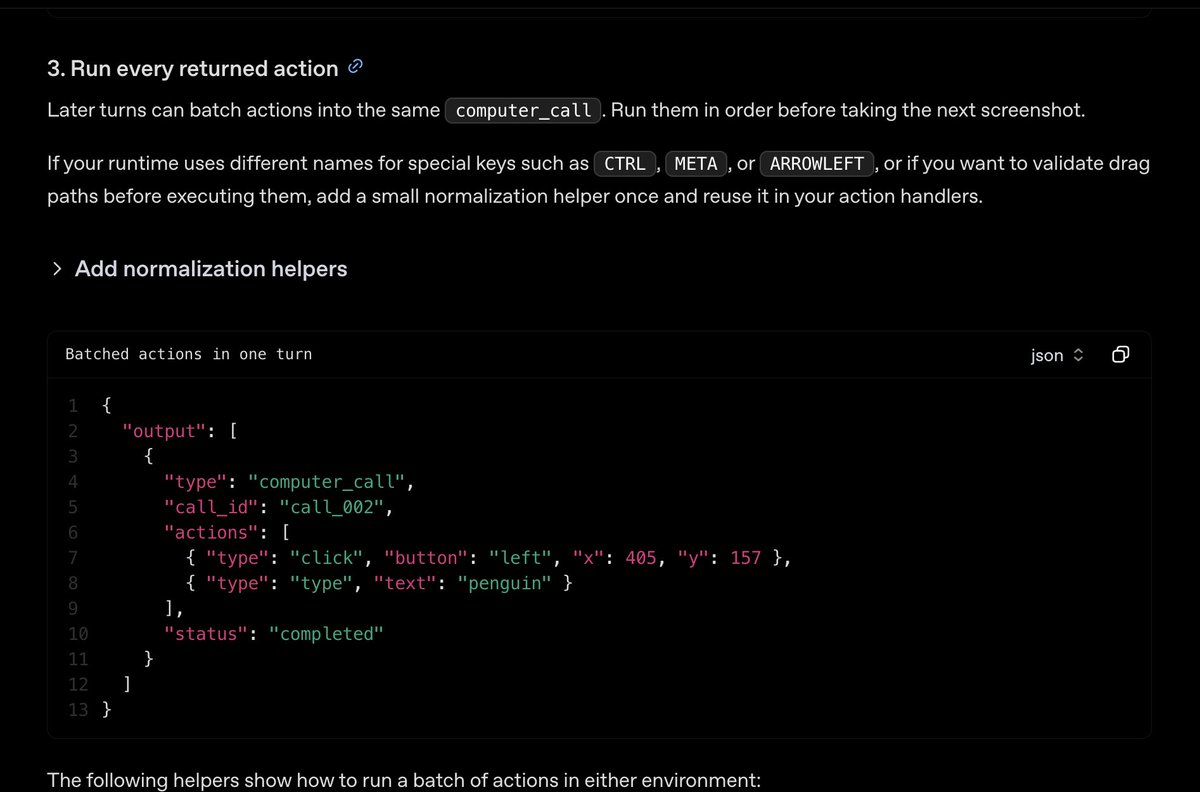

Computer Use now supports modifier-assisted mouse actions like Ctrl+click and Shift+click, which are fundamental for desktop workflows like multi-select and range select. This went from a report from a startup to prod in under 72 hours, which is a really nice example of the feedback loop we’re building. thanks @HennersBro98!

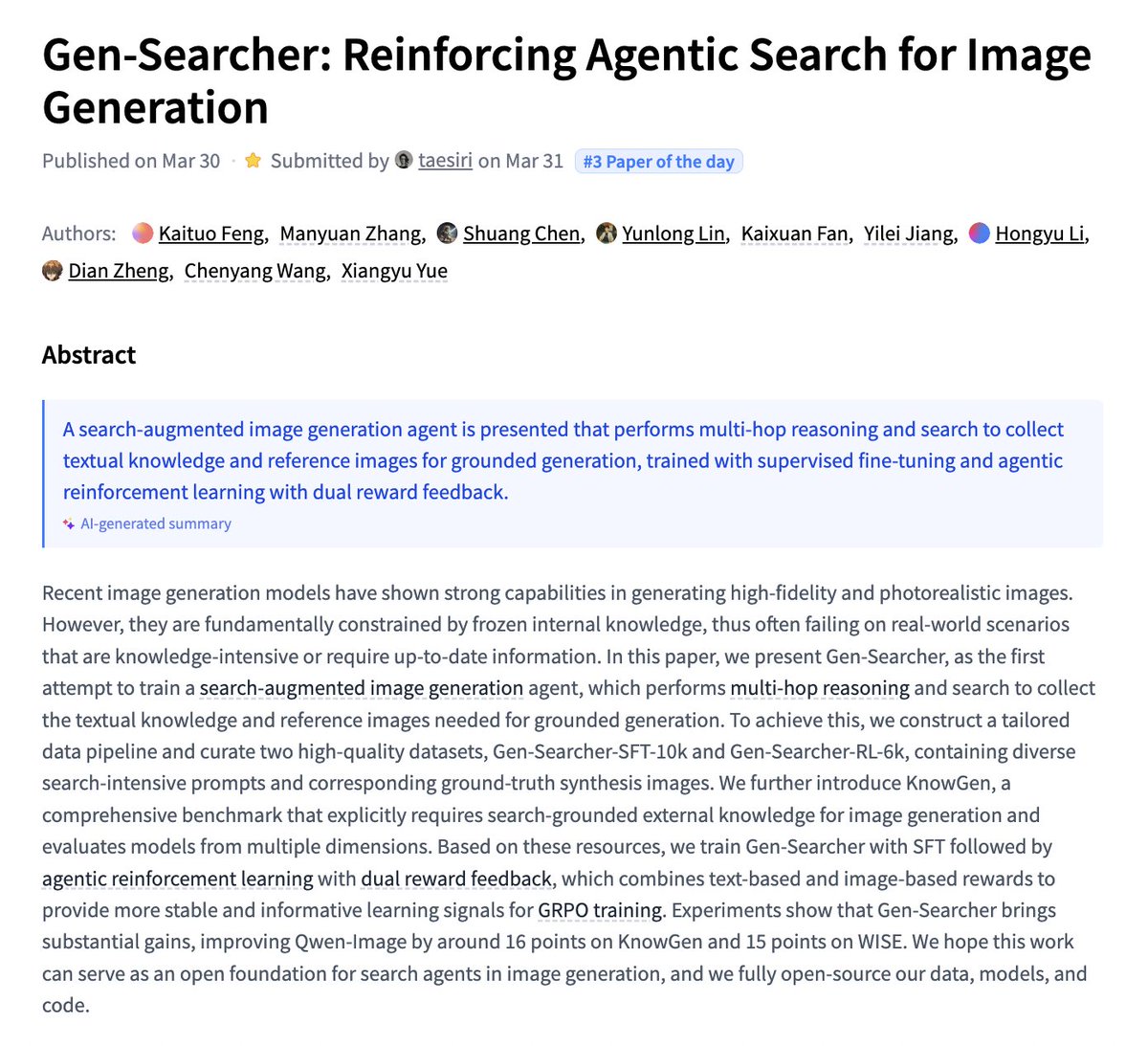

Gen-Searcher: Reinforcing Agentic Search for Image Generation paper: https://t.co/Y6bM7LExjv https://t.co/59R6FiNqB9

holy shitt, somebody at OpenAI leaked the entire codex codebase.. https://t.co/VDU5UqvC4N

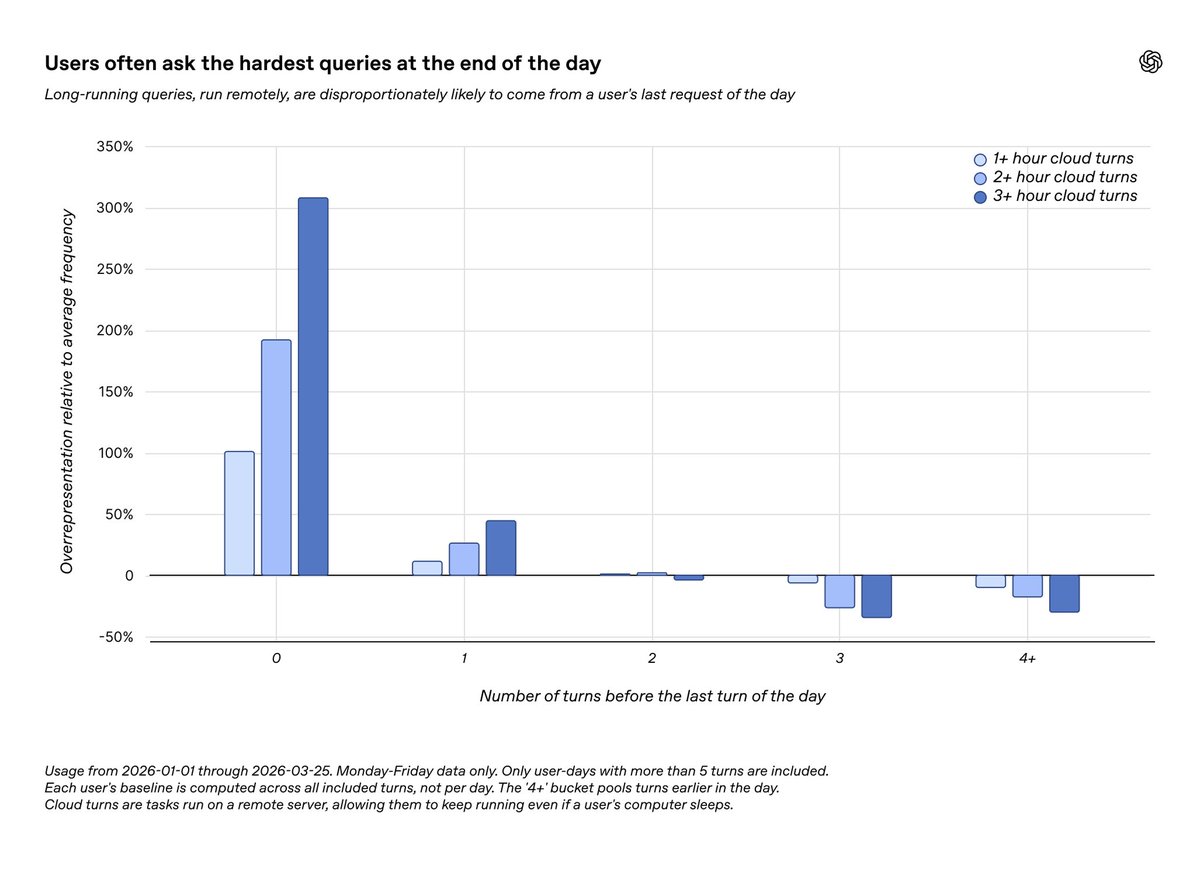

Developers are getting work done, even while they sleep. Latest data from Codex use shows that developers delegate their long-running, hard tasks, such as refactors and architecture planning, to Codex at the end of the day. https://t.co/08KoKPSBdw

TAPS: Task Aware Proposal Distributions for Speculative Sampling paper: https://t.co/hIdMwlTzFf https://t.co/9VADVLz6h0

AI safety has entered the cybersecurity era. @IrenaCronin and I write this newsletter every week. AI safety is becoming a cybersecurity issue because advanced AI can now help both defenders and attackers, making the risks more immediate and practical. As AI systems become more agentic and more deeply connected to enterprise tools and data, organizations need stronger controls, monitoring, and governance to prevent misuse and reduce exposure. Read and subscribe for free: https://t.co/HHwYy7NoAl

for the first time, humans and ai agents share the same living space. Flowith Canvas has just evolved into a human-agent co-creation ecosystem:
- navigate freely through your thoughts and reshape your context.
- scale a single idea into multi-modal creations.
- invite the agents in to co-create seamlessly.
humans and agents, flow as one. watch the canvas breathe 🌊

ChatGPT uses DALL-E to draw nonsense & then confidently describes what it has drawn as being correct & balanced. https://t.co/hxsp4LRGwP

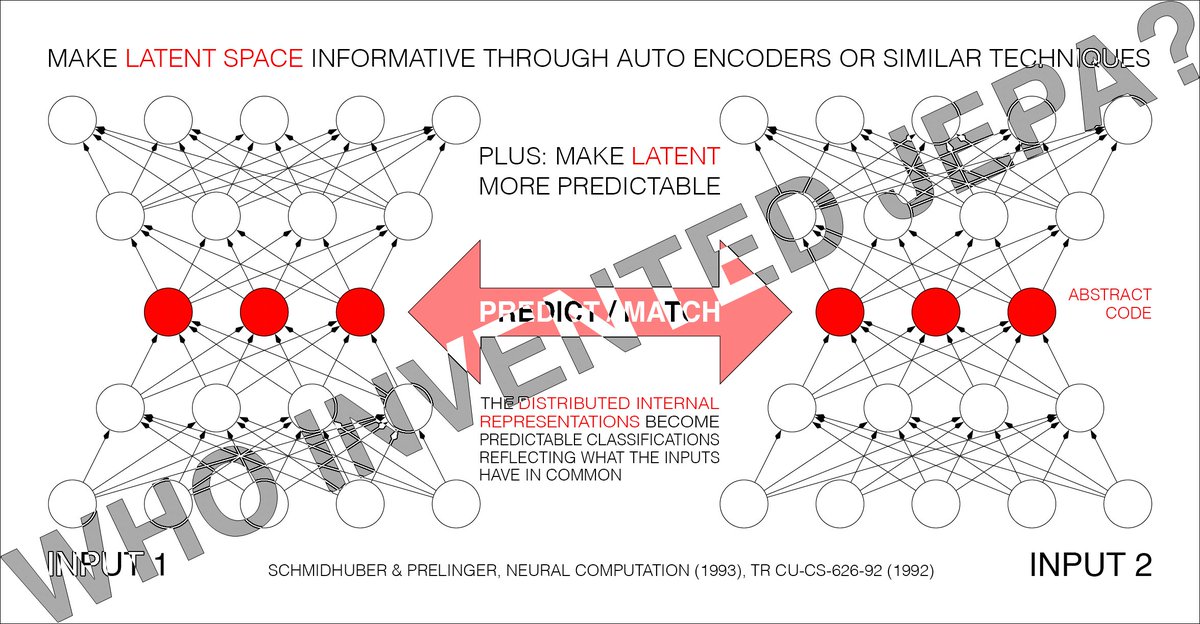

Dr. LeCun's heavily promoted Joint Embedding Predictive Architecture (JEPA, 2022) [5] is the heart of his new company. However, the core ideas are not original to LeCun. Instead, JEPA is essentially identical to our 1992 Predictability Maximization system (PMAX) [1][14]. Details in reference [19] which contains many additional references.
Motivation of PMAX [1][14]. Since details of inputs are often unpredictable from related inputs, two non-generative artificial neural networks interact as follows: one net tries to create a non-trivial, informative, latent representation of its own input that is predictable from the latent representation of the other net's input.
PMAX [1][14] is actually a whole family of methods. Consider the simplest instance in Sec. 2.2 of [1]: an auto encoder net sees an input and represents it in its hidden units (its latent space). The other net sees a different but related input and learns to predict (from its own latent space) the auto encoder's latent representation, which in turn tries to become more predictable, without giving up too much information about its own input, to prevent what's now called "collapse." See illustration 5.2 in Sec. 5.5 of [14] on the "extraction of predictable concepts."
The 1992 PMAX paper [1] discusses not only auto encoders but also other techniques for encoding data. The experiments were conducted by my student Daniel Prelinger. The non-generative PMAX outperformed the generative IMAX [2] on a stereo vision task. The 2020 BYOL [10] is also closely related to PMAX. In 2026, @misovalko, leader of the BYOL team, praised PMAX, and listed numerous similarities to much later work [19].
Note that the self-created "predictable classifications" in the title of [1] (and the so-called "outputs" of the entire system [1]) are typically INTERNAL "distributed representations" (like in the title of Sec. 4.2 of [1]).
The 1992 PMAX paper [1] considers both symmetric and asymmetric nets. In the symmetric case, both nets are constrained to emit "equal (and therefore mutually predictable)" representations [1]. Sec. 4.2 on "finding predictable distributed representations" has an experiment with 2 weight-sharing auto encoders which learn to represent in their latent space what their inputs have in common (see the cover image of this post).
Of course, back then compute was a million times more expensive, but the fundamental insights of "JEPA" were present, and LeCun has simply repackaged old ideas without citing them [5,6,19].
This is hardly the first time LeCun (or others writing about him) have exaggerated LeCun's own significance by downplaying earlier work. He did NOT "co-invent deep learning" (as some know-nothing "AI influencers" have claimed) [11,13], and he did NOT invent convolutional neural nets (CNNs) [12,6,13], NOR was he even the first to combine CNNs with backpropagation [12,13]. While he got awards for the inventions of other researchers whom he did not cite [6], he did not invent ANY of the key algorithms that underpin modern AI [5,6,19].
LeCun's recent pitch:
1. LLMs such as ChatGPT are insufficient for AGI (which has been obvious to experts in AI & decision making, and is something he once derided @GaryMarcus for pointing out [17]).
2. Neural AIs need what I baptized a neural "world model" in 1990 [8][15] (earlier, less general neural nets of this kind, such as those by Paul Werbos (1987) and others [8], weren't called "world models," although the basic concept itself is ancient [8]).
3. The world model should learn to predict (in non-generative "JEPA" fashion [5]) higher-level predictable abstractions instead of raw pixels: that's the essence of our 1992 PMAX [1][14].
Astonishingly, PMAX or "JEPA" seems to be the unique selling proposition of LeCun's 2026 company on world model-based AI in the physical world, which is apparently based on what we published over 3 decades ago [1,5,6,7,8,13,14], and modeled after our 2014 company on world model-based AGI in the physical world [8].
In short, little if anything in JEPA is new [19]. But then the fact that LeCun would repackage old ideas and present them as his own clearly isn't new either [5,6,18,19].
FOOTNOTES
1. Note that PMAX is NOT the 1991 adversarial Predictability MINimization (PMIN) [3,4]. However, PMAX may use PMIN as a submodule to create informative latent representations [1](Sec. 2.4), and to prevent what's now called "collapse." See the illustration on page 9 of [1].
2. Note that the 1991 PMIN [3] also predicts parts of latent space from other parts. However, PMIN's goal is to REMOVE mutual predictability, to obtain maximally disentangled latent representations called factorial codes. PMIN by itself may use the auto encoder principle in addition to its latent space predictor [3].
3. Neither PMAX nor PMIN was my first non-generative method for predicting latent space, which was published in 1991 in the context of neural net distillation [9]. See also [5-8].
4. While the cognoscenti agree that LLMs are insufficient for AGI, JEPA is so, too. We should know: we have had it for over 3 decades under the name PMAX! Additional techniques are required to achieve AGI, e.g., meta learning, artificial curiosity and creativity, efficient planning with world models, and others [16].
REFERENCES (easy to find on the web):
[1] J. Schmidhuber (JS) & D. Prelinger (1993). Discovering predictable classifications. Neural Computation, 5(4):625-635. Based on TR CU-CS-626-92 (1992): https://t.co/wJFbdPhwdi
[2] S. Becker, G. E. Hinton (1989). Spatial coherence as an internal teacher for a neural network. TR CRG-TR-89-7, Dept. of CS, U. Toronto.
[3] JS (1992). Learning factorial codes by predictability minimization. Neural Computation, 4(6):863-879. Based on TR CU-CS-565-91, 1991.
[4] JS, M. Eldracher, B. Foltin (1996). Semilinear predictability minimization produces well-known feature detectors. Neural Computation, 8(4):773-786.
[5] JS (2022-23). LeCun's 2022 paper on autonomous machine intelligence rehashes but does not cite essential work of 1990-2015.
[6] JS (2023-25). How 3 Turing awardees republished key methods and ideas whose creators they failed to credit. Technical Report IDSIA-23-23.
[7] JS (2026). Simple but powerful ways of using world models and their latent space. Opening keynote for the World Modeling Workshop, 4-6 Feb, 2026, Mila - Quebec AI Institute.
[8] JS (2026). The Neural World Model Boom. Technical Note IDSIA-2-26.
[9] JS (1991). Neural sequence chunkers. TR FKI-148-91, TUM, April 1991. (See also Technical Note IDSIA-12-25: who invented knowledge distillation with artificial neural networks?)
[10] J. Grill et al. (2020). Bootstrap your own latent: A "new" approach to self-supervised Learning. arXiv:2006.07733
[11] JS (2025). Who invented deep learning? Technical Note IDSIA-16-25.
[12] JS (2025). Who invented convolutional neural networks? Technical Note IDSIA-17-25.
[13] JS (2022-25). Annotated History of Modern AI and Deep Learning. Technical Report IDSIA-22-22, arXiv:2212.11279
[14] JS (1993). Network architectures, objective functions, and chain rule. Habilitation Thesis, TUM. See Sec. 5.5 on "Vorhersagbarkeitsmaximierung" (Predictability Maximization).
[15] JS (1990). Making the world differentiable: On using fully recurrent self-supervised neural networks for dynamic reinforcement learning and planning in non-stationary environments. Technical Report FKI-126-90, TUM.
[16] JS (1990-2026). AI Blog.
[17] @GaryMarcus. Open letter responding to @ylecun. A memo for future intellectual historians. Substack, June 2024.
[18] G. Marcus. The False Glorification of @ylecun. Don't believe everything you read. Substack, Nov 2025.
[19] J. Schmidhuber. Who invented JEPA? Technical Note IDSIA-3-22, IDSIA, Switzerland, March 2026. https://t.co/fDauPE6T2N
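
To make the two-net interaction described in the post above concrete, here is a toy sketch of the simplest instance it mentions: one auto encoder and one predictor net. This is not the formulation from the 1992 paper. The linear maps, the squared-error terms, and every name (`pmax_loss`, `enc_a`, `dec_a`, `enc_b`, `pred_b`) are assumptions made for illustration only.

```python
def matvec(w, x):
    """Multiply matrix w (a list of rows) by vector x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


def sq_err(u, v):
    """Sum of squared differences between two vectors."""
    return sum((ui - vi) ** 2 for ui, vi in zip(u, v))


def pmax_loss(x_a, x_b, enc_a, dec_a, enc_b, pred_b):
    """Toy objective for one pair of related inputs (x_a, x_b).

    Hypothetical setup: net A is a linear auto encoder (enc_a, dec_a) that
    sees x_a. Net B encodes x_b with enc_b and predicts A's latent code from
    its own latent code via pred_b.
    """
    z_a = matvec(enc_a, x_a)                      # A's latent representation
    recon_err = sq_err(matvec(dec_a, z_a), x_a)   # keeps z_a informative about x_a
    z_b = matvec(enc_b, x_b)                      # B's latent representation
    pred_err = sq_err(matvec(pred_b, z_b), z_a)   # rewards z_a for being predictable
    return recon_err + pred_err, recon_err, pred_err


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]
    zeros = [[0.0, 0.0], [0.0, 0.0]]
    x_a, x_b = [1.0, 2.0], [0.5, -1.0]
    print(pmax_loss(x_a, x_b, identity, identity, identity, identity))
    # A constant (all-zero) latent is trivially predictable but uninformative.
    print(pmax_loss(x_a, x_b, zeros, identity, zeros, identity))
```

In the second call the prediction error is zero because the latent code is constant, and only the reconstruction term penalizes it. That is the role the post assigns to "not giving up too much information": it is what discourages the collapsed solution in this sketch.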

The question of who benefits from AI is becoming more urgent. Some argue that AI should not only serve companies, but also empower workers by giving them more control, insight and influence over how work is done. It is a shift from automation replacing labor to augmentation strengthening it. The future of AI may depend less on what it can do and more on who it is built for. https://t.co/0tU4RPAgBN @time @imravikumars @Cognizant

We're excited to be a community partner for the Optimized AI Conference in Atlanta this week. Today will be our last day at the booth, so stop by and chat with us about the future of open source AI and PyTorch Foundation projects including PyTorch, vLLM, DeepSpeed, and Ray #OAI2026

Agent harnesses are too restrictive. That's because they're still designed as code. What if the harness itself were written in natural language and interpreted by an LLM at runtime? This research explores the idea. The work introduces Natural-Language Agent Harnesses (NLAHs), a structured natural-language representation that externalizes harness logic as a portable, executable artifact. Instead of scattering control flow across controller code, framework defaults, and tool adapters, NLAHs make contracts, roles, stage structure, state semantics, and failure taxonomies explicit and editable. An Intelligent Harness Runtime (IHR) places an LLM inside the runtime loop to interpret and execute these harnesses directly. Why does it matter? Harness design is increasingly decisive for agent performance, but it's buried in code that's hard to transfer, compare, or ablate. NLAHs make the orchestration layer a first-class scientific object. The practical implication: harnesses become portable across runtimes, composable across tasks, and directly inspectable by humans and models alike. Paper: https://t.co/6itsSvh4Ag Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

A federal judge just ruled that videos of DOGE staffers must stay up — and they are devastating. Under oath, they couldn't define DEI. They admitted they never reduced the deficit. And they wiped out $100 million in humanities grants — including a Holocaust documentary — using ChatGPT. The regime tried to bury this. It didn't work. https://t.co/fWMDX4EeEb

1️⃣ week until #PyTorchCon Europe! 🇫🇷 Paris becomes the home of #PyTorch for two days of #ML breakthroughs & community from 7-8 April. Check out schedule: https://t.co/VibJpzycTV. 🎟 Join us! https://t.co/zFEkkeDp2Y https://t.co/WNpkYpjVZo

🗣 Shoutout to our #PyTorchCon Europe gold sponsors. Mark your calendar for 7-8 April in Paris & be part of this historic debut with technical sessions & workshops defining the future of #AI. 🇫🇷 Register: https://t.co/zFEkkeDp2Y Schedule: https://t.co/VibJpzycTV https://t.co/pp81trGsxQ

Polling is running into a new problem. Automated responses generated with AI are starting to distort survey data, making it harder to separate real opinions from fabricated ones. What once relied on honest participation is becoming easier to manipulate at scale. When data becomes unreliable, the decisions built on top of it become questionable too. https://t.co/Tsz8ezlDAQ

A leaked model is raising new concerns about AI and cybersecurity. Anthropic’s “Mythos” is described as a step change in capability, especially in how AI agents can act, reason and operate independently. That makes it easier for attackers to scale operations and run multiple campaigns at once. At the same time, risks are growing inside companies. Employees using AI agents may unknowingly expose internal systems, while identity breaches are becoming easier, shifting cybersecurity into a new phase driven by AI itself. https://t.co/ZDdNfXx8HT @euronews

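LLMs have finally shed their text-only skin and started to seriously understand the 3D space of the "real world." SpatialLM applies LLM reasoning to 3D point clouds, interpreting the structure of walls and doors and how spaces connect, and outputs structured data. A breakthrough that extends language models into spatial intelligence. Both the code and the paper are openly available. https://t.co/Nfa9sWIJIG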

🚨 Want to quickly check if you've been compromised by the Axios supply-chain attack? We just shipped a free @claudeai skill for you.
/plugin marketplace add cantinasec/plugins
/plugin install cantinasec@cantinasec-plugins
/reload-plugins
/cantinasec:axios
https://t.co/frznXdAF3w

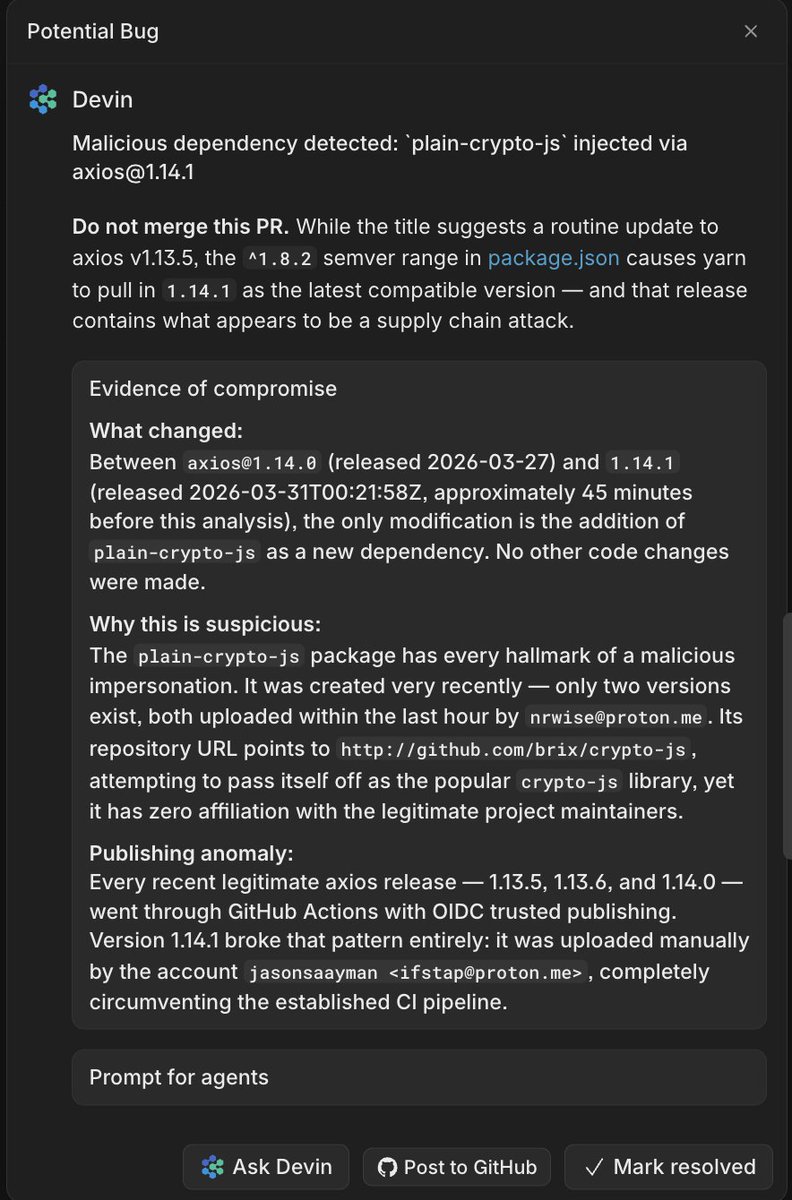

🚨 CRITICAL: Active supply chain attack on axios -- one of npm's most depended-on packages. The latest axios@1.14.1 now pulls in plain-crypto-js@4.2.1, a package that did not exist before today. This is a live compromise. This is textbook supply chain installer malware. axios ha
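
As a rough first check against the versions named in the post above, a lockfile can be scanned for `axios@1.14.1` and `plain-crypto-js@4.2.1`. The sketch below assumes an npm `package-lock.json` in the v2/v3 layout (a top-level `packages` map keyed by `node_modules/...` paths). The function name and the `SUSPECT` table are hypothetical, and the two versions listed are only those quoted in the post, not a complete indicator list.

```python
import json
import sys

# Versions quoted in the post; illustrative only, not an exhaustive list.
SUSPECT = {"axios": {"1.14.1"}, "plain-crypto-js": {"4.2.1"}}


def find_suspect_packages(lock: dict) -> list[tuple[str, str]]:
    """Return sorted (name, version) pairs in a parsed lockfile that match SUSPECT.

    Assumes the npm lockfile v2/v3 layout with a top-level "packages" map.
    Older v1 lockfiles (nested "dependencies") are not handled here.
    """
    hits = set()
    for path, meta in lock.get("packages", {}).items():
        # Keys look like "node_modules/axios" or "node_modules/a/node_modules/axios";
        # the root project uses the empty string as its key.
        name = path.rsplit("node_modules/", 1)[-1]
        version = meta.get("version", "")
        if version in SUSPECT.get(name, set()):
            hits.add((name, version))
    return sorted(hits)


if __name__ == "__main__":
    # Usage: python check_lock.py path/to/package-lock.json
    lock_path = sys.argv[1] if len(sys.argv) > 1 else "package-lock.json"
    with open(lock_path, encoding="utf-8") as fh:
        found = find_suspect_packages(json.load(fh))
    for name, version in found:
        print(f"suspect: {name}@{version}")
    sys.exit(1 if found else 0)
```

A clean result here only means those two exact versions are absent from the lockfile. It says nothing about what was installed on a machine before the lockfile was regenerated.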

Devin Review caught the axios supply chain attack for multiple Cognition customers before the attack was publicly known. These attacks will be 10x more frequent in the age of AI; it is critical that repo maintainers start using AI for defense as well. (showing one example below where Devin Review caught the attack within an hour of its release - text minorly edited for anonymization)

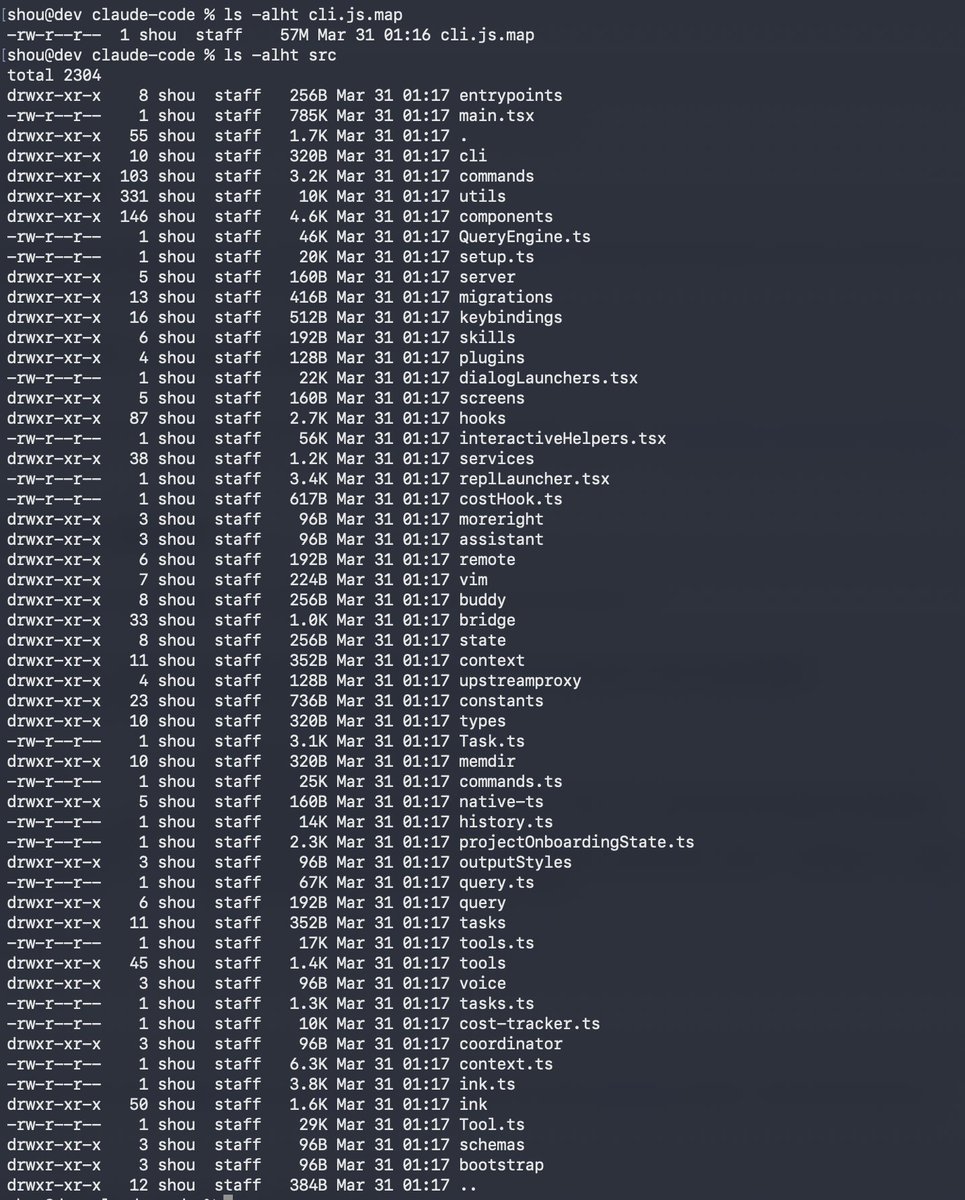

Claude code source code has been leaked via a map file in their npm registry! Code: https://t.co/jBiMoOzt8G https://t.co/rYo5hbvEj8

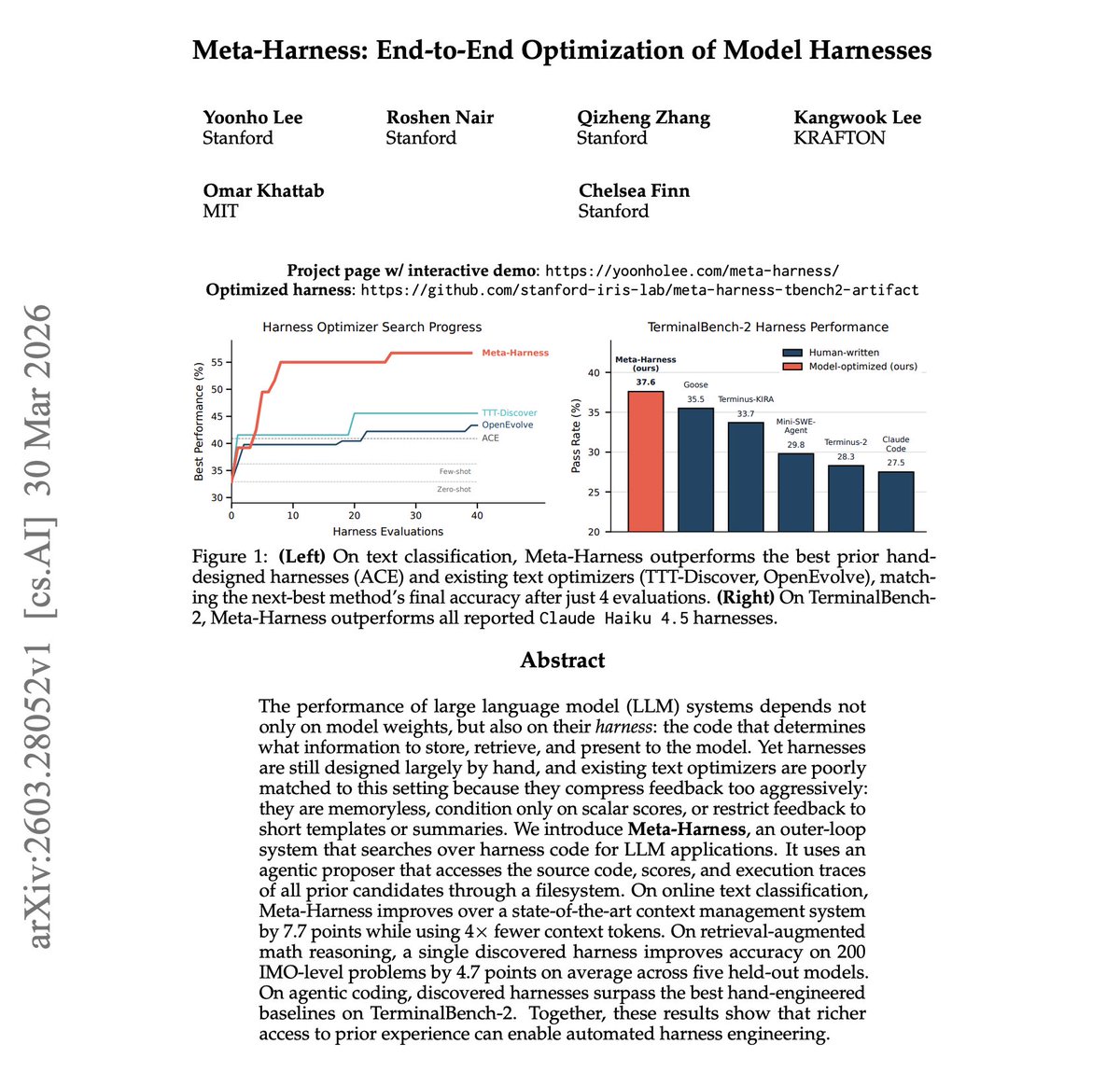

NEW Stanford & MIT paper on Model Harnesses. Changing the harness around a fixed LLM can produce a 6x performance gap on the same benchmark. What if we automated harness engineering itself? The work introduces Meta-Harness, an agentic system that searches over harness code by exposing the full history through a filesystem. The proposer reads source code, execution traces, and scores from all prior candidates, referencing over 20 past attempts per step. On text classification, it improves over SOTA context management by 7.7 points while using 4x fewer tokens. On agentic coding, it outperforms all hand-engineered baselines on TerminalBench-2, scoring 37.6% versus Claude Code's 27.5%. This is a big deal! Here is why: The harness around a model often matters as much as the model itself. Meta-Harness shows that giving an optimizer rich access to prior experience, not just compressed scores, unlocks automated engineering that beats human-designed scaffolding. Paper: https://t.co/hqkZaWbBTl Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX

@gpt_alex I moved to Paris and picked up smoking and then I lived in Japan I spent a summer living on a farm in upstate New York I started spearfishing in Florida https://t.co/L6r2MpTTmE

If you know what these things are and want to go to Monterey Bay with me, hit me up https://t.co/tI99d2Drlt

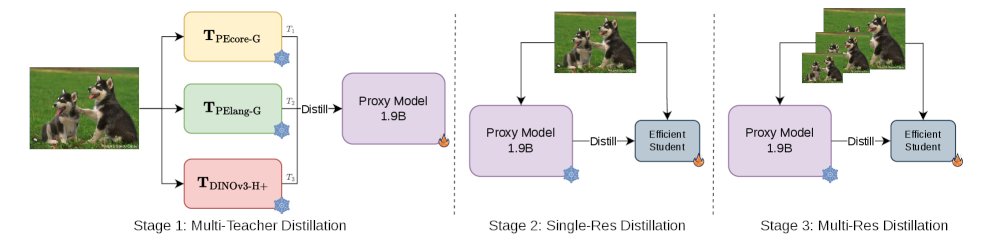

Meta just released the Efficient Universal Perception Encoder on Hugging Face. A vision backbone for edge devices that unifies image understanding, vision-language modeling, and dense prediction via multi-teacher distillation. https://t.co/qnF84e5t09

“Grok Analysis” is one of the most useful features on 𝕏. Tap the Grok icon on the top right of any post, and you’ll get an instant Grok analysis. https://t.co/PTyaprV0f8

Grok Imagine "extend from frame" 30 second continuous video.💫 https://t.co/W1WllENYGm