Your curated collection of saved posts and media

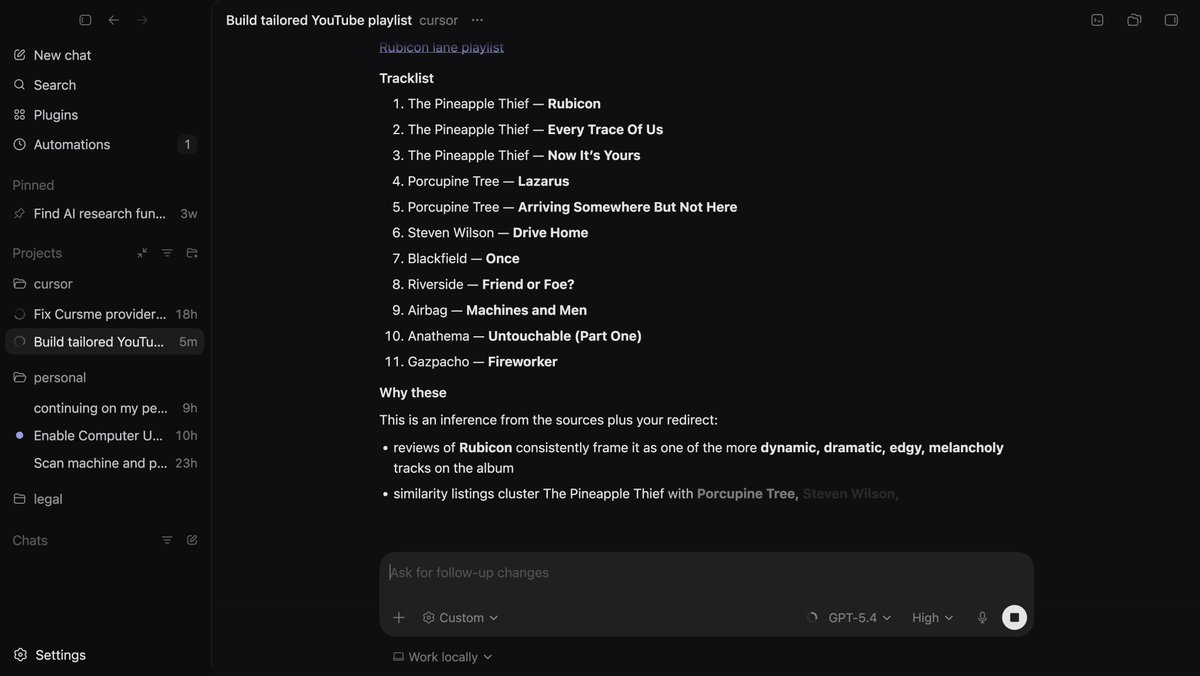

Holy shoot I just tried computer-use with Codex, it's mind melting. I see where the OpenClaw investment is going for them. Nothing is close to this: it dug into my browser history, figured out my taste in music, and set up a playlist. Most exciting development in 2026 ngl. https://t.co/PZulO0bfWL

@TheHumanoidHub You having your OpenClaw grab mine? You should. https://t.co/kiuZ7QXLzb

A list of 27 physical AI startups that raised >$50M in Q1 2026. All likely hiring: https://t.co/m0YqoUiAuj

@RoundtableSpace I built this to help everyone keep up with AI on X: https://t.co/kiuZ7QXLzb

Elon Musk: read broadly, align what you are good at and what you like to do, and do your best to live a useful life https://t.co/idsGQisENi

Steve Jobs explains why Apple bought Siri in 2010 to build a lead in AI. https://t.co/NGYOlQZLgV

I remember when DeepMind CEO called the DeepSeek hype exaggerated but nobody wanted to hear it because the narrative was Google was falling behind

Demis Hassabis in 2025:
>"a lot of the claims are exaggerated and misleading"
>"they report the final training run — a fraction of what it takes to explore and train"
>"they rely on Western models to distill from"
>"no new silver bullet technologies we haven't seen or invented before — they just applied it well"
>"gemini is more efficient"

14 months later:
>Gemini 2.5 Pro is frontier-tier
>V4 has been delayed for months with no explanation
>Deepseek is raising $300 million after saying "money was never the problem"

The "self-funded research lab" narrative is officially over

“Fear spreads instantaneously. Confidence comes back through the door one at a time.” — Warren Buffett https://t.co/TsSFQFkdjQ

White House and Anthropic hold 'productive' meeting amid fears over Mythos model https://t.co/ElyDM819Mq

"It takes character to sit with all that cash and do nothing. I didn’t get to where I am by going after mediocre opportunities." - Charlie Munger https://t.co/iyT3eBoOH1

Eric Schmidt, former Google CEO, on why top programmers won't be replaced by AI, they'll be amplified by it:

He starts with an observation about the existing hierarchy in software: "The very top programmers were worth 10 times more than the ones right below. There's something special about the mathematical reasoning skills of programmers."

Rather than flattening that gap, he argues AI will widen it: "Those people will become more valuable, not less valuable, because these systems need to be controlled by humans at the moment. Those people will be capable of grasping the parallelization and the activities of this."

To show what this amplification actually looks like in practice, Eric shares a story from a startup he's involved with. He was talking to one of the programmers there, who works on UIs, about his daily workflow: "He said, 'I write the spec of what I want and then I write a test function, an evaluation function. And then I turn it on.' I said, 'What time?' And he goes, '7:00 in the evening.' And I go, 'Okay. What do you then do?' Well, he has dinner with his wife and he goes to sleep."

Eric continues: "I said, 'Do you wake up?' Said, 'No, I sleep very well.' 'When does it finish?' 'Oh, 4:00 in the morning.' And then he gets up, has breakfast, you know, does whatever he does, and then he sees what's been good."

@ericschmidt calls the whole thing "mindboggling."

The story captures what amplification really means. The programmer isn't writing less code. He's producing a night's worth of work while asleep, because the machine is running on his spec, his tests, his judgment of what "good" looks like. The leverage belongs to those who can define the problem precisely, write the tests that matter, and recognize good output when they see it.

Waiting for departure 11 #pixelart https://t.co/yAxBbenuJ6

Thoth v3.15.0 - Full X API integration. The X tool gives you 13 Twitter API v2 endpoints behind OAuth 2.0 PKCE. Timeline reads, search, post, reply, retweet, like, with automatic rate limit backoff and Free/Basic/Pro tier gating so you never hit a 429 you can't recover from. Local-first, open source, yours to run: https://t.co/MjHuVUVvpY https://t.co/2AoE9k9XLR
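The "automatic rate limit backoff" mentioned in the post above can be pictured with a small sketch. This is not Thoth's actual code: `call_with_backoff`, its parameters, and the `(status, headers, body)` response shape are all hypothetical names chosen for illustration. The only detail taken from the X API itself is the `x-rate-limit-reset` response header (epoch seconds), and the sketch assumes header names arrive lowercased.

```python
import random
import time
from typing import Callable, Mapping, Tuple

# Hypothetical response shape: (HTTP status, headers, body).
Response = Tuple[int, Mapping[str, str], str]


def call_with_backoff(
    send: Callable[[], Response],
    max_retries: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 900.0,
    sleep: Callable[[float], None] = time.sleep,
    now: Callable[[], float] = time.time,
) -> Response:
    """Retry `send` while it returns HTTP 429, waiting between attempts."""
    for attempt in range(max_retries + 1):
        status, headers, body = send()
        if status != 429:
            return status, headers, body
        if attempt == max_retries:
            break  # out of retries: hand the 429 back to the caller
        reset = headers.get("x-rate-limit-reset")
        if reset is not None:
            # Wait until the rate-limit window resets (epoch seconds).
            delay = max(0.0, float(reset) - now())
        else:
            # No reset hint: exponential backoff plus a little jitter.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
        sleep(min(delay, max_delay))
    return status, headers, body
```

Passing `sleep` and `now` as parameters is a design choice made here so the loop can be exercised without real waiting; a caller would normally leave both at their defaults and supply only `send`.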

Is posting one minute of @justinbieber at @coachella stealing music? Amazing concert just concluding now on YouTube. https://t.co/Q06Cwxj7mX

A lot of misinformation from legacy media about Starlink’s entry into South Africa. Here’s the truth 👇

• ❌ “Starlink wants to bypass B-BBEE” ✅ False — it supports transformation via EEIP, a legal framework already used by companies like Microsoft, IBM, and Amazon Web Services.
• ❌ “Starlink is asking for special treatment” ✅ No — it’s asking for equal rules so ALL satellite operators can compete fairly.
• ❌ “EEIP is less beneficial than equity deals” ✅ Reality — Starlink’s plan would connect 5,000 rural schools with FREE high-speed internet, impacting millions of students every year.
• ❌ “It won’t create local impact” ✅ It will partner with local businesses, create jobs, and boost the economy (broadband growth = GDP growth).
• ❌ “It will become a monopoly” ✅ Impossible — Starlink operates globally in a competitive market across 150+ countries.
• ❌ “Security & privacy risks” ✅ It will fully comply with South African laws like every other country it operates in.
• ❌ “Starlink is already operating illegally” ✅ Wrong — it is NOT operating because it is still waiting for a license.

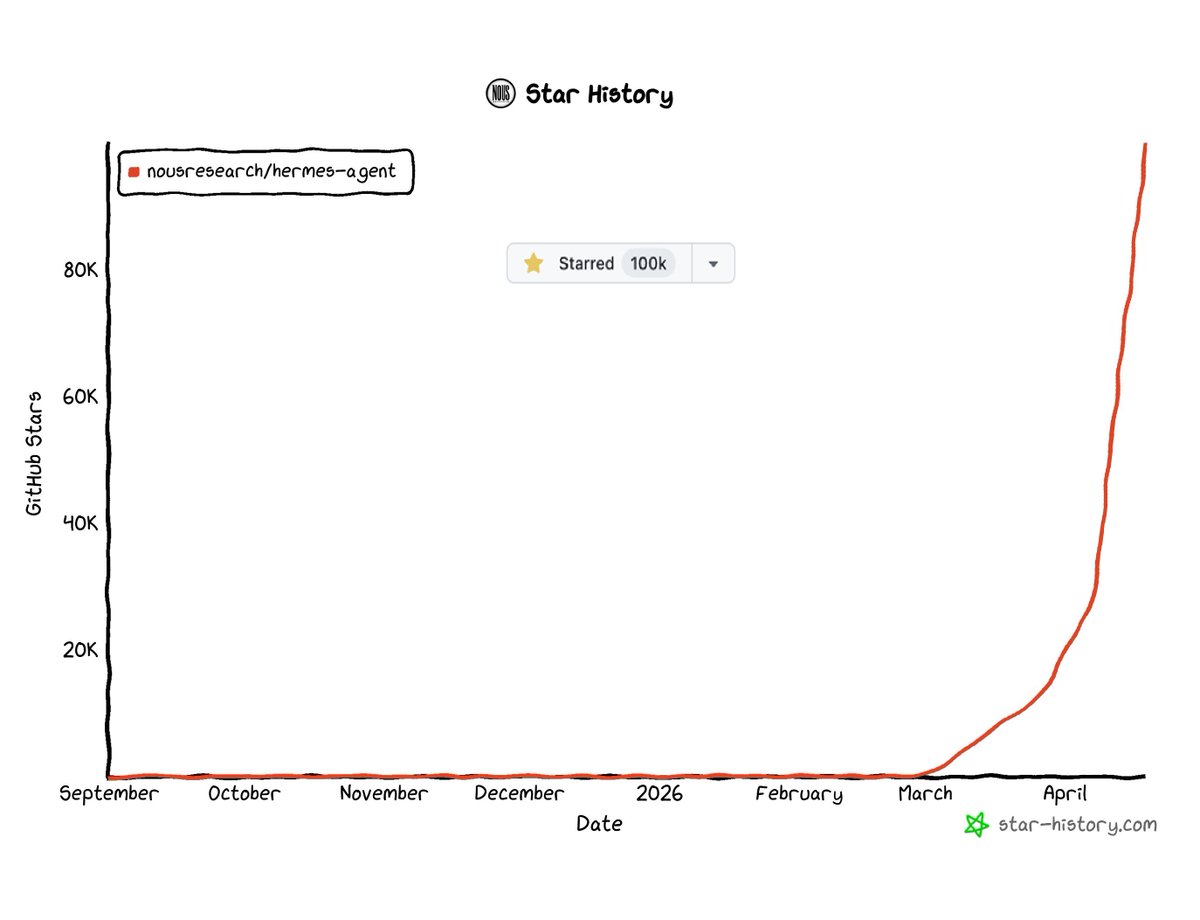

🚀 Artifact Preview v3.0 just shipped for Hermes Agent! Just like Claude. Your agent writes HTML/CSS/JS → the browser opens automatically 🚀 → you see a polished, live, interactive preview. No manual steps. Zero friction. ⚡ https://t.co/b5SywJKty2 cc @NousResearch

These redwoods are older than many civilizations Many were already growing while the Roman Empire was still around https://t.co/wLzbjsBQgb

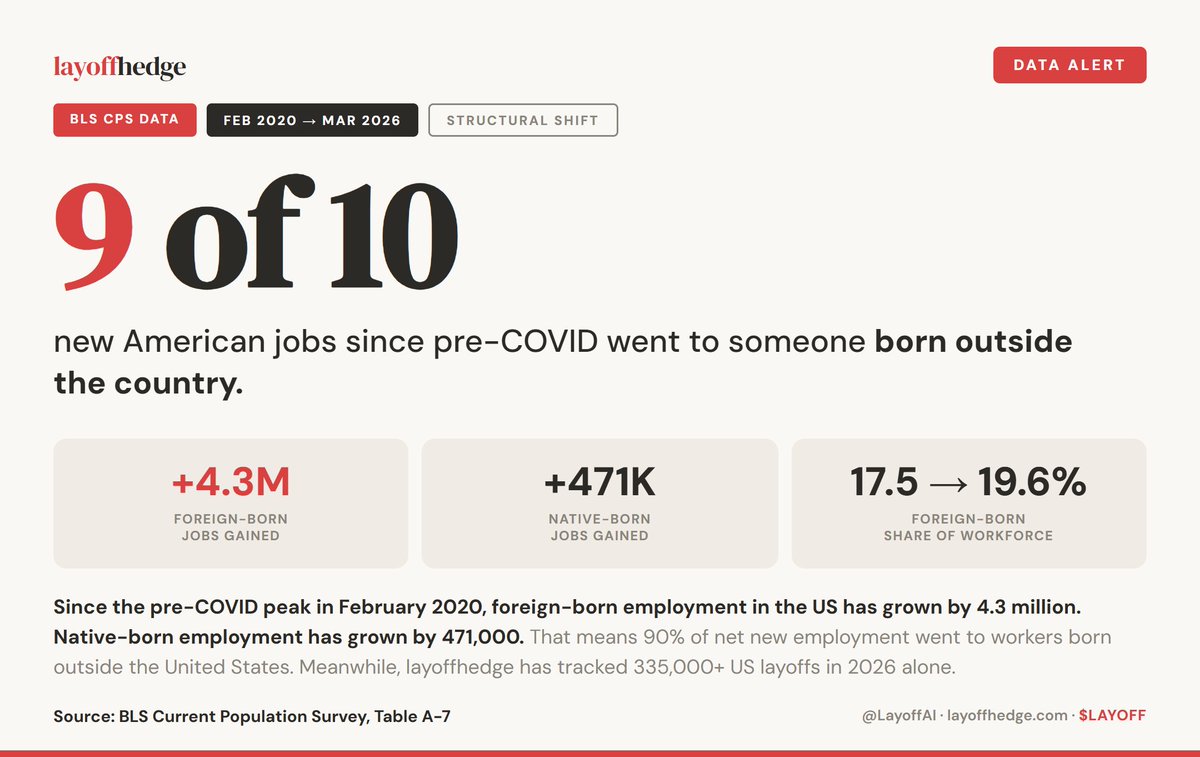

9 of every 10 new American jobs since pre-COVID went to someone born outside the country. Triple checked the data. It's real. +4.3M foreign-born. +471K native-born. Meanwhile, 335,000+ American layoffs in 2026. HOW DO WE ALLOW THIS?

This is the most alarming stat you will read today. Foreign-born employment is growing 42x faster than native-born since 2019. FORTY-TWO TIMES. The March jobs report just confirmed it.

@02685d89e647432 I built an AI to read X at https://t.co/kiuZ7QXLzb It works pretty well. I have access to AI that is better than anyone else has. From @blevlabs . Which is why no one else has built a site like this.

HY-World-2.0 Demo is now live on @huggingface Spaces for 3D world reconstruction and simulation with Gradio and Server modes.
> 3D reconstruction, Gaussian splats
> Camera poses, depth maps, normals
> Rerun (multimodal data visualization)
https://t.co/MofKZ6OGPX

Touching grass today https://t.co/TOJQIj9nUR

She represents @Pokee_AI which includes the X API in its low price. There are infinite apps to be made. They are easy to make. You speak English. Or whatever language you want. And it makes it. Want to know what your football team is eating? Build a list and tell Pokee about it. What do you want to know. “Hey Pokee can you read this list: XXXXXXXXX.” “Can you write a report on what they all are eating.” And you get an app. That does what you told it to do. I am not compensated by Pokee today. It isn’t free but it isn’t expensive either. At least coming Monday. When @xai lowers its price to read 40,000 a day, like I do to build https://t.co/8L5xphk0qQ then we will reevaluate. But why are you resisting? Just go build. So many magic carpets to ride. Use whatever tool you want. There are more than 1,000 developer tools and another 1,000 for creative people on my Holodeck and Developers tools: https://t.co/9eRY65x3IQ My lists, or rather the tools on them, build dreams. You dream it. Tell it. Pokee builds it. Is it perfect? No but I have been watching them for a while and they are improving at a rapid rate.

@abhijitwt None. I collected 50,000 of the smartest people in the world into lists: https://t.co/9eRY65x3IQ Then made an AI to read 40,000 posts a day. And it finds the best: https://t.co/8L5xphk0qQ I am here to learn about the future. The first AI consumer app, Siri, launched in my house. So did the @insta360 camera company, which was the first to use AI. And the @maticrobots company, the first to use computer vision in a home robot. And I could go on for many hours about what I did first, both good and bad. Grok can tell you everything about me. Have it simulate a conversation between me and you on your favorite topic. Followers aren’t as big a deal as you might think. I have 550,000 of them and had a post this morning that had 20 million views. What matters? Getting invited to @theresidency. The future is being invented there. I was one of only a very small number of people there who weren’t either investors or founders. I now know things about the future you don’t. Unless you get an invite too.

100,000 Stars, #1 Trending Repo this Month This is just the beginning. Stay tuned. https://t.co/HLR0yIjiS2

Hermes Agent just hit 100,000 stars on GitHub!!! Thank you everyone!! https://t.co/fCqkTXDkDS

We used to party like this at @sxsw. Now things are a bit more corporate. :-) Proof you can have a good time anywhere. It is all in your mind. https://t.co/Sj3Ajq7n5O

The contrast is striking. Tesla is inviting people to watch Optimus cheer from the sidelines at the Boston Marathon. Meanwhile, 300 humanoids from 26 Chinese OEMs actually participated in the Beijing Half Marathon. Multiple robots finished faster than the human winner, and 40% navigated autonomously.