Your curated collection of saved posts and media

@hexyn7 Every time an agent makes a tool call, it has to send back the whole chat history. So if you are at 50k tokens, each tool call from there sends another 50k tokens. The majority of these are input tokens, and cached. Input tokens usually cost 5x less than output tokens, and cached input tokens cost 90% less than regular input tokens in most cases.
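
A rough sketch of the arithmetic in the post above, not any provider's actual billing logic. The step size (1,000 new tokens per tool call), the price ($3 per million input tokens), and the assumption that everything sent on the previous call is a cache hit on the next one are all hypothetical; only the 50k starting context and the 90% cached discount come from the post.

```python
def input_tokens_per_call(start_context, tokens_per_step, steps):
    """Input tokens sent on each tool call when the full history is resent."""
    return [start_context + i * tokens_per_step for i in range(steps)]


def input_cost(start_context, tokens_per_step, steps, price_per_mtok,
               cached_discount=0.0):
    """Input-token cost in dollars over `steps` tool calls.

    Assumption: the prefix sent on the previous call is a cache hit and is
    billed at (1 - cached_discount) of the normal input price; only the
    tokens added since then are billed at full price.
    """
    cost = 0.0
    previous = 0  # nothing is cached before the first call
    for context in input_tokens_per_call(start_context, tokens_per_step, steps):
        fresh = context - previous
        cached = previous
        billable = fresh + cached * (1.0 - cached_discount)
        cost += billable * price_per_mtok / 1_000_000
        previous = context
    return cost


# Hypothetical run: 10 tool calls starting from a 50k-token history.
total_tokens = sum(input_tokens_per_call(50_000, 1_000, 10))  # 545000
without_cache = input_cost(50_000, 1_000, 10, price_per_mtok=3.0)
with_cache = input_cost(50_000, 1_000, 10, price_per_mtok=3.0,
                        cached_discount=0.90)
print(total_tokens, round(without_cache, 4), round(with_cache, 4))
```

Under these assumptions, ten tool calls resend over half a million input tokens in total, and caching removes most of that cost because only the newly added tokens are billed at the full rate.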

@hexyn7 @Max_brandkernel What tools do you find unnecessary? Run `hermes tools` to disable whichever you want, and `hermes skills config` to disable whatever you want. Auxiliary models are cheaper than the main agent model in most cases; you can set them to dirt-cheap ones if you want.

This 2-hour Stanford lecture breaks down how models like ChatGPT and Claude are actually built, clearer than what many people in top AI roles ever get exposed to. Save this and set aside two hours today. It might end up being the most valuable thing you learn all week. https://t.co/5u97uZCWxd

AI FOOTBALL ANALYSIS. A FULL COMPUTER VISION SYSTEM. BUILT ON YOLO, OPENCV, AND PYTHON. You upload a regular match video. No sensors, no GPS trackers, just camera footage. The neural network finds every player, referee, and ball on its own. Every frame, in real time. KMeans clustering breaks down jersey colors pixel by pixel. The system splits players into teams automatically. Without a single manual hint. Optical Flow tracks camera movement. Separates it from player movement. Perspective Transformation converts pixels into real meters. Speed of every player. Distance covered. Ball possession percentage. All calculated automatically. Four hours of tutorial from zero to a working system. The model is trained on real Bundesliga matches. Runs on a regular GPU. Python code - take it and run. Sports analytics is no longer behind closed doors. AI leveled the playing field.
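
A minimal sketch of the "pixels into real meters" step described above, not the tutorial's actual code. It assumes a 3x3 perspective (homography) matrix is already available; in an OpenCV pipeline such a matrix could come from a call like `cv2.getPerspectiveTransform`, but the matrix, pixel positions, and frame rate used here are made up for illustration.

```python
import math


def apply_homography(H, x, y):
    """Map a pixel (x, y) to pitch coordinates in meters using a 3x3 matrix H."""
    u = H[0][0] * x + H[0][1] * y + H[0][2]
    v = H[1][0] * x + H[1][1] * y + H[1][2]
    w = H[2][0] * x + H[2][1] * y + H[2][2]
    return u / w, v / w  # divide by w to leave homogeneous coordinates


def speed_kmh(H, pixel_start, pixel_end, frames_apart, fps):
    """Player speed between two detections that are `frames_apart` frames apart."""
    x0, y0 = apply_homography(H, *pixel_start)
    x1, y1 = apply_homography(H, *pixel_end)
    meters = math.hypot(x1 - x0, y1 - y0)
    seconds = frames_apart / fps
    return meters / seconds * 3.6  # m/s to km/h


# Hypothetical matrix: a pure scale of 0.05 m per pixel, no perspective skew.
H = [[0.05, 0.0, 0.0],
     [0.0, 0.05, 0.0],
     [0.0, 0.0, 1.0]]

# A player moves 50 pixels (about 2.5 m) in 12 frames of 24 fps video.
print(round(speed_kmh(H, (100, 200), (140, 230), frames_apart=12, fps=24), 2))
```

A real broadcast view would need a matrix with genuine perspective terms, and the camera motion estimated by optical flow would have to be subtracted from the pixel positions before this conversion.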

Marin is using quantile balancing from @Jianlin_S (who developed RoPE, which was also a good idea) to train our current 1e23 FLOPs MoE. The idea is elegant: assigning tokens to experts by solving a linear program. No hyperparameters to tune. Yields stable training.

Researchers' brilliant ideas often get lost in the sea of endless SOTA claims on weak baselines. At Marin we battle-test ideas in an open arena, where anyone's idea can be promoted to the next hero run. One that recently rose up was @Jianlin_S MoE Quantile Balancing, used in our

See all the gory details on GitHub: https://t.co/CfUbhtcBOp and follow along on wandb: https://t.co/UWU00HPknJ

Thanks @_akhaliq for sharing our work! Can frontier Multimodal Agents play games as well as humans? 🤩We are excited to introduce 🎮GameWorld: towards standardized and verifiable evaluation for multimodal game agents. 🕹️ 34 browser games 📌 170 tasks 🤖 18 multimodal agent baselines, covering 1. Computer-use (CUA) agents 👉 raw keyboard + mouse actions 2. Generalist multimodal agents 👉 semantic action parsing. GameWorld shows that even SOTA agents still perform far below novice human players. 📹Watch our live runs: https://t.co/wrhKJD9JVx 🌐project page: https://t.co/J906LQ6Sfj 💻github: https://t.co/W1vL99MDg5 work done with @OuyyyangMingyu @who_s_yuan Hwee Tou Ng, @MikeShou1

I recently gave some talks on PointWorld. In this latest version, I discussed: Why world models? Why 3D? Why it matters amidst scaling data in robotics? Why it’s a missing side of the coin for “The Bitter Lesson”? (It’s more than just a better backbone for training policies) https://t.co/oGhLvuyB6B

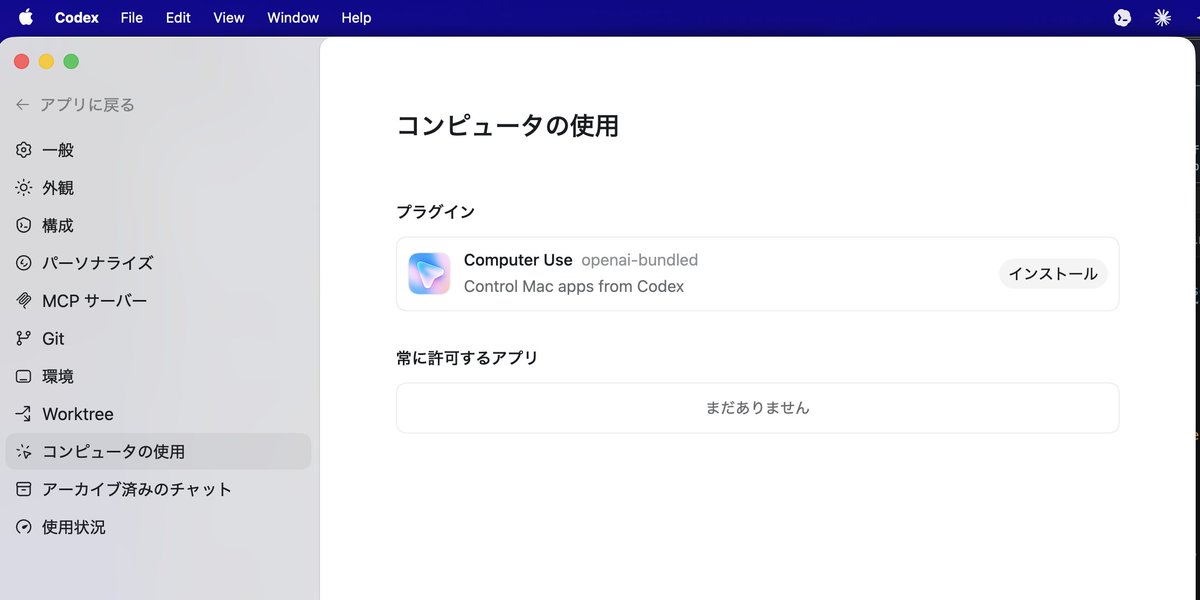

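[Breaking] OpenAI ships a major update to Codex!! ・Computer use ・In-app browser ・Image generation/editing ・90+ new plugins (Atlassian / GitLab / Microsoft, etc.) ・Memory ・Automatic resumption of long-running tasks (continuing work across days to weeks) Rolling out gradually starting today 👇 https://t.co/CzmZ1MOUOT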

The macOS version of the Codex Desktop app now has a "Computer Use" feature, so I'm going to install it and try it out. https://t.co/xBU891BSUa

Let me break down why @NousResearch Tool Gateway is so important for agents. Right now, if you want - Image generation - Text to Speech - Browser automation - Web scraping - LLM or any of the 100s of other tools, you need to sign up with each provider and get an API key. Dozens of API keys and billing accounts to manage. It's very annoying. With Nous Tool Gateway you get ALL of these tools set up AUTOMATICALLY through your one Nous account. Switch your agent to a Nous plan and cancel your laundry list of APIs. Sign up: https://t.co/3XhGYKH7AC

Tool Gateway is now live in Nous Portal. No separate accounts, no API key juggling. All you need is one subscription, and everything works. A paid Nous Portal subscription now includes access to 300+ models and a growing set of third-party tools. Launching with: → Web scraping

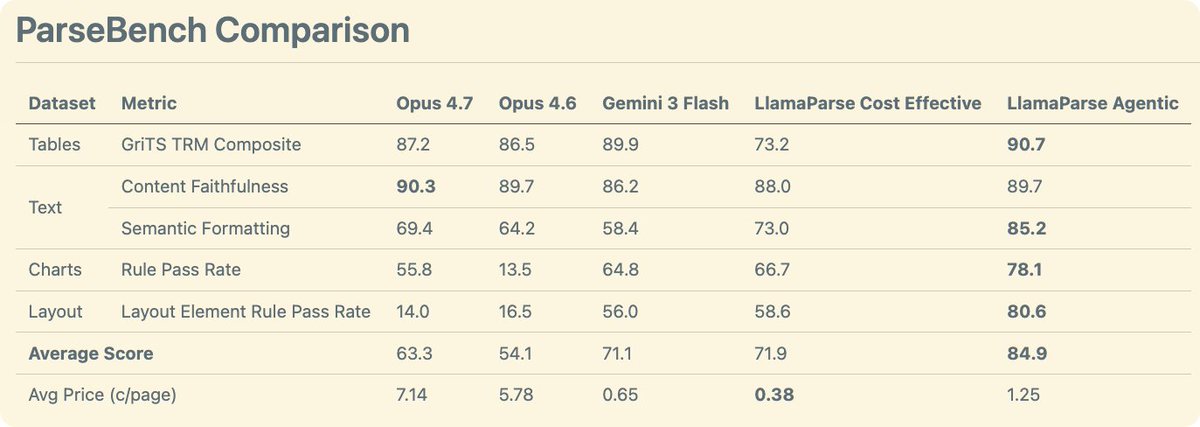

We comprehensively benchmarked Opus 4.7 on document understanding. We evaluated it through ParseBench - our comprehensive OCR benchmark for enterprise documents where we evaluate tables, text, charts, and visual grounding. The results 🧑‍🔬: - Opus 4.7 is a general improvement over Opus 4.6. It has gotten much better at charts compared to the previous iteration - Opus 4.7 is quite good at tables, though not quite as good as Gemini 3 Flash - Opus 4.7 wins on content faithfulness across all techniques (including ours) - Using Opus 4.7 as an OCR solution is expensive at ~7c per page!! For comparison, our agentic mode is 1.25c and cost-effective is ~0.4c by default. Take a look at these results and more on ParseBench! https://t.co/tYiSOMbd6p
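
To put the per-page prices quoted above in perspective, here is a small back-of-the-envelope sketch. The per-page figures are the approximate ones from the post; the 10,000-page workload and the function name are hypothetical.

```python
# Approximate cents per page, as quoted in the post ("~7c", "1.25c", "~0.4c").
CENTS_PER_PAGE = {
    "Opus 4.7 as OCR": 7.0,
    "agentic mode": 1.25,
    "cost-effective mode": 0.4,
}


def cost_usd(pages, cents_per_page):
    """Dollar cost of parsing `pages` pages at a given per-page price in cents."""
    return pages * cents_per_page / 100


pages = 10_000  # hypothetical workload
for name, cents in CENTS_PER_PAGE.items():
    print(f"{name}: ${cost_usd(pages, cents):,.2f}")

# How many times more expensive the priciest option is than the cheapest.
ratio = CENTS_PER_PAGE["Opus 4.7 as OCR"] / CENTS_PER_PAGE["cost-effective mode"]
print(f"ratio: about {ratio:.1f}x")
```

Because two of the three prices are stated only approximately, the resulting totals are rough estimates rather than exact quotes.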

THREAD 1/7 Every AI benchmark a lab bragged about this year is compromised. not because labs are cheating. because the game itself is broken.

Codex writes your code → Codex ships it on Render. We built a Codex plugin with @OpenAI that lets you deploy, debug, and monitor your entire stack on Render, without leaving your flow. Just type @render https://t.co/xrgcCksKRj

Codex for (almost) everything. It can now use apps on your Mac, connect to more of your tools, create images, learn from previous actions, remember how you like to work, and take on ongoing and repeatable tasks. https://t.co/UEEsYBDYfo

@ChanPerco The general public is missing an important dimension to judge AI models: operational costs. Compute time is the silent variable that the press and benchmarks ignore. A model that spends 48h of inference to reach what another hits in seconds simply doesn’t show up in today’s data. Yet that’s exactly what would reveal whether the approach is economically viable. Claims of “recursive self-improvement” are mostly bounded optimization over fixed support. LLMs aren’t open-ended learners here: they’re function approximators resampling the same distribution. That alone locks in diminishing returns ≠ takeoff. Every agent loop or test-time compute burns tokens and FLOPs. Benchmarks show the wins. They rarely show the avg@k reality: how many background runs it actually took. Businesses don’t have unlimited VC capital to burn on tokens. The moment the subsidised token pot goes dry, the whole hotdog stand may crash and burn from its own operation. Real organisations optimise for return on spend, not “best output no matter the price.” Technical gains plateau fast while inference costs scale linearly with every extra loop. So you hit two ceilings at once: → Technical: diminishing returns from bounded optimisation → Economic: compounding costs for shrinking gains There’s no free compounding flywheel. You’re trading ever-more compute for incremental refinement, and that trade stops making sense long before takeoff. Your agentic AI workforce looks magically self-sustaining. Right up until the bill arrives and you are forced to close shop.

@saprmarks AI doesn’t have a self, hidden intentions or beliefs. It’s easy to project human traits onto it, but that’s metaphor, not reality. The industry has normalised aspirational narratives while downplaying limitations. Full breakdown: https://t.co/gKLjtn12uz

@theo Works when you trigger ultrathink mode (I know they deprecated it) but somehow reasoning effort is higher now like xHigh. Might be a bug…

Thanks @_akhaliq for sharing our work! If you are interested in multimodal agents on open-web search, please see our thread for more details: https://t.co/uh9rr6hFcZ

MERRIN A Benchmark for Multimodal Evidence Retrieval and Reasoning in Noisy Web Environments paper: https://t.co/UZpJdGxIxY https://t.co/ZmRa2TcuAu

Dogfooding Opus 4.7 the last few weeks, I've been feeling incredibly productive. Sharing a few tips to get more out of 4.7 🧵

Next Tuesday 12pm EST: @erikdunteman will break down the custom agent harness we launched with Modal sandboxes + @OpenAIDevs Agent SDK. Sandboxes, parallel coding agents, context mgmt, and more. Register here: https://t.co/HAIsKAJY6I

Yesterday we launched our custom agent harness built for parallel background coding tasks, built on @modal sandboxes and @OpenAIDevs Agent SDK. I'll be talking in greater depth about harness design, sandboxes, context management, and more this Tuesday, link below https://t.co/mY

We need more challenging benchmarks to test long-horizon coding capabilities. FrontierSWE looks like a nice new set of tasks to test out your best coding agents or harnesses.

Introducing FrontierSWE, an ultra-long horizon coding benchmark. We test agents on some of the hardest technical tasks like optimizing a video rendering library or training a model to predict the quantum properties of molecules. Despite having 20 hours, they rarely succeed http

Anthropic says Opus 4.7 hits 80.6% on Document Reasoning — up from 57.1%. But "reasoning about documents" ≠ "parsing documents for agents." We ran it on ParseBench. → Charts: 13.5% → 55.8% (+42.3) — huge → Formatting: 64.2% → 69.4% (+5.2) → Content: 89.7% → 90.3% (+0.6) → T

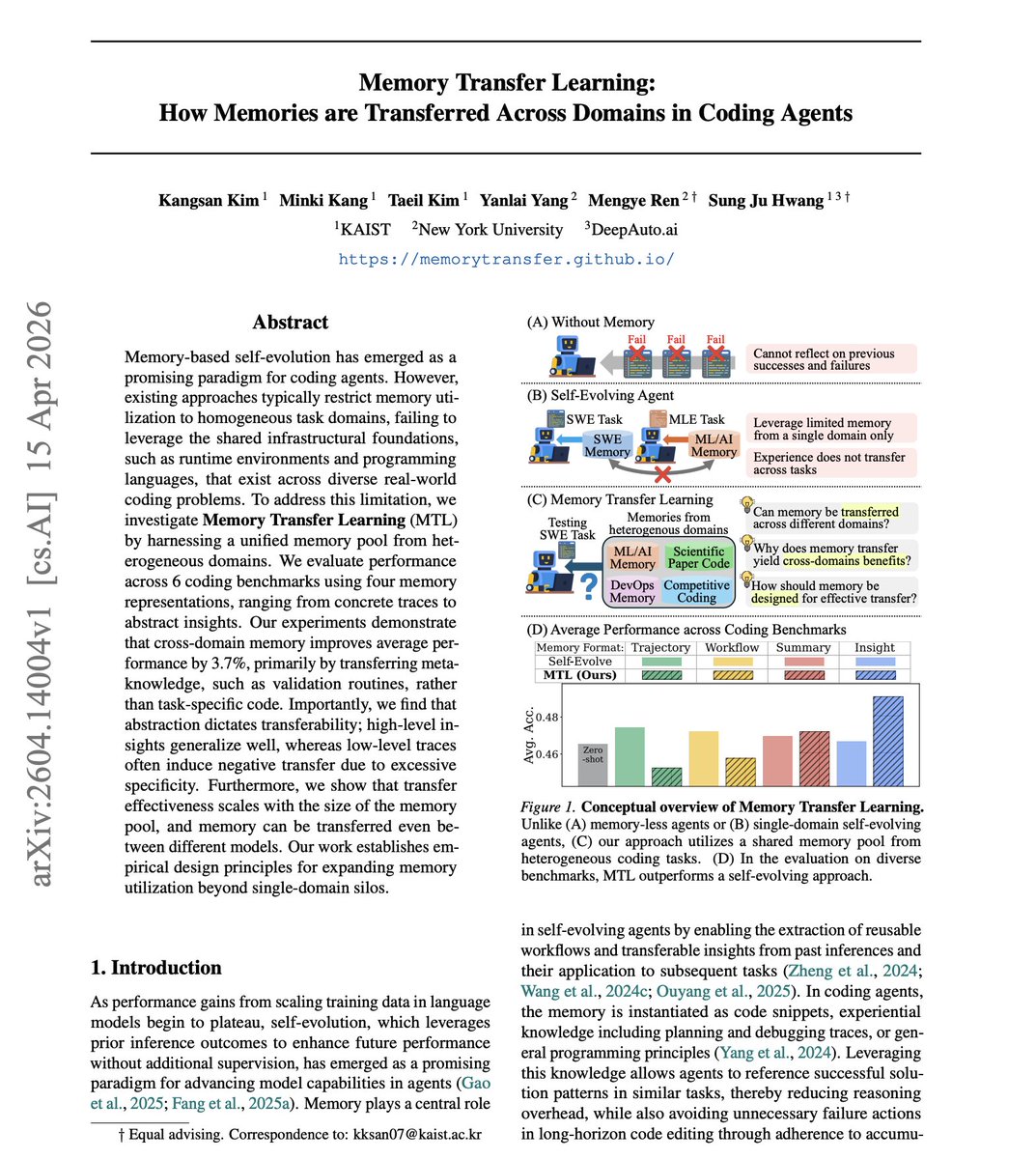

Coding agents learn from experience, but that knowledge stays locked in silos. Solve a thousand SWE tasks, and none of that wisdom helps with competitive coding. What if memories could transfer across domains? The work introduces Memory Transfer Learning, a framework where coding agents share a unified memory pool across 6 heterogeneous benchmarks. They test four memory formats ranging from raw execution traces to high-level insights, and find that cross-domain memory improves average performance by 3.7%. Why does it matter? The transferable value isn't task-specific code. It's meta-knowledge: validation routines, structured action workflows, safe interaction patterns with execution environments. Algorithmic strategy transfer accounts for only 5.5% of the gains. The real benefit comes from procedural guidance on how to act, not what to code. Abstraction dictates transferability: high-level insights generalize well, while low-level execution traces often cause negative transfer by anchoring agents to incompatible implementation details. Paper: https://t.co/XPD5kczsoZ Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c

@emollick Hey Ethan! Sean here, PM on https://t.co/KZTlPpbqBQ - thanks for the feedback. This isn't a router, this is the model being trained to decide when to think based on the context -- we've been running this for a while in Sonnet 4.6 in https://t.co/KZTlPpbqBQ as well as Claude Code. Understood that it's not tuned perfectly in https://t.co/3Rk7wAMA7D yet - we're sprinting on tuning this more internally and should have some updates here shortly. Feel free to DM us examples of queries where you expected thinking and didn't see it

🤯 Wild day in AI — three major releases all dropped today! Anthropic's Claude Opus 4.7, OpenAI's big Codex update (computer use, built-in browser, image gen, memory), and Alibaba's open-weights Qwen. Which one are you most excited by?

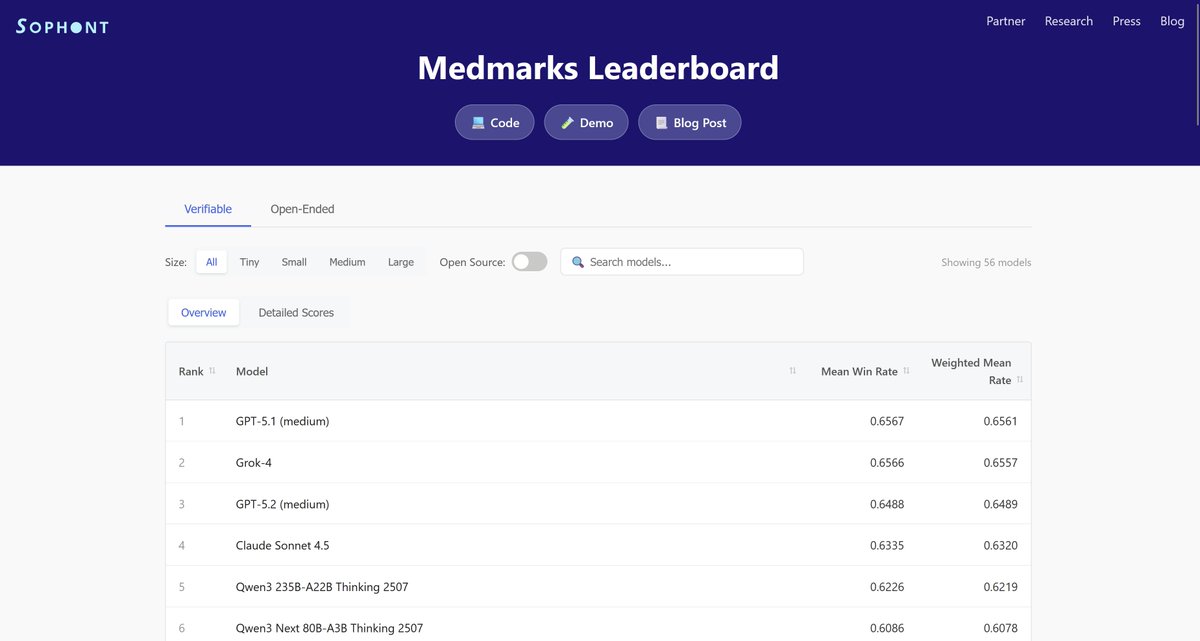

We do!! @SophontAI has released the Medmarks benchmark suite, which is the largest completely open-source automated evaluation suite for medical capabilities. (new version coming soon) We'd love to help any frontier lab evaluate their model using our suite! https://t.co/ACNe1b9Vko

@iScienceLuvr Does Sophont have, or is it building, its own bench?

With Codex computer use + the Mac's iPhone Mirror app, GPT can use any app on your phone!!! Seems less accurate with clicks vs. actual Mac desktop apps, but it does work! What's the best app to use this with?! https://t.co/44BbT9UJie

Because computer use in Codex doesn't take over your own cursor, Codex can work in the background and you can truly cursor max! 🔥

brb, cursor-maxxing https://t.co/gryiF1MCik

We partnered with @ProximalHQ to run five frontier coding agents on a hard task: rebuild the full Wan 2.1 text-to-video pipeline on MAX (no PyTorch, no diffusers) in 20 hours as part of their new Frontier-SWE benchmark. Two nearly pulled it off. Every model understood the architecture. The agents that produced results kept debugging numerical errors layer by layer. Several others just tried to smuggle in a torch import. Full report: https://t.co/Se2MJ4ySok

Introducing GPT-Rosalind, our frontier reasoning model built to support research across biology, drug discovery, and translational medicine. https://t.co/PubLU0FkSv