
Marin is using quantile balancing from @Jianlin_S (who developed RoPE, which was also a good idea) to train our current 1e23 FLOPs MoE. The idea is elegant: assigning tokens to experts by solving a linear program. No hyperparameters to tune. Yields stable training.
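
A rough sketch of why a quantile shows up when token-to-expert assignment is posed as a linear program. This is not the method from the post, whose details are not given here. It assumes top-1 routing with equal expert capacity, and every name in it (`balance_sweep`, `dual_objective`, the synthetic scores) is hypothetical. Under those assumptions the LP's dual has one bias per expert, and minimizing it one expert at a time sets that bias to a quantile of the expert's score margins.

```python
import random

def route(scores, bias):
    """Top-1 routing: each token picks the expert with the highest biased score."""
    experts = range(len(bias))
    return [max(experts, key=lambda e: row[e] - bias[e]) for row in scores]

def expert_loads(scores, bias):
    """Number of tokens routed to each expert under the given biases."""
    counts = [0] * len(bias)
    for e in route(scores, bias):
        counts[e] += 1
    return counts

def dual_objective(scores, bias, capacity):
    """Dual of the balanced-assignment LP as a function of per-expert biases."""
    best = sum(max(s - b for s, b in zip(row, bias)) for row in scores)
    return best + capacity * sum(bias)

def balance_sweep(scores, bias, capacity):
    """One pass of coordinate descent: reset each expert's bias to a quantile."""
    num_experts = len(bias)
    for e in range(num_experts):
        # Margin of expert e over the best biased alternative, per token.
        margins = sorted(
            (row[e] - max(row[j] - bias[j] for j in range(num_experts) if j != e)
             for row in scores),
            reverse=True,
        )
        # Any bias between the capacity-th and (capacity+1)-th largest margin
        # gives expert e exactly `capacity` tokens (ignoring ties).
        bias[e] = 0.5 * (margins[capacity - 1] + margins[capacity])
    return bias

# Synthetic router scores (an assumption): expert 0 is artificially favoured.
rng = random.Random(0)
NUM_TOKENS, NUM_EXPERTS = 64, 4
CAPACITY = NUM_TOKENS // NUM_EXPERTS
scores = [[rng.gauss(0.0, 1.0) + (2.0 if e == 0 else 0.0) for e in range(NUM_EXPERTS)]
          for _ in range(NUM_TOKENS)]

bias = [0.0] * NUM_EXPERTS
print("loads before:", expert_loads(scores, bias))
for _ in range(5):
    balance_sweep(scores, bias, CAPACITY)
print("loads after: ", expert_loads(scores, bias))
```

Each coordinate update is an exact minimizer of the dual in that coordinate, so the dual objective never increases. The expert updated last always ends a sweep exactly at capacity, while the others are only approximately balanced until the sweeps settle.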

Researchers' brilliant ideas often get lost in the sea of endless SOTA claims on weak baselines. At Marin we battle-test ideas in an open arena, where anyone's idea can be promoted to the next hero run. One that recently rose up was @Jianlin_S MoE Quantile Balancing, used in our

@breath_mirror @kaiostephens @DJLougen @bstnxbt yeah, that one works as well. doesn't need the minor PR to handle qwen3_5_text rather than the multimodal wrapper. ~2x-4x speedup (my laptop got way too hot and throttled)

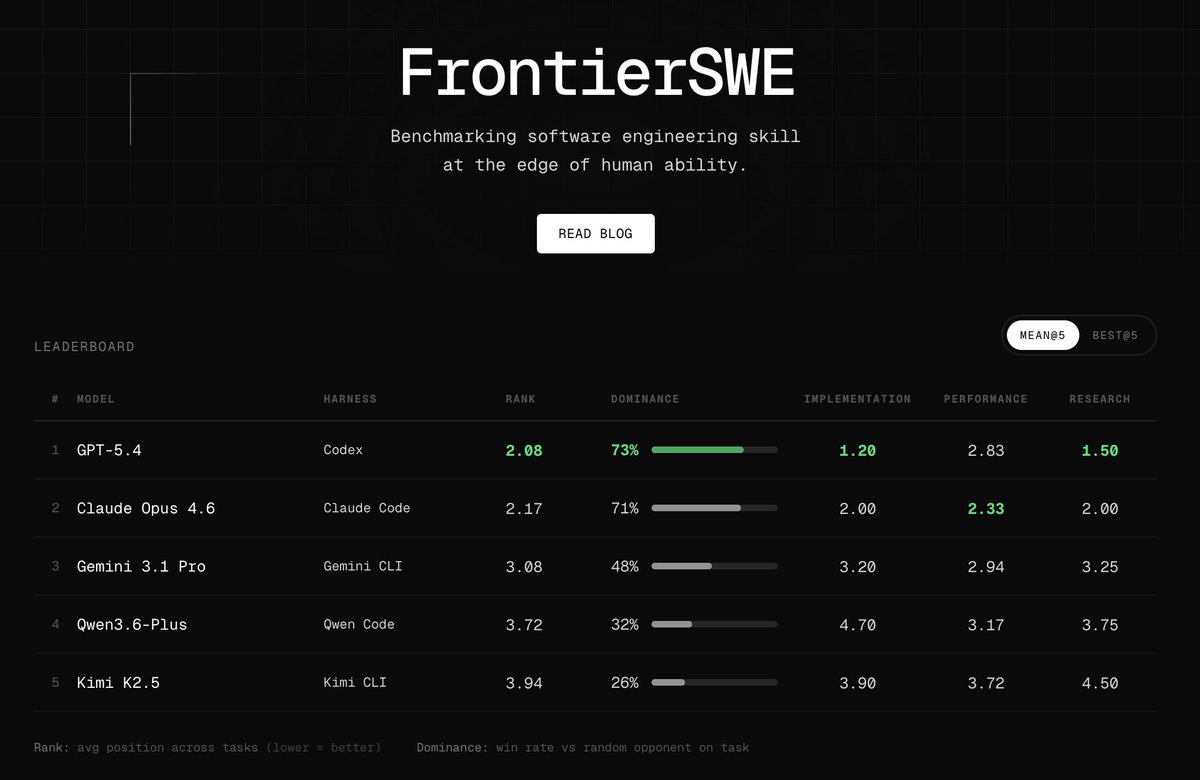

Introducing FrontierSWE, an ultra-long horizon coding benchmark. We test agents on some of the hardest technical tasks like optimizing a video rendering library or training a model to predict the quantum properties of molecules. Despite having 20 hours, they rarely succeed https://t.co/xbqHJRZiPZ

@crypto_fyy @googlegemma @arena We're working on optimizing KV Cache!

Training Qwen2.5-0.5B-Instruct on Reddit post summarization with GRPO on my 3x Mac Minis, trying combinations of quality rewards with a length penalty! Completed all of the following reward combinations:
- METEOR + BLEU
- BLEU + ROUGE-L
- METEOR + ROUGE-L

All the code and wandb charts in the comments

---

Setup: 3x Mac Minis in a cluster running MLX. One node drives training using GRPO, two push rollouts via vLLM. Trained two variants:
- length penalty only (baseline)
- length penalty + quality reward (BLEU, METEOR and/or ROUGE-L)

---

Eval: LLM-as-a-Judge (gpt-5). Used DeepEval to build a judge pipeline scoring each summary on 4 axes:
- Faithfulness: no hallucinations vs. source
- Coverage: key points captured
- Conciseness: shorter, no redundancy
- Clarity: readable on its own
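
A minimal sketch of what "quality reward plus length penalty" could look like. The post does not say how the two terms are combined, so the weighting, the `max_tokens` budget, and the function names are assumptions. ROUGE-L is simplified here to a whitespace-token LCS F1. The group-normalized advantage at the end is the standard GRPO step of scoring each rollout relative to its own group.

```python
import statistics

def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, start=1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]

def rouge_l_f1(candidate, reference):
    """Simplified ROUGE-L: F1 over the LCS of whitespace tokens."""
    cand, ref = candidate.split(), reference.split()
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)

def combined_reward(candidate, reference, max_tokens=8, length_weight=0.5):
    """Quality reward minus a penalty for tokens beyond a (hypothetical) budget."""
    overflow = max(0, len(candidate.split()) - max_tokens)
    return rouge_l_f1(candidate, reference) - length_weight * overflow / max_tokens

def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: each reward normalized within its rollout group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]

reference = "the cat sat on the mat"
rollouts = [
    "the cat sat on the mat",
    "the cat sat on the mat and then it slept all day long",
    "a dog barked",
]
rewards = [combined_reward(r, reference) for r in rollouts]
print(rewards)
print(group_advantages(rewards))
```

In this toy group the exact, short summary gets the highest reward, while the padded one loses on both precision and the length term.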

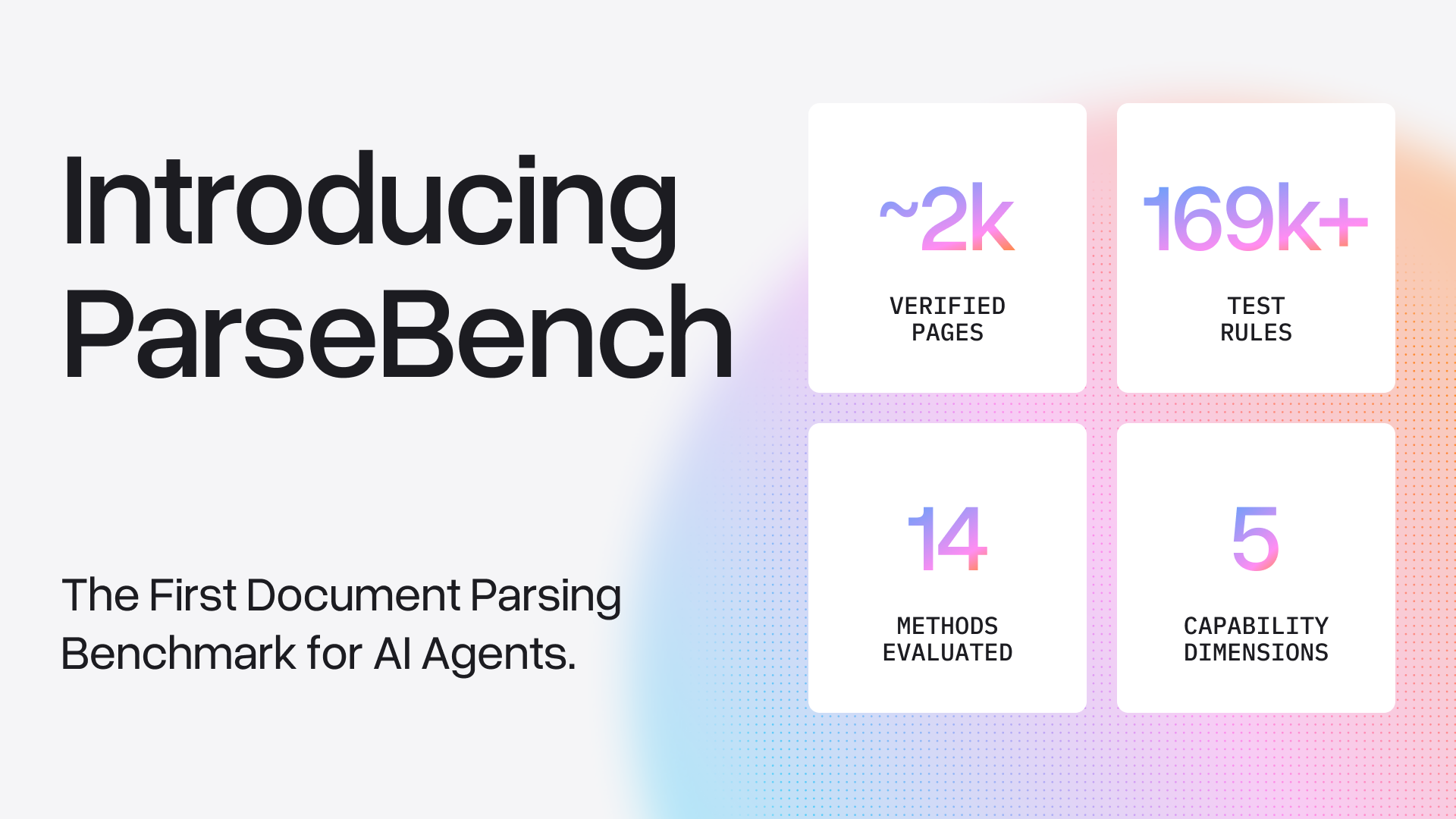

We’re open sourcing the first document OCR benchmark for the agentic era, ParseBench.

Document parsing is the foundation of every AI agent that works with real-world files. ParseBench is a benchmark that measures parsing quality specifically for agent knowledge work:
✅ It optimizes for semantic correctness (instead of exact similarity)
✅ It has the most comprehensive distribution of real-world enterprise documents

It contains ~2,000 human-verified enterprise document pages with 167,000+ test rules across five dimensions that matter most: tables, charts, content faithfulness, semantic formatting, and visual grounding.

We benchmarked 14 known document parsers on ParseBench, from frontier/OSS VLMs to specialized parsers to LlamaParse. Here are some of our findings:
💡 Increasing compute budget yields diminishing returns - Gemini/gpt-5-mini/haiku gain 3-5 points from minimal to high thinking, at 4x the cost.
💡 Charts are the most polarizing dimension for evaluation. Most specialized parsers score below 6%, while some VLM-based parsers do a bit better.
💡 VLMs are great at visual understanding but terrible at layout extraction. GPT-5-mini/haiku score below 10% on our visual grounding task, all specialized parsers do much better.
💡 No method crushes all 5 dimensions at once, but LlamaParse achieves the highest overall score at 84.9%, and is the leader in 4 out of the 5 dimensions.

This is by far the deepest technical work that we’ve published as a company. I would encourage you to start with our blog and explore our links to Hugging Face to GitHub. All the details are in our full 35-page (!!) ArXiv whitepaper.

🌐 Blog: https://t.co/57OHkx0pQW
📄 Paper: https://t.co/Ho2oH2xEAM
💻 Code: https://t.co/6P7UxqOZYA
📊 Dataset: https://t.co/YguIXWm41j
🎥 YouTube: https://t.co/6Fh1Nsk9ei

@IgorCarron @LightOnIO ColBERT-Zero matching larger models on public data alone is impressive. Late interaction remains underappreciated. Token-level matching preserves what dense pooling compresses away. Tested this myself on retrieval tasks, the precision gains are real.
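
To make the "token-level matching preserves what dense pooling compresses away" point concrete, here is a toy sketch of ColBERT-style late interaction (MaxSim) next to mean-pooled cosine similarity. The 2-d vectors are made up for illustration, and the function names are hypothetical rather than any library's API.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

def maxsim_score(query_vecs, doc_vecs):
    """Late interaction: each query token keeps its best-matching document token."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)

def pooled_score(query_vecs, doc_vecs):
    """Dense baseline: mean-pool each side into one vector, then cosine similarity."""
    def mean_pool(vecs):
        return [sum(col) / len(vecs) for col in zip(*vecs)]
    return dot(normalize(mean_pool(query_vecs)), normalize(mean_pool(doc_vecs)))

s = math.sqrt(0.5)
query = [[1.0, 0.0], [0.0, 1.0]]   # two distinct query tokens
doc_a = [[1.0, 0.0], [0.0, 1.0]]   # contains an exact match for each query token
doc_b = [[s, s], [s, s]]           # only "average-looking" tokens

print("pooled:", pooled_score(query, doc_a), pooled_score(query, doc_b))
print("maxsim:", maxsim_score(query, doc_a), maxsim_score(query, doc_b))
```

After pooling, both documents collapse to the same direction and the dense score cannot tell them apart, while MaxSim still ranks the document with exact token matches higher.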

New course: Spec-Driven Development with Coding Agents, built in partnership with @jetbrains, and taught by @paulweveritt.

Vibe coding is fast, but often produces code that doesn't match what you asked for. This short course teaches you spec-driven development: write a detailed spec defining what to build, and work with your coding agent to implement it.

Many of the best developers already build this way. A spec lets you control large code changes with a few words, preserve context across agent sessions, and stay in control as your project grows in complexity.

Skills you'll gain:
- Write a detailed specification to define your mission, tech stack, and roadmap, giving your agent the context it needs from the start
- Plan, implement, and validate features in iterative loops using a spec as your agent's guide
- Apply the same repeatable workflow to both new and legacy codebases
- Package your workflow into a portable agent skill that works across agents and IDEs

Join and write specs that keep your coding agent on track! https://t.co/hI4GwuvhtN

LLM agents loop, drift, and get stuck on hard reasoning tasks up to 30% of the time. Current fixes are either too blunt (hard step limits) or too expensive (LLM-as-judge adding 10-15% overhead per step). New research proposes a smarter middle ground.

The work introduces the Cognitive Companion, a parallel monitoring architecture with two variants: an LLM-based monitor and a novel Probe-based monitor that detects reasoning degradation from the model's own hidden states at zero inference overhead.

The Probe-based Companion trains a simple logistic regression classifier on hidden states from layer 28. It reads the model's internal representations during the existing forward pass, requiring no additional model calls. A single matrix multiplication is all it takes to flag when reasoning quality is declining.

Why does it matter? The LLM-based Companion reduced repetition on loop-prone tasks by 52-62% with roughly 11% overhead. The Probe-based variant achieved a mean effect size of +0.471 with zero measured overhead and AUROC 0.840 on cross-validated detection.

But the results also reveal an important nuance: companions help on loop-prone and open-ended tasks while showing neutral or negative effects on structured tasks. Models below 3B parameters also struggled to act on companion guidance at all.

This suggests the future isn't universal monitoring but selective activation, deploying cognitive companions only where reasoning degradation is a real risk.

Paper: https://t.co/K2vqDADwU8
Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
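
A minimal sketch of the probe idea, assuming nothing about the paper's actual code: a logistic regression trained on hidden-state vectors labelled healthy (0) or degraded (1), then applied as a single dot product per step. The "hidden states" below are synthetic 8-d Gaussians rather than real layer-28 activations, and all names are hypothetical.

```python
import math
import random

def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def probe_score(weights, bias, hidden_state):
    """Probability that this hidden state comes from degraded reasoning."""
    return sigmoid(sum(w * h for w, h in zip(weights, hidden_state)) + bias)

def train_probe(states, labels, lr=0.1, epochs=100):
    """Full-batch gradient descent on the logistic loss."""
    dim, n = len(states[0]), len(states)
    weights, bias = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for h, y in zip(states, labels):
            err = probe_score(weights, bias, h) - y
            for i in range(dim):
                grad_w[i] += err * h[i]
            grad_b += err
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def make_synthetic_states(n, dim, rng):
    """Stand-in data: degraded states are shifted by +1 per dimension, healthy by -1."""
    states, labels = [], []
    for i in range(n):
        label = i % 2
        shift = 1.0 if label else -1.0
        states.append([shift + rng.gauss(0.0, 1.0) for _ in range(dim)])
        labels.append(label)
    return states, labels

rng = random.Random(0)
train_states, train_labels = make_synthetic_states(200, 8, rng)
test_states, test_labels = make_synthetic_states(200, 8, rng)
weights, bias = train_probe(train_states, train_labels)

correct = sum((probe_score(weights, bias, h) > 0.5) == bool(y)
              for h, y in zip(test_states, test_labels))
print("held-out accuracy:", correct / len(test_labels))
```

At inference time only `probe_score` runs, which is why a probe of this kind adds essentially no cost on top of a forward pass that already produced the hidden state.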

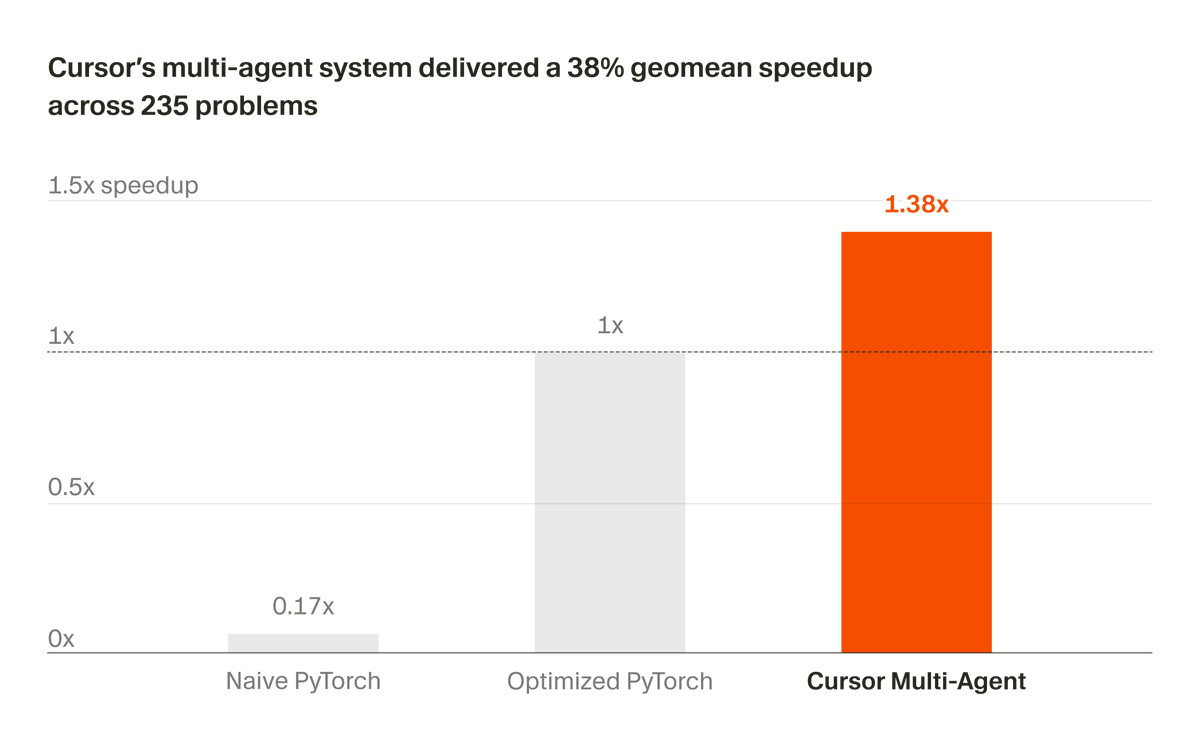

We've been developing a multi-agent system that builds and maintains complex software autonomously. Recently, we partnered with NVIDIA to apply it to optimizing CUDA kernels. In 3 weeks, it delivered a 38% geomean speedup across 235 problems. https://t.co/0YvbXrzVfe
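
For readers unfamiliar with the metric, a small sketch of how a geomean speedup is typically computed: the geometric mean of per-problem ratios of baseline time to optimized time, so a 38% geomean speedup corresponds to a mean ratio of 1.38. The timings below are invented for illustration and are not from the post.

```python
import math

def geomean_speedup(baseline_times, optimized_times):
    """Geometric mean of per-problem speedup ratios (baseline / optimized)."""
    ratios = [b / o for b, o in zip(baseline_times, optimized_times)]
    return math.exp(sum(math.log(r) for r in ratios) / len(ratios))

# One problem gets 2x faster, the other 2x slower: the geomean reports no net gain,
# whereas the arithmetic mean of the ratios (1.25) would suggest a 25% speedup.
baseline = [10.0, 10.0]
optimized = [5.0, 20.0]
print(geomean_speedup(baseline, optimized))
```

The geometric mean is the usual choice for ratios because a 2x regression cancels a 2x improvement instead of being outweighed by it.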