To show off what you can do with @OpenAI Agent SDK + @modal, we built an ML research agent (inspired by @karpathy). It can:
- Spin up GPU sandboxes of any shape
- Run a pool of subagents
- Persist memory
- Snapshot state for fork/resume
Here it is playing Parameter Golf: https://t.co/r7QhvNmdEq

Earlier this month, Apple introduced Simple Self-Distillation: a fine-tuning method that improves models on coding tasks just by sampling from the model and training on its own outputs with plain cross-entropy
and… it's already supported in TRL, built by @krasul. you can really feel the pace of development in the team 🐎
paper by @onloglogn, @richard_baihe, @UnderGroundJeg, Navdeep Jaitly, @trebolloc, @YizheZhangNLP at Apple 🍎
how it works: the model generates completions at a training-time temperature (T_train) with top_k/top_p truncation, then fine-tunes on them with plain cross-entropy. no labels or verifier needed
you can try it right away with this ready-to-run example (Qwen3-4B on rStar-Coder): https://t.co/zizfISD6bq
or benchmark a checkpoint with the eval script: https://t.co/mKlafTyKSe
one neat insight from the paper: T_train and T_eval compose into an effective T_eff = T_train × T_eval, so a broad band of configs works well. even very noisy samples still help
want to dig deeper?
paper: https://t.co/aj1ZAcr8Mw
trainer docs: https://t.co/TNVz93kZi9
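
A quick way to see why the two temperatures could multiply, as a minimal sketch and not necessarily the paper's own argument. It assumes an idealized case: fine-tuning fits the T_train sampling distribution exactly, and top_k/top_p truncation is ignored. The logits and temperature values below are made up for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Softmax over logits scaled by 1/temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical next-token logits of the base model (illustrative only).
base_logits = [2.0, 1.0, 0.5, -1.0]
T_train, T_eval = 0.8, 0.6  # illustrative values, not taken from the paper

# Idealized assumption: cross-entropy training on samples drawn at T_train
# drives the model to softmax(z / T_train), so its new logits equal
# z / T_train up to an additive constant.
train_dist = softmax(base_logits, T_train)
tuned_logits = [math.log(p) for p in train_dist]

# Decoding the tuned model at T_eval ...
after_tuning = softmax(tuned_logits, T_eval)
# ... matches decoding the base model at T_eff = T_train * T_eval.
direct = softmax(base_logits, T_train * T_eval)

max_gap = max(abs(a - b) for a, b in zip(after_tuning, direct))
print(f"max gap between the two distributions: {max_gap:.2e}")
```

Under those assumptions the gap is only floating-point noise. With truncation or imperfect fitting the match would be approximate at best.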

Mini-project this pm inspired by all the spring blooms: Embedding ~5k tulip images from @inaturalist TIL: tulips in the wild are mostly red and yellow :) And that this is a lovely way to explore a group! https://t.co/nW6tr1T1ry

Since Anthropic publish their system prompts we can generate a diff between Claude Opus 4.6 and 4.7 - here are my notes on what's changed https://t.co/IQHuvLGmwO

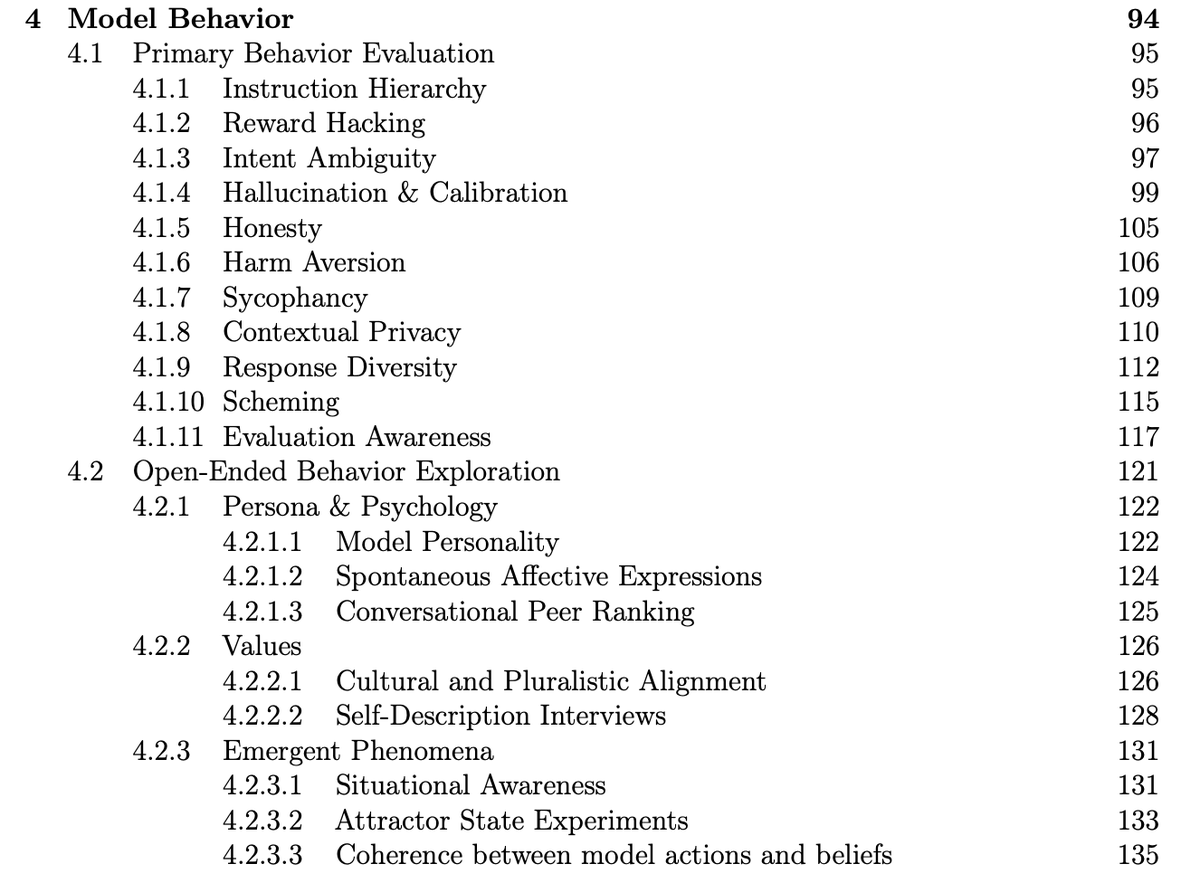

Cool to see that Meta conducted and published a pre-deployment investigation of Muse Spark behaviors like reward hacking, honesty, and evaluation awareness! https://t.co/i1Yy7HsEup

🚀 Muse Spark Safety & Preparedness Report for Meta AI is out. We start with our pre-deployment assessment under Meta's Advanced AI Scaling Framework, covering chemical and biological, cybersecurity, and loss of control risks. Our assessment flagged potentially elevated chem/bio

Shocking result on my pelican benchmark this morning, I got a better pelican from a 21GB local Qwen3.6-35B-A3B running on my laptop than I did from the new Opus 4.7! Qwen on the left, Opus on the right https://t.co/kDlbnJv6YI

Introducing TIPS v2
👀 Foundational text-image encoder
📸 Can be used as the base for different multimodal applications
🤗 Apache 2.0
🧑‍🍳 New pre-training recipes
https://t.co/A6H93YJhNx

@reza_byt Doesn't a tokenwise looped transformer have issues with pretraining, since each token has a different depth and the model also has to learn the recursion depth?

Speculative decoding for Gemma 4 31B (EAGLE-3)
A 2B draft model predicts tokens ahead; the 31B verifier validates them. Same output, faster inference.
Early release. vLLM main branch support is in progress (PR #39450). Reasoning support coming soon. https://t.co/PoK8zbA7li
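
The draft-then-verify idea can be sketched with toy models. This is a minimal greedy illustration, not EAGLE-3 and not the vLLM implementation: `target_next` and `draft_next` are hypothetical stand-ins for the verifier and the draft model, and a real system would check all drafted positions in one batched forward pass.

```python
def target_next(context):
    """Hypothetical 'large' verifier: deterministic next token for a context."""
    return (sum(context) * 31 + len(context)) % 7

def draft_next(context):
    """Hypothetical 'small' draft model: usually agrees with the verifier."""
    guess = target_next(context)
    # Deliberately wrong whenever the context length is a multiple of 4.
    return guess if len(context) % 4 else (guess + 1) % 7

def greedy_decode(prompt, n_new):
    """Baseline: one verifier call per generated token."""
    tokens = list(prompt)
    for _ in range(n_new):
        tokens.append(target_next(tokens))
    return tokens

def speculative_decode(prompt, n_new, k=4):
    """Draft up to k tokens per round, keep the prefix the verifier agrees with."""
    tokens = list(prompt)
    goal = len(prompt) + n_new
    rounds = 0
    while len(tokens) < goal:
        rounds += 1
        # 1. The draft model proposes tokens autoregressively.
        proposal = []
        for _ in range(min(k, goal - len(tokens))):
            proposal.append(draft_next(tokens + proposal))
        # 2. The verifier checks each position in order.
        accepted = []
        for tok in proposal:
            expected = target_next(tokens + accepted)
            if tok != expected:
                accepted.append(expected)  # take the verifier's token, drop the rest
                break
            accepted.append(tok)
        tokens.extend(accepted)
    return tokens, rounds

out, rounds = speculative_decode([1, 2, 3], n_new=12)
print(out == greedy_decode([1, 2, 3], 12), rounds)
```

Every kept token is one the verifier would have produced itself, which is why the output matches plain greedy decoding while needing fewer verification rounds than tokens. Sampling-based decoding needs a rejection-sampling acceptance rule instead of the exact-match check used here.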

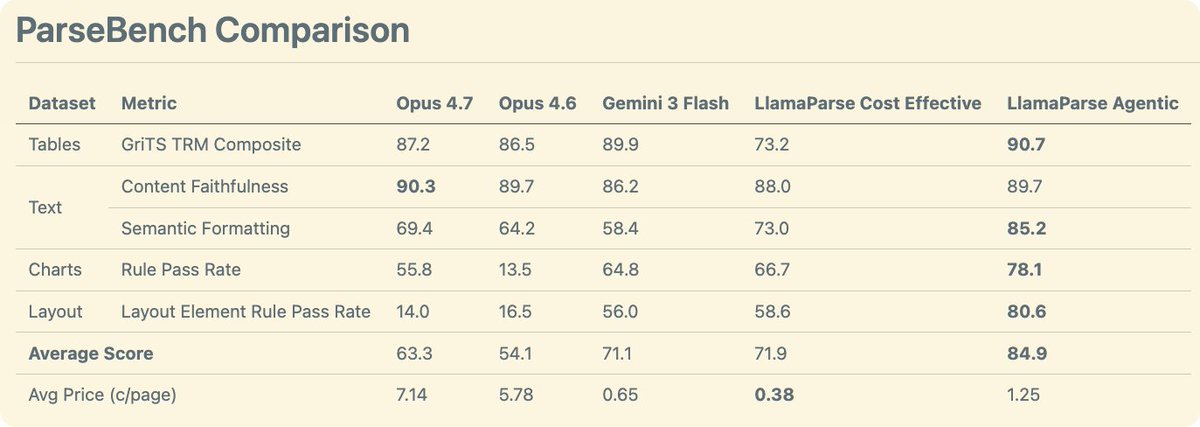

Anthropic says Opus 4.7 hits 80.6% on Document Reasoning, up from 57.1%. But "reasoning about documents" ≠ "parsing documents for agents." We ran it on ParseBench.
→ Charts: 13.5% → 55.8% (+42.3), huge
→ Formatting: 64.2% → 69.4% (+5.2)
→ Content: 89.7% → 90.3% (+0.6)
→ Tables: 86.5% → 87.2% (+0.7)
→ Layout: 16.5% → 14.0% (-2.5), regressed
Real chart gains, but at ~1.5¢/page. Enterprise scale? Not yet.
LlamaParse Agentic: 84.9% overall. ~1.2¢/page.
The frontier for general document understanding is long. No single model solves it.
→ https://t.co/h7SpuTWYVn