Your curated collection of saved posts and media

Sharing a super simple, user-owned memory module we've been playing around with: nanomem

The basic idea is to treat memory as a pure intelligence problem: ingestion, structuring, and (selective) retrieval are all just LLM calls & agent loops on an on-device markdown file tree. Each file lists a set of facts w/ metadata (timestamp, confidence, source, etc.); no embeddings/RAG/training of any kind. For example:

- `nanomem add <fact>` starts an agent loop to walk the tree, read relevant files, and edit them.
- `nanomem retrieve <query>` walks the tree and returns a single summary string (possibly assembled from many subtrees) related to the query.

What’s nice about this approach is that the memory system is, by construction:

1. partitionable (humans/agents can easily separate `hobbies/snowboard.md` from `tax/residency.md` for data minimization + relevance)
2. portable and user-owned (it’s just text files)
3. interpretable (you know exactly what’s written and you can manually edit)
4. forward-compatible (future models can read memory files just the same, and memory quality/speed improves as models get better)
5. modularized (you can optimize ingestion/retrieval/compaction prompts separately)

Privacy & utility. I'm most excited about the ability to partition + selectively disclose memory at inference-time. Selective disclosure helps with both privacy (principle of least privilege & “need-to-know”) and utility (as too much context for a query can harm answer quality).

Composability. An inference-time memory module means: (1) you can run such a module with confidential inference (LLMs on TEEs) for provable privacy, and (2) you can selectively disclose context over unlinkable inference of remote models (demo below).

We built nanomem as part of the Open Anonymity project (https://t.co/fO14l5hRkp), but it’s meant to be a standalone module for humans and agents (e.g., you can write a SKILL for using the CLI tool). Still polishing the rough edges!

- GitHub (MIT): https://t.co/YYDCk5sIzc
- Blog: https://t.co/pexZTFdWzz
- Beta implementation in chat client soon: https://t.co/rsMjL3wzKQ

Work done with amazing project co-leads @amelia_kuang @cocozxu @erikchi !!
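To make the "facts in a markdown file tree" and "selective disclosure" ideas from the post above concrete, here is a minimal Python sketch. It is not nanomem's code or API: the `Fact` fields, the bullet format, and the helpers `add_fact`, `disclose`, and `demo` are all hypothetical, and the example facts are invented. In nanomem an LLM agent loop decides which files to read and edit; here an explicit path allow-list stands in for that decision.

```python
from __future__ import annotations

import tempfile
from dataclasses import dataclass
from pathlib import Path


@dataclass
class Fact:
    """One remembered fact plus metadata (field names are assumptions)."""
    text: str
    timestamp: str
    confidence: float
    source: str

    def to_markdown(self) -> str:
        # One bullet per fact; this line format is illustrative only.
        return (f"- {self.text} (timestamp: {self.timestamp}; "
                f"confidence: {self.confidence}; source: {self.source})")


def add_fact(root: Path, topic: str, fact: Fact) -> Path:
    """Append a fact to <root>/<topic>.md, creating folders as needed."""
    path = root / f"{topic}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("a", encoding="utf-8") as f:
        f.write(fact.to_markdown() + "\n")
    return path


def disclose(root: Path, allowed_prefixes: list[str]) -> str:
    """Return only files whose relative path starts with an allowed prefix."""
    chunks = []
    for path in sorted(root.rglob("*.md")):
        rel = path.relative_to(root).as_posix()
        if any(rel.startswith(prefix) for prefix in allowed_prefixes):
            chunks.append(f"# {rel}\n{path.read_text(encoding='utf-8')}")
    return "\n".join(chunks)


def demo() -> str:
    """Build a tiny two-file memory tree and disclose only the hobbies part."""
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        add_fact(root, "hobbies/snowboard",
                 Fact("Prefers powder days", "2025-01-10", 0.9, "chat"))
        add_fact(root, "tax/residency",
                 Fact("Files taxes as a resident", "2025-02-01", 0.8, "chat"))
        # Only the hobbies subtree is shared with the model for this query.
        return disclose(root, ["hobbies/"])


if __name__ == "__main__":
    print(demo())
```

Because the partition is just a directory boundary, the caller controls what a remote model sees by choosing prefixes, and the excluded files never enter the prompt.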

We built our launch video in Claude Code using HyperFrames. Now it's yours. Open source, agent-native framework. HTML to MP4.

`$ npx skills add heygen-com/hyperframes`

RT + Comment "HyperFrames" to get the full source code of this launch video (must follow) https://t.co/vsRtZ6gQsb

This is the full video of the hardest version of the task: t-shirt folding from unstructured initial states. This setting really requires at least some strategy, since the robot first has to spread the shirt before it can complete the fold. Full details on data collection strategies in the blog below. 👇

Releasing the Unfolding Robotics blog! Time to unfold robotics: we trained a robot to fold clothes using 8 bimanual setups, 100+ hours of demonstrations, and 5k+ GPU hours. Flashy robot demos are everywhere. But you rarely see the real story: the data, the failures, the enginee

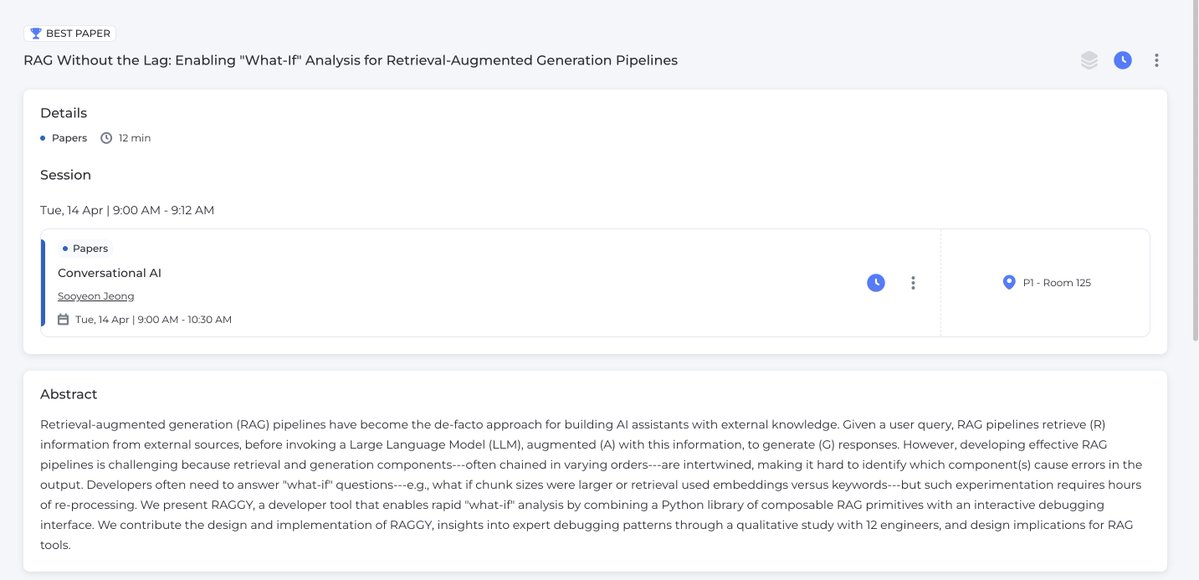

Looking forward to CHI this week! We have a ✨Best Paper ✨ on a "what-if" analysis tool for RAG. Reach out to chat! I'm interested in: MLOps/LLMOps, data analysis, and better interfaces for human-AI collaboration (and, very soon, I'll be recruiting students/postdocs) https://t.co/JVeiFbTOHM

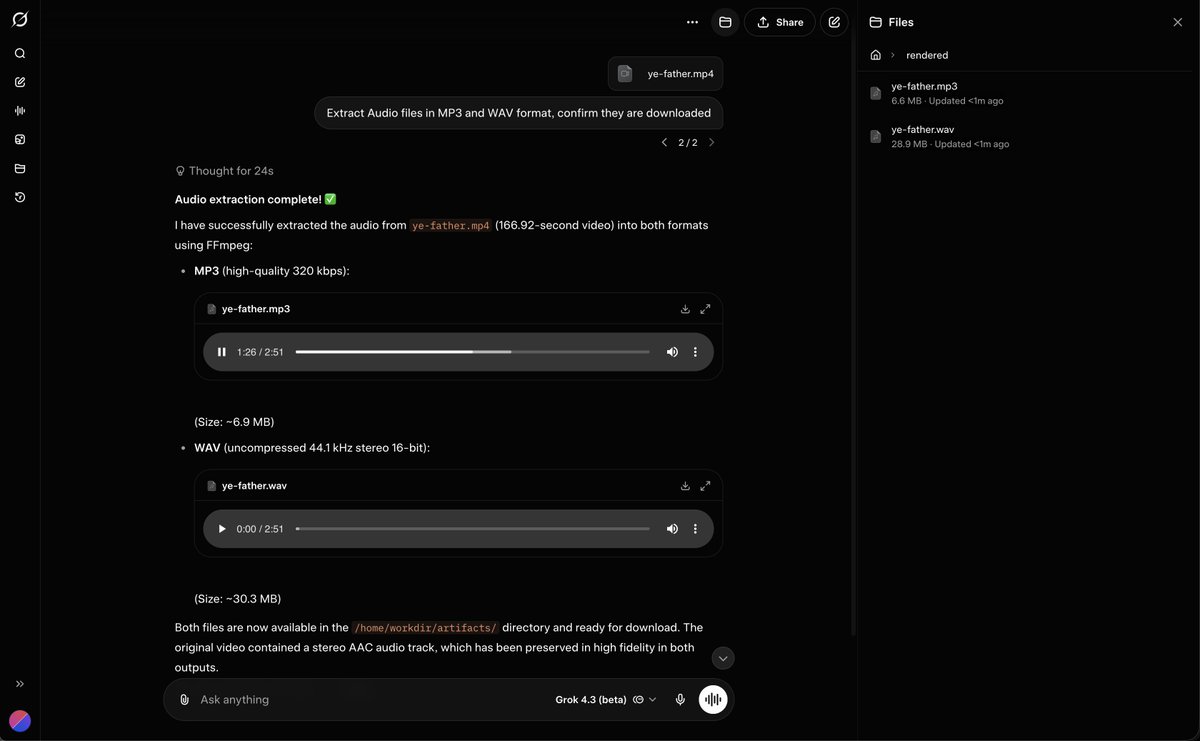

Grok 4.3 can take in video and extract audio files https://t.co/5uprx2dM85

🚀 Excited to share ViPRA: Video Prediction for Robot Actions

📍 Accepted to #ICLR2026 @iclr_conf

🏆 Best Paper, #NeurIPS2025 Embodied World Models Workshop

Robot learning today still needs millions of action-labeled videos. Videos from humans and the web are abundant, but they lack action labels. Meanwhile, pretrained video models already learn rich dynamics.

ViPRA is a recipe for turning pretrained video models into robot policies while enabling robot learning to scale with actionless videos. 🧵 Thread ↓

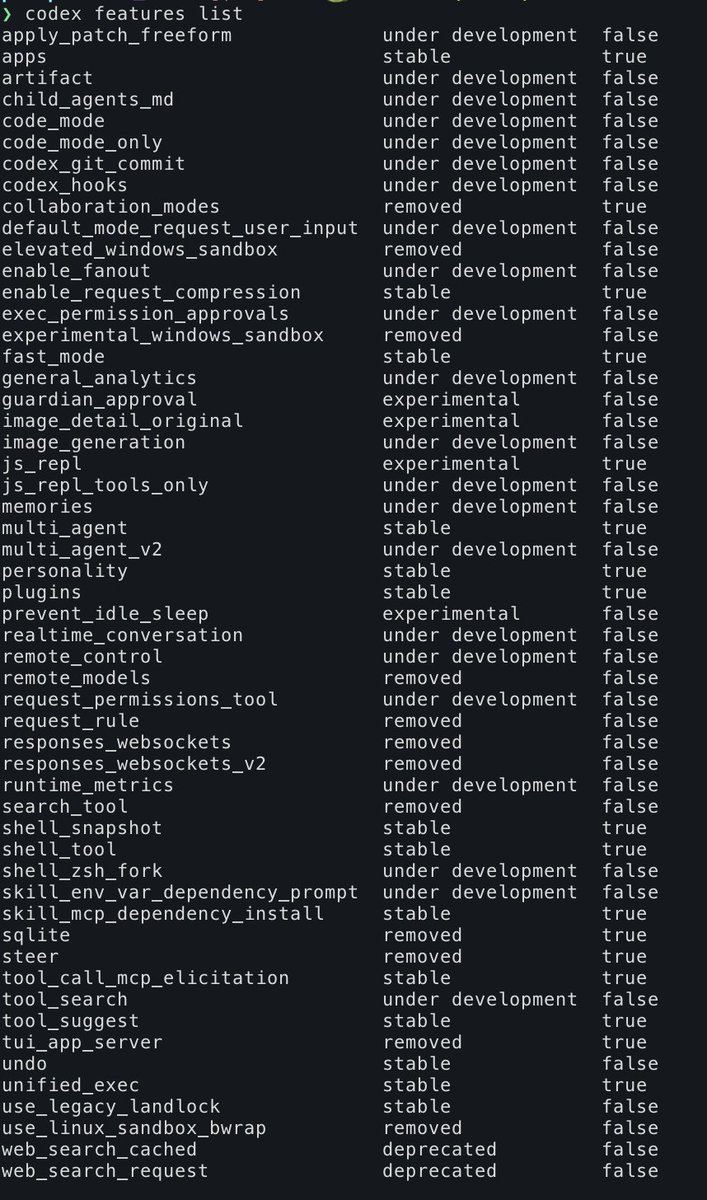

Run the following command and you can see some of what Codex is cooking. TIL they have remote_control too!

`codex feature list`

P.S. it's worth reading the manual https://t.co/YIo7BMiZUv

Maybe hot take - I’ve read a bunch of RL for image generation papers over the last few months and honestly it’s been pretty disappointing. All of them are variations of GRPO and all of them are incremental algo changes. Tbh most of these don’t even matter in a setting with large models, a large group size, and a good reward model. I see most grad students are still optimizing their projects for reviewers rather than genuinely trying to solve some of the real problems in visual generation.

For example - the biggest alpha in my eyes would’ve been an artifact detection model. Not just for mangled limbs: most image models produce far more artifacts, which are hard to measure quantitatively, but I haven’t seen a single research paper or model on this.

So my 2c: if you are a grad student targeting a job in industry, aim for impact. No one cares about your third CVPR paper; one is enough to get you in the door, and building a model that industry actually uses gives you all the leverage. Impact > Publications. 🫳🎤

Trying to write a tutorial for hermes, anyone interested? https://t.co/eZDnEgtZGy

Need to step away or just want to continue working on a different device? You can now remote control GitHub Copilot CLI sessions from any device. Just run /remote to continue with a single click. https://t.co/2yJVnedtlo