Also, how cool that this is so easy now? This was a few careful asks to Codex, which worked for ~128k tokens/1h to do everything - sourcing the data, embedding with CLIP (via @replicate), making an exploratory search tool for refining + filtering, and whipping up the final app.

@trq212 This framing degrades technical literacy. Your prompt isn't "communication", it's tokenized, embedded as vectors, processed through frozen weights. No "agent" receives it. No bandwidth grows. Anthropic's own leaked system prompt: 16,739 words of context steering. That's engineering, not dialogue. Research shows anthropomorphic AI discourse creates self-fulfilling alignment degradation. You're not "talking to agents." You're structuring conditional probability queries. High-leverage? Yes. Interpersonal? Never. If the goal is public understanding, not marketing, stop treating inference as a “team member”. That's technically inaccurate. Which makes it dishonest the moment someone with enough expertise verifies it.

dflash-mlx: DFlash speculative decoding, ported to Apple Silicon. Qwen3-4B at 186 tok/s on a MacBook. 4.6× faster than plain MLX-LM. Exact greedy decoding: output matches plain target decoding. https://t.co/VxfyworgAe
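The "exact greedy decoding" claim in the post above can be illustrated with a toy sketch of greedy speculative decoding. This is not the dflash-mlx or DFlash implementation: `toy_target`, `toy_draft`, and both decode functions are hypothetical stand-ins, and the per-token verification loop stands in for what a real system would do in one batched target forward pass. The point it shows is that every token appended comes from the target model, so the output is identical to plain target decoding no matter how often the draft is wrong.

```python
from typing import Callable, List

# A "model" here is just a greedy next-token function over integer tokens.
NextToken = Callable[[List[int]], int]


def toy_target(seq: List[int]) -> int:
    """Hypothetical target model: a deterministic rule standing in for argmax."""
    return (seq[-1] * 3 + len(seq)) % 7


def toy_draft(seq: List[int]) -> int:
    """Hypothetical draft model: agrees with the target except at some positions."""
    t = toy_target(seq)
    return (t + 1) % 7 if len(seq) % 5 == 0 else t


def greedy_decode(target: NextToken, prompt: List[int], n_new: int) -> List[int]:
    """Plain greedy decoding: one target call per generated token."""
    seq = list(prompt)
    for _ in range(n_new):
        seq.append(target(seq))
    return seq


def speculative_greedy_decode(
    target: NextToken, draft: NextToken, prompt: List[int], n_new: int, k: int = 4
) -> List[int]:
    """Draft proposes up to k tokens; the target keeps the longest agreeing prefix."""
    seq = list(prompt)
    goal = len(prompt) + n_new
    while len(seq) < goal:
        # 1) The cheap draft model proposes a short continuation.
        ctx = list(seq)
        proposal = []
        for _ in range(min(k, goal - len(seq))):
            tok = draft(ctx)
            proposal.append(tok)
            ctx.append(tok)
        # 2) The target checks each proposed position in order.
        #    (A real system would score all positions in one batched pass.)
        for tok in proposal:
            expected = target(seq)
            seq.append(expected)  # always keep the target's own token
            if expected != tok:
                break  # mismatch: discard the rest of the proposal
    return seq
```

Because rejected draft tokens are replaced by the target's token at the first mismatch, the speedup depends on how often the draft agrees, while correctness does not.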

We sat down with Kyle Caverly, an AI Performance Engineer on the MAX serve team, to walk through what actually happens inside an inference server from prompt to response. All the code discussed is open source. https://t.co/iwSQ4QA5F1

Most serving stacks run FLUX.2 as four separate stages with Python overhead between each one. We collapsed all four into a single fused execution graph using MLIR-based compilation. On @AMD MI355X, that means a 3.8x speedup over torch.compile, 1024x1024 images in under 3.5 seconds, and a deployment container under 700MB. We ran the same pipeline on Blackwell, too. AMD delivers equivalent generation quality at a 5.5x lower cost. @clattner_llvm is presenting the full breakdown at AMD AI DevDay. Register: https://t.co/Pa1e36BTZn

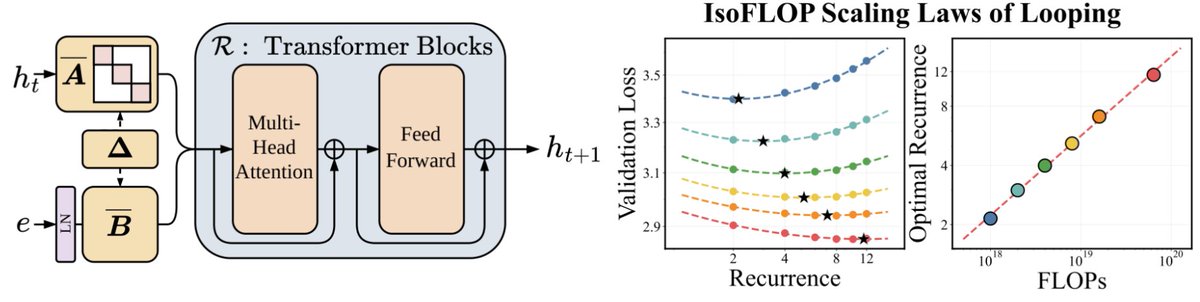

📢 Super excited to announce Parcae! We've been thinking about scaling laws and the "right" way to get more FLOPs. Turns out layer looping - with the right parameterization - gives you a new axis to scale! Parcae matches Transformers 2x their size (w/ the same data), and outperforms prior formulations of looped models. But - you need the right parameterization to get these gains against strong Transformer baselines. Looped models are famously unstable to train, with tons of loss spikes and hyperparameter sensitivity. The main technical challenge with looped models is residual explosion - if you're passing the activations through the same layers over and over, some otherwise benign parameterizations cause huge instability. Our key idea: we can think of the residual stream of a model as a time-varying dynamical system - the same fundamentals behind SSMs like Mamba and S4. Then a few modest modifications to classic Transformers (stable diagonalization of injection params, LN before embeddings) can stabilize the looped models. The resulting models are more stable to train, but also reach higher quality. It's strong enough to start to derive new scaling laws. Classically - we know you need to scale parameters with data to be FLOP-optimal. With Parcae, we find a third axis - given fixed parameters, you additionally want to scale FLOPs by looping as you scale data. Super excited to see how these ideas hold, and what we can do with looped models! Check out @hayden_prairie's great explainer thread below, and see links for our paper, blog, and models. Joint w/ @zacknovack and @BergKirkpatrick, and a fun collab between @togethercompute and my lab at @ucsd_cse. Enjoy!
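The dynamical-systems view in the post above can be sketched with a toy linear recurrence. This is not Parcae's architecture: the Transformer block is left out entirely, and the function names, the softplus-based parameterization, and the scalar-per-channel update `h <- a*h + b*e` are assumptions chosen only to show why a diagonal coefficient constrained to (0, 1) keeps a looped residual bounded, while an unconstrained coefficient above 1 makes it explode.

```python
import math
from typing import List


def stable_coeff(raw: float) -> float:
    """Map any real parameter to (0, 1) via exp(-softplus(raw)).

    An SSM-style stable parameterization, used here as an assumption.
    """
    softplus = math.log1p(math.exp(raw))  # always > 0
    return math.exp(-softplus)


def run_loop(e: List[float], a: List[float], b: List[float], n_loops: int) -> List[float]:
    """Apply the same per-channel update n_loops times: h <- a*h + b*e.

    e is the (fixed) input embedding re-injected on every loop,
    a is the diagonal state coefficient, b is the diagonal injection weight.
    """
    h = [0.0] * len(e)
    for _ in range(n_loops):
        h = [ai * hi + bi * ei for ai, hi, bi, ei in zip(a, h, b, e)]
    return h


e = [1.0, -2.0]
b = [1.0, 1.0]

# Constrained coefficients: the state converges to b*e / (1 - a).
a_stable = [stable_coeff(0.0), stable_coeff(0.0)]  # each equals 0.5
h_stable = run_loop(e, a_stable, b, n_loops=60)

# Unconstrained coefficient above 1: the state grows geometrically.
h_unstable = run_loop(e, [1.1, 1.1], b, n_loops=200)
```

With `a` in (0, 1) the state settles at a fixed point regardless of loop count, which is the property that lets depth be scaled by looping in this toy setting; the real model adds a nonlinear block on top.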

We’ve been thinking a lot about scaling laws, wondering if there is a more effective way to scale FLOPs without increasing parameters. Turns out the answer is YES – by looping blocks of layers during training. We find that predictable scaling laws exist for layer looping, allowing us to use looping to achieve the quality of a Transformer twice the size. Our scaling laws suggest that for a fixed parameter budget, data and looping should be increased in tandem! 🧵👇

@reza_byt Doesn't tokenwise looped transformer have issues with pretraining since each token has a different depth and also has to learn the recursion depth?

A new PyTorch-native backend is coming to unlock the power of Google TPUs: ✨ Run existing PyTorch with minimal code changes. ✨ Get a 50-100%+ performance boost with Fused Eager mode. Read the engineering deep dive here: https://t.co/GQPRYaKz7E #TorchTPU #PyTorch #MLOps #AI https://t.co/HiIdXVw6Oh

We ran @AnthropicAI Claude Opus 4.7 (High) on APEX-SWE, our benchmark for real-world software engineering work. It scores 41.3% pass@1, placing 2nd on the leaderboard. It is only 0.2 percentage points away from GPT 5.3 Codex (High). https://t.co/4U6pKtrvI0