A prototype that turns everyday life into something like an adventure game. It’s built on a Pixel 10 Pro with Gemma 4 via AI Core. https://t.co/AnBZ7GeS5F

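I made a thing where an AI looks at the city around you and displays messages like in a game. It runs on a local VLM (no internet connection required). https://t.co/nlx5t8cc1H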

Is there a collection somewhere of the best agent/coding harnesses for each model, especially open-source and local ones? In my opinion, the biggest reason people struggle with open/local models these days is that the agent/coding harnesses in most open agents are not designed for them, and people expect everything to magically work when they switch models from the default.

Codex for (almost) everything. It can now use apps on your Mac, connect to more of your tools, create images, learn from previous actions, remember how you like to work, and take on ongoing and repeatable tasks. https://t.co/UEEsYBDYfo

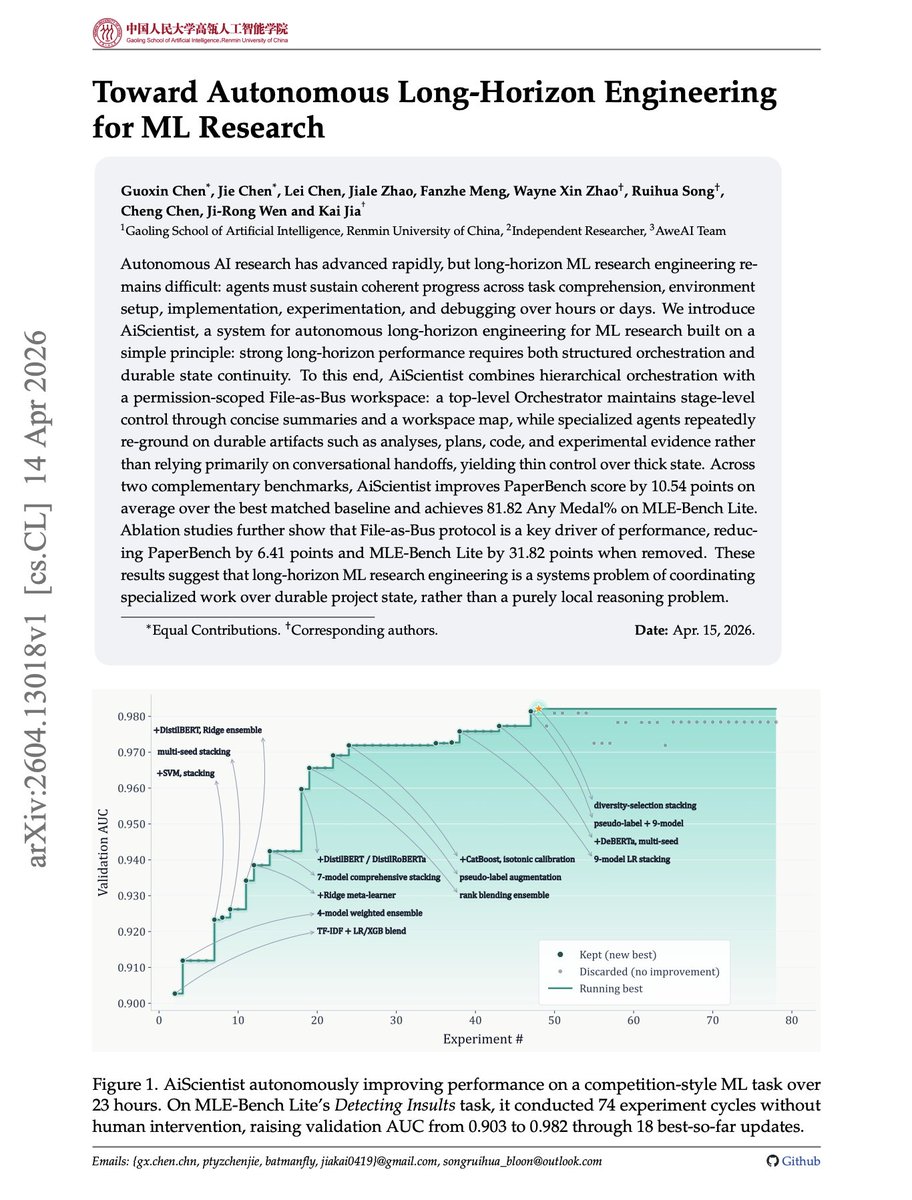

Long-horizon AI research agents are mostly a state-management problem. It is not enough for an agent to reason well in the next turn. ML research requires task setup, implementation, experiments, debugging, and evidence tracking over hours or days. This new paper introduces AiScientist, a system for autonomous long-horizon engineering for ML research. The key idea is to keep control thin and state thick. A top-level orchestrator manages stage-level progress, while specialized agents repeatedly ground themselves in durable workspace artifacts: analyses, plans, code, logs, and experimental evidence. That "File-as-Bus" design matters. AiScientist improves PaperBench by 10.54 points over the best matched baseline and reaches 81.82 Any Medal% on MLE-Bench Lite. Removing File-as-Bus drops PaperBench by 6.41 points and MLE-Bench Lite by 31.82 points. Why does it matter? Autonomous research agents need durable project memory, not just longer chats. Paper: https://t.co/A84c75oumP Learn to build effective AI agents in our academy: https://t.co/1e8RZKs4uX
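
As a rough illustration of the "thin control, thick state" idea in the post above, here is a minimal Python sketch. It is not AiScientist's implementation: the `Workspace` class, the `planner` and `experimenter` agents, and the artifact names are all hypothetical, chosen only to show agents communicating through durable files instead of chat history.

```python
import json
import tempfile
from pathlib import Path
from typing import Optional


class Workspace:
    """Hypothetical file-backed workspace: every artifact is a JSON file on disk."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)

    def write(self, name: str, payload: dict) -> None:
        (self.root / f"{name}.json").write_text(json.dumps(payload, indent=2))

    def read(self, name: str) -> Optional[dict]:
        path = self.root / f"{name}.json"
        return json.loads(path.read_text()) if path.exists() else None


def planner(ws: Workspace) -> None:
    # Hypothetical agent: writes a plan artifact and keeps nothing in memory.
    ws.write("plan", {"steps": ["implement", "run_experiment"]})


def experimenter(ws: Workspace) -> None:
    # Hypothetical agent: grounds itself in the plan on disk, not in chat history.
    plan = ws.read("plan")
    if plan is None:
        raise RuntimeError("no plan artifact found")
    ws.write("evidence", {"completed": plan["steps"], "status": "ok"})


def orchestrate(ws: Workspace) -> dict:
    # Thin control: run a stage only if its artifact is missing,
    # so a restarted run resumes from whatever is already on disk.
    for artifact, agent in (("plan", planner), ("evidence", experimenter)):
        if ws.read(artifact) is None:
            agent(ws)
    return ws.read("evidence")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        print(orchestrate(Workspace(Path(tmp))))
```

Because the orchestrator only checks which artifacts exist, a second run against the same directory skips finished stages. That is the sense in which project memory lives in the workspace and not in a longer conversation.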

GLM-5.1 > Claude Code (Opus 4.6)? Either I'm tripping or CC has become very bad, but I built a Three.js racing game to eval it and it's extremely impressive. Thoughts:
- One-shot car physics with real drift mechanics (this is hard)
- My fav part: awesome at self-iterating (with no vision!). It created 20+ Bun.WebView debugging tools to drive the car programmatically and read game state, and proved a winding bug with vector math without ever seeing the screen
- 531-line racing AI in a single write: 4 personalities, curvature map, racing lines, tactical drifting. It built telemetry tools to compare player vs AI speed curves and data-tuned the parameters
- All assets from scratch: 3D models, procedural textures, sky shader, engine sounds, spatial AI audio!
- Can do hard math: proved road normals pointed DOWN via vector cross products, and computed track curvature normalized by arc length to tune AI cornering speed

You are going to hear about this model a lot in the next months - open source let's go 🚀🚀

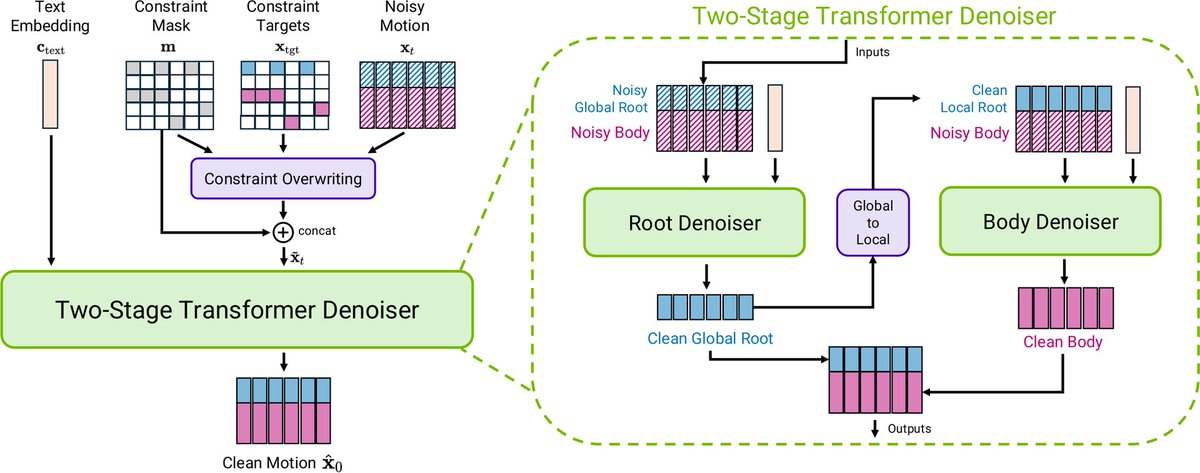

NVIDIA just released Kimodo on Hugging Face A kinematic motion diffusion model trained on 700 hours of optical motion capture to generate 3D human and robot motions controlled by text and kinematic constraints. https://t.co/s7foOXXqqB

Hermes hands on guide: concepts, core mechanisms, and real world use cases. https://t.co/m6bTIbPOck

Let's talk parsing tables. Two days ago we launched ParseBench, the first document OCR benchmark built for AI agents. This deep dive breaks down TableRecordMatch (GTRM), our metric for evaluating complex tables the way your pipeline actually consumes them: as records keyed by column headers. https://t.co/2sq5ncGiel
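
To make "records keyed by column headers" concrete, here is a small Python sketch. It is not the TableRecordMatch (GTRM) definition, which the post does not spell out. The `table_to_records` and `record_match_score` helpers are hypothetical, and the scoring rule (exact, order-insensitive record matching) is an assumption made purely for illustration.

```python
from collections import Counter


def table_to_records(header, rows):
    """Turn a parsed table into one dict per row, keyed by column header."""
    return [dict(zip(header, row)) for row in rows]


def record_match_score(predicted, expected):
    """Fraction of expected records found exactly in the predicted records.

    Assumption: a simplified exact-match rule, not the real GTRM metric.
    Row order and column order are ignored; only header/value pairs matter.
    """
    def key(record):
        return tuple(sorted(record.items()))

    pred = Counter(key(r) for r in predicted)
    exp = Counter(key(r) for r in expected)
    matched = sum((pred & exp).values())  # multiset intersection
    return matched / max(len(expected), 1)


if __name__ == "__main__":
    expected = table_to_records(["name", "qty"], [["apple", "2"], ["pear", "5"]])
    # The parser swapped the column order and misread one cell.
    predicted = table_to_records(["qty", "name"], [["2", "apple"], ["9", "pear"]])
    print(record_match_score(predicted, expected))
```

Comparing records this way means a parser is not penalized for reordering columns, only for attaching a value to the wrong header or misreading it, which is the failure a downstream pipeline actually sees.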

PyTorch Foundation is expanding its #OpenSourceAI stack with #Safetensors, #ExecuTorch, and #Helion to improve model security, inference, and performance portability, writes Meredith Shubel for @thenewstack. @sparkycollier: Bringing Safetensors into the fold is “an important step towards scaling production-grade AI models.” ExecuTorch becomes a part of #PyTorch Core to expand on-device inference capabilities. Safetensors and Helion join @vllm_project, @DeepSpeedAI, and @raydistributed as foundation-hosted projects. Read Meredith Shubel’s coverage at @thenewstack here: https://t.co/ZoyWbP6Vji @huggingface @Meta

@berryxia I'm very confused about where they came up with this many tokens. It is around 3K tokens for the actual system prompt, plus another 7.5K or so for the core toolset.