
New video model HappyHorse-1.0 by Alibaba-ATH debuts at #1 in Video Edit Arena. It scores 1299, leading Grok Image Video by +42 points and Kling o3 Pro by +48 points. Video editing is an emerging frontier capability for video models, and only a small number of models support it today. Huge congrats to the Alibaba-ATH team on this incredible milestone!
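As a rough aid for reading those gaps, here is a minimal sketch that backs out the two trailing scores and converts each lead into a pairwise win rate. It assumes the leaderboard score behaves like an Elo-style rating (base 10, scale 400); the post does not state the formula, and the helper names are hypothetical.

```python
# Sketch only: assumes an Elo-style rating scale (base 10, scale 400).
# The post gives the scores and leads, not the rating formula.

def implied_score(leader_score: float, lead: float) -> float:
    """Score of a model that trails the leader by `lead` points."""
    return leader_score - lead


def expected_win_rate(score_gap: float, scale: float = 400.0) -> float:
    """Elo-style probability that the higher-rated model wins one pairwise vote."""
    return 1.0 / (1.0 + 10.0 ** (-score_gap / scale))


leader = 1299  # HappyHorse-1.0 score from the post
for name, lead in [("Grok Image Video", 42), ("Kling o3 Pro", 48)]:
    score = implied_score(leader, lead)
    win_rate = expected_win_rate(lead)
    print(f"{name}: score {score}, leader expected to win {win_rate:.1%} of votes")
```

Under that assumption, a lead of 40 to 50 points corresponds to the leader winning somewhat more than half of head-to-head votes, not to a lopsided margin.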

HappyHorse-1.0 is now live on Arena! 🚀 Early evals show exceptional performance in Video Edit. We are now in the final optimization sprint for the official launch in 2 weeks. We invite the community to get early access and test our capabilities at https://t.co/iiyfgPtib5. 🐎✨

Introducing the MLX-Benchmark Suite!! https://t.co/sp4ZMIBxov

The first comprehensive benchmark for evaluating LLMs on Apple's MLX framework.

🎯 What is this?
MLX Benchmark is a CLI tool and dataset that measures how well large language models understand, write, and debug code for Apple's MLX machine learning framework, covering everything from core array operations to LoRA fine-tuning with mlx-lm, mlx-vlm, and mlx-embeddings.

📊 Dataset https://t.co/5b04a7PKAp
- 520 questions across 6 task types: knowledge QA, multiple choice, true/false, fill-in-the-blank, code generation, and debugging
- 11 categories spanning the full MLX ecosystem: mlx_core, mlx_nn, mlx_lm, mlx_lm_lora, mlx_vlm, mlx_embeddings, mlx_embeddings_lora, mlx_optimizers, coding, debugging, conceptual
- 4 difficulty levels: easy → medium → hard → very-hard
- 90+ subcategories covering everything from array_creation to lora_finetuning

✨ Features
- 🏃 Multi-provider benchmarking: Ollama, Anthropic, OpenAI, Groq, OpenRouter
- ⚖️ LLM-as-judge evaluation: strict scoring with an independent judge model
- 🔍 Fine-grained filtering: by type, difficulty, and category
- 📝 LaTeX export: --latex generates publication-ready booktabs tables
- 📈 PNG chart export: --plot generates grouped bar charts comparing models

A detailed paper will be coming as well!!!

@IgorCarron @LightOnIO ColBERT-Zero matching larger models on public data alone is impressive. Late interaction remains underappreciated. Token-level matching preserves what dense pooling compresses away. Tested this myself on retrieval tasks, the precision gains are real.
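To make the "token-level matching vs. dense pooling" point concrete, here is a minimal sketch of late-interaction (MaxSim) scoring next to a mean-pooled cosine baseline. The toy 2-d vectors are made up for illustration, not real ColBERT embeddings, and all vectors are assumed to be non-zero.

```python
import math


def normalize(vec):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def maxsim_score(query_tokens, doc_tokens):
    """Late interaction: each query token keeps its best-matching doc token; the maxima are summed."""
    q = [normalize(v) for v in query_tokens]
    d = [normalize(v) for v in doc_tokens]
    return sum(max(dot(qv, dv) for dv in d) for qv in q)


def mean_pooled_score(query_tokens, doc_tokens):
    """Dense baseline: mean-pool each side into one vector, then take cosine similarity."""
    def pool(tokens):
        dim = len(tokens[0])
        return normalize([sum(t[i] for t in tokens) / len(tokens) for i in range(dim)])
    return dot(pool(query_tokens), pool(doc_tokens))


# Toy data (hypothetical): doc_a matches both query tokens exactly,
# doc_b only matches their average direction.
query = [[1.0, 0.0], [0.0, 1.0]]
doc_a = [[1.0, 0.0], [0.0, 1.0]]
doc_b = [[1.0, 1.0], [1.0, 1.0]]

print("MaxSim      :", maxsim_score(query, doc_a), maxsim_score(query, doc_b))
print("Mean-pooled :", mean_pooled_score(query, doc_a), mean_pooled_score(query, doc_b))
```

In this toy case the pooled baseline scores both documents the same, while MaxSim ranks doc_a higher because it still sees the exact per-token matches.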

llama.cpp now supports various small OCR models that can run on low-end devices. These models are small enough to run on GPU with 4GB VRAM, and some of them can even run on CPU with decent performance. In this post, I will show you how to use these OCR models with llama.cpp 👇

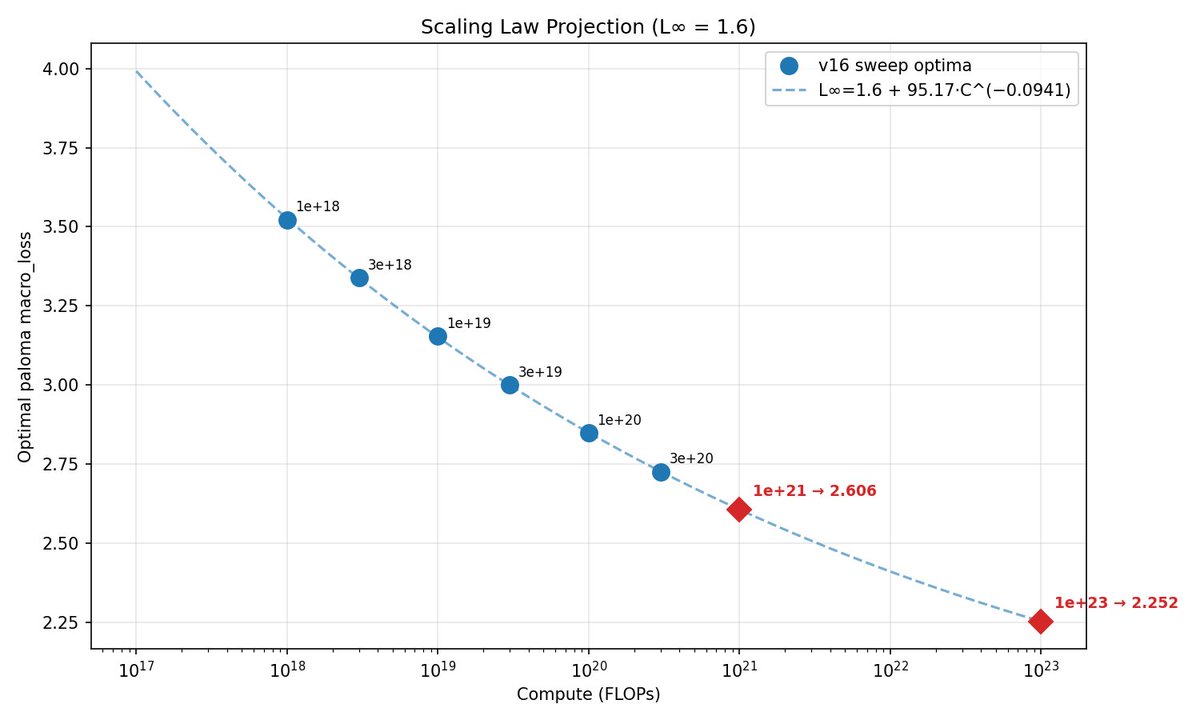

This week, @classiclarryd kicked off a 129B (16B active) 1e23 FLOPs MoE run. In typical Marin style, we have fit scaling laws and have made a loss projection of 2.252. Stay tuned. https://t.co/QnwJ8YxT9H
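For a sense of scale, here is a back-of-the-envelope sketch of the token budget those numbers imply. It assumes the common rule of thumb C ≈ 6 · N_active · D for transformer training compute; the post does not state the token count or how the FLOPs were counted, so treat the result as an estimate only.

```python
# Figures from the post.
TOTAL_PARAMS = 129e9    # total MoE parameters
ACTIVE_PARAMS = 16e9    # parameters active per token
COMPUTE_FLOPS = 1e23    # training compute budget


def implied_tokens(compute_flops, active_params, flops_per_param_token=6.0):
    """Tokens D implied by C ≈ 6 * N_active * D (rule-of-thumb assumption, not from the post)."""
    return compute_flops / (flops_per_param_token * active_params)


tokens = implied_tokens(COMPUTE_FLOPS, ACTIVE_PARAMS)
print(f"active fraction: {ACTIVE_PARAMS / TOTAL_PARAMS:.1%}")
print(f"implied training tokens: {tokens:.2e}")
```

Under that assumption the run works out to roughly a trillion training tokens, with about an eighth of the parameters active per token.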

A challenge with using VLMs to parse PDFs is guaranteeing that the output text is *correct* and in the correct reading order.

1️⃣ Text correctness: making sure that digits, words, sentences are not hallucinated or dropped.
2️⃣ Reading Order: making sure that complex multi-layout pages are linearized into the right 1-d text order.

We call this Content Faithfulness in ParseBench, our comprehensive document OCR benchmark for agents. We have 167k rules that measure digit/word/sentence-level correctness along with reading order correctness.

It seems like table stakes, but no parser gets this 100% right, and this means that the agent’s downstream decision-making is compromised.

Come learn more about how this metric works in the video below, along with our full blog writeup, whitepaper, and website!

Blog: https://t.co/57OHkx0pQW
Paper: https://t.co/Ho2oH2xEAM
Website: https://t.co/g0b0jsCynW
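To illustrate what rule-based checks of this kind could look like, here is a minimal sketch with two hypothetical rule types: a presence rule for text correctness and a before/after rule for reading order. ParseBench's actual rule format and scoring are not described in the post, so every name below is an assumption, and plain substring matching is cruder than a real benchmark would use.

```python
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so layout differences do not count as errors."""
    return re.sub(r"\s+", " ", text).strip().lower()


def check_present(parsed: str, expected: str) -> bool:
    """Text-correctness rule: the expected snippet must appear in the parser output."""
    return normalize(expected) in normalize(parsed)


def check_order(parsed: str, first: str, second: str) -> bool:
    """Reading-order rule: `first` must appear, and appear before `second`."""
    text = normalize(parsed)
    i = text.find(normalize(first))
    j = text.find(normalize(second))
    return i != -1 and j != -1 and i < j


def faithfulness_score(parsed, present_rules, order_rules):
    """Fraction of rules that pass (hypothetical aggregation)."""
    results = [check_present(parsed, s) for s in present_rules]
    results += [check_order(parsed, a, b) for a, b in order_rules]
    return sum(results) / len(results) if results else 1.0


present_rules = ["12.5%", "Costs fell 3%"]
order_rules = [("Revenue grew", "Costs fell")]

good = "Revenue grew 12.5% in Q3.\nCosts fell 3% in Q3."
bad = "Costs fell 3% in Q3. Revenue grew 12.8% in Q3."  # wrong digit, wrong order

print(faithfulness_score(good, present_rules, order_rules))
print(faithfulness_score(bad, present_rules, order_rules))
```

The second output fails one presence rule (the hallucinated digit) and the order rule, which is the kind of error an agent reading the parse would silently inherit.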

Let's talk content faithfulness. Four days ago, we launched ParseBench, the first document OCR benchmark for AI agents. Its most fundamental metric asks: did the parser capture all the text, in order, without making things up? We grade three failure modes with 167K+ rule-based

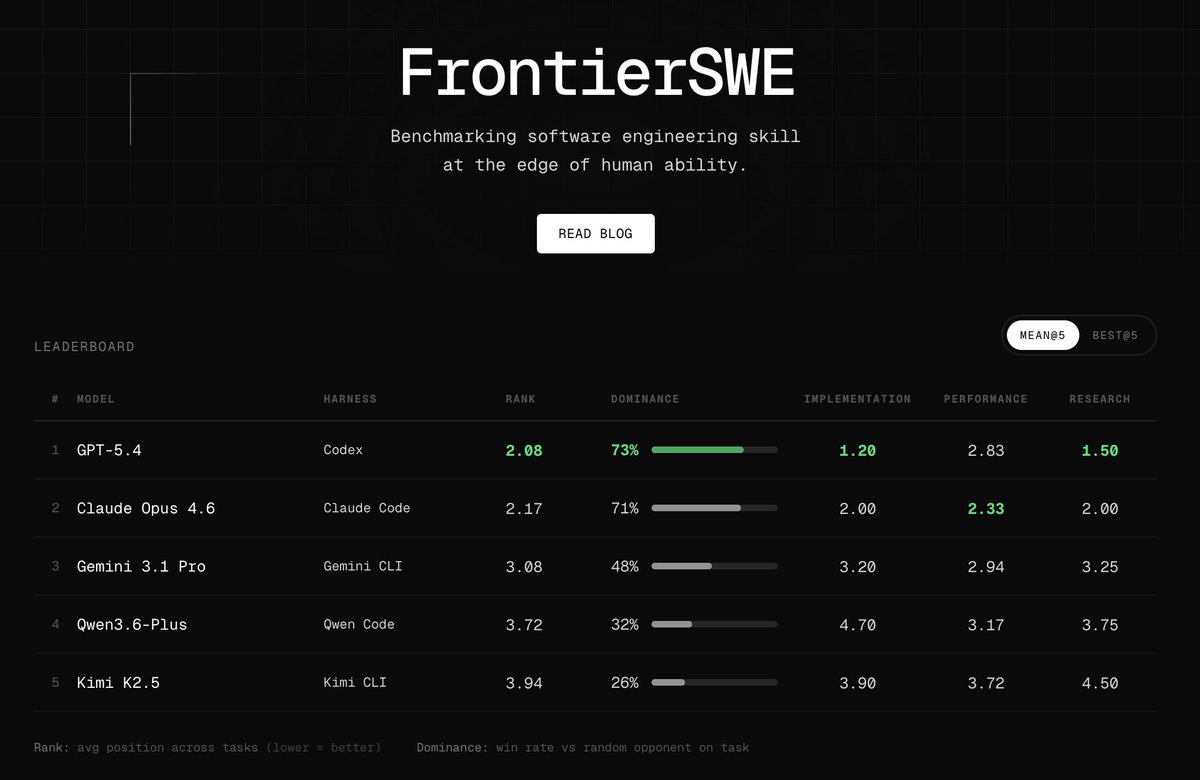

Introducing FrontierSWE, an ultra-long horizon coding benchmark. We test agents on some of the hardest technical tasks like optimizing a video rendering library or training a model to predict the quantum properties of molecules. Despite having 20 hours, they rarely succeed https://t.co/xbqHJRZiPZ

@breath_mirror @kaiostephens @DJLougen @bstnxbt yeah, that one works as well. doesn't need the minor PR to handle qwen3_5_text rather than the multimodal wrapper. ~2x-4x speedup (my laptop got way too hot and throttled)

Training Qwen2.5-0.5B-Instruct on Reddit post summarization with GRPO on my 3x Mac Minis, trying combination of quality rewards with length penalty!

Completed all of the following combination rewards!
>METEOR + BLEU
>BLEU + ROUGE-L
>METEOR + ROUGE-L

All the code and wandb charts in the comments

---

Setup: 3x Mac Minis in a cluster running MLX. One node drives training using GRPO, two push rollouts via vLLM.

Trained two variants:
→ length penalty only (baseline)
→ length penalty + quality reward (BLEU, METEOR and/or ROUGE-L)

---

Eval: LLM-as-a-Judge (gpt-5)

Used DeepEval to build a judge pipeline scoring each summary on 4 axes:
→ Faithfulness: no hallucinations vs. source
→ Coverage: key points captured
→ Conciseness: shorter, no redundancy
→ Clarity: readable on its own
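A minimal sketch of how a "quality reward + length penalty" signal might feed GRPO. It uses a plain-Python ROUGE-L F1 (via longest common subsequence) as the quality term, a hypothetical linear length penalty, and the usual group-relative advantage normalization. The penalty shape, `target_len`, and `penalty_weight` are assumptions for illustration; the post does not give the actual reward formula.

```python
from statistics import mean, pstdev


def lcs_length(a, b):
    """Length of the longest common subsequence of two token lists."""
    prev = [0] * (len(b) + 1)
    for x in a:
        cur = [0]
        for j, y in enumerate(b, 1):
            cur.append(prev[j - 1] + 1 if x == y else max(prev[j], cur[j - 1]))
        prev = cur
    return prev[-1]


def rouge_l_f1(candidate: str, reference: str) -> float:
    """ROUGE-L as an F1 over whitespace tokens (simplified variant)."""
    c, r = candidate.split(), reference.split()
    if not c or not r:
        return 0.0
    lcs = lcs_length(c, r)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(c), lcs / len(r)
    return 2 * precision * recall / (precision + recall)


def length_penalty(candidate: str, target_len: int = 20) -> float:
    """Hypothetical penalty: 0 up to target_len words, then linear in the excess."""
    excess = max(0, len(candidate.split()) - target_len)
    return excess / target_len


def reward(candidate, reference, penalty_weight=0.5, target_len=20):
    """Quality term minus weighted length penalty (weights are assumptions)."""
    return rouge_l_f1(candidate, reference) - penalty_weight * length_penalty(candidate, target_len)


def group_advantages(rewards, eps=1e-6):
    """GRPO-style advantages: normalize rewards within one prompt's group of rollouts."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


reference = "user asks whether to quit a stable job to start a bakery"
rollouts = [
    "user asks whether to quit a stable job to start a bakery",
    "someone wonders about quitting a job",
    "user asks whether to quit a stable job to start a bakery " + "and more " * 15,
]
rewards = [reward(s, reference, target_len=12) for s in rollouts]
print(rewards)
print(group_advantages(rewards))
```

Swapping `rouge_l_f1` for a BLEU or METEOR scorer, or summing two of them, would give the combinations listed above; the length-penalty-only baseline corresponds to dropping the quality term.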

🔈 Every model added to transformers has to be available on Apple Silicon 🍎 at once. We built a Skill and test harness for mlx-lm to get us closer 🔥 It's designed to help contributors AND support reviewers. Read on to see what we did and why it matters.