Speculative decoding for Gemma 4 31B (EAGLE-3): a 2B draft model predicts tokens ahead; the 31B verifier validates them. Same output, faster inference. Early release. vLLM main branch support is in progress (PR #39450). Reasoning support coming soon. https://t.co/PoK8zbA7li
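The draft-then-verify loop described in the post can be sketched in a few lines. This is a generic greedy variant with toy stand-in models, not the EAGLE-3 method or the vLLM implementation; `speculative_decode`, `greedy_decode`, `toy_target`, and `toy_draft` are hypothetical names introduced for illustration only.

```python
from typing import Callable, List

Token = int
NextToken = Callable[[List[Token]], Token]  # greedy next-token function


def greedy_decode(target: NextToken, prompt: List[Token], max_new: int) -> List[Token]:
    """Baseline: one verifier call per generated token."""
    out = list(prompt)
    for _ in range(max_new):
        out.append(target(out))
    return out


def speculative_decode(target: NextToken, draft: NextToken,
                       prompt: List[Token], max_new: int, k: int = 4) -> List[Token]:
    """Greedy draft-and-verify decoding; returns prompt plus max_new tokens."""
    out = list(prompt)
    goal = len(prompt) + max_new
    while len(out) < goal:
        # 1. The small draft model proposes up to k tokens autoregressively.
        proposal: List[Token] = []
        for _ in range(min(k, goal - len(out))):
            proposal.append(draft(out + proposal))
        # 2. The verifier checks each proposed position. A real system scores
        #    all positions in one batched forward pass; here it is a loop.
        accepted: List[Token] = []
        for tok in proposal:
            expected = target(out + accepted)
            accepted.append(expected)  # always keep the verifier's token
            if tok != expected:
                break                  # first mismatch: drop the rest of the draft
        out.extend(accepted)
    return out


# Toy models (assumptions): the draft agrees with the target except after token 5.
def toy_target(ctx: List[Token]) -> Token:
    return (ctx[-1] * 3 + 1) % 7


def toy_draft(ctx: List[Token]) -> Token:
    return 0 if ctx[-1] == 5 else (ctx[-1] * 3 + 1) % 7
```

Because every kept token is the verifier's own greedy choice, the result matches plain greedy decoding with the verifier alone; the speedup in a real system comes from verifying several drafted positions per large-model pass.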

Rethinking Generalization in Reasoning SFT: A Conditional Analysis on Optimization, Data, and Model Capability. Paper: https://t.co/AFqLfOfK3R https://t.co/j7gHnlDofv

NEW paper from Apple. Interesting idea: "Attention to Mamba". The paper introduces a two-stage recipe for cross-architecture distillation from Transformers into Mamba. Naive distillation fails to preserve teacher performance. Their trick: first distill the Transformer into a linearized-attention student using a kernel adaptation, then transfer that student into a pure Mamba with no attention blocks. On a 1B model trained on 10B tokens, the Mamba student hits 14.11 perplexity against a 13.86 Pythia-1B teacher, nearly matching quality at linear-time inference cost. If you can reliably convert trained Transformers into state-space models without retraining from scratch, the entire open-weights ecosystem becomes cheaper to serve at long context. This is the kind of quiet infrastructure work that decides which architectures actually get deployed in agent stacks. Paper: https://t.co/h7k7OrG8Qj Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c
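To see why a linearized-attention student is a natural stepping stone toward a state-space model, here is a minimal sketch of causal linear attention computed two ways: as a quadratic sum over past positions, and as a fixed-size recurrent state update. The feature map `phi` (elu(x) + 1) is an assumed, commonly used choice; the paper's actual kernel adaptation is not described in the post, and all function names here are hypothetical.

```python
import math
from typing import List

Vec = List[float]


def phi(x: Vec) -> Vec:
    """Positive feature map elu(x) + 1 (an assumption, not the paper's kernel)."""
    return [xi + 1.0 if xi > 0 else math.exp(xi) for xi in x]


def dot(a: Vec, b: Vec) -> float:
    return sum(x * y for x, y in zip(a, b))


def linear_attention_quadratic(Q: List[Vec], K: List[Vec], V: List[Vec]) -> List[Vec]:
    """Causal kernel attention, O(T^2): each step revisits every past key."""
    out = []
    for t in range(len(Q)):
        w = [dot(phi(Q[t]), phi(K[s])) for s in range(t + 1)]
        norm = sum(w)
        out.append([sum(w[s] * V[s][j] for s in range(t + 1)) / norm
                    for j in range(len(V[0]))])
    return out


def linear_attention_recurrent(Q: List[Vec], K: List[Vec], V: List[Vec]) -> List[Vec]:
    """Same result, O(T): carry a fixed-size state (S, z) from step to step."""
    d_k, d_v = len(K[0]), len(V[0])
    S = [[0.0] * d_v for _ in range(d_k)]  # running sum of phi(k) v^T
    z = [0.0] * d_k                        # running sum of phi(k)
    out = []
    for q, k, v in zip(Q, K, V):
        fq, fk = phi(q), phi(k)
        for i in range(d_k):
            z[i] += fk[i]
            for j in range(d_v):
                S[i][j] += fk[i] * v[j]
        norm = dot(fq, z)
        out.append([sum(fq[i] * S[i][j] for i in range(d_k)) / norm
                    for j in range(d_v)])
    return out
```

The recurrent form keeps a state whose size does not grow with sequence length, which is the same shape of computation a state-space model performs; that is presumably what makes the second distillation stage tractable.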

Since Anthropic publish their system prompts we can generate a diff between Claude Opus 4.6 and 4.7 - here are my notes on what's changed https://t.co/IQHuvLGmwO

@jeremyphoward Check this out! I used Lean4 to emit MLIR by way of StableHLO/IREE to train image recognition networks, with proofs for the backprop operations! https://t.co/HqYG6KflSO

(1/5) FP4 hardware is here, but 4-bit attention still kills model quality, blocking true end-to-end FP4 serving. To fix that, we propose Attn-QAT, the first systematic study of quantization-aware training for attention. The result: FP4 attention quality is comparable to BF16 attention with 1.1x–1.5x higher throughput than SageAttention3 on an RTX 5090 and 1.39x speedup over FlashAttention-4 on a B200. Blog: https://t.co/NxVSXKWEgI Code: https://t.co/6irFgQ7GeM Checkpoints: https://t.co/GsrzbJlRY8
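For readers unfamiliar with quantization-aware training, the core building block is "fake quantization": values are rounded to the low-precision grid in the forward pass and immediately converted back to float, so the model learns under quantization error. The sketch below rounds to the FP4 E2M1 grid with a single shared scale. It is an illustrative simplification, not the Attn-QAT recipe; `fake_quant_fp4` is a hypothetical name, and real FP4 kernels typically use per-block scales.

```python
import math
from typing import List

# Magnitudes representable in FP4 E2M1 (1 sign, 2 exponent, 1 mantissa bit).
E2M1_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def fake_quant_fp4(xs: List[float]) -> List[float]:
    """Round to the FP4 grid with one shared scale, then dequantize to float.

    Assumption: a single per-tensor scale that maps the largest magnitude
    to 6.0, the largest E2M1 value.
    """
    amax = max((abs(x) for x in xs), default=0.0)
    if amax == 0.0:
        return [0.0 for _ in xs]
    scale = amax / E2M1_GRID[-1]
    out = []
    for x in xs:
        # Nearest representable magnitude, then restore the sign and scale.
        mag = min(E2M1_GRID, key=lambda g: abs(abs(x) / scale - g))
        out.append(math.copysign(mag * scale, x))
    return out


print(fake_quant_fp4([6.0, -3.0, 0.4, 1.2]))  # [6.0, -3.0, 0.5, 1.0]
```

In training, rounding has zero gradient almost everywhere, so QAT setups commonly pass gradients through the rounding step unchanged (a straight-through estimator). Whether Attn-QAT does exactly this for attention is covered in the linked blog, not in this sketch.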

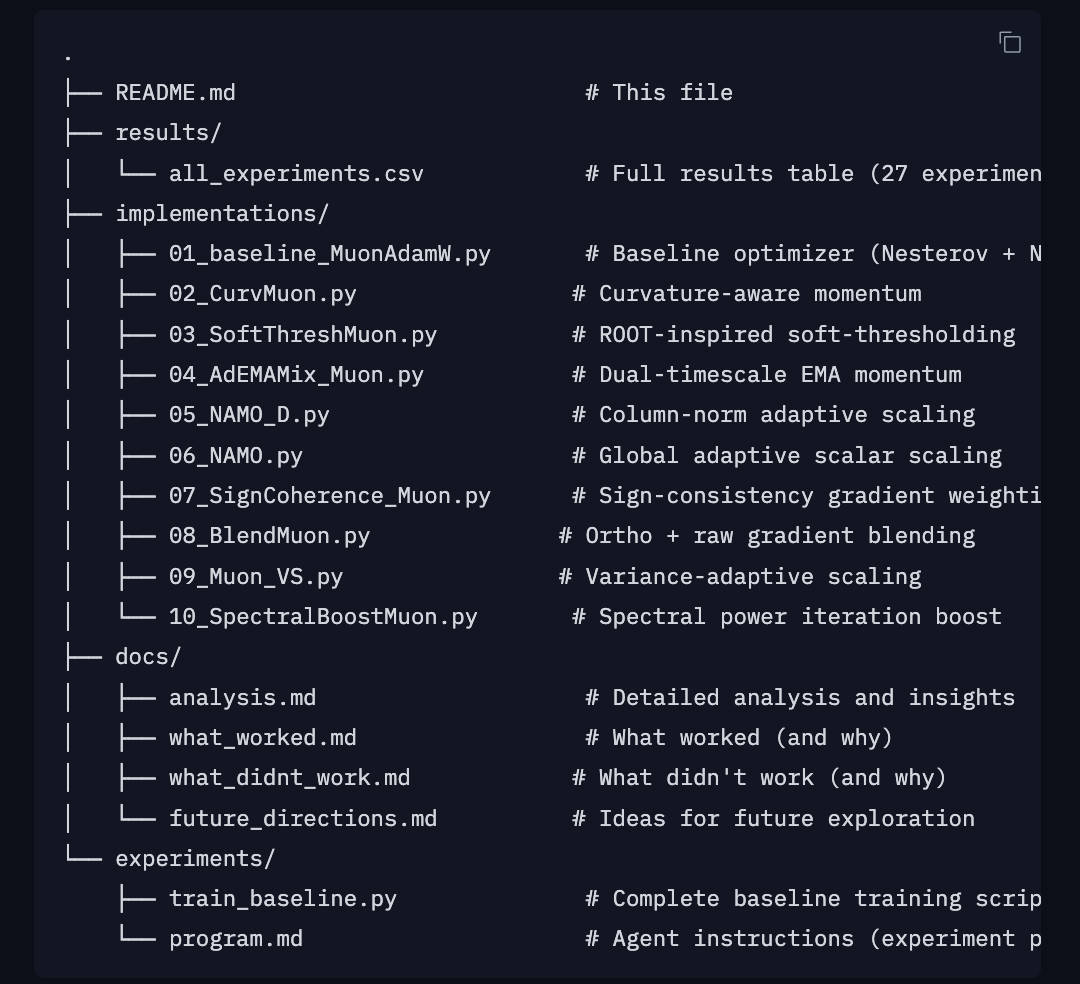

Ran autoresearch on hf to see whether anything can beat the MuonAdamW baseline. Biggest takeaway: NS orthogonalization is a very strong attractor that absorbs most gradient modifications you throw at it. See all the artifacts at https://t.co/S5DY7MezUp https://t.co/XyIEMeZ4Ft
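One way to read the "attractor" remark: Newton-Schulz (NS) orthogonalization pushes every singular value of the update toward 1, so changes that only rescale the gradient vanish after the NS step. The sketch below uses the classic cubic Newton-Schulz iteration run to convergence, which is a swapped-in simplification; Muon implementations typically use a tuned polynomial with only a few steps. Function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(A: Matrix, B: Matrix) -> Matrix:
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


def transpose(A: Matrix) -> Matrix:
    return [list(row) for row in zip(*A)]


def newton_schulz(G: Matrix, steps: int = 30) -> Matrix:
    """Approximate the orthogonal polar factor of a full-rank matrix G.

    Classic cubic iteration X <- 1.5 X - 0.5 X X^T X, applied after
    Frobenius normalization so all singular values start in (0, 1].
    """
    norm = math.sqrt(sum(x * x for row in G for x in row))
    X = [[x / norm for x in row] for row in G]
    for _ in range(steps):
        XXtX = matmul(matmul(X, transpose(X)), X)
        X = [[1.5 * x - 0.5 * y for x, y in zip(rx, ry)]
             for rx, ry in zip(X, XXtX)]
    return X


G = [[2.0, 1.0], [0.0, 1.0]]
O1 = newton_schulz(G)
# Rescaling the gradient by 10x leaves the orthogonalized update unchanged.
O2 = newton_schulz([[10.0 * x for x in row] for row in G])
```

Here `O1` and `O2` agree up to floating-point error, and `O1` times its transpose is approximately the identity. In the converged limit the output depends only on the singular vectors of `G`, which is consistent with many gradient modifications having little effect downstream.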

🚀 NEW GEMMA 4 31B TURBO DROPPED Runs on a SINGLE RTX 5090: ⚡️18.5 GB VRAM only (68% smaller) 🧠51 tok/s single decode 💻1,244 tok/s batched 🤖15,359 tok/s prefill ← yes, fifteen thousand 🚨2.5× faster than base model with basically zero quality loss. It hits Sonnet-4.5 level on hard classification tasks… at 1/600th the cost. Local models are shipping faster than we can test 👇🏻 🔥 HF: https://t.co/XUvVZBj9AX

@replicate Code if you'd like to replicate this without starting from scratch: https://t.co/AzqBsiOHk1 Please tag me so I can see your pretty results if you try this on any different taxa :)

OpenClaw 2026.4.10 🦞 🧠 Active Memory plugin 🎙️ local MLX Talk mode 🤖 Codex app-server harness plugin 🧾 Teams pins/reactions/read actions 🛡️ SSRF hardening + launchd fixes. Stability, but with attitude 🦞 https://t.co/PW7WDumTf1