Your curated collection of saved posts and media

Is there a collection somewhere of the best agent/coding harnesses for each model, especially open-source and local ones? In my opinion, the biggest reason people are struggling with open/local models these days is that the agent/coding harnesses in most open agents are not designed for them, and people expect things to magically work when they switch models from the default.

Why are you still using React when you can vibe code something better in a day?

working on a fun little retro terminal to mess with Hermes from @NousResearch combined with Qwen vision. Check it out... https://t.co/EAu8aIFeYT https://t.co/xg1no153gd

Research we co-authored on subliminal learning—how LLMs can pass on traits like preferences or misalignment through hidden signals in data—was published today in @Nature. Read the paper: https://t.co/b1BYwcW9dH

I strongly suspect that Claude Mythos is a looped language model, as described in the paper "Scaling Latent Reasoning via Looped Language Models" from ByteDance. The authors of that paper called out graph search as one of the areas where looping provides a huge theoretical advantage over standard RLVR. And look at where Mythos blows out its competitors the most.

GDPval is one of the most important benchmarks of AI ability because it is based on human expertise. It compares expert human performance to AI performance using expert human judges who spend an average of an hour evaluating each answer. It also has holdout questions that are not public. It is also very expensive to run, and OpenAI controls it, so I understand the need for alternatives. But GDPval-AA is not a good alternative. GDPval-AA has AI models respond to the public dataset of GDPval questions, and then asks Gemini 3.1 to judge which of two LLM answers is better. There is no reason to suspect that this is highly correlated with what an expert human would think, nor is there a human baseline to compare to.

Hermes hands-on guide: concepts, core mechanisms, and real-world use cases. https://t.co/m6bTIbPOck

Let's talk parsing tables. Two days ago we launched ParseBench, the first document OCR benchmark built for AI agents. This deep dive breaks down TableRecordMatch (GTRM), our metric for evaluating complex tables the way your pipeline actually consumes them: as records keyed by column headers. https://t.co/2sq5ncGiel
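
To make "records keyed by column headers" concrete, here is a minimal sketch in Python. It is not the actual TableRecordMatch (GTRM) definition, which the post does not spell out; the function names `table_to_records` and `record_match_f1`, the exact-match comparison, and the F1 scoring are all assumptions chosen for illustration.

```python
from collections import Counter

def table_to_records(header, rows):
    """Turn each row into a set of (column header, cell) pairs.

    Using a frozenset makes a record independent of column order,
    which is the point of keying cells by header (an assumption here,
    not the published metric).
    """
    return [frozenset(zip(header, row)) for row in rows]

def record_match_f1(pred, gold):
    """Hypothetical score: F1 over exactly matching records.

    `pred` and `gold` are (header, rows) tuples. Row order is ignored;
    duplicate rows are counted via multiset intersection.
    """
    pred_counts = Counter(table_to_records(*pred))
    gold_counts = Counter(table_to_records(*gold))
    matched = sum((pred_counts & gold_counts).values())
    if matched == 0:
        return 0.0
    precision = matched / sum(pred_counts.values())
    recall = matched / sum(gold_counts.values())
    return 2 * precision * recall / (precision + recall)

gold = (["name", "qty"], [["apple", "3"], ["pear", "5"]])
# Same content with columns swapped: every record still matches.
reordered = (["qty", "name"], [["3", "apple"], ["5", "pear"]])
# One cell misread: only one of the two records matches.
one_wrong = (["name", "qty"], [["apple", "3"], ["pear", "6"]])

print(record_match_f1(reordered, gold))
print(record_match_f1(one_wrong, gold))
```

Under these assumptions, a parser that swaps column order is not penalized, while a single misread cell costs the whole record it belongs to.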

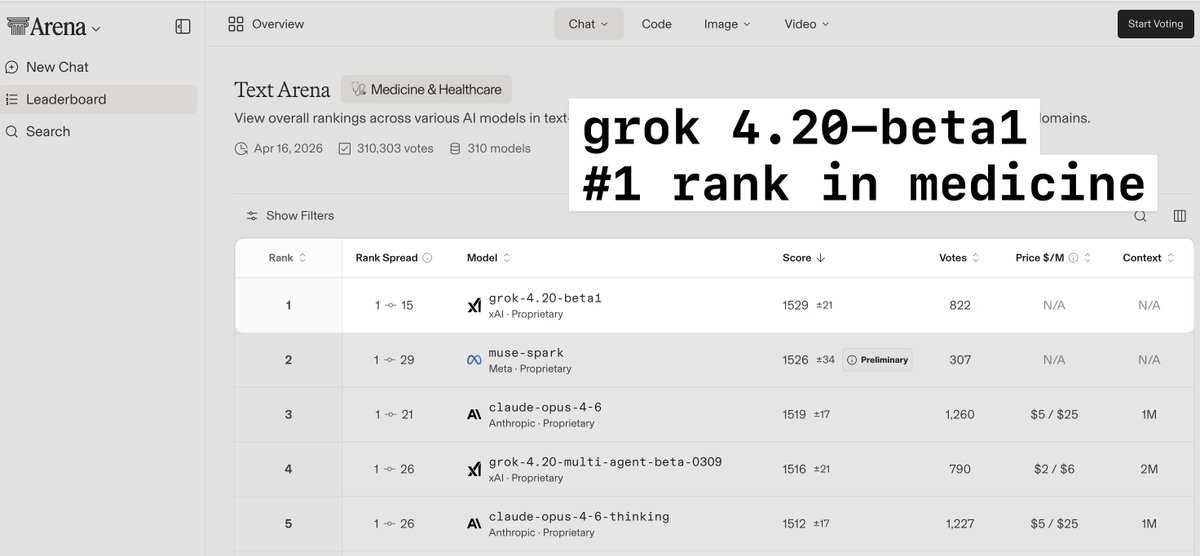

> grok4.20-beta1 is a much smaller model than opus but is #1 ranked in medicine and healthcare
> 4.3 and 4.4 will be much larger models, and likely will have a significant boost in performance on complex medical cases
> this is massively important in providing accurate diagnostic guidance and advice to both providers and patients

@techdevnotes Supplemental training has been added to 4.3. Grok 4.4 will be twice the size (1T) with training data through early April. Probably ready for release in early May. Grok 4.5 will be 1.5T and hopefully out by late May.

What if virtual humans could see, think, and act in 3D worlds like us?! We present Visually-Grounded Humanoid Agents 🎉 Our agents 👀perceive via RGB-D vision, 🧠plan with context-aware reasoning, and 🏃act with full-body motion in 3D scenes. Check 🔗 https://t.co/kd0zCu7W2h https://t.co/VQZnjE2Pnx