@dair_ai

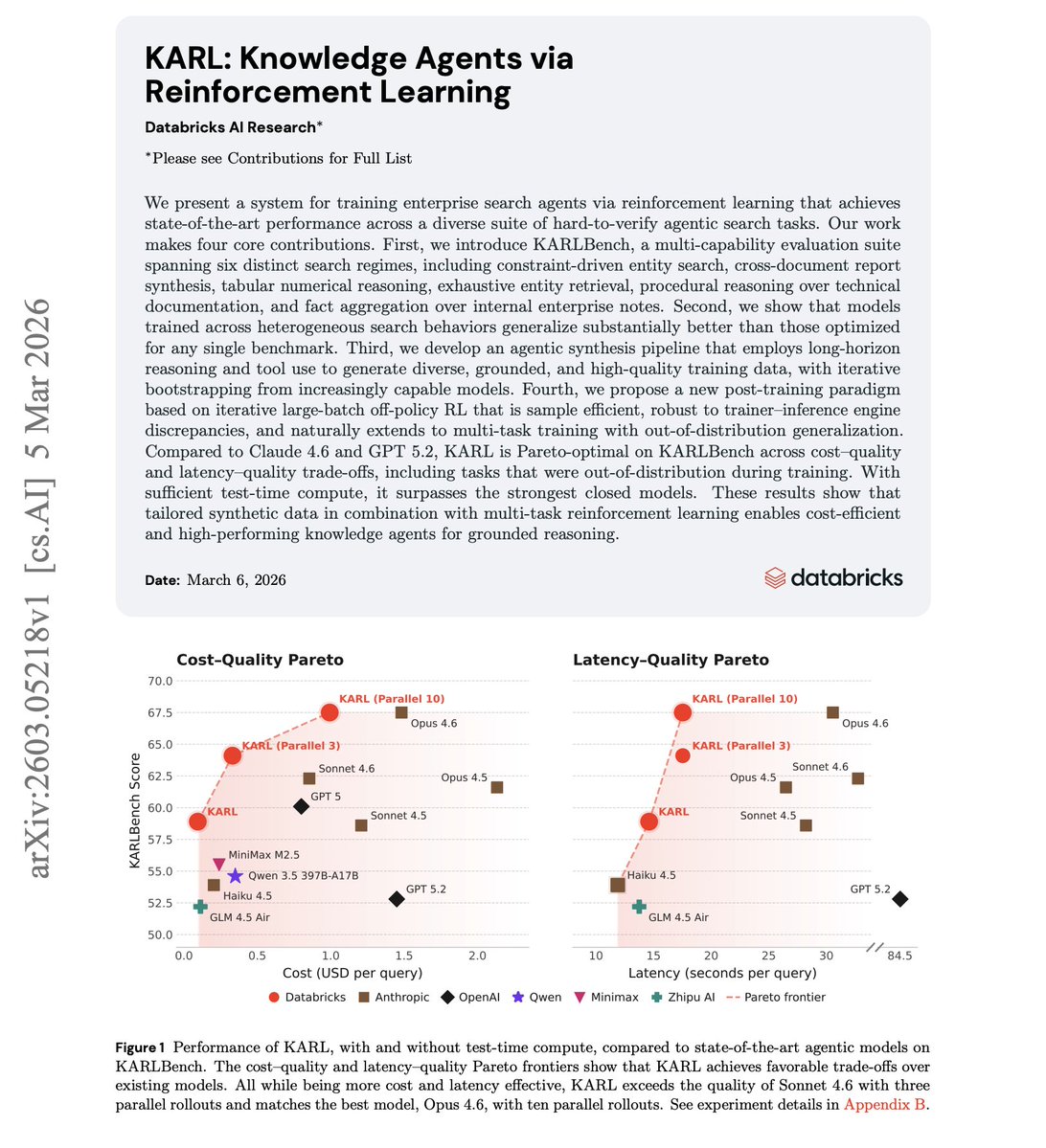

New research from Databricks on training enterprise search agents via RL.

KARL introduces a multi-task RL approach where agents are trained across heterogeneous search behaviors, including constraint-driven entity search, cross-document synthesis, and tabular reasoning.

The resulting agent generalizes substantially better than agents optimized for any single benchmark.

KARL is Pareto-optimal on both cost-quality and latency-quality trade-offs compared to Claude 4.6 and GPT 5.2. With sufficient test-time compute, it surpasses the strongest closed models while being more cost-efficient.

Paper: https://t.co/CToEmDU89J

Learn to build effective AI agents in our academy: https://t.co/LRnpZN7L4c