@ModelScope2022

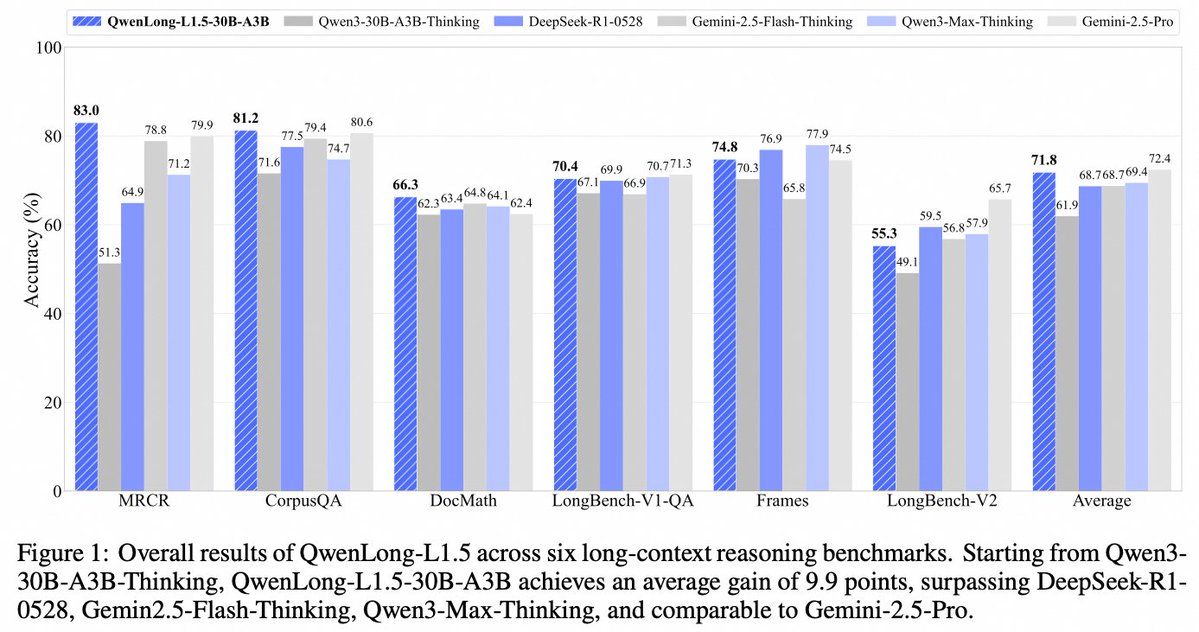

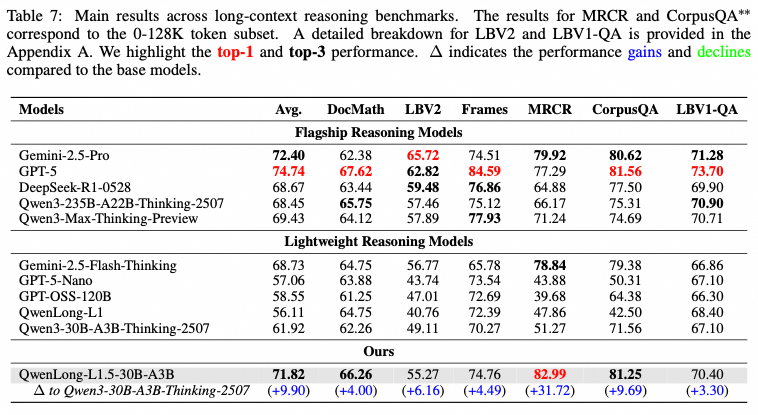

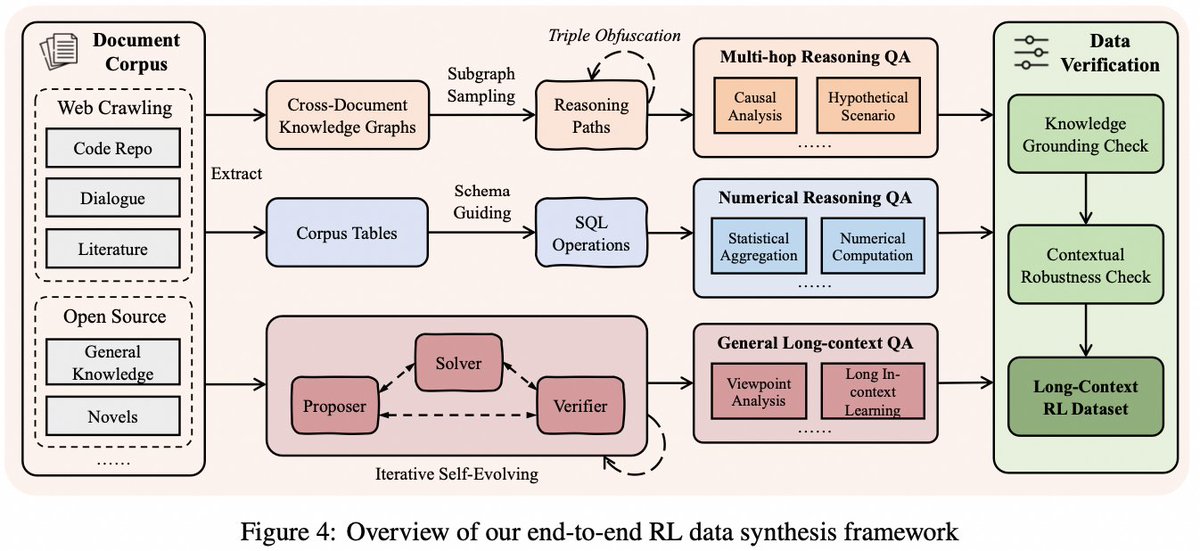

🚀 New on ModelScope: QwenLong-L1.5 is now fully open-source! A 30B model (3B active params) that matches GPT-5 & Gemini-2.5-Pro in long-context reasoning.

🔥 Key wins:
✅ +31.7 pts on OpenAI’s MRCR (128K context → SOTA across all models)
✅ Matches Gemini-2.5-Pro on 6 major long-QA benchmarks
✅ +9.69 on CorpusQA, +6.16 on LongBench-V2

How? Three breakthroughs:
1️⃣ Synthetic data at scale: 14.1K long-reasoning samples from 9.2B tokens, no human labeling. Avg. length: 34K tokens (max: 119K!).
2️⃣ Stable RL training: Task-balanced sampling + Adaptive Entropy-Controlled Policy Optimization (AEPO) for reliable long-sequence learning.
3️⃣ Memory-augmented architecture: Iterative memory updates beyond the 256K window → +9.48 pts on 1M–4M token tasks!

All weights, data recipes, and training code are open:
📄 Paper: https://t.co/MzlHXeardA
📥 Model: https://t.co/kE3q4HLhdk
💻 GitHub: https://t.co/KghF3mp9H3