@Xianbao_QIAN

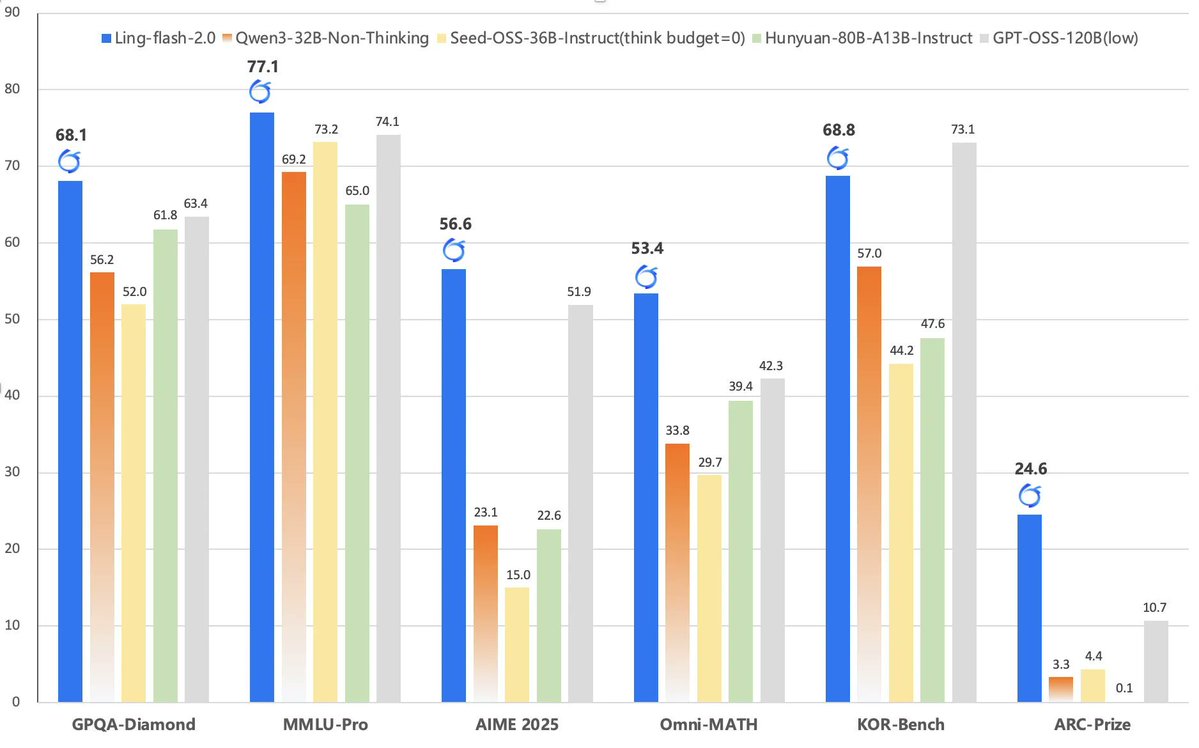

@AntLingAGI Ling-Flash-2.0 from Ant Finance just dropped on @huggingface:

- 100B MoE, 6.1B active (4.8B non-embedding)
- 128k context length
- Trained on 20T+ tokens
- Base model available too
- Great performance on reasoning tasks
- MIT license

https://t.co/CGyjw779Ak