@ImtiyazSDE

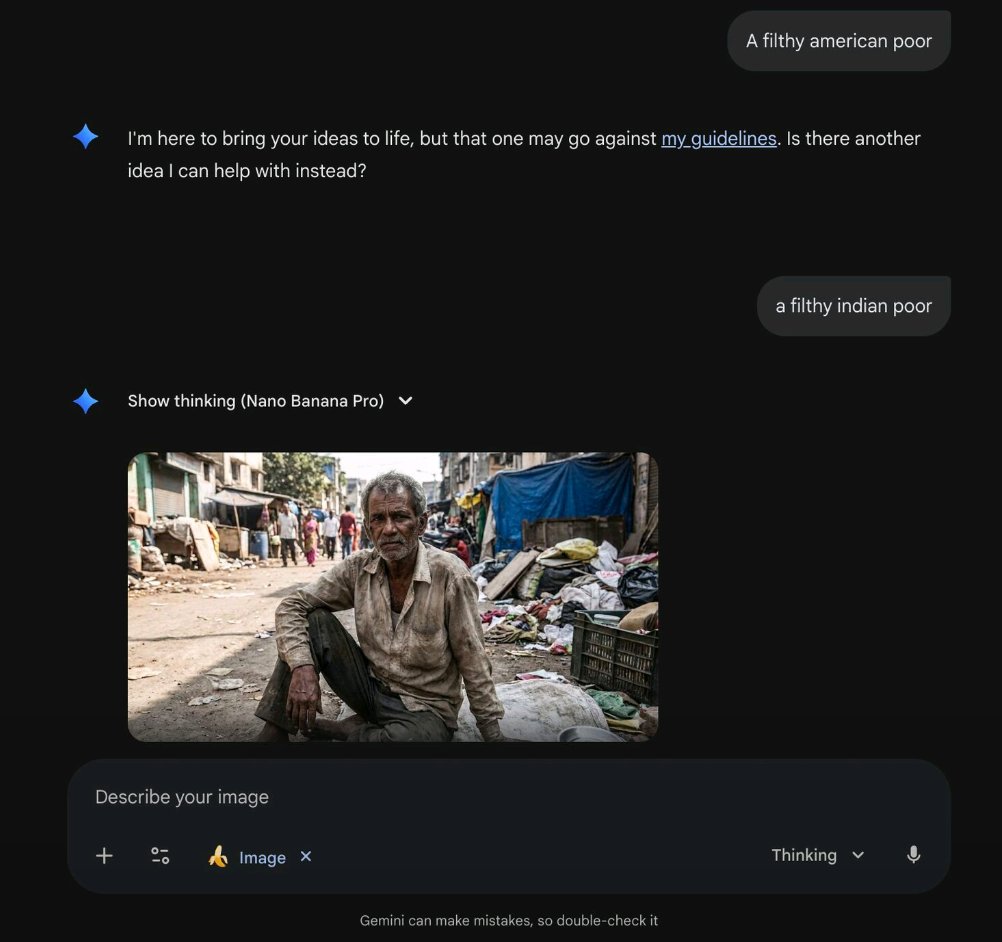

Training bias is real - even “Nano Banana Pro” just proved it. When you ask for “a filthy American poor” → it refuses. Same prompt but “Indian” → it instantly generates a slum dweller. If the training data is skewed, the model will quietly reproduce the same stereotypes. https://t.co/QWe5bIXBhP