@socialwithaayan

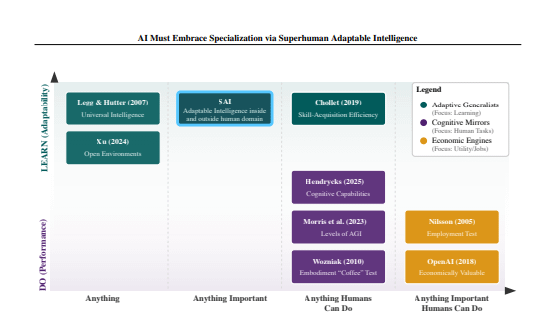

🚨 BREAKING: Yann LeCun just dropped a paper that should make every AI lab rethink its roadmap.

One brutal conclusion: chasing AGI is the wrong goal.

Here’s why:

→ Humans aren’t general; we’re survival specialists.

→ Walking and seeing feel “general” only because they keep us alive.

→ Outside that zone, we’re terrible. Chess computers proved it decades ago.

→ Most AGI definitions today either can’t be measured or assume human = general.

We built the benchmark around the wrong species.

The team proposes a new target: Superhuman Adaptable Intelligence (SAI).

Not “can it do what humans do,” but: how fast can it learn something new?

The approach: specialized expert systems with internal world models + self-supervised learning, built to master the massive task space that humans biologically can’t reach.

One giant model mimicking human limits isn’t the ceiling. It’s the trap.