@charlespacker

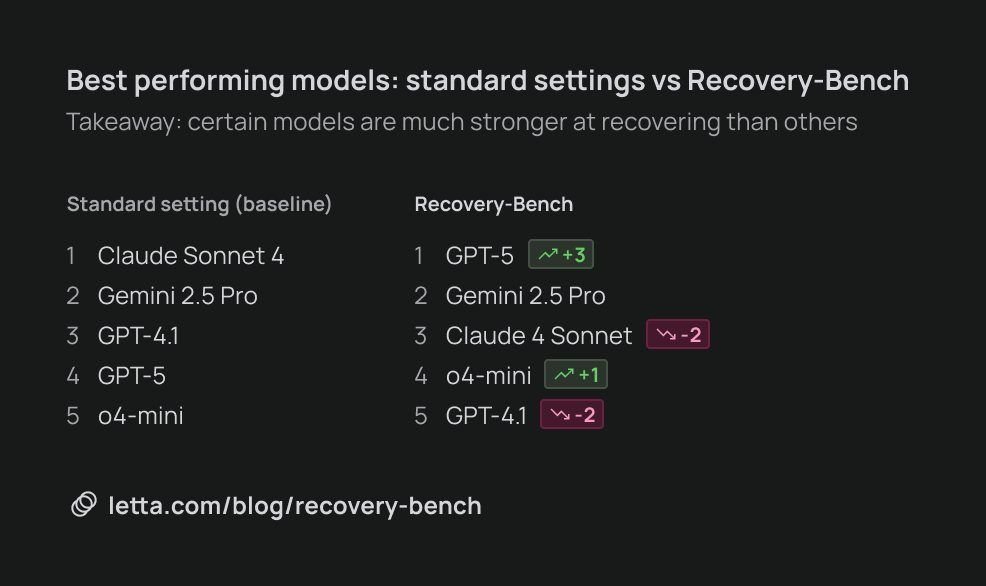

Prior to GPT-5, Sonnet & Opus were the undisputed kings of AI coding. It turns out the GPT-5 is significantly better than Sonnet in one key way: the ability to recover from mistakes. Today we're excited to release our latest research at @Letta_AI on Recovery-Bench, a new benchmark for measuring how well model can recover from errors and corrupted states. Coding agents often get confused by past mistakes, and mistakes that accumulate over time can quickly poison the context window. In practice, it can often be better to "nuke" your agent's context window and start fresh once your agent has accumulated enough mistakes in its message history. The inability of current models to course-correct from prior mistakes is a major barrier towards continual learning. Recovery-Bench builds on ideas from Terminal-Bench to create challenging environments where an agent needs to recover from a prior failed trajectory. A surprising finding is that the best performing models overall are clearly not the best performing "recovery" models. Claude Sonnet 4 leads the pack in overall coding ability (on Terminal-Bench), but GPT-5 is a clear #1 on Recovery-Bench. Recovering from failed states is a challenging unsolved task on the road towards self-improving perpetual agents. We're excited to contribute our research and benchmarking code to the open source community to push the frontier of continual learning & open AI.