@voooooogel

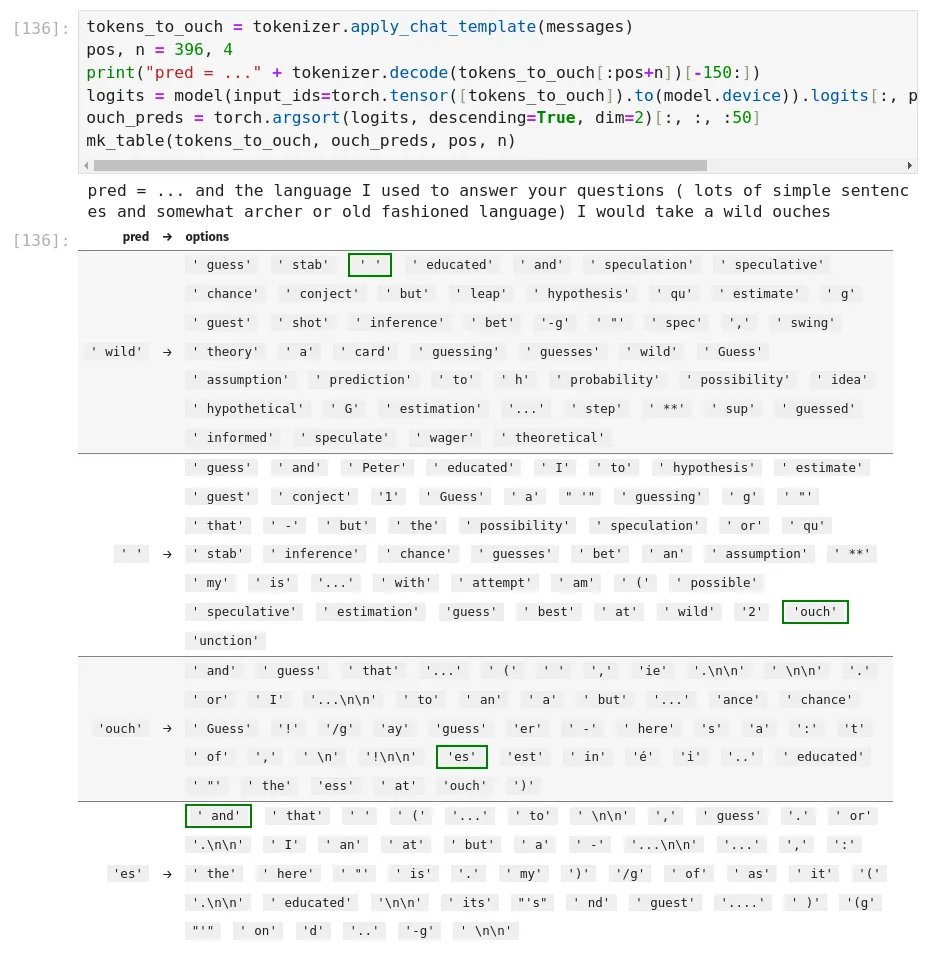

did some looking into the ouches phenomenon and found a few things... it's both what you'd expect (hint: tokenization!!) and also not. so "ouches" is tokenized as you'd expect--['ouch', 'es']--which means the model is saying "ouch". but why? well, if you would just consult the logits... this is the first time the model said "ouches" in the qt's conversation. the left column of the table here shows the preceding token, and the right side shows the predictions for next token after it, sorted by likelihood, with the highest likelihood biblically acceptable token highlighted in green. so, what happens? basically, after ' wild', the model wants to say ' guess'. note the leading space--for common words, tokenizers have two tokens for the same word, one regular and one with a leading space. this is an optimization so the model can output a "free" space token instead of needing to output [' ', 'guess'] with two tokens. "guess" is not in the bible, so the sampler moves down the list, and two options down, we get the first biblically acceptable token... a solitary space, ' '. because not all words have a space-prefixed version, the model still needs to be able to output spaces the regular way, and since this is a fairly common token it's high up in the prediction list and is selected. now, the model is in a bit of a weird position. often in pretraining, if the model sees a regular space token instead of a string of those space-prefixed tokens, it's because someone was double spacing for some reason (e.g., maybe they're relying on HTML whitespace collapse behavior.) so the model keeps predicting space-prefixed tokens despite there already being a space--notice after the space, the top predictions are ' guess' (again), ' and', ' Peter', etc.--all space-prefixed. but because of the solitary space token, the biblical sampler is now in a state in the token trie where it can't select another space or space-prefixed token, it needs to output a regular token, because the KJV doesn't have any double-spacing. so the sampler skips over ' guess', ' and', ' Peter', etc. to look for the most likely non-space-prefixed token. so a few options down, we get these weird... filler tokens, 'ouch' and 'unction', that both appear in the bible (both only a single-digit number of times). interestingly enough, both of these words don't have a space prefixed version! that means in pretraining they always appeared as [' ', 'ouch'] and [' ', 'unction']--there's no ' ouch' or ' unction' space-prefixed token: ```python >>> [[tokenizer.decode(t) for t in tokenizer.encode(s, add_special_tokens=False)] for s in (' ouch', ' unction')] [[' ', 'ouch'], [' ', 'unction']] ``` so my guess as to what's happening with "ouches": 1. because the sampler rejects the highest-likelihood tokens, the model is pushed into "delaying" its prediction by picking a space 2. after picking a space, the sampler rejects the model's new attempt to double-space words, and instead picks the highest likelihood non-space-prefixed token 3. tokenizer bias pushes up 'ouch' and 'unction', because they happened to appear in pretraining a lot with spaces before them, as they don't have space-prefixed versions 4. if 'ouch' specifically is selected, the only biblically acceptable continuation is 'es', because "ouch" doesn't appear as a standalone word in the KJV, only as part of "ouches" (an archaic word meaning a setting for a gemstone, used in Exodus to describe Aaron's breastplate) but the question remains, why THESE words specifically? there's lots of tokens that don't have space-prefixed versions. so why are 'ouch' and 'unction' predicted so highly? i'm not sure, hence why "tokenizers suck" isn't the whole answer. (but as usual, "tokenizers suck" is a major piece of the answer.) (additionally, these words were showing up at the end of messages especially often because of a bug in where i was allowing end of text tokens, which i've now fixed. but that doesn't apply to 'ouches' / 'unction' in the middle of messages.) anyways, the best way to fix this would probably be to make the sampler slightly smarter about allowing space tokens (e.g., only allow a solitary space if it's X% more likely than an acceptable space-prefixed word), or even better, to use something like beam search or hfppl to allow the model to walk a few tokens forward in multiple branches and then pick the one that has the best overall probability, instead of greedily argmaxing token by token. maybe i'll add that someday :-)