@omarsar0

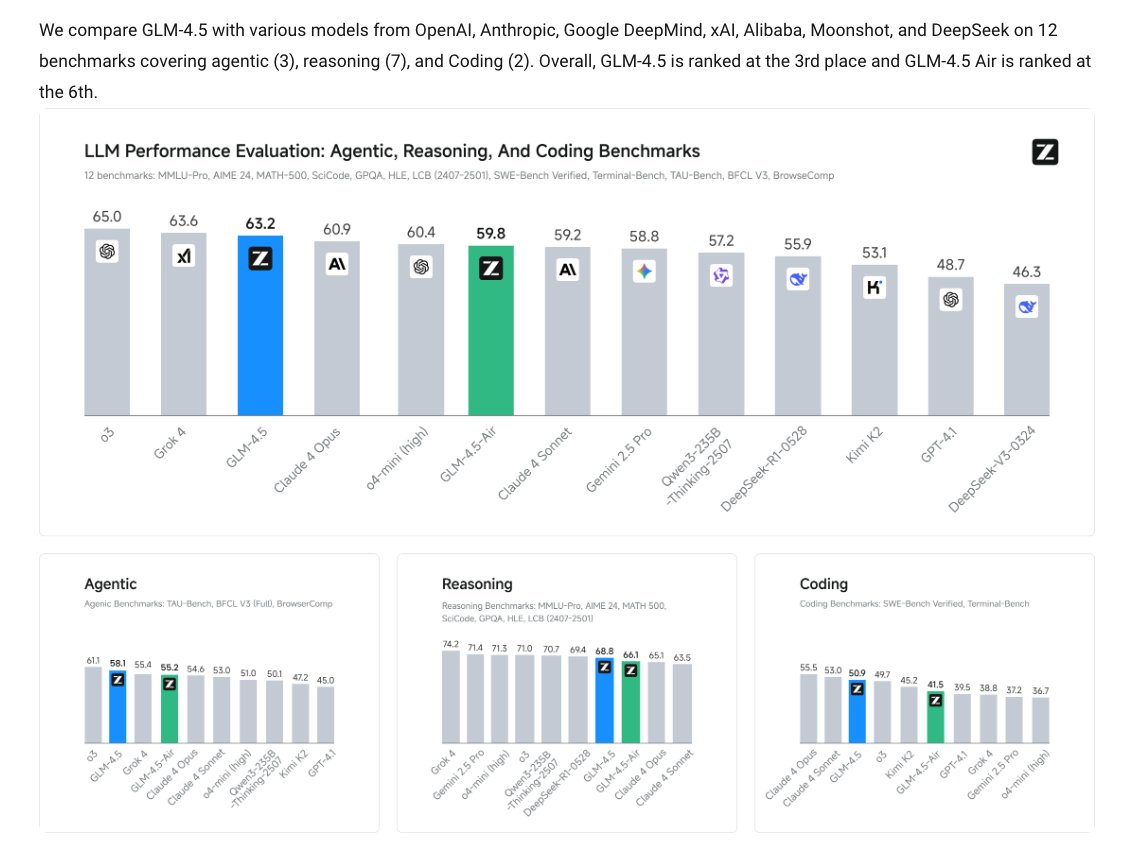

GLM-4.5 looks like a big deal!

> MoE Architecture
> Hybrid reasoning models
> 355B total (32B active)
> GQA + partial RoPE
> Multi-Token Prediction
> Muon Optimizer + QK-Norm
> 22T-token training corpus
> Slime RL Infrastructure
> Native tool use

Here's all you need to know: https://t.co/QXFr4QBxkk
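
To put the headline numbers in perspective, here is a quick back-of-the-envelope sketch. It uses only the figures quoted above (355B total, 32B active, 22T tokens); the variable names are mine, and the ratios are rough arithmetic, not anything reported by the GLM-4.5 authors.

```python
# Figures quoted in the post; everything derived below is plain arithmetic.
TOTAL_PARAMS = 355e9      # total parameters in the MoE model
ACTIVE_PARAMS = 32e9      # parameters active per token
TRAINING_TOKENS = 22e12   # size of the training corpus in tokens

# Share of the model's weights that are active for any single token.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

# Training tokens seen per parameter, counted against total and active weights.
tokens_per_total_param = TRAINING_TOKENS / TOTAL_PARAMS
tokens_per_active_param = TRAINING_TOKENS / ACTIVE_PARAMS

print(f"Active share of weights: {active_fraction:.1%}")
print(f"Tokens per total parameter: {tokens_per_total_param:.0f}")
print(f"Tokens per active parameter: {tokens_per_active_param:.0f}")
```

So roughly 9% of the weights are active per token, which is the usual appeal of an MoE design: the capacity of a very large model at the per-token compute of a much smaller one.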