@ipoupyrev

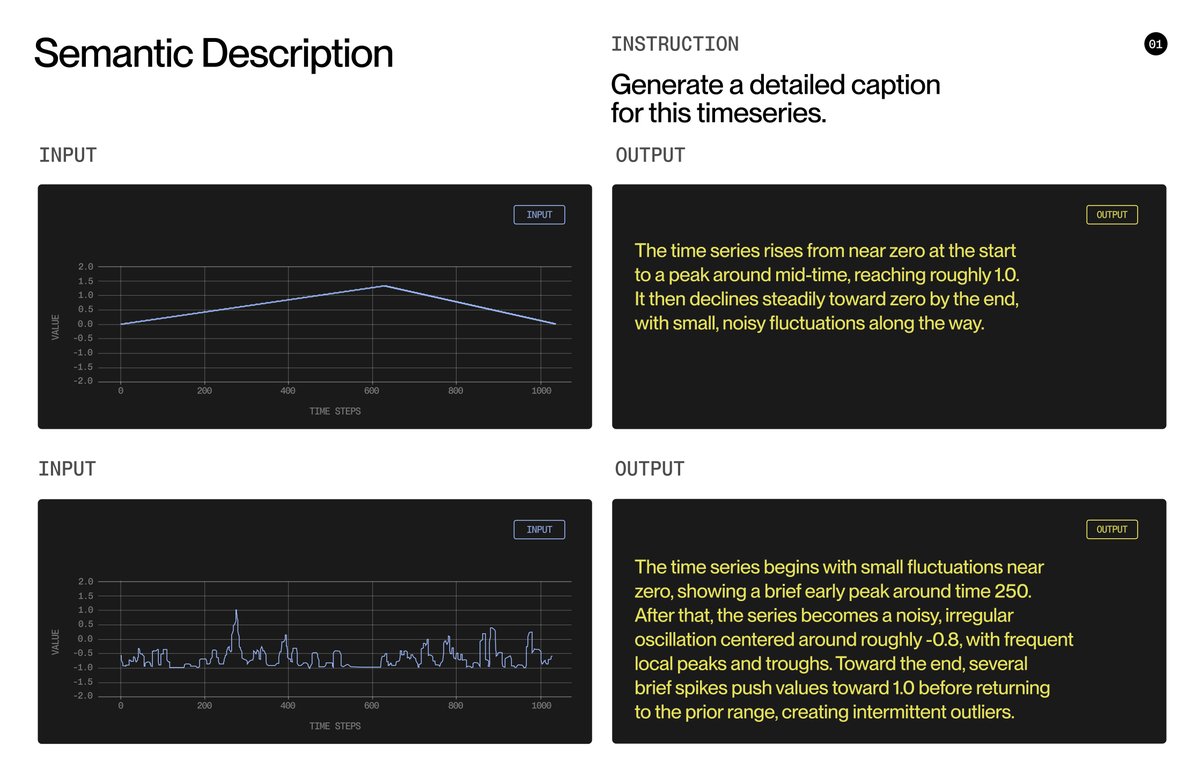

Introducing TimeFusion, our new multimodal foundation model that unlocks a unified language between humans and sensors.

For decades, organizations have had access to billions of sensor signals across industry, infrastructure, energy systems, and personal devices, but almost none of that data has been easy to understand or act on directly. TimeFusion changes that. You can *ask* a machine about vibration anomalies, *generate* new signals from a text description, or *forecast* what comes next, all in plain English.

Here is how it works:

🔹 TimeFusion is a general sensor–language fusion model: a 2-billion-parameter transformer trained to ingest and produce both natural language and raw time-series signals in a single continuous framework.

🔹 Unlike previous approaches that compress sensor data into narrow text-only formats, TimeFusion uses Universal Tokens to combine time-series signals and language inside one shared vocabulary. This lets the model work with physical data directly instead of translating it through various hacks, and perform forecasting, anomaly detection, filtering, imputation, captioning, QA, and generation through one unified interface.

🔹 TimeFusion outperforms much larger models like GPT-5, Claude Sonnet, GLM 4.6, and others on sensor-related tasks despite being orders of magnitude smaller.

And the model isn’t just translating signals into text. It can already do powerful text-to-signal transformations: forecasting the future of a waveform, reconstructing missing data, filtering noise, or reshaping a signal based on a natural-language prompt, producing new signals rather than text as output.

This opens the door to an entirely new category of interfaces with the physical world, where engineers, operators, doctors, city systems, and even consumers can converse with the machines and environments around them instead of digging through raw numbers and graphs.

A new way to talk to the physical world is here 🌍

#PhysicalAI