@LightningAI

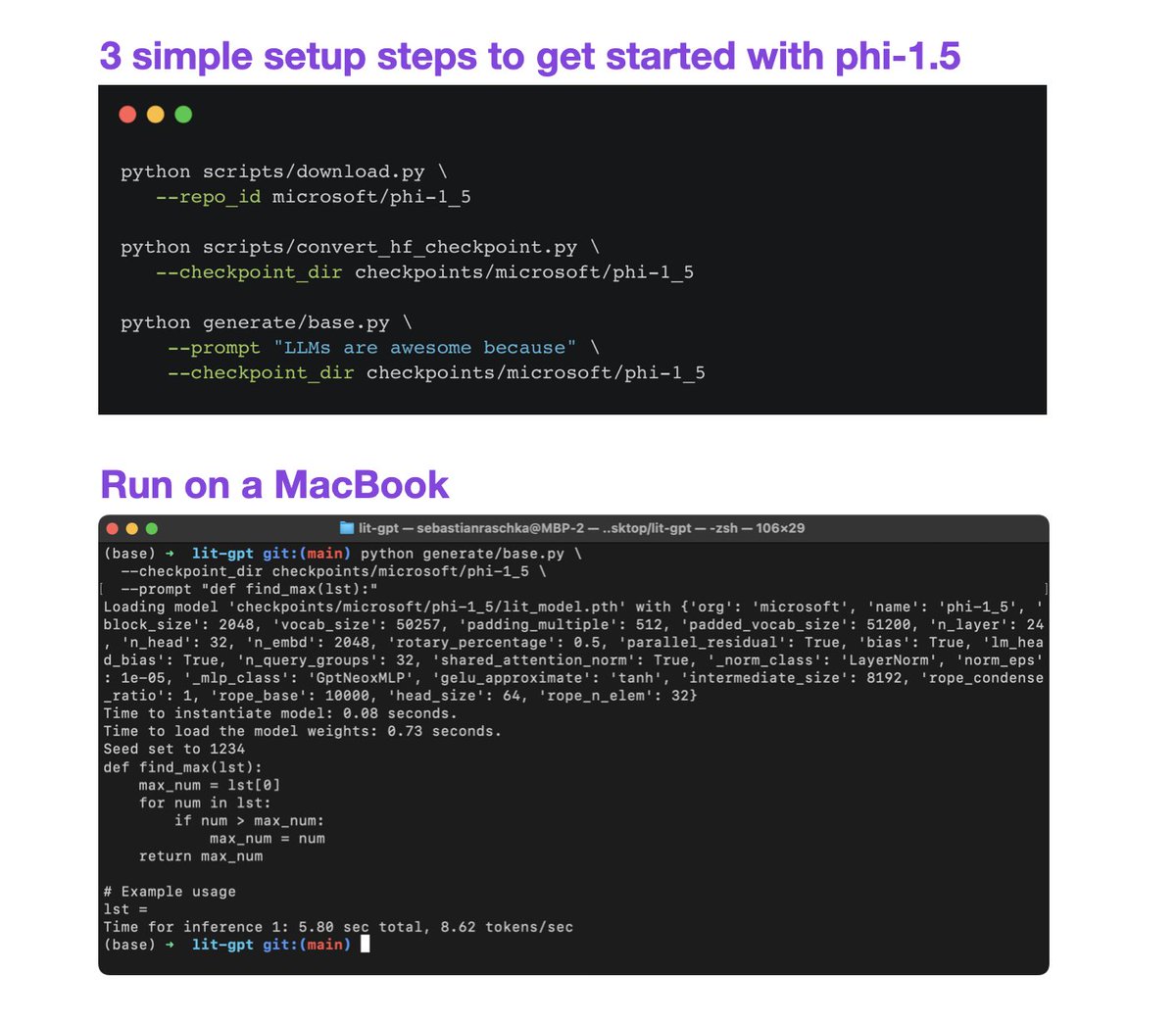

Looking for a small but very capable LLM to avoid out-of-memory errors? Lit-GPT now supports phi-1.5, a SOTA 1.3B-parameter LLM!

⚡️ Finetune it on a single GPU
⚡️ Pretrain it on a cluster
⚡️ Just use it on a MacBook

More info: https://t.co/Yk8dKmpedk

#LLMs #MachineLearning #DeepLearning