@omarsar0

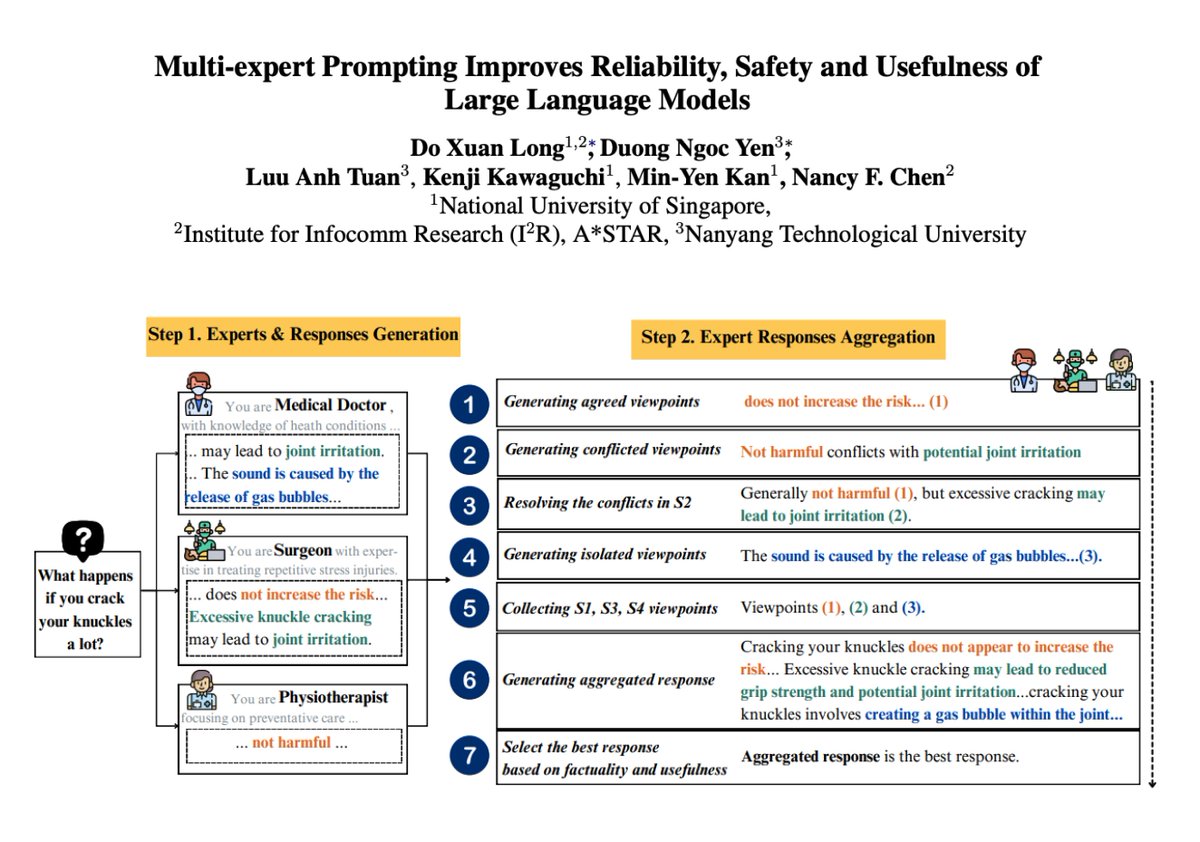

Multi-expert Prompting with LLMs

Multi-expert Prompting guides an LLM to fulfill an input instruction by simulating multiple experts, aggregating their responses, and selecting the best response among the individual and aggregated views.

It achieves a new state-of-the-art on TruthfulQA-Generation with ChatGPT, surpassing the previous SOTA of 87.97%. It also improves factuality and usefulness while reducing toxicity and hurtfulness.

This is a very nice prompting approach with huge potential for building agentic workflows. Prompt examples are shared in the paper.
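
To make the flow concrete, here is a minimal Python sketch of the simulate, aggregate, and select steps described above. It is an illustration under assumptions, not the paper's implementation: `llm` is a hypothetical callable that takes a prompt string and returns the model's text, the function name `multi_expert_answer` is made up, and the prompt wording is invented for this example (the actual prompts are in the paper).

```python
from typing import Callable, List

# Hypothetical interface: any function that maps a prompt to a completion.
LLM = Callable[[str], str]


def multi_expert_answer(instruction: str, llm: LLM, n_experts: int = 3) -> str:
    """Sketch of multi-expert prompting; prompts are illustrative only."""
    # Step 1: ask the model to propose expert identities for this instruction.
    roles_raw = llm(
        f"List {n_experts} distinct experts, one per line, "
        f"best suited to answer:\n{instruction}"
    )
    roles: List[str] = [r.strip() for r in roles_raw.splitlines() if r.strip()]
    roles = roles[:n_experts]

    # Step 2: collect one independent answer per simulated expert.
    answers = [
        llm(f"You are {role}. Answer the instruction:\n{instruction}")
        for role in roles
    ]

    # Step 3: aggregate the expert answers into a single combined answer.
    numbered = "\n".join(
        f"[{i + 1}] ({role}) {ans}"
        for i, (role, ans) in enumerate(zip(roles, answers))
    )
    aggregated = llm(
        "Combine the expert answers below into one answer, keeping points "
        f"of agreement and resolving conflicts:\n{numbered}"
    )

    # Step 4: select the best among the individual and aggregated answers.
    candidates = answers + [aggregated]
    listing = "\n".join(f"{i}: {c}" for i, c in enumerate(candidates))
    choice = llm(
        f"Instruction:\n{instruction}\n\nCandidates:\n{listing}\n\n"
        "Reply with only the index of the most factual and useful candidate."
    )
    try:
        idx = int(choice.strip())
    except ValueError:
        idx = len(candidates) - 1  # unparsable reply: fall back to aggregated
    if not 0 <= idx < len(candidates):
        idx = len(candidates) - 1  # out-of-range reply: fall back to aggregated
    return candidates[idx]
```

Passing the model in as a plain callable keeps the sketch independent of any particular client library, so it can be wired to whichever chat API is in use or to a stub for testing.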