@zach_nussbaum

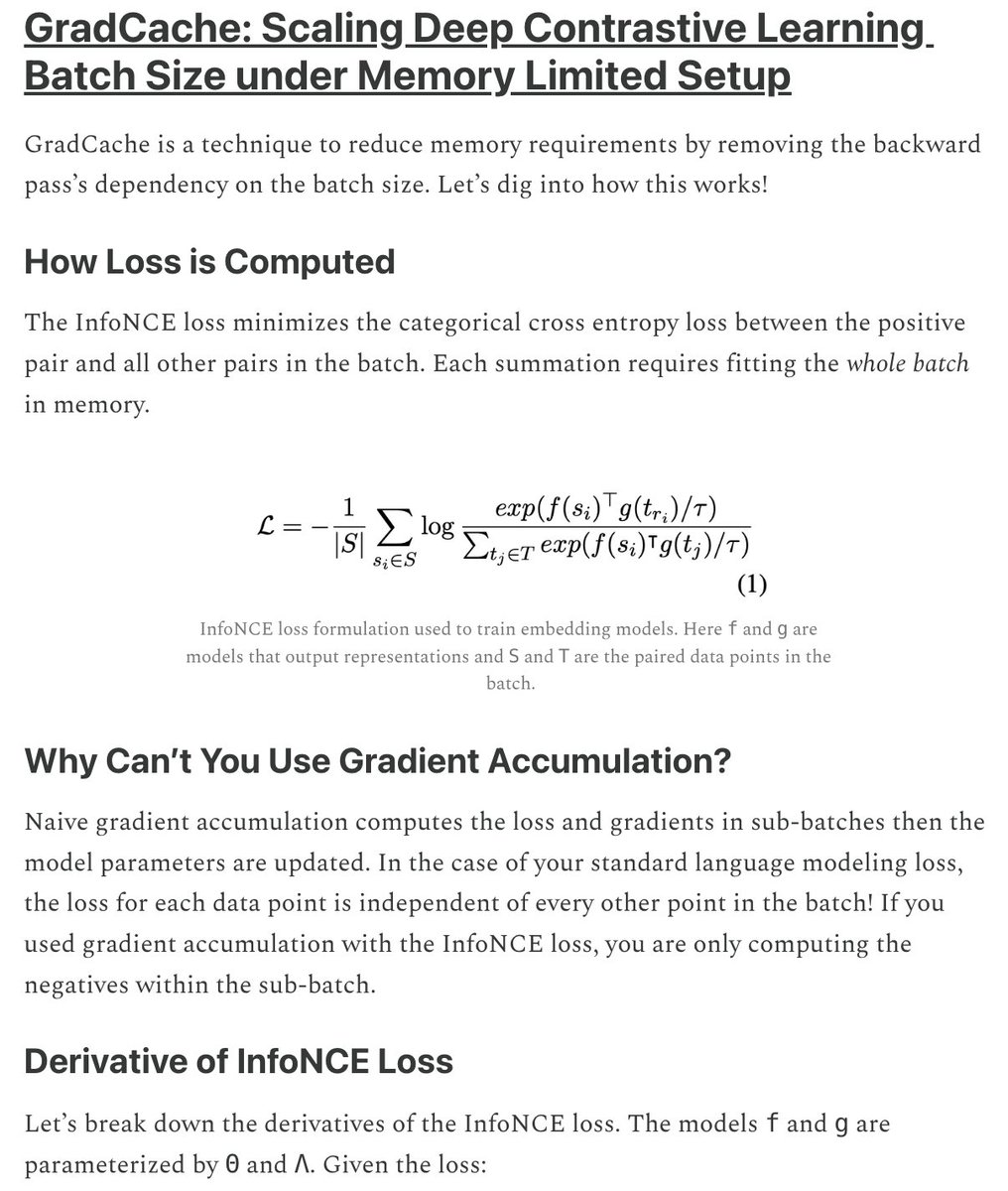

We trained all of the Nomic Embed models on limited compute. One trick that helped us train SoTA embeddings on 16 H100s? GradCache, a gradient checkpointing-like technique tailored for contrastive learning. I kept forgetting how it works, so I dug into the math and wrote about it… https://t.co/d0s8zR7nK0