@AravSrinivas

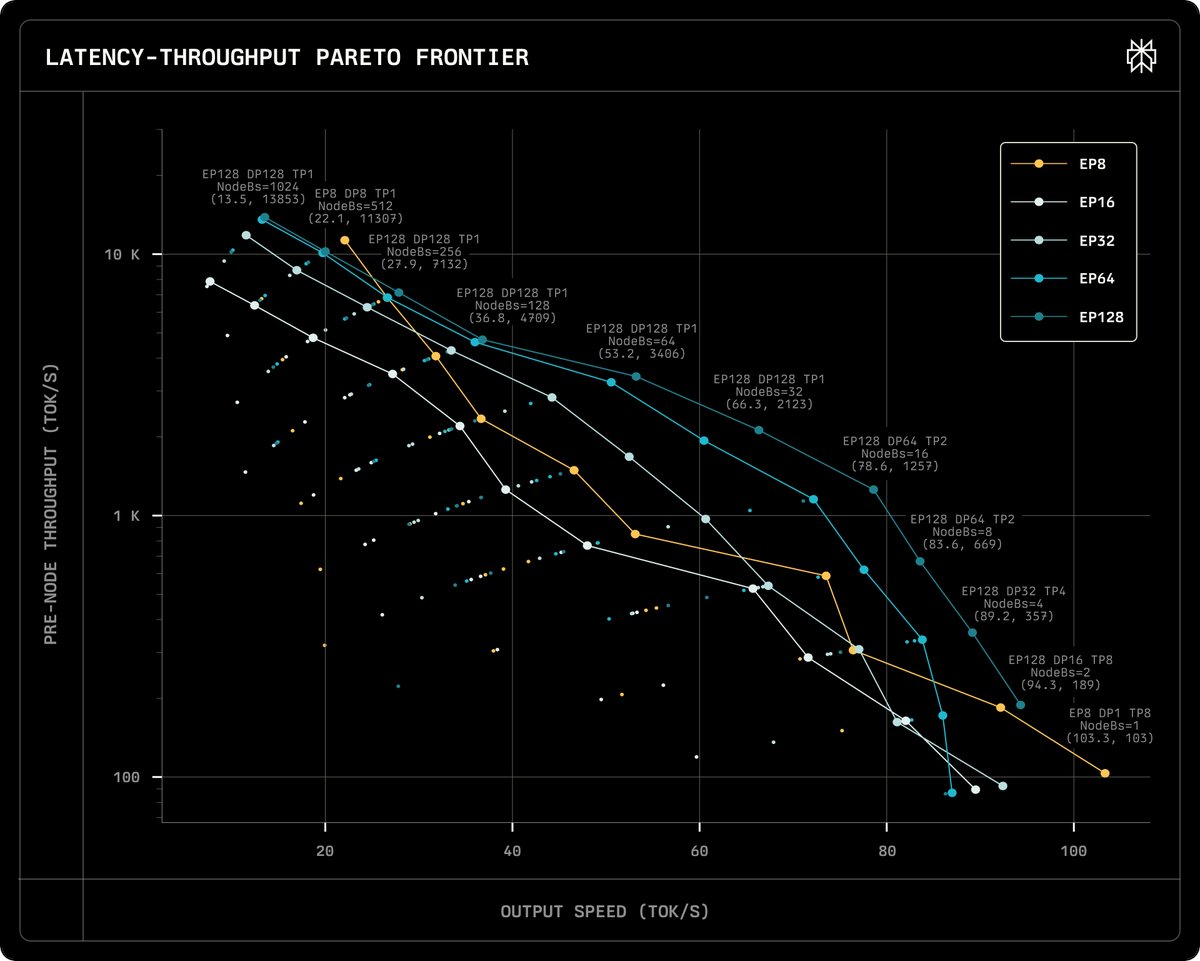

Perplexity serves MoE models such as post-trained versions of DeepSeek-v3. In multi-node deployments, these models can use GPUs efficiently, delivering both higher throughput and lower latency than single-node deployments. https://t.co/pZwOaRb0oZ