@HelloSurgeAI

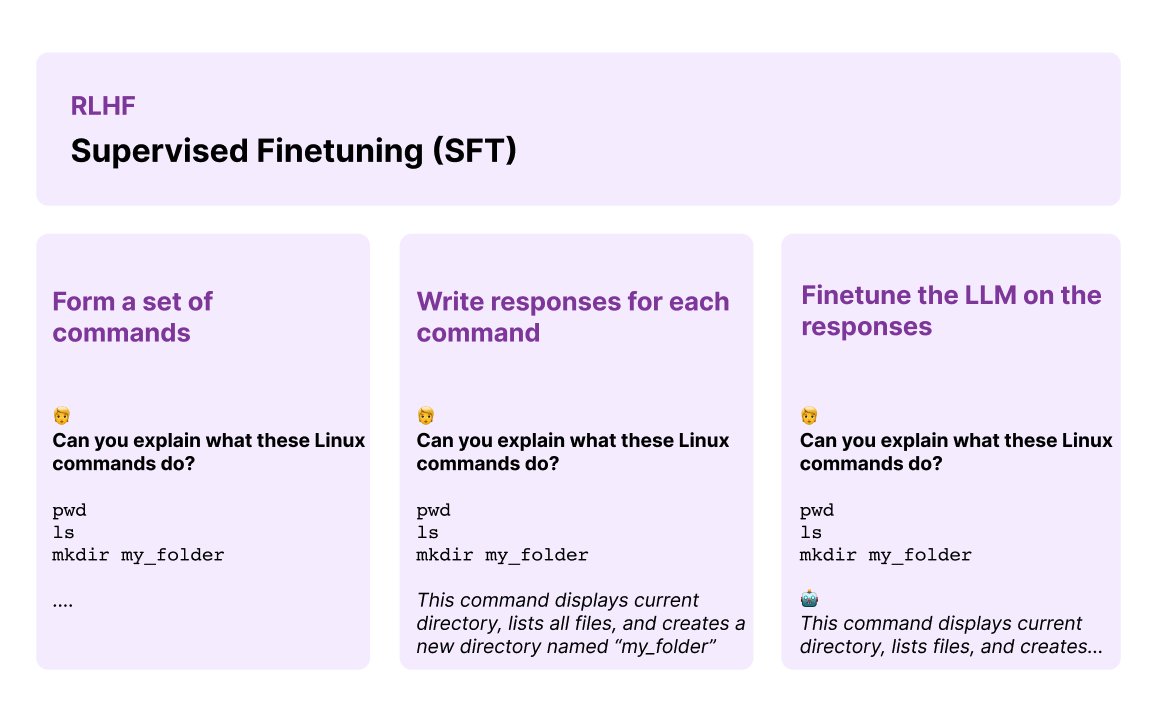

What is SFT data and what role does it play in state-of-the-art LLMs? Supervised finetuning (SFT) in the context of RLHF deals with further tuning an initial language model using demonstration data. At Surge AI, we provide SFT data for top LLM teams to finetune their LLMs. Here is what we have observed: SFT data typically involves collecting demonstration data including prompts and in-depth responses written by human annotators demonstrating how the model should respond to the prompt. Specifically, You take a set of commands and obtain human-written responses for each. The SFT training dataset consists of <prompt, ideal generation> pairs used to finetune the pre-trained LLM to output human-like responses. So let’s say you are aligning an LLM-powered dialogue system then you need to collect dialogue-style instructions/responses data that cater to that use case. Similarly, as shown in the figure, if you want high-quality code generation capabilities you can also provide instruction + written responses as part of the SFT data. This leads to the first important component, also referred to as supervised policy, for training an RLHF LLM. But why go through all this process when training RLHF LLMs? The core idea of SFT is to provide a high-quality initialization for the RLHF process. It’s widely applied by some of the most advanced closed and open-sourced LLMs. To make this work, you need to collect lots of demonstration data but the challenge is collecting high-quality and diverse demonstration data at scale. SFT data can be written by different annotators and can incorporate a lot of noise as response quality and style can vary from annotator to annotator. Controlling for this is key. According to reported insights, you will need to collect thousands of examples to ensure you are tuning a high-quality LLM. SFT data helps to improve target areas that allow steering the LLM better to your needs. We can help with your SFT data needs! If you need help with collecting high-quality preference or SFT data, reach out to our team here: https://t.co/OSm4aHIOP6