@omarsar0

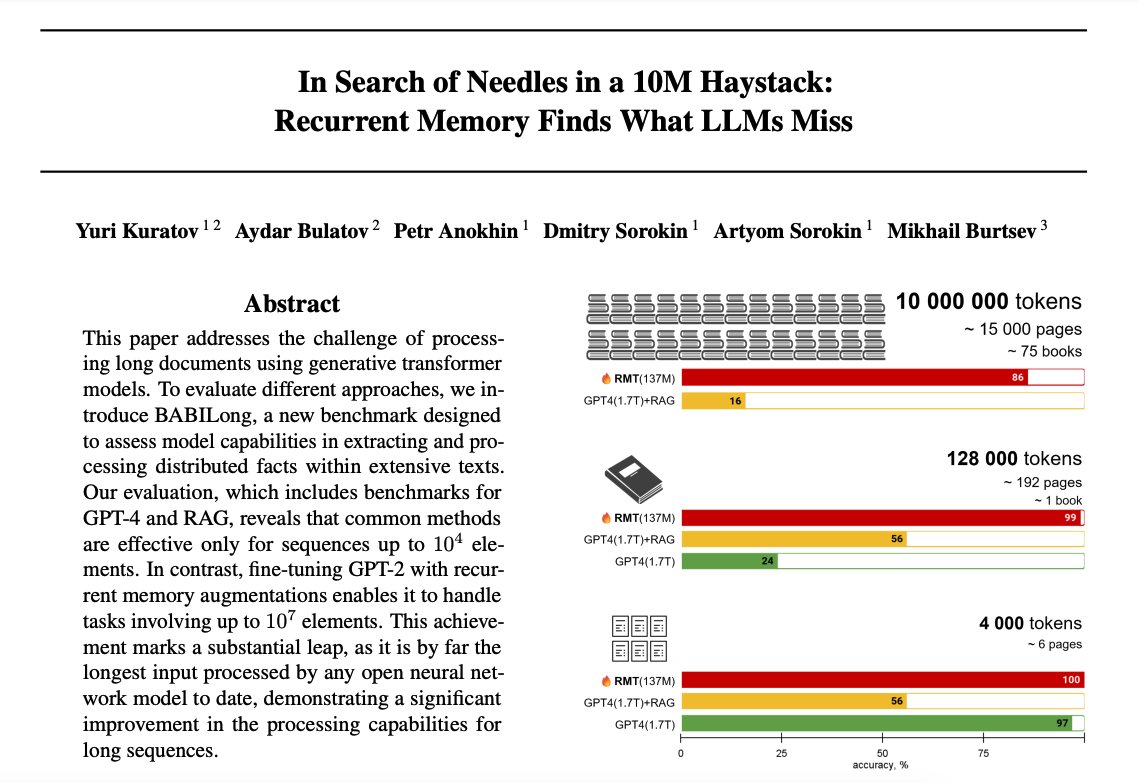

Recurrent Memory Finds What LLMs Miss Explores the capability of transformer-based models in extremely long context processing. Finds that both GPT-4 and RAG performance heavily rely on the first 25% of the input, which means there is room for improved context processing mechanisms. The paper reports that recurrent memory augmentation of transformer models achieves superior performance on documents of up to 10 million tokens. The recurrent memory seems to enable effective multi-hop reasoning which is challenging for current LLMs and RAG systems. It also has the desirable effect of filtering out irrelevant information which is key in long context processing. With all the recent releases of long context models, this is an interesting and timely paper. I like the idea of combining both the recurrent memory and retrieval to these large models to make them more generalizable for complex tasks that require long context processing.