@neural_avb

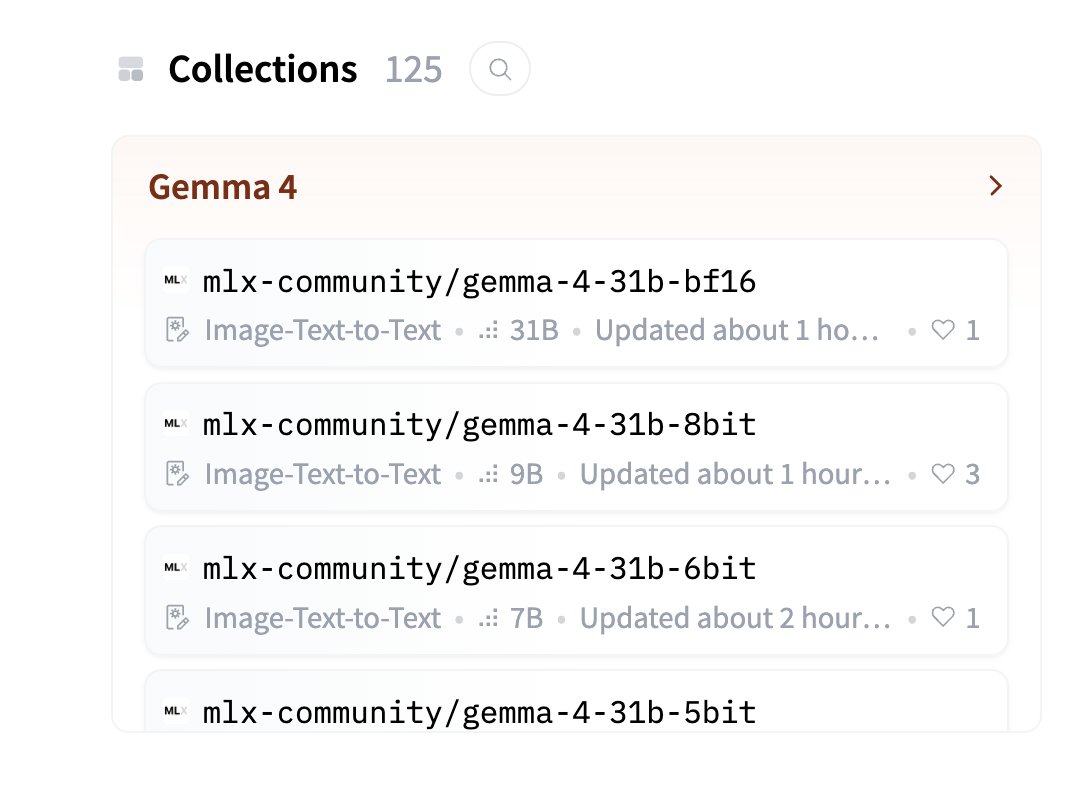

This guy is BEYOND CRACKED. Gemma 4 is already on MLX, and bro has uploaded all the models with quantization. 125 models uploaded in the last few hours 🤯 The new mlx-vlm repo also supports turbo-quant, and rf-detr too (among other things). If you are a Mac dev, you'd better be jumping at this. Bookmark him, turn his notifications on, sponsor his work.