@llama_index

The code behind this case study is now available! Check out the team's in-depth blog post about the project: https://t.co/8tPnIY8VQa Or head straight to the repo: https://t.co/EfUToAARR3 https://t.co/wTpbMhnfUn

{

"data": [

{

"id": "",

"type": "photo",

"url": null,

"media_url": "https://pbs.twimg.com/media/GbAly2HagAAA3UU.jpg",

"media_url_https": null,

"display_url": null,

"expanded_url": null

}

],

"score": 0.984,

"scored_at": "2025-08-09T13:46:07.550469",

"import_source": "network_archive_import",

"media": [

{

"type": "photo",

"url": "https://crmoxkoizveukayfjuyo.supabase.co/storage/v1/object/public/media/posts/1851021061594497501/media_0.jpg?",

"filename": "media_0.jpg",

"original_url": "https://pbs.twimg.com/media/GbAly2HagAAA3UU.jpg"

}

],

"storage_migrated": true

}

{

"user": {

"created_at": "2022-12-18T00:52:44.000Z",

"default_profile_image": false,

"description": "Build LLM agents over your data\n\nGithub: https://t.co/HC19j7vMwc\nDocs: https://t.co/QInqg2zksh\nDiscord: https://t.co/3ktq3zzYII",

"fast_followers_count": 0,

"favourites_count": 1261,

"followers_count": 82611,

"friends_count": 26,

"has_custom_timelines": false,

"is_translator": false,

"listed_count": 1366,

"location": "",

"media_count": 1375,

"name": "LlamaIndex 🦙",

"normal_followers_count": 82611,

"possibly_sensitive": false,

"profile_banner_url": "https://pbs.twimg.com/profile_banners/1604278358296055808/1696908553",

"profile_image_url_https": "https://pbs.twimg.com/profile_images/1623505166996742144/n-PNQGgd_normal.jpg",

"screen_name": "llama_index",

"statuses_count": 2997,

"translator_type": "none",

"url": "https://t.co/epzefqQqZx",

"verified": true,

"withheld_in_countries": [],

"id_str": "1604278358296055808"

},

"id": "1851021061594497501",

"conversation_id": "1851021061594497501",

"full_text": "The code behind this case study is now available! Check out the team's in-depth blog post about the project:\nhttps://t.co/8tPnIY8VQa\n\nOr head straight to the repo: https://t.co/EfUToAARR3 https://t.co/wTpbMhnfUn",

"reply_count": 0,

"retweet_count": 20,

"favorite_count": 77,

"hashtags": [],

"symbols": [],

"user_mentions": [],

"urls": [

{

"url": "https://t.co/8tPnIY8VQa",

"expanded_url": "https://developer.nvidia.com/blog/creating-rag-based-question-and-answer-llm-workflows-at-nvidia/",

"display_url": "developer.nvidia.com/blog/creating-…"

},

{

"url": "https://t.co/EfUToAARR3",

"expanded_url": "https://github.com/NVIDIA/GenerativeAIExamples/tree/main/community/routing-multisource-rag",

"display_url": "github.com/NVIDIA/Generat…"

}

],

"media": [

{

"media_url": "https://pbs.twimg.com/media/GbAly2HagAAA3UU.jpg",

"type": "photo"

}

],

"url": "https://twitter.com/llama_index/status/1851021061594497501",

"created_at": "2024-10-28T21:59:46.000Z",

"#sort_index": "1851021061594497501",

"view_count": 11331,

"quote_count": 0,

"is_quote_tweet": true,

"is_retweet": false,

"is_pinned": false,

"is_truncated": false,

"quoted_tweet": {

"user": {

"created_at": "2022-12-18T00:52:44.000Z",

"default_profile_image": false,

"description": "Build LLM agents over your data\n\nGithub: https://t.co/HC19j7vMwc\nDocs: https://t.co/QInqg2zksh\nDiscord: https://t.co/3ktq3zzYII",

"fast_followers_count": 0,

"favourites_count": 1261,

"followers_count": 82611,

"friends_count": 26,

"has_custom_timelines": false,

"is_translator": false,

"listed_count": 1366,

"location": "",

"media_count": 1375,

"name": "LlamaIndex 🦙",

"normal_followers_count": 82611,

"possibly_sensitive": false,

"profile_banner_url": "https://pbs.twimg.com/profile_banners/1604278358296055808/1696908553",

"profile_image_url_https": "https://pbs.twimg.com/profile_images/1623505166996742144/n-PNQGgd_normal.jpg",

"screen_name": "llama_index",

"statuses_count": 2997,

"translator_type": "none",

"url": "https://t.co/epzefqQqZx",

"verified": true,

"withheld_in_countries": [],

"id_str": "1604278358296055808"

},

"id": "1849847301680005583",

"conversation_id": "1849847301680005583",

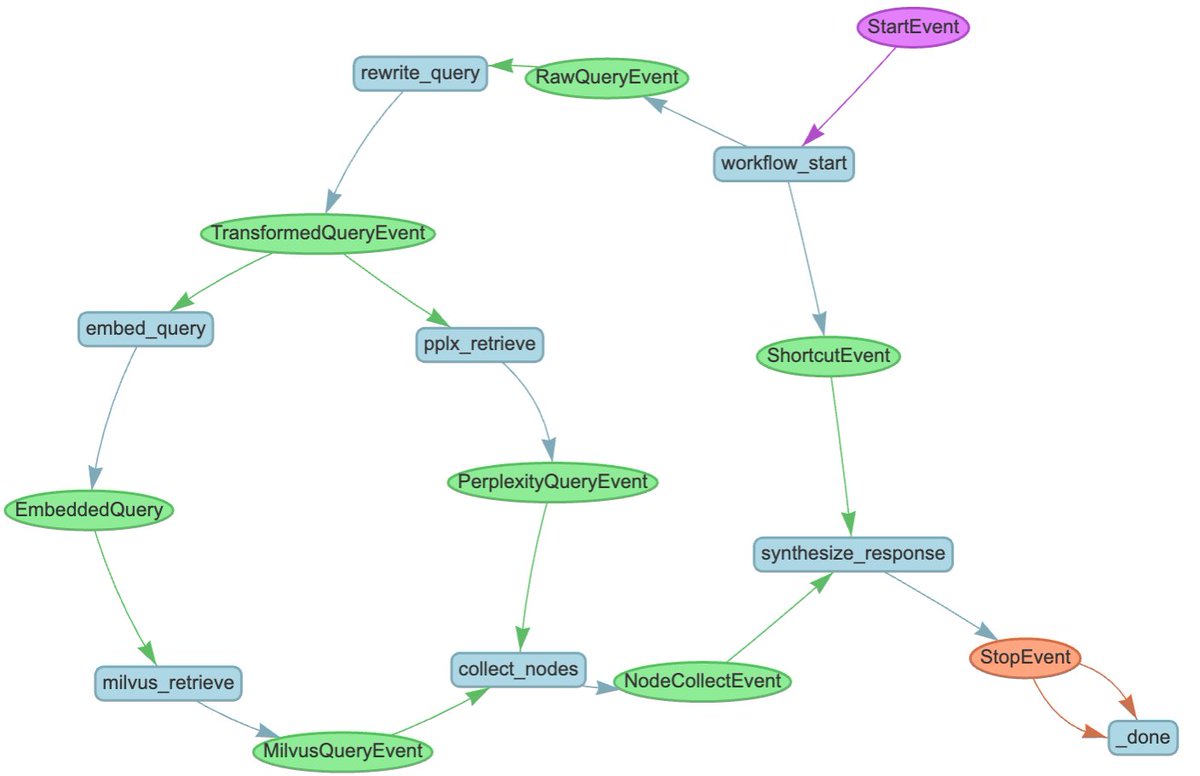

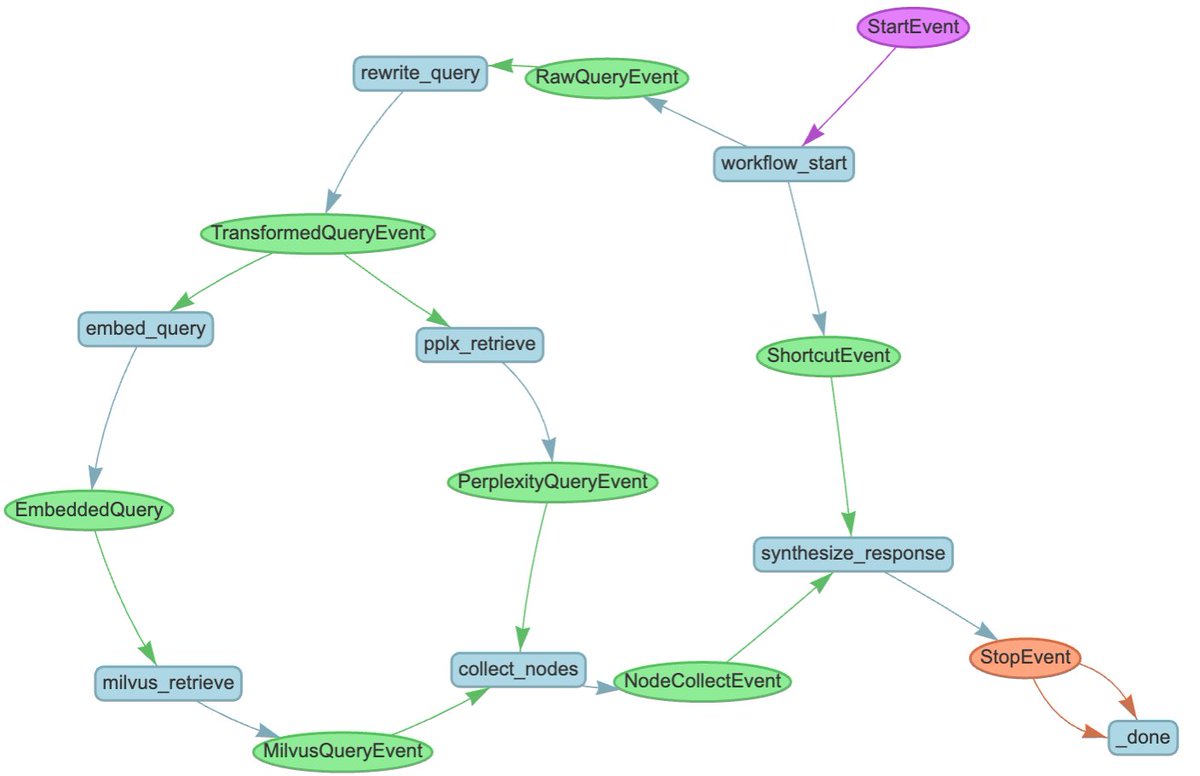

"full_text": "We are thrilled to announce a case study of a successful internal deployment of LlamaIndex at @nvidia, an internal AI assistant for sales 🧑💼🤖\n\n* Uses Llama 3.1 405b for simple queries, 70b model for document searches\n* Retrieves from multiple sources: internal docs, NVIDIA site, and web\n* LlamaIndex Workflows handles routing and core functionality\n* @chainlit_io provides the chat interface for sales reps\n* Parallel retrieval system searches multiple sources simultaneously\n* Built-in context augmentation helps handle company acronyms/terms\n* Real-time inference achieved through NVIDIA NIM optimization\n\nSales automation is a top use case for agents. Check out our case study here: https://t.co/AApFNVjp0v",

"reply_count": 5,

"retweet_count": 74,

"favorite_count": 275,

"hashtags": [],

"symbols": [],

"user_mentions": [

{

"id_str": "61559439",

"name": "NVIDIA",

"screen_name": "nvidia",

"profile": "https://twitter.com/nvidia"

}

],

"urls": [],

"media": [

{

"media_url": "https://pbs.twimg.com/media/Gav6TKdaQAADt6P.jpg",

"type": "photo"

}

],

"url": "https://twitter.com/llama_index/status/1849847301680005583",

"created_at": "2024-10-25T16:15:39.000Z",

"#sort_index": "1851021061594497500",

"view_count": 51185,

"quote_count": 4,

"is_quote_tweet": false,

"is_retweet": false,

"is_pinned": false,

"is_truncated": true

},

"startUrl": "https://x.com/llama_index/status/1851021061594497501"

}
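
The quoted post describes routing between a direct answer path and a retrieval path that searches several sources simultaneously. The snippet below is a minimal sketch of that pattern using only Python's standard library. It is not the code from the linked repo and does not use LlamaIndex Workflows; the source names, the keyword-based routing rule, and the functions `search_source`, `needs_retrieval`, and `answer` are all hypothetical stand-ins chosen for illustration.

```python
import asyncio

# Hypothetical source labels, loosely named after the three sources in the quoted post.
SOURCES = ("internal_docs", "nvidia_site", "web")


async def search_source(source: str, query: str) -> list[str]:
    """Stand-in retriever; a real one would call an index or a search API."""
    await asyncio.sleep(0)  # yield control, as a network call would
    return [f"{source}: result for {query!r}"]


def needs_retrieval(query: str) -> bool:
    """Toy routing rule (an assumption): keyword match decides the route."""
    keywords = ("doc", "datasheet", "spec", "product")
    return any(k in query.lower() for k in keywords)


async def answer(query: str) -> dict:
    """Route the query, then fan out to all sources concurrently if needed."""
    if not needs_retrieval(query):
        # Simple queries skip retrieval entirely.
        return {"route": "direct", "context": []}
    # asyncio.gather runs the searches concurrently and keeps input order.
    results = await asyncio.gather(*(search_source(s, query) for s in SOURCES))
    context = [hit for hits in results for hit in hits]
    return {"route": "retrieval", "context": context}


if __name__ == "__main__":
    print(asyncio.run(answer("Find the datasheet for this product")))
```

In a real deployment the routing decision would more likely come from a model than from a keyword list, and each retriever would carry its own timeout and error handling so that one slow source does not stall the others.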