@omarsar0

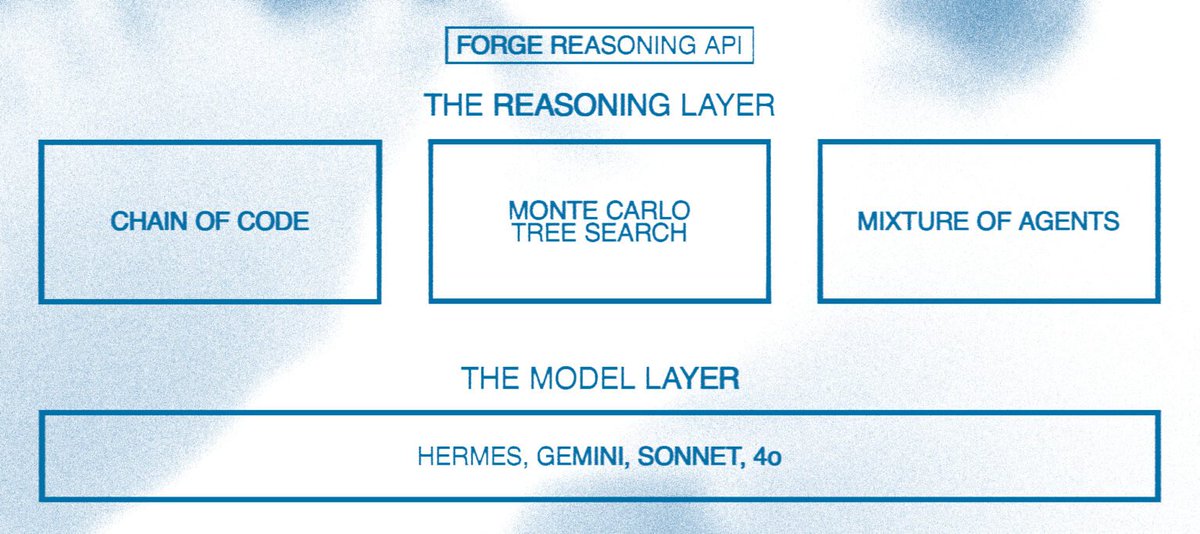

Reasoning LLMs are one of the most interesting trends to watch going into 2025.

I’ve been thinking a lot about how to build with reasoning LLMs, specifically in agentic workflows. How can AI devs take advantage of components like MoA (Mixture of Agents) and MCTS (Monte Carlo Tree Search) when there is barely any research on them, not to mention the lack of insights and best practices?

First, how do we enable devs to build with reasoning capabilities? I like how Nous Research is approaching this with their Forge Reasoning APIs and “Reasoning Layer” components (MoA, MCTS, and Chain of Code).

I think it’s way too early for such a reasoning layer, but things seem to be moving quickly in that direction; the o1 model series together with the Forge Reasoning API is a good indication of what’s to come.

Some thoughts on the Forge Reasoning API vs o1 for language agents:

I’ve been experimenting extensively with the o1 models and they are hard to customize. Yet many multi-agent systems need agents to take on a persona, which helps produce richer and more reliable outputs and facilitates better communication between agents. To achieve this, I often need to prompt the agents to behave a certain way and take on different roles depending on where they are in the conversation or process.

Having the ability to use the right reasoning component, or a combination of them, with configurable parameters (similar to the LLM itself) will be useful for building more complex and effective agentic systems. Customization is key here.

Extended thoughts here: https://t.co/XglPppukqc