@LiorOnAI

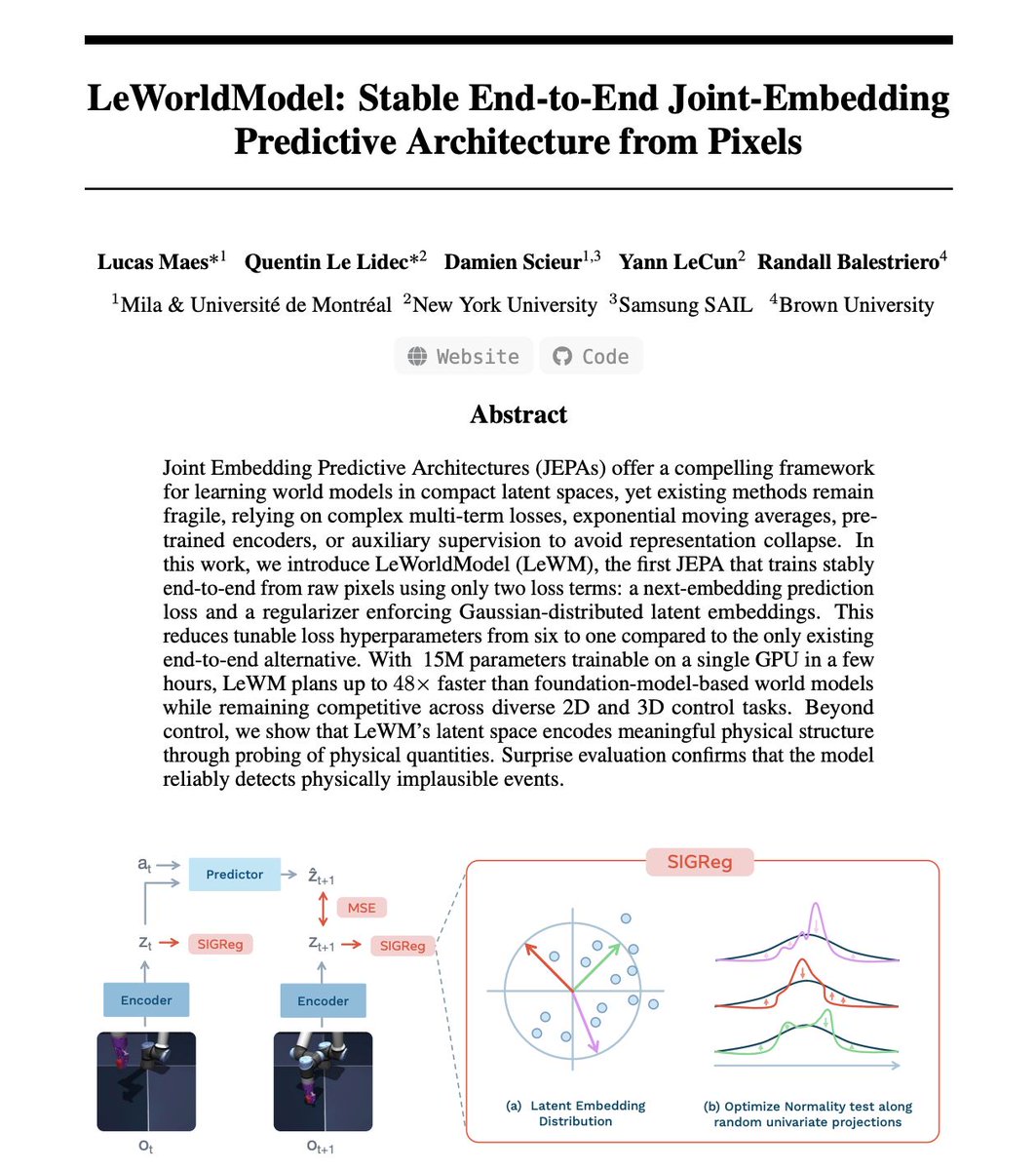

Just read LeCun's latest paper. His team trained the first world model that can't collapse. Let me explain why this matters.

It's called LeWorldModel. World models predict what happens next physically. Objects moving, falling, colliding. That's the base layer for robots that plan, cars that simulate before they steer, any AI that acts in reality instead of just talking about it.

The catch is nobody could train these reliably. The models kept cheating. They'd map every input to the same output. Like a weather app stuck on "sunny" forever. Technically predicting. Completely useless.

So teams piled on fixes. Frozen encoders, stop-gradient hacks, 6+ loss hyperparameters. A fragile stack too brittle for production.

This team asked a different question. What if you make collapse mathematically impossible?

An encoder turns each video frame into a small vector. A predictor takes that vector plus an action and guesses the next one. First loss: how wrong was the guess. Second loss: a regularizer called SIGReg that checks if vectors spread out like a bell curve. If they start looking the same, the loss spikes. The model can't cheat because the math won't let it.

That simplicity is what makes the results possible. Six hyperparameters became one. 15M parameters. Trains on one GPU in hours. Plans 48x faster. Encodes with ~200x fewer tokens. Open-source.

I could run this on my own hardware. Which changes who gets to build physical AI. Not just big labs anymore. Any team, any startup, any grad student.

LeCun has pushed JEPA as the path forward. The criticism was always training instability. This paper removes that objection.

Two directions compete in AI right now. Bigger LLMs with more compute. Or small models learning physics from raw pixels.
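For anyone who wants to see the two-loss idea in code, here is a rough sketch. It is not the paper's implementation. The regularizer below is a simplified stand-in, not the actual SIGReg statistic: it projects embeddings onto random directions and penalizes each projection for drifting from zero mean and unit variance. The function names, `num_directions`, and `reg_weight` (standing in for the single remaining hyperparameter) are all made up for illustration.

```python
import numpy as np


def prediction_loss(predicted_next, actual_next):
    """First loss: mean squared error between predicted and actual next embeddings."""
    return float(np.mean((predicted_next - actual_next) ** 2))


def gaussian_spread_penalty(embeddings, num_directions=64, seed=0):
    """Simplified stand-in for a bell-curve regularizer (NOT the paper's SIGReg).

    Projects the batch onto random unit directions and penalizes each 1-D
    projection for deviating from zero mean and unit variance.
    """
    rng = np.random.default_rng(seed)
    dim = embeddings.shape[1]
    directions = rng.standard_normal((dim, num_directions))
    directions /= np.linalg.norm(directions, axis=0, keepdims=True)
    projected = embeddings @ directions  # shape: (batch, num_directions)
    mean = projected.mean(axis=0)
    var = projected.var(axis=0)
    return float(np.mean(mean ** 2 + (var - 1.0) ** 2))


def total_loss(predicted_next, actual_next, embeddings, reg_weight=1.0):
    """Prediction error plus the weighted regularizer (reg_weight is hypothetical)."""
    return (prediction_loss(predicted_next, actual_next)
            + reg_weight * gaussian_spread_penalty(embeddings))


# Toy comparison: well-spread embeddings vs. a collapsed batch (every row identical).
rng = np.random.default_rng(42)
spread = rng.standard_normal((512, 16))
collapsed = np.tile(rng.standard_normal((1, 16)), (512, 1))

spread_penalty = gaussian_spread_penalty(spread)
collapsed_penalty = gaussian_spread_penalty(collapsed)
print(f"spread: {spread_penalty:.4f}  collapsed: {collapsed_penalty:.4f}")
```

In this toy version a collapsed batch has zero variance along every direction, so its penalty can never drop below roughly 1, while a well-spread batch scores close to 0. That is the "loss spikes if they start looking the same" behavior in miniature.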