@Xianbao_QIAN

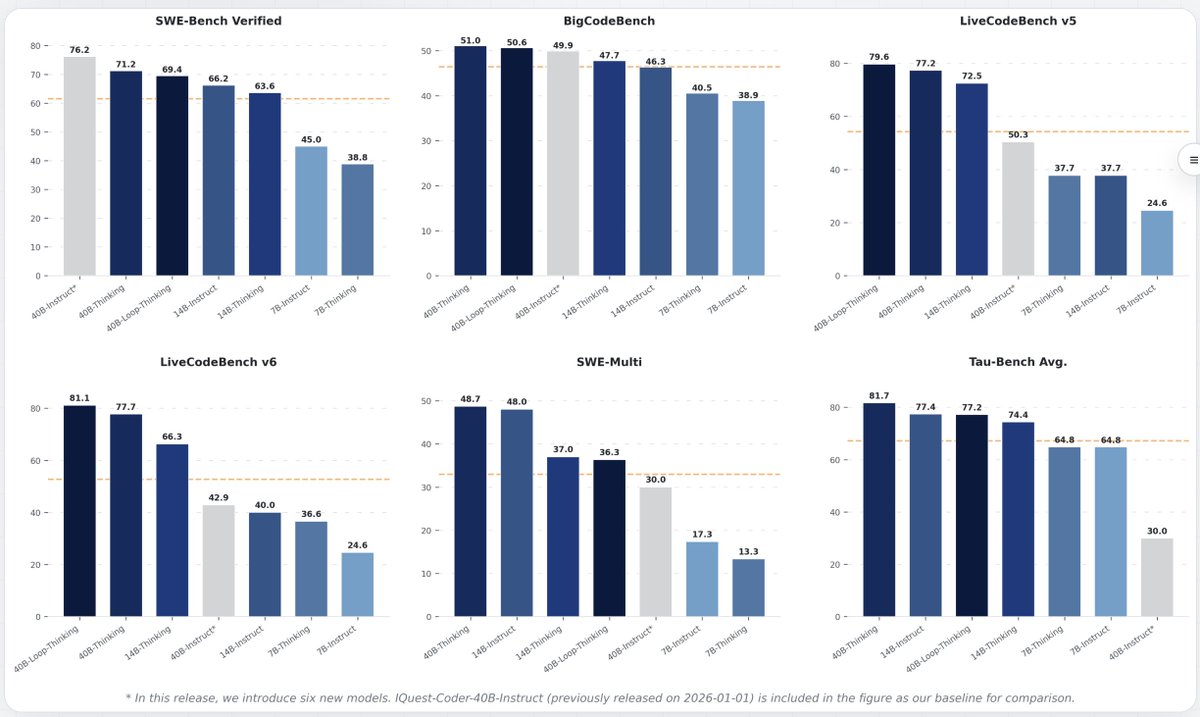

New model updates from iquestlab. If you're looking for an inference model that you can run offline, this is probably the one.

- 7B and 14B coding models
- Optimized for tool use, CLI agents and HTML generation
- 128k context length
- Explicit and detailed prompting works best
- MIT license, with a requirement to display the logo
- Available on @huggingface