@seb_ruder

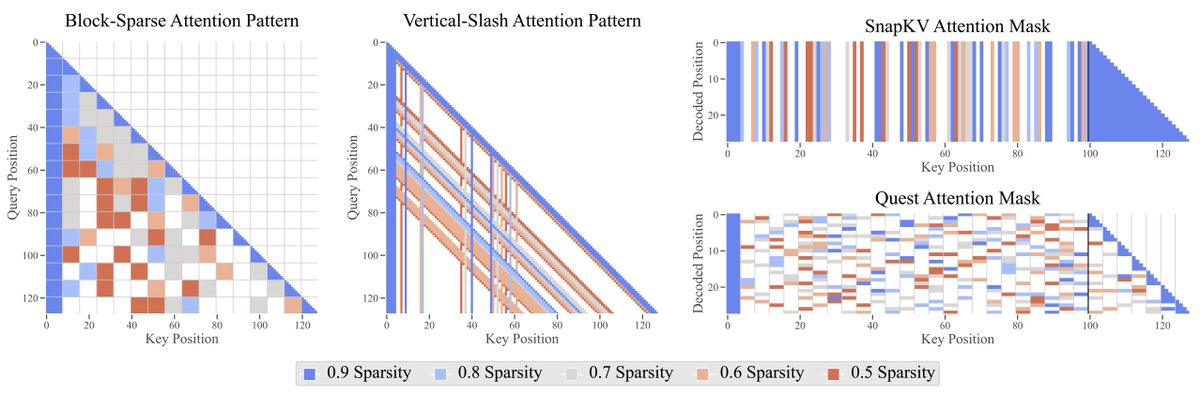

The Sparse Frontier

Efficient sparse attention methods are key to scaling LLMs to long contexts. We conduct the largest-scale empirical analysis that answers:
1. 🤏🔍 Are small dense models or large sparse models better?
2. ♾️ What is the maximum permissible sparsity per task?
3.… https://t.co/prWfrljmzQ