@arankomatsuzaki

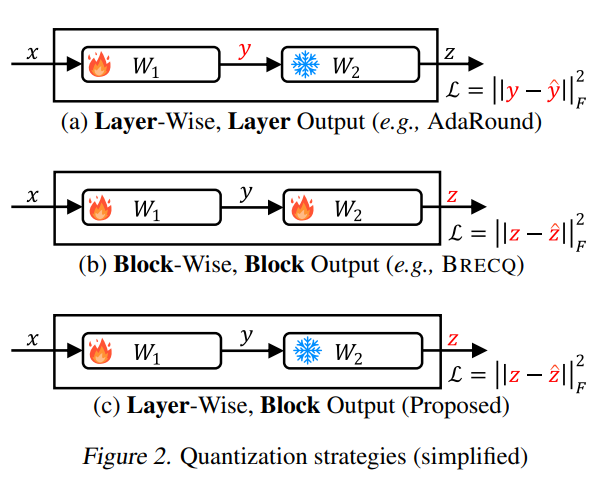

Samsung presents aespa

Towards Next-Level Post-Training Quantization of Hyper-Scale Transformers

Outperforms conventional quantization schemes by a significant margin, particularly for low-bit precision (INT2)

https://t.co/StOzcGQz2k https://t.co/qXudeN8dLL