@vllm_project

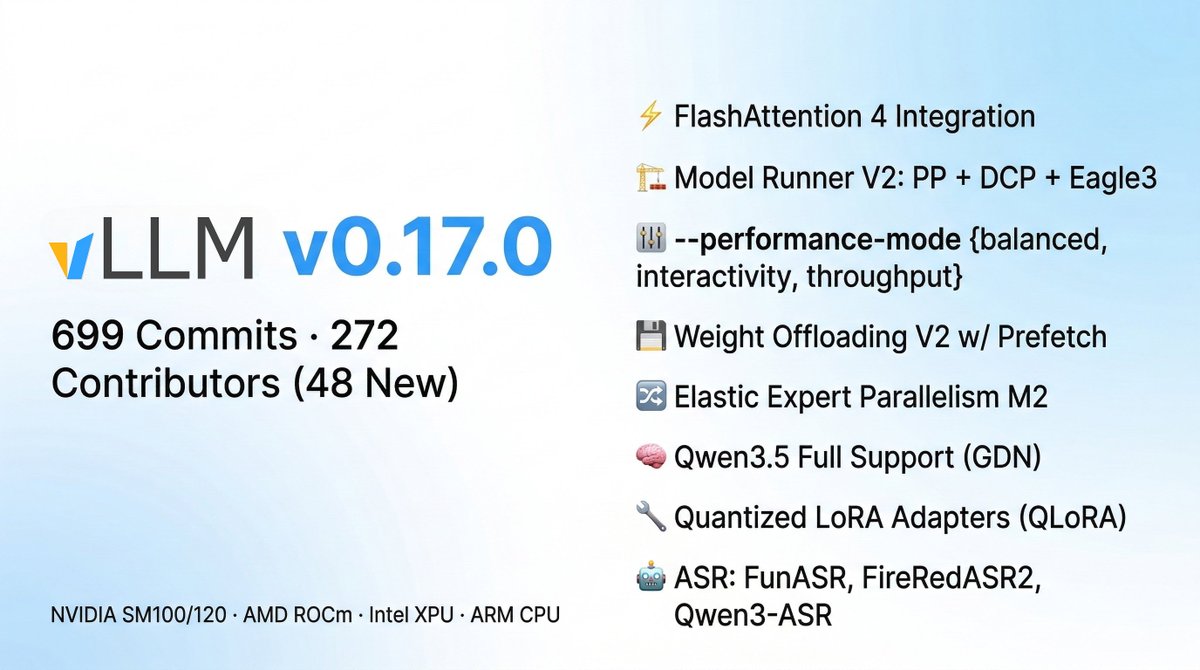

🚀 vLLM v0.17.0 is here! 699 commits from 272 contributors (48 new!)

This is a big one. Highlights:

⚡ FlashAttention 4 integration
🧠 Qwen3.5 model family with GDN (Gated Delta Networks)
🏗️ Model Runner V2 maturation: Pipeline Parallel, Decode Context Parallel, Eagle3 + CUDA graphs
🎛️ New --performance-mode flag: balanced / interactivity / throughput
💾 Weight Offloading V2 with prefetching
🔀 Elastic Expert Parallelism Milestone 2
🔧 Quantized LoRA adapters (QLoRA) now loadable directly