@sudoingX

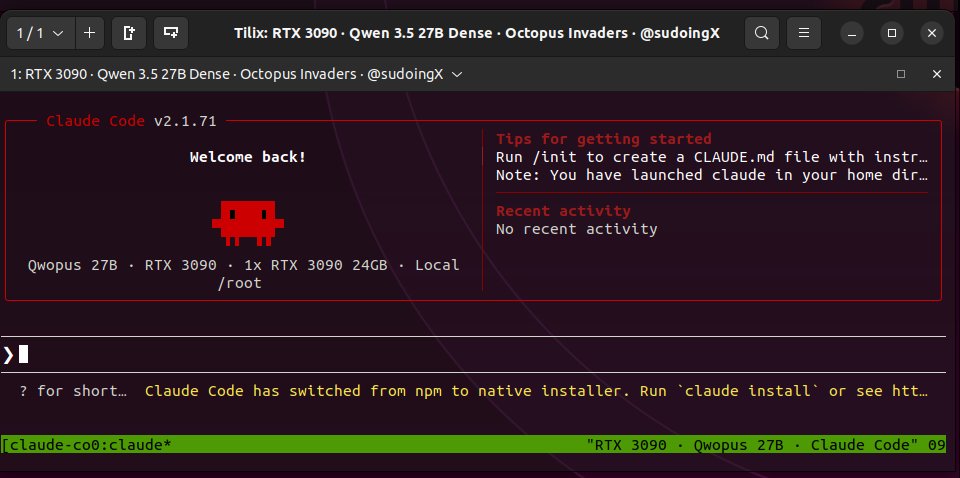

spent the entire day testing Qwopus (Claude 4.6 Opus distilled into Qwen 3.5 27B) on a single RTX 3090 through Claude Code. this is my new favourite to host locally.

no jinja crashes. thinking mode works natively. 29-35 tok/s. 16.5 GB.

the harness matches the distillation source and you can feel it. the model doesn't fight the agent.

my flags:

llama-server -m Qwopus-27B-Q4_K_M.gguf -ngl 99 -c 262144 -np 1 -fa on --cache-type-k q4_0 --cache-type-v q4_0

if you want raw speed, base Qwen 3.5 MoE still wins at 112 tok/s. but for autonomous coding where the model needs to think, wait for tool outputs, and self-correct without stalling, Qwopus on Claude Code is the cleanest setup i've found on this card.

i want to see what everyone else is running. drop your GPU, model, harness, flags, and tok/s below. doesn't matter if it's a 3060 or a 4090, nvidia or amd. configs help everyone.

let's push these cards to their ceilings. let's make this thread the reference.
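if you want your tok/s to be comparable with other replies, here is a rough sketch of one way to measure it. it is not how the numbers above were taken. assumptions: llama-server is listening on its default `http://127.0.0.1:8080`, the OpenAI-compatible `/v1/chat/completions` endpoint is enabled, and the response carries `usage.completion_tokens`. `tokens_per_second` and `measure` are hypothetical helper names, and the prompt is arbitrary.

```python
import json
import time
import urllib.request


def tokens_per_second(completion_tokens: int, elapsed_s: float) -> float:
    """Generated tokens divided by wall-clock seconds."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return completion_tokens / elapsed_s


def measure(base_url: str = "http://127.0.0.1:8080", max_tokens: int = 256) -> float:
    """Send one chat request and return an end-to-end tok/s estimate.

    Assumes an OpenAI-compatible server at base_url (an assumption, adjust
    to your setup). Wall-clock time includes prompt processing, so this
    slightly understates pure generation speed.
    """
    payload = json.dumps({
        "messages": [
            {"role": "user",
             "content": "Write a short Python function that reverses a string."}
        ],
        "max_tokens": max_tokens,
    }).encode("utf-8")
    req = urllib.request.Request(
        base_url + "/v1/chat/completions",
        data=payload,
        headers={"Content-Type": "application/json"},
    )
    start = time.perf_counter()
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    elapsed = time.perf_counter() - start
    # Token count as reported by the server in the response's usage block.
    return tokens_per_second(body["usage"]["completion_tokens"], elapsed)
```

once the server is up, `print(f"{measure():.1f} tok/s")` gives a single-request number. run it a few times and report the range, since the first request also pays warm-up cost.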