@omarsar0

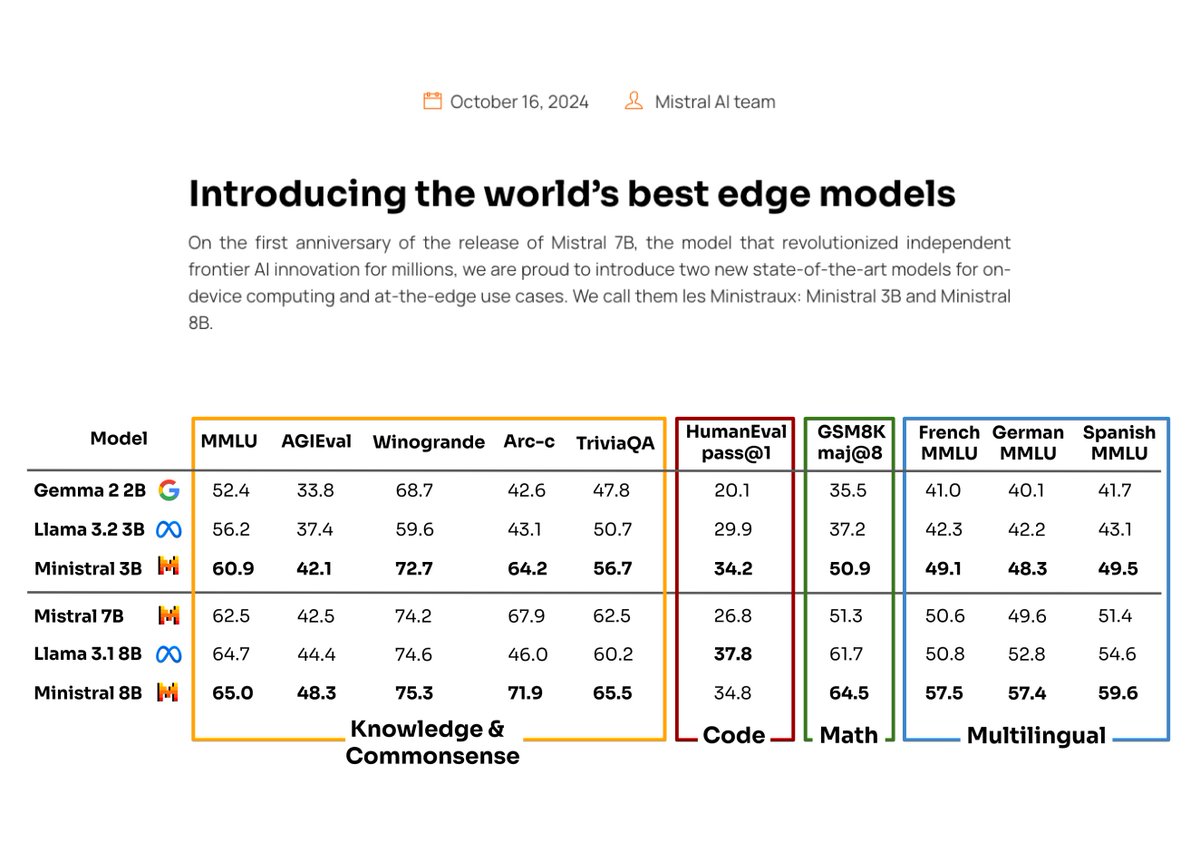

Mistral AI is doubling down on small language models. Their latest Ministral models (both the 3B and the 8B) are pretty impressive and should be incredibly useful for many LLM workflows.

Some observations:

- I enjoy seeing how committed Mistral AI is to developing smaller, more capable models. They seem to understand what developers want and need today.
- There is fierce competition to build the best, smallest, and cheapest models. This is good for the AI developer community, and it sets the community up well for the coming wave of innovation in on-device AI and agentic workflows. 2025 is going to be a wild year.
- Mistral doesn't reveal the secret sauce behind these capable smaller models (probably some distillation), but Ministral 3B already performs competitively with Mistral 7B.
- I think this is a great focus for Mistral as it seeks to differentiate itself from other LLM providers.
- Given this announcement, I am now very curious about what the next Gemma and Llama small models will bring.

Mini models are taking over! I use small models for processing data, structuring information, function calling, routing, evaluation pipelines, prompt chaining, agentic workflows, and a whole lot more.