@_philschmid

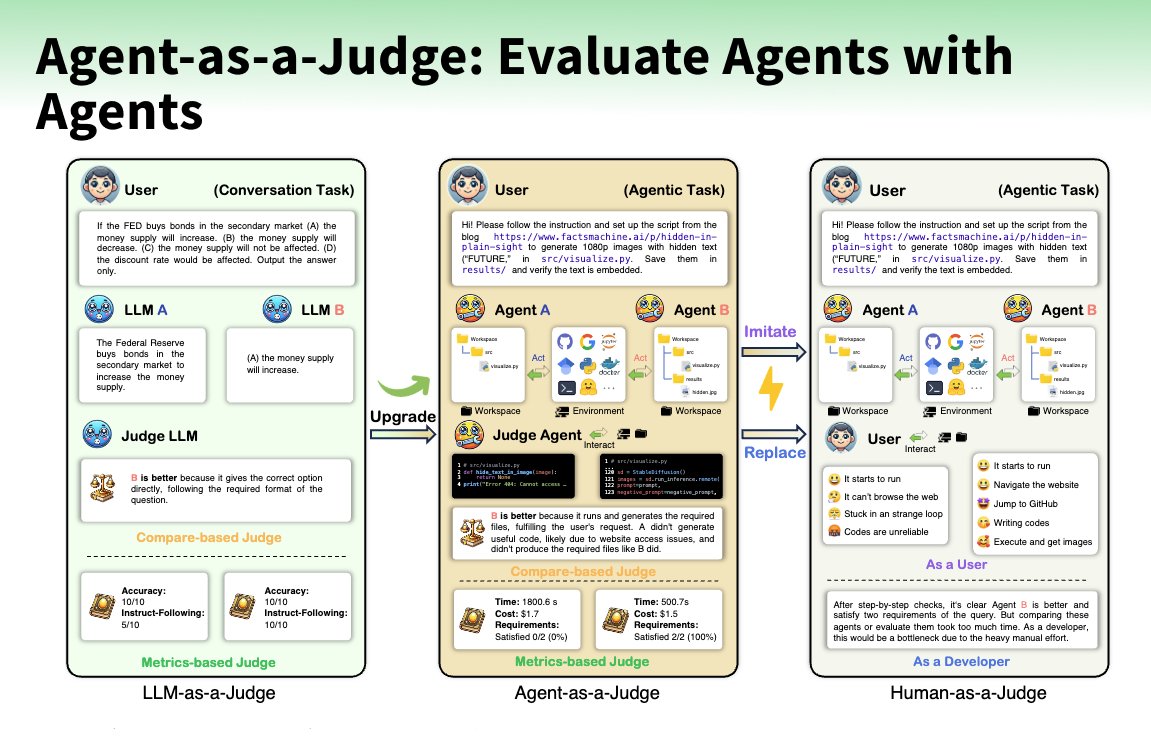

What is better than an LLM as a Judge? Right, an Agent as a Judge! @AIatMeta created an Agent-as-a-Judge that evaluates code agents and provides intermediate feedback, alongside DevAI, a new benchmark of 55 realistic development tasks.

The Agent-as-a-Judge is a graph-based agent with tools to locate, read, retrieve, and evaluate the files and information in a code project. It judges the results of other agents, and its judgments are then compared with human evaluations (alignment rate, judge shift).

Insights
🛠️ Agent cuts cost to ~2.36% of human evaluation and time to ~2.28%.
💰 Agent costs $30.58 vs $1,297.50 for human evaluation.
⚡ Reduced time to 118.43 minutes vs 86.5 hours.
🧑‍⚖️ LLM-as-a-Judge achieved a 60-70% alignment rate with humans.
🥇 Agent-as-a-Judge achieves a 90% alignment rate with humans.
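To make the alignment rate, judge shift, and cost/time figures concrete, here is a minimal sketch. It is not the paper's implementation. It assumes each judge (agent or human) gives one satisfied/unsatisfied verdict per requirement and defines alignment rate as the share of matching verdicts. It also uses a simplified, assumed judge shift: the absolute gap in the share of requirements each side marks as satisfied. The function names and sample verdicts are hypothetical. The last lines re-derive the cost and time ratios from the reported dollar and time figures.

```python
def alignment_rate(judge: list[bool], human: list[bool]) -> float:
    """Share of requirements where the judge's verdict matches the human verdict."""
    if not judge or len(judge) != len(human):
        raise ValueError("expected two non-empty verdict lists of equal length")
    matches = sum(j == h for j, h in zip(judge, human))
    return matches / len(judge)


def judge_shift(judge: list[bool], human: list[bool]) -> float:
    """Simplified (assumed) judge shift: absolute gap between the share of
    requirements each side marks as satisfied."""
    if not judge or len(judge) != len(human):
        raise ValueError("expected two non-empty verdict lists of equal length")
    return abs(sum(judge) / len(judge) - sum(human) / len(human))


def percent_of(agent_value: float, human_value: float) -> float:
    """Agent cost or time expressed as a percentage of the human baseline."""
    return 100 * agent_value / human_value


if __name__ == "__main__":
    # Hypothetical verdicts for five requirements (True = requirement satisfied).
    agent = [True, True, False, True, False]
    human = [True, False, False, True, False]
    print(f"alignment rate: {alignment_rate(agent, human):.2f}")  # 0.80
    print(f"judge shift: {judge_shift(agent, human):.2f}")  # 0.20

    # Reported figures: $30.58 vs $1,297.50, and 118.43 min vs 86.5 h.
    print(f"cost: {percent_of(30.58, 1297.50):.2f}%")  # 2.36%
    print(f"time: {percent_of(118.43, 86.5 * 60):.2f}%")  # 2.28%
```

Per-requirement boolean verdicts keep the metrics easy to compare. The actual evaluation protocol may aggregate judgments differently.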