@jerryjliu0

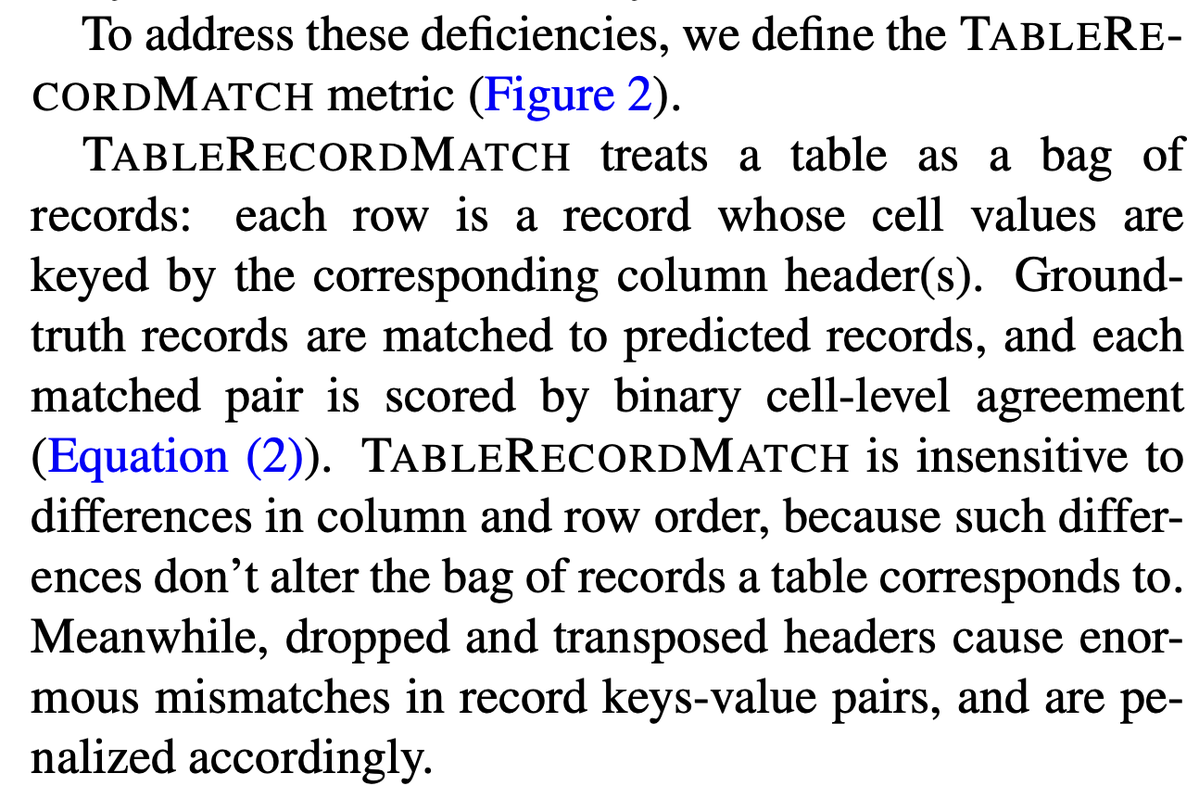

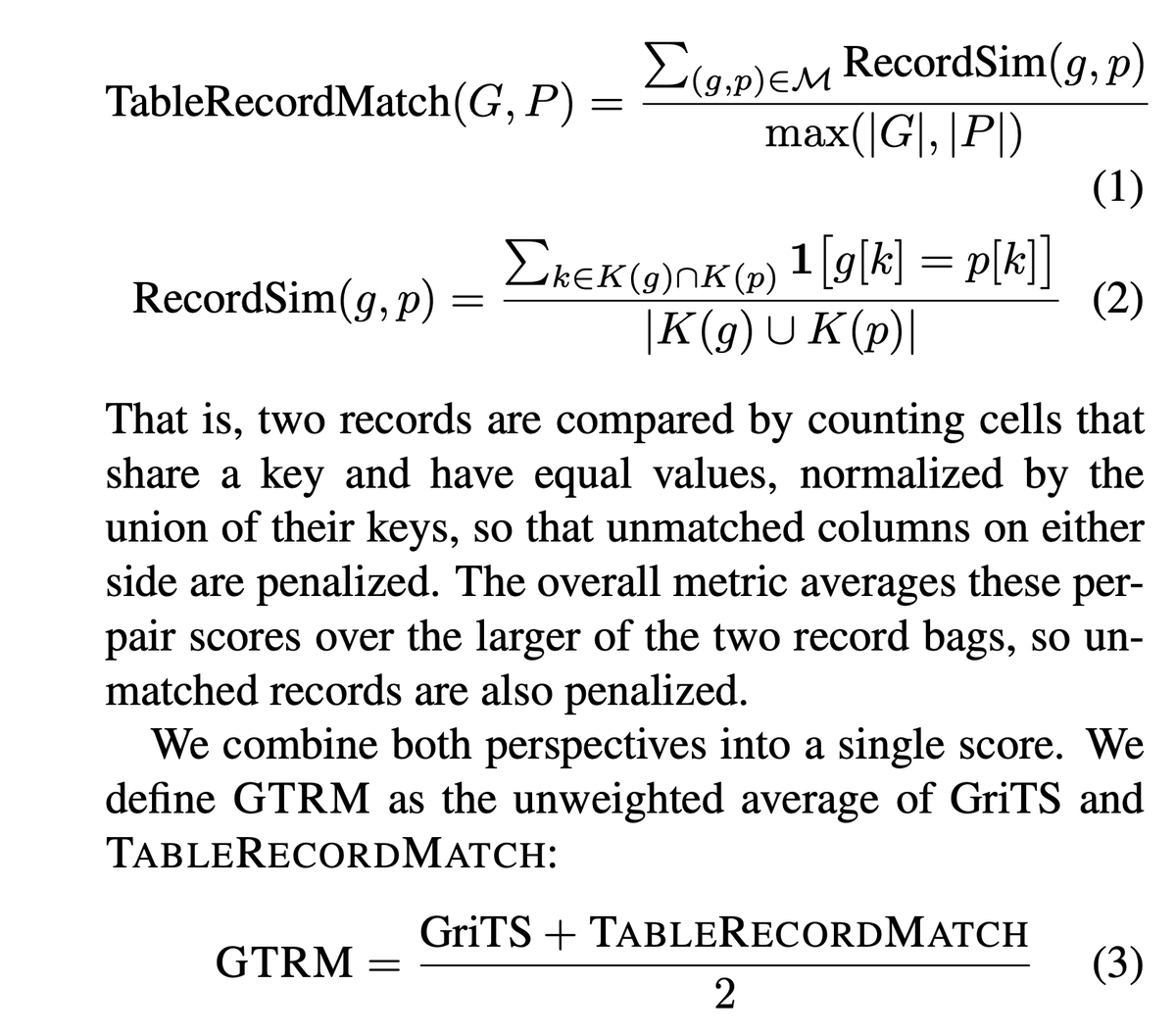

Parsing complex tables in PDFs is extremely challenging. Existing metrics for measuring table accuracy, like TEDS (tree edit distance similarity), overweight exact table structure and underweight semantic correctness. 🚫 Overweight: If the rows within a table are out of order - even if the semantic meaning is still consistent - then TEDS heavily penalizes these values, even though the downstream AI agent would have no problem interpreting the values. 🚫 Overweight: If the HTML is semantic equivalent but output with different tags (th vs. td), TEDS will penalize 🚫 Underweight: If the header is dropped or transposed, then TEDS mildly penalizes these values, even though the entire semantic meaning of the table is destroyed. We recently released ParseBench, a comprehensive enterprise document benchmark with a heavy focus on *semantic correctness* for tables. We define a new metric: TableRecordMatch - which treats tables as a bag of records, where each record is a dictionary of key-value pairs, with keys being the headers and values being the cell values. We combine it with the GriTS metric (more robust than TEDS) to come up with the final GTRM score. It’s worth giving our full paper a read if you haven’t already. Also come check out our website hub! Website: https://t.co/k564afEGJo Blog: https://t.co/57OHkx0pQW Paper: https://t.co/Ho2oH2xEAM