@PyTorch

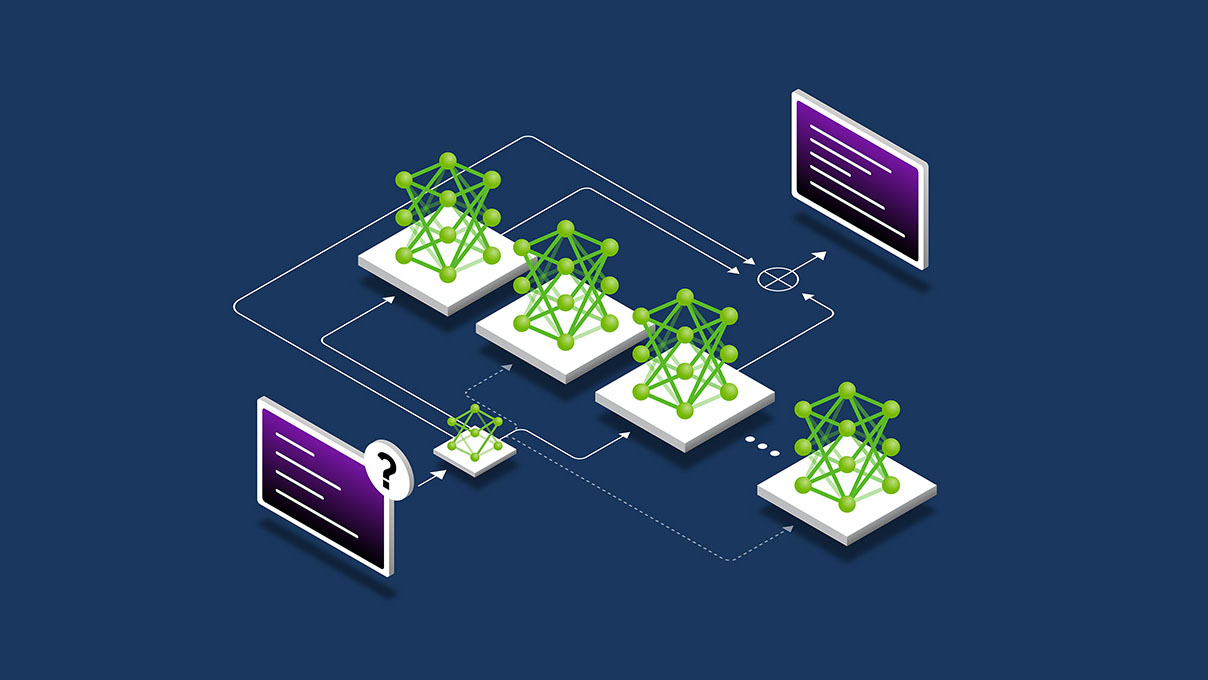

Need to accelerate large-scale Mixture-of-Experts (MoE) training? With @nvidia NeMo Automodel, an open-source library within the NVIDIA NeMo framework, developers can now train large-scale MoE models directly in PyTorch using the familiar tools they already know. Learn how to make MoE training of massive models simple and efficient in NVIDIA's developer blog: 📎 https://t.co/xGnx95jY8y #PyTorch #MoE #OpenSourceAI