@danielhanchen

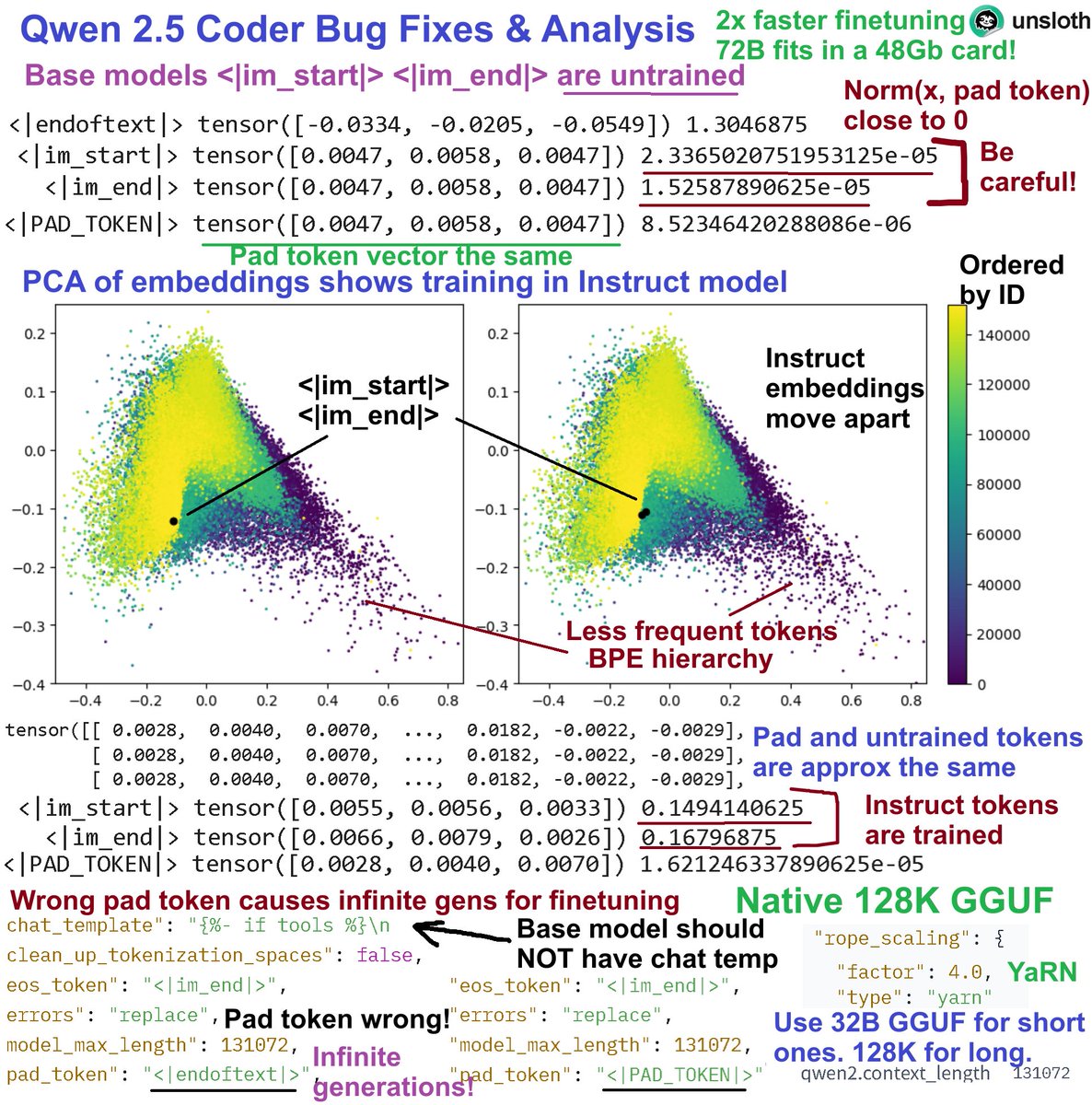

Bug fixes & analysis for Qwen 2.5: 1. Pad_token should NOT be <|endoftext|> Inf gens 2. Base <|im_start|> <|im_end|> are untrained 3. PCA on embeddings has a BPE hierarchy 4. YaRN 128K extended context from 32B 5. Fixed versions + 128K GGUFs: https://t.co/gHMS1CeFLF Details: 1. Pad token bug - for finetuning, never use pad_token = EOS - this will result in infinite generations since finetuning will ignore them. Base model also has a chat template - remove this. @UnslothAI versions fixed them 2. Untrained tokens issues. Do NOT use the Qwen 2.5 chat template for the base version - <|im_start|>, <|im_end|> are untrained since Norm(<im_x>, pad_token) is close to 0. Instruct version have them trained. 3. PCA on embeddings for Base and Instruct show a BPE hierarchy. Less frequent tokens are obvious since they're ordered by ID. PCA shows <|im_x|> moving away from being untrained. Same phenomenon for Llama & more models. 4. Uploaded native 128K extended YaRN GGUFs for Coder 0.5B all the way until 32B to https://t.co/ZoGyVKiLFX. Use the 128K version for long contexts. Use the 32B native version for general chats. Also, Unsloth can finetune 14B in a free Colab! Conversational style finetuning: https://t.co/NcPZiB0Wj9 Kaggle 14B notebook: https://t.co/3Jr44emNqM Unsloth can also finetune the 72B variants in a 48GB card!