@rohanpaul_ai

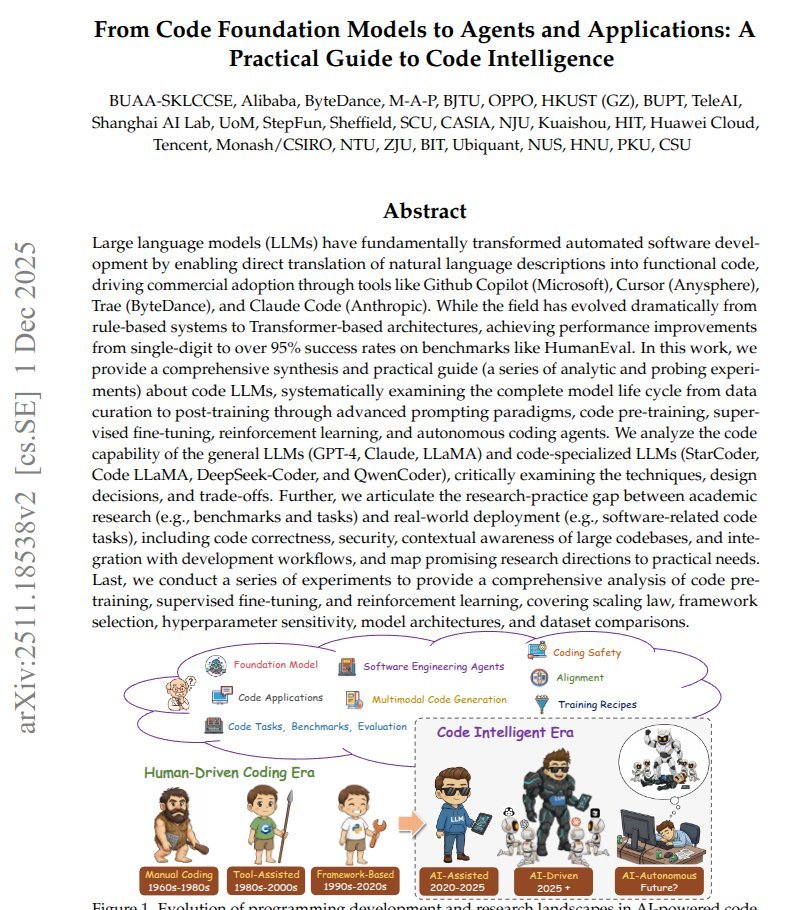

A MASSIVE 303-page study from the very best Chinese labs. The paper explains how code-focused language models are built, trained, and turned into software agents that help run parts of development. These models read natural-language instructions, like a bug report or feature request, and try to output working code that matches the intent. The authors first walk through the training pipeline, from collecting and cleaning large code datasets to pretraining, where the model absorbs coding patterns at scale. They then describe supervised fine-tuning and reinforcement learning, extra training stages that reward the model for following instructions, passing tests, and avoiding obvious mistakes. On top of these models, the paper surveys software engineering agents, which wrap a model in a loop that reads issues, plans steps, edits files, runs tests, and retries when things fail. Across the survey, they point out open gaps like handling huge repositories, keeping generated code secure, and evaluating agents reliably, and they share practical tricks that current teams can reuse.
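To make the agent loop described above concrete, here is a minimal Python sketch of the read-issue, edit, test, retry cycle. It is not code from the paper. The names `run_agent`, `toy_model`, `apply_patch`, and `run_tests` are all hypothetical, the "repository" is a one-file dictionary, and the "model" is a hard-coded stub standing in for a real LLM call. Planning is folded into the patch-proposal step for brevity.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class AgentResult:
    solved: bool
    attempts: int
    log: List[str] = field(default_factory=list)


def run_agent(
    issue: str,
    propose_patch: Callable[[str, str], str],
    apply_patch: Callable[[str], None],
    run_tests: Callable[[], Tuple[bool, str]],
    max_attempts: int = 3,
) -> AgentResult:
    """Hypothetical agent loop: propose an edit, apply it, test, retry on failure."""
    feedback = ""  # test output from the previous failed attempt, if any
    log: List[str] = []
    for attempt in range(1, max_attempts + 1):
        patch = propose_patch(issue, feedback)  # the model reads issue + feedback
        apply_patch(patch)                      # edit the files
        passed, output = run_tests()            # run the tests
        log.append(f"attempt {attempt}: {'pass' if passed else 'fail'}")
        if passed:
            return AgentResult(True, attempt, log)
        feedback = output                       # feed the failure into the next try
    return AgentResult(False, max_attempts, log)


# --- Toy setup (all assumptions, for illustration only) ---
repo = {"calc.py": "def add(a, b):\n    return a - b\n"}  # buggy starting file


def toy_model(issue: str, feedback: str) -> str:
    # Stand-in for an LLM: wrong on the first try, correct once it sees feedback.
    if not feedback:
        return "def add(a, b):\n    return a * b\n"
    return "def add(a, b):\n    return a + b\n"


def apply_patch(patch: str) -> None:
    repo["calc.py"] = patch  # whole-file replacement keeps the sketch short


def run_tests() -> Tuple[bool, str]:
    namespace: dict = {}
    exec(repo["calc.py"], namespace)  # load the patched file
    got = namespace["add"](2, 3)
    if got == 5:
        return True, "ok"
    return False, f"add(2, 3) returned {got}, expected 5"


result = run_agent("add() returns wrong sums", toy_model, apply_patch, run_tests)
print(result.solved, result.attempts, result.log)
```

In this toy run the first patch fails the test, the failure message is passed back as feedback, and the second patch passes. A real agent would replace the stub with a model call, apply diffs rather than whole files, and run the project's actual test suite in a sandbox.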