@weights_biases

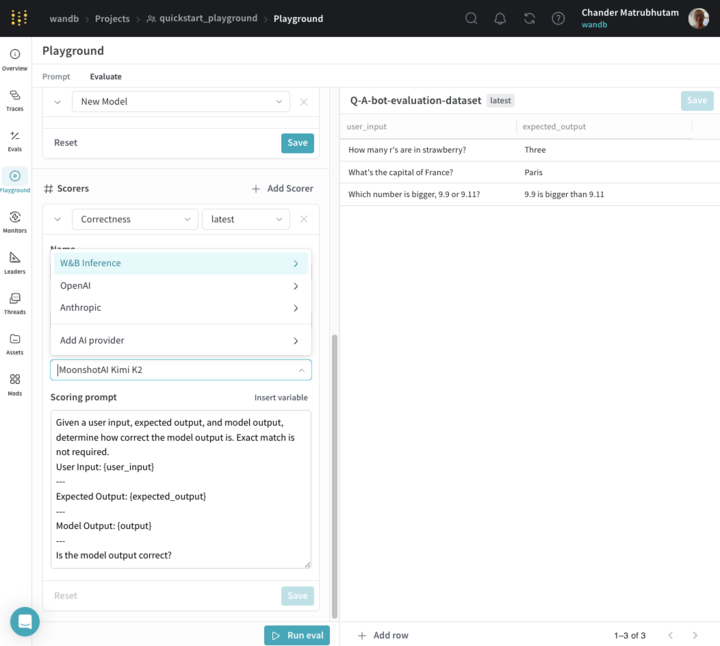

How it works:

- Open Playground → Evaluate.
- Create or upload a dataset (CSV, TSV, JSON, etc.).
- Add Weave models: each is a provider model plus a system prompt. Compare multiple models in a single run.
- Once your prompt is ready, share it with your dev team so they can carry it forward and build the end application using the Weave Evaluation and EvaluationLogger APIs.
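To make the workflow concrete, here is a minimal plain-Python sketch of the same idea: load a small dataset, define a few "models" as a provider model plus a system prompt, and score them all in one run. It deliberately does not call the Weave SDK. `ModelConfig`, `evaluate`, `fake_call_model`, the model names, and the `question`/`expected` column names are all hypothetical, chosen only for illustration.

```python
import csv
import io
from dataclasses import dataclass
from typing import Callable


@dataclass
class ModelConfig:
    """Hypothetical stand-in for a Playground model: provider model + system prompt."""
    name: str
    provider_model: str
    system_prompt: str


def load_dataset(csv_text: str) -> list[dict[str, str]]:
    """Parse CSV text into a list of row dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(csv_text)))


def exact_match(expected: str, output: str) -> float:
    """Score 1.0 when the output matches the expected answer, ignoring case and padding."""
    return 1.0 if expected.strip().lower() == output.strip().lower() else 0.0


def evaluate(
    models: list[ModelConfig],
    rows: list[dict[str, str]],
    call_model: Callable[[ModelConfig, str], str],
) -> dict[str, float]:
    """Run every model over every row and return the mean score per model."""
    scores: dict[str, float] = {}
    for model in models:
        total = sum(
            exact_match(row["expected"], call_model(model, row["question"]))
            for row in rows
        )
        scores[model.name] = total / len(rows) if rows else 0.0
    return scores


def fake_call_model(model: ModelConfig, question: str) -> str:
    """Placeholder for a real provider call; returns canned answers (assumption)."""
    answers = {"What is 2 + 2?": "4", "Capital of France?": "Paris"}
    if "terse" in model.system_prompt:
        return answers.get(question, "")
    return "I am not sure."


# Assumed dataset layout: one input column and one expected-answer column.
DATASET_CSV = """question,expected
What is 2 + 2?,4
Capital of France?,Paris
"""

rows = load_dataset(DATASET_CSV)
models = [
    ModelConfig("terse", "provider/model-a", "Answer in a terse style."),
    ModelConfig("vague", "provider/model-b", "Answer cautiously."),
]
results = evaluate(models, rows, fake_call_model)
print(results)
```

In a real application, the loop in `evaluate` and the stubbed `fake_call_model` would presumably be replaced by the Weave evaluation APIs and actual provider calls; the sketch only illustrates the dataset-plus-models-plus-scorer shape of a comparison run.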