@dair_ai

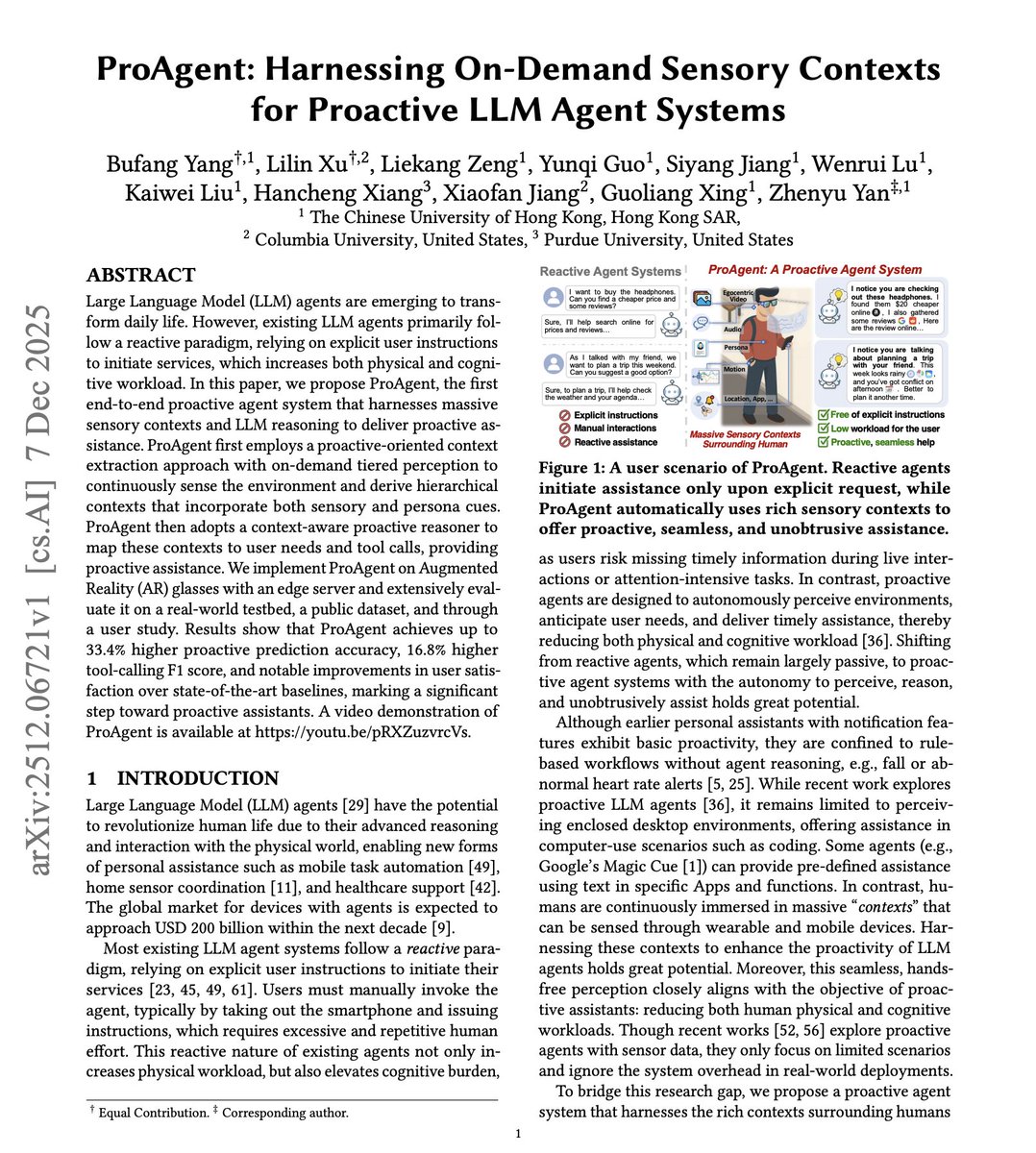

Very few are talking about proactive agents, but they are coming!

Current LLM agents wait for you to ask for help. But the best assistant anticipates what you need before you ask.

Existing agents follow a reactive paradigm. Users must unlock their phone, navigate to an app, and issue explicit instructions. During a conversation about travel plans, you have to manually ask for weather updates. While shopping, you have to explicitly request price comparisons.

This new research introduces ProAgent, an end-to-end proactive agent system that continuously perceives your environment through wearable sensors and delivers assistance before you ask.

The key idea: instead of waiting for commands, ProAgent uses egocentric video, audio, motion, and location data from AR glasses and smartphones to anticipate user needs.

An on-demand tiered perception system keeps low-cost sensors always on while activating high-cost vision only when patterns suggest assistance opportunities.

When you're at a bus stop, ProAgent notices the last bus just left and offers to book an Uber. During a conversation about weekend plans, it proactively checks the weather and your calendar for conflicts. While browsing headphones in a store, it finds lower prices online and gathers reviews.

Results across real-world testing with 20 participants: ProAgent achieves 33.4% higher proactive prediction accuracy, 16.8% higher tool-calling F1 score, and 1.79x lower memory usage compared to baselines. User studies show 38.9% higher satisfaction across five dimensions of proactive services.

The system runs on edge devices like NVIDIA Jetson Orin with 4.5-second average latency, keeping all data local for privacy.

Shifting from reactive to proactive agents reduces both physical and cognitive workload. You stop missing timely information during conversations and attention-intensive tasks.

Paper: https://t.co/3zFVP5igxe

Learn to build effective agents in our academy: https://t.co/zQXQt0PMbG
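
For anyone curious what the tiered perception idea might look like in code, here is a rough sketch: cheap sensors are sampled on every step, and the expensive camera is only triggered when a simple score crosses a threshold. This is not ProAgent's implementation. The class names, the scoring weights, the 0.6 threshold, and the `capture_frame` callback are all assumptions made up for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class LowCostReading:
    """One sample from the always-on sensors (fields are illustrative)."""
    motion_level: float      # 0.0 (still) to 1.0 (vigorous movement)
    speech_detected: bool    # audio channel picked up conversation
    location_changed: bool   # user arrived somewhere new


def opportunity_score(reading: LowCostReading) -> float:
    """Hypothetical heuristic for 'help might be useful right now'."""
    score = 0.0
    if reading.speech_detected:
        score += 0.5
    if reading.location_changed:
        score += 0.3
    # Being nearly stationary (e.g. waiting at a stop) adds a little weight.
    if reading.motion_level < 0.2:
        score += 0.2
    return score


class TieredPerception:
    """Samples cheap sensors every step; wakes vision only when warranted."""

    def __init__(self, capture_frame: Callable[[], str], threshold: float = 0.6):
        self.capture_frame = capture_frame  # stand-in for the high-cost camera
        self.threshold = threshold          # assumed value, not from the paper
        self.vision_activations = 0

    def step(self, reading: LowCostReading) -> Optional[str]:
        # Low-cost tier: always evaluated.
        if opportunity_score(reading) >= self.threshold:
            # High-cost tier: only reached when the cheap signals look promising.
            self.vision_activations += 1
            return self.capture_frame()
        return None
```

Calling `step` with a reading that shows no speech, no location change, and high motion returns `None` without touching the camera, while a reading with speech detected and low motion triggers one frame capture. A real system would presumably learn the trigger rather than hard-code weights like these.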